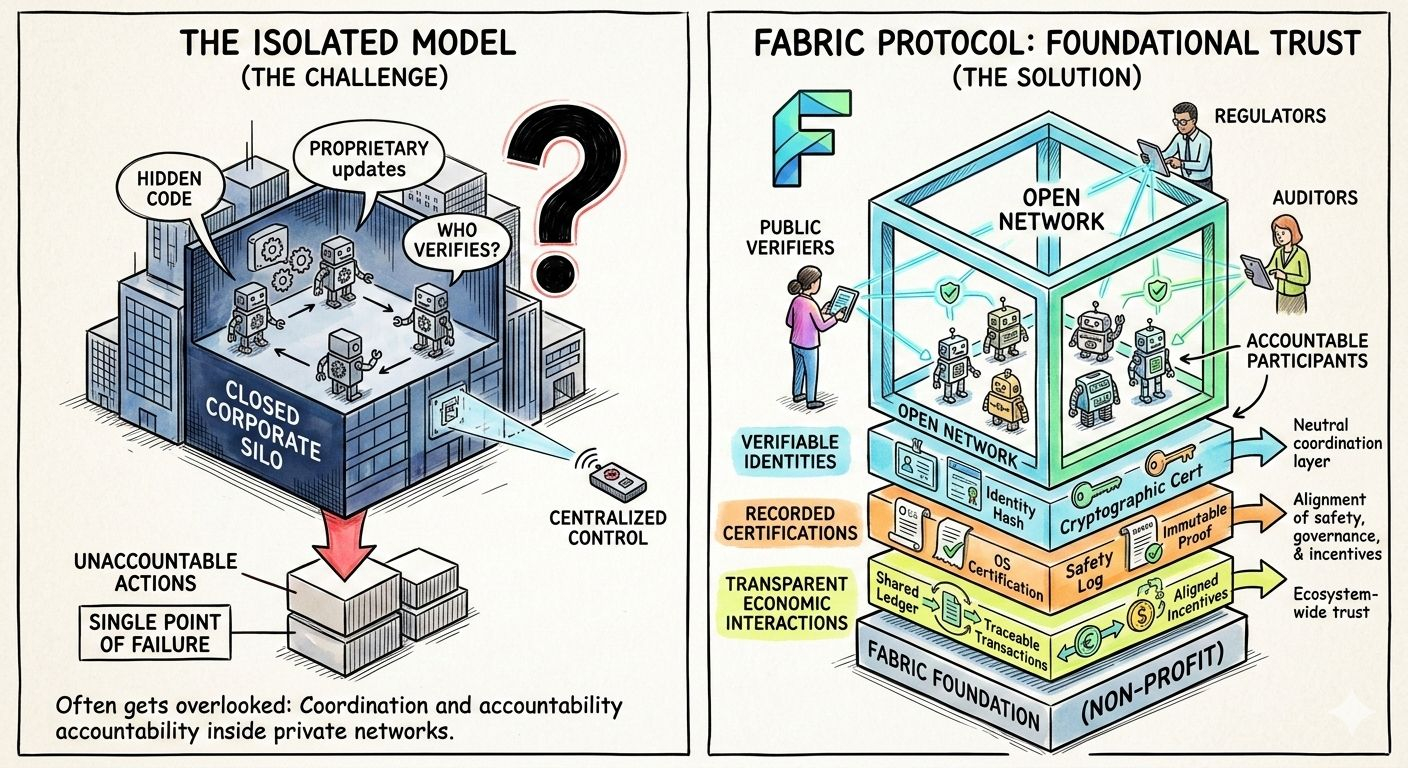

When people talk about the future of robotics, the focus is usually on smarter machines, better AI models, or more powerful hardware. What often gets overlooked is something far more foundational: coordination. As robots and autonomous agents become more capable, who verifies what they do? Who governs their updates? Who is accountable when they act? Fabric Protocol begins with the human concern behind all three questions, trust, and builds outward from there.

Supported by the non-profit Fabric Foundation, Fabric is designed as an open network where robots are not just machines but accountable participants in a shared system. Instead of operating inside closed corporate silos, robots under this framework can have verifiable identities, recorded certifications, and transparent economic interactions. The idea is not to replace robotics companies. It is to give the entire ecosystem a neutral coordination layer where safety, governance, and incentives are aligned.

At a structural level, Fabric feels less like a product and more like infrastructure. A public ledger anchors identities, certifications, and governance decisions. Robots or AI agents are registered in a way that makes their provenance and permissions traceable. The system does not attempt to store massive streams of sensor data on-chain. Instead, it records proofs: compact confirmations that certain software was used, that certain rules were followed, and that certain actions were authorized.
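
To make the "proofs, not raw data" idea concrete, here is a minimal sketch of one common way to do it: keep the large sensor log off-chain and record only a hash of it. This is an illustration, not Fabric's actual record format. The function names, the field names, and the `robot-001` identifier are all assumptions made for the example.

```python
import hashlib
import json


def make_proof_record(robot_id: str, sensor_log: bytes, software_version: str) -> dict:
    """Build a small record that commits to a large off-chain log.

    Hypothetical layout: only the SHA-256 digest and a few metadata
    fields would go on the ledger, never the log itself.
    """
    return {
        "robot_id": robot_id,
        "software_version": software_version,
        "log_digest": hashlib.sha256(sensor_log).hexdigest(),
        "log_size_bytes": len(sensor_log),
    }


def log_matches_record(sensor_log: bytes, record: dict) -> bool:
    """Check that an off-chain log is the one the record commits to."""
    return hashlib.sha256(sensor_log).hexdigest() == record["log_digest"]


# Stand-in for a real sensor stream (about 1.2 MB of repeated bytes).
sensor_log = b"lidar-frame;" * 100_000
record = make_proof_record("robot-001", sensor_log, "nav-stack 1.4.2")

print(len(json.dumps(record)), "bytes in the record vs", len(sensor_log), "bytes of raw log")
print("log matches record:", log_matches_record(sensor_log, record))
```

An auditor who later receives the raw log can recompute the digest and compare it with the recorded one. Any change to the log, even a single byte, produces a different digest.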

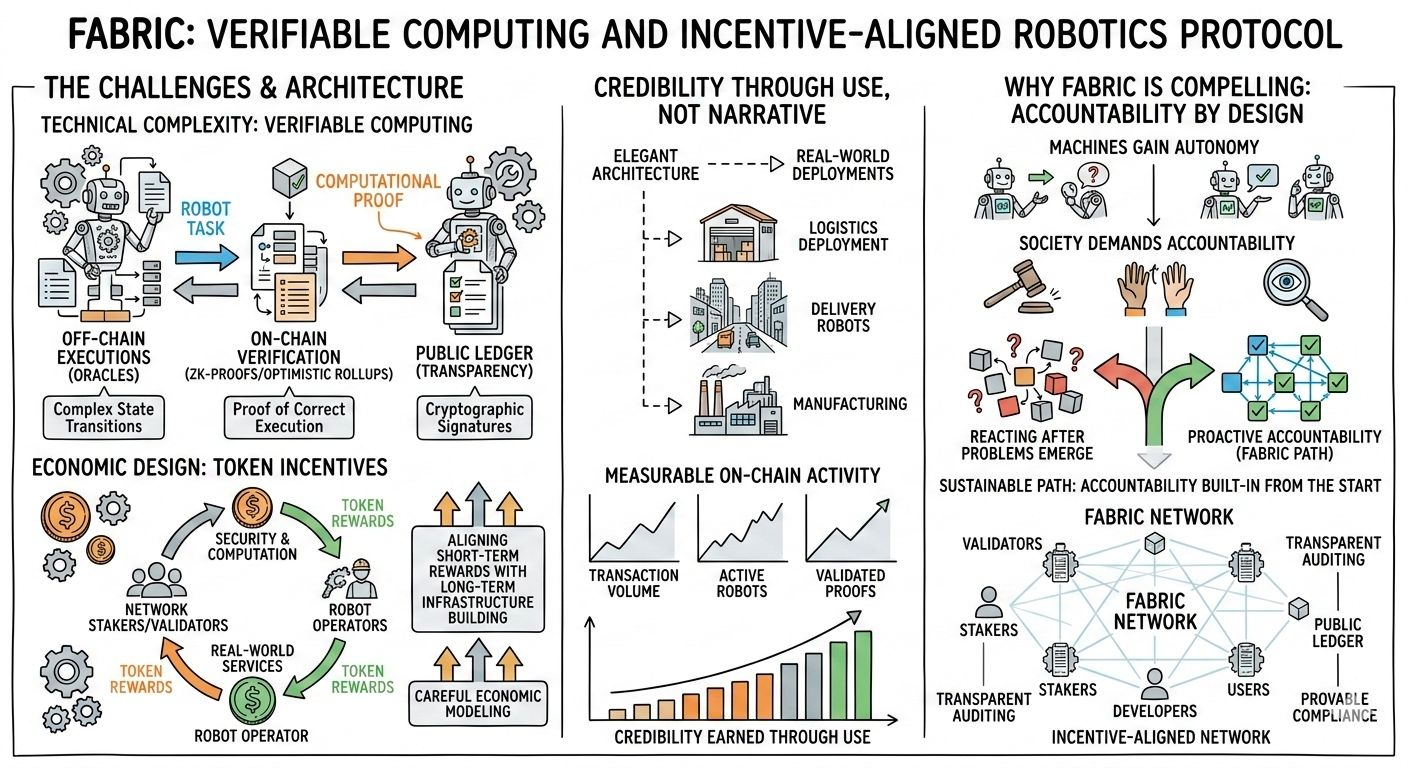

This is where verifiable computing becomes central. In robotics, actions happen in the physical world. Trusting those actions blindly is risky. Fabric’s approach introduces cryptographic attestations and hardware-backed verification to bridge that trust gap. A robot can prove it ran approved software. A module can prove it passed certification. An update can be traced and audited. These capabilities are not just technical add-ons; they are prerequisites for scaling human-machine collaboration safely.
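
A rough sketch of what "a robot can prove it ran approved software" might look like in code follows. It is deliberately simplified and is not Fabric's attestation scheme: it uses an HMAC with a shared key as a stand-in, whereas a real hardware-backed design would more likely rely on asymmetric signatures from a secure element. The `attest` and `verify` functions, the key, and the firmware strings are all hypothetical.

```python
import hashlib
import hmac
import secrets

# Assumption: the verifier holds a set of digests for certified builds.
CERTIFIED_FIRMWARE = b"firmware v2.1 (certified build)"
APPROVED_DIGESTS = {hashlib.sha256(CERTIFIED_FIRMWARE).hexdigest()}


def attest(device_key: bytes, firmware: bytes, nonce: bytes) -> dict:
    """Device side: report the firmware digest, bound to a fresh nonce."""
    digest = hashlib.sha256(firmware).hexdigest()
    tag = hmac.new(device_key, digest.encode() + nonce, hashlib.sha256).hexdigest()
    return {"firmware_digest": digest, "tag": tag}


def verify(device_key: bytes, attestation: dict, nonce: bytes) -> bool:
    """Verifier side: check the tag, then check the digest is approved."""
    expected = hmac.new(
        device_key,
        attestation["firmware_digest"].encode() + nonce,
        hashlib.sha256,
    ).hexdigest()
    tag_ok = hmac.compare_digest(expected, attestation["tag"])
    return tag_ok and attestation["firmware_digest"] in APPROVED_DIGESTS


device_key = secrets.token_bytes(32)   # stand-in for a hardware-held key
nonce = secrets.token_bytes(16)        # verifier's challenge, prevents replay

good = attest(device_key, CERTIFIED_FIRMWARE, nonce)
bad = attest(device_key, b"firmware v2.1 (modified)", nonce)

print("certified build accepted:", verify(device_key, good, nonce))
print("modified build accepted:", verify(device_key, bad, nonce))
```

The nonce matters: without a fresh challenge, a device could replay an old attestation recorded while it was still running the certified build.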

On top of this technical foundation sits an agent-native layer. Robots and AI agents are treated as economic actors. They can negotiate tasks, settle payments, and operate under predefined policies. This framing is important. It acknowledges that autonomous systems are not just tools; they participate in value creation. Giving them structured economic and governance rails ensures that autonomy does not drift into opacity.
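
One way to picture "operating under predefined policies" is an agent that checks every incoming task offer against rules set by its operator before accepting. The sketch below is purely illustrative. `Policy`, `TaskOffer`, `evaluate`, and the specific limits are assumptions for the example and do not describe Fabric's actual interfaces.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical operator-defined limits for an autonomous agent."""
    allowed_task_types: frozenset
    min_price: int        # smallest acceptable payment, in token units
    max_duration_s: int   # longest task the agent may commit to


@dataclass(frozen=True)
class TaskOffer:
    task_type: str
    price: int
    duration_s: int


def evaluate(offer: TaskOffer, policy: Policy) -> tuple:
    """Return (accepted, reason) so every decision can be logged and audited."""
    if offer.task_type not in policy.allowed_task_types:
        return False, "task type not permitted"
    if offer.price < policy.min_price:
        return False, "price below minimum"
    if offer.duration_s > policy.max_duration_s:
        return False, "duration exceeds limit"
    return True, "accepted"


policy = Policy(frozenset({"delivery", "inspection"}), min_price=10, max_duration_s=3600)

for offer in (
    TaskOffer("delivery", price=12, duration_s=600),
    TaskOffer("demolition", price=500, duration_s=600),
    TaskOffer("inspection", price=5, duration_s=600),
):
    print(offer.task_type, evaluate(offer, policy))
```

Returning a reason alongside each decision is what keeps autonomy from drifting into opacity: a rejected or accepted task leaves an explanation that can be recorded.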

The $ROBO token binds this entire structure together. It serves as the medium for network fees, staking, and governance participation. If you want to register a robot, verify a module, or interact economically within the network, $ROBO becomes the unit of coordination. Participants who provide verification services or infrastructure can stake $ROBO to signal commitment and earn rewards. Governance decisions about protocol upgrades or standards are influenced by token participation.
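
The article does not specify how token participation translates into governance outcomes. Stake-weighted voting with a turnout quorum is one common pattern in token-governed networks, and the sketch below assumes it purely for illustration. The participant names, stake amounts, and the 50% quorum are invented for the example.

```python
from collections import defaultdict


def tally(stakes: dict, votes: dict) -> dict:
    """Sum staked tokens behind each choice; unstaked voters count for zero."""
    totals = defaultdict(int)
    for voter, choice in votes.items():
        totals[choice] += stakes.get(voter, 0)
    return dict(totals)


def proposal_passes(totals: dict, total_staked: int, quorum: float = 0.5) -> bool:
    """Pass if enough stake voted and 'yes' outweighs 'no' (assumed rule)."""
    turnout = sum(totals.values()) / total_staked
    return turnout >= quorum and totals.get("yes", 0) > totals.get("no", 0)


# Hypothetical participants and stake sizes, in token units.
stakes = {"verifier-a": 600, "operator-b": 300, "developer-c": 100}
votes = {"verifier-a": "yes", "operator-b": "no"}  # developer-c abstains

totals = tally(stakes, votes)
print(totals)
print("passes:", proposal_passes(totals, total_staked=sum(stakes.values())))
```

Even in this toy version, one large staker decides the outcome. That is the kind of concentration risk any real token-weighted design has to address.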

However, the long-term strength of $ROBO will not come from exchange listings or market cycles alone. Its durability depends on real usage. The meaningful metrics are practical ones: how many robots are registered, how many attestations are recorded, how many services are settled in $ROBO. If the token becomes embedded in operational workflows, from compliance checks to automated service payments, it transitions from a tradable asset to essential infrastructure.

Fabric’s broader ecosystem role is particularly interesting. Robotics is fragmented. Manufacturers, regulators, insurers, developers, and enterprises often operate in parallel, not in coordination. Fabric proposes a shared trust layer that any of these actors can plug into. In theory, this reduces friction. A regulator can reference on-chain attestations. An insurer can evaluate risk based on transparent logs. A developer can build modules knowing there is a standardized certification pathway.

There are real challenges ahead. Verifiable computing in robotics is technically complex. Aligning token incentives with long-term infrastructure building requires careful economic design. Adoption will not happen simply because the architecture is elegant. It will require partnerships, real-world deployments, and measurable on-chain activity. The protocol’s credibility will ultimately be earned through use, not narrative.

What makes Fabric compelling is not hype but coherence. It recognizes that as machines gain autonomy, society will demand accountability. Building that accountability into a public, incentive-aligned network from the start is a more sustainable path than reacting after problems emerge.

If Fabric succeeds, it will not be because robots became smarter. It will be because trust became programmable. And in a world where autonomous systems increasingly shape economic and social outcomes, programmable trust may prove to be the most important innovation of all.