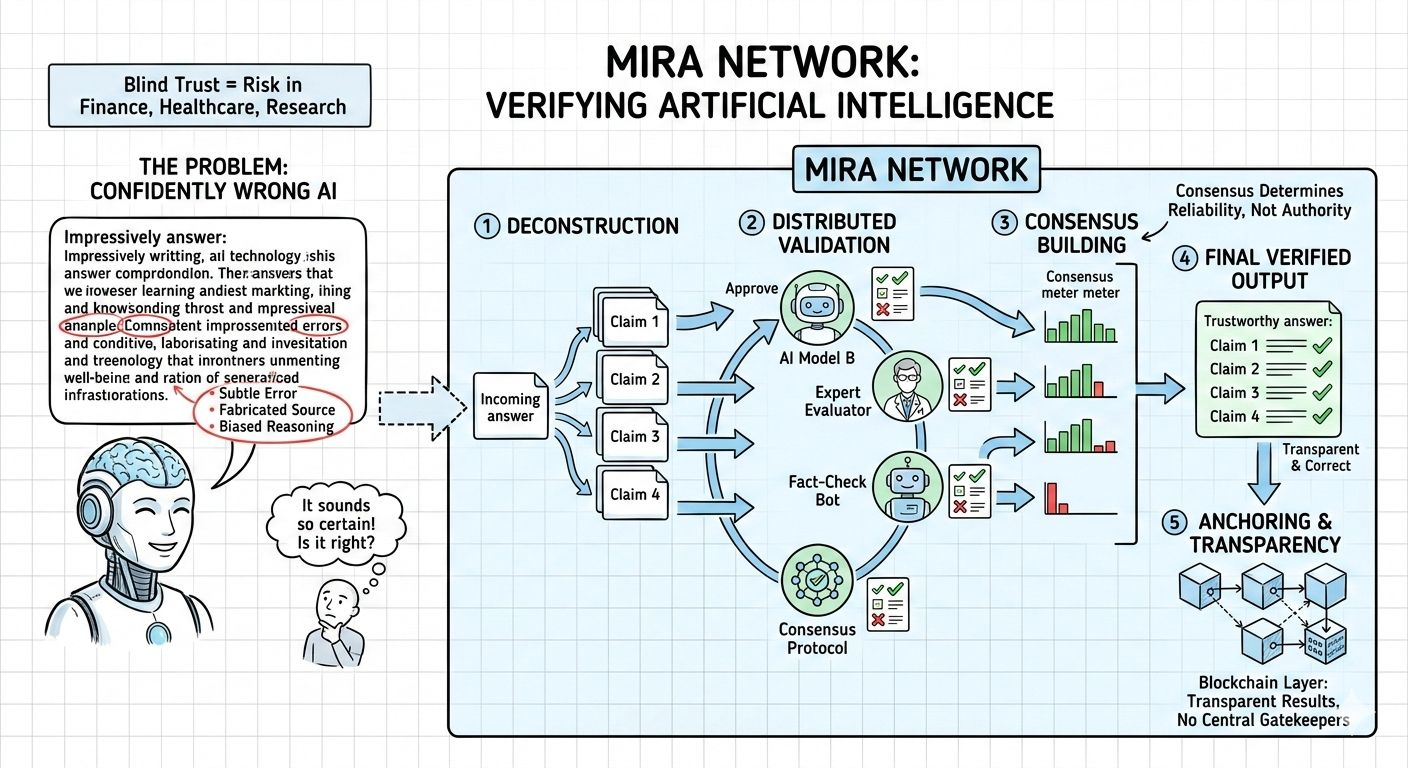

Artificial intelligence feels magical — until it gets something confidently wrong. Anyone who has used advanced AI models has seen it: beautifully written answers that contain subtle errors, fabricated sources, or biased reasoning. The experience is impressive, but also unsettling. We are interacting with systems that sound certain, yet operate on probability. As AI moves deeper into finance, healthcare, research, and governance, that uncertainty stops being abstract and starts becoming risky.

Mira Network is built around a simple but powerful idea: don’t blindly trust AI — verify it.

Instead of assuming a single model’s output is correct, Mira treats every response as something that can be examined. It breaks complex answers into smaller claims and distributes them across a network of independent validators. These validators — which may include different AI systems or specialized evaluators — assess whether those claims hold up. Consensus, not authority, determines reliability. The blockchain layer anchors the results transparently, removing the need for centralized gatekeepers.
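To make the idea concrete, here is a minimal sketch of claim-level consensus. It is not Mira's actual protocol: the `Verdict` type, the `claim_consensus` function, the validator names, and the two-thirds threshold are all assumptions chosen for illustration. It only shows how per-claim votes from independent validators could be reduced to "accepted", "rejected", or "no consensus".

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class Verdict:
    validator: str  # hypothetical validator identifier
    claim: str      # one atomic claim extracted from a longer answer
    holds: bool     # True if this validator judged the claim to hold


def claim_consensus(verdicts, threshold=Fraction(2, 3)):
    """Map each claim to True (accepted), False (rejected), or None (no consensus).

    The two-thirds supermajority is an assumed parameter, not a documented one.
    """
    votes_by_claim = {}
    for v in verdicts:
        votes_by_claim.setdefault(v.claim, []).append(v.holds)

    results = {}
    for claim, votes in votes_by_claim.items():
        # Exact fractions avoid floating-point surprises at the threshold.
        share_true = Fraction(sum(votes), len(votes))
        if share_true >= threshold:
            results[claim] = True
        elif 1 - share_true >= threshold:
            results[claim] = False
        else:
            results[claim] = None
    return results


# Illustrative verdicts from three hypothetical validators.
verdicts = [
    Verdict("validator-a", "Paris is the capital of France", True),
    Verdict("validator-b", "Paris is the capital of France", True),
    Verdict("validator-c", "Paris is the capital of France", True),
    Verdict("validator-a", "The Eiffel Tower opened in 1750", False),
    Verdict("validator-b", "The Eiffel Tower opened in 1750", False),
    Verdict("validator-c", "The Eiffel Tower opened in 1750", False),
    # validator-c abstains here, leaving a one-to-one split.
    Verdict("validator-a", "Paris is the largest city in the EU", True),
    Verdict("validator-b", "Paris is the largest city in the EU", False),
]

results = claim_consensus(verdicts)
for claim, accepted in results.items():
    print(f"{accepted!s:>5}  {claim}")
```

Splitting the answer into claims means one disputed statement does not sink the whole response: the first claim is accepted, the second rejected, and the third is flagged as unresolved rather than forced either way.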

What makes this approach feel different is its mindset. Mira doesn’t try to build the “perfect AI.” It accepts that models will make mistakes. Instead of chasing perfection, it builds accountability. That shift — from intelligence to trust — feels necessary in today’s AI landscape.

The token, $MIRA, plays a practical role in making this work. Validators stake tokens to participate, meaning they have real economic exposure. If they validate accurately, they are rewarded. If they act carelessly or dishonestly, they risk penalties. This creates a system where reliability is not just encouraged — it is financially reinforced. The token isn’t just a utility badge; it is the mechanism that aligns incentives across the network.
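The incentive loop can be sketched in a few lines. The article does not specify reward or penalty sizes, so the `settle_round` function, the rates (expressed in basis points), and the rule that abstaining validators are left untouched are hypothetical. The point is only the shape of the mechanism: agreeing with the final outcome grows a stake, disagreeing shrinks it.

```python
BASIS_POINTS = 10_000  # 1 basis point = 0.01%


def settle_round(stakes, votes, outcome, reward_bps=50, slash_bps=1_000):
    """Return new stake balances after one verification round.

    stakes:  {validator: staked token amount (integer units)}
    votes:   {validator: bool verdict}; validators absent from this dict abstained
    outcome: the verdict the network settled on
    reward_bps / slash_bps: assumed rates, not values taken from Mira
    """
    settled = {}
    for validator, stake in stakes.items():
        vote = votes.get(validator)
        if vote is None:
            settled[validator] = stake  # abstained: no change in this sketch
        elif vote == outcome:
            settled[validator] = stake + stake * reward_bps // BASIS_POINTS
        else:
            settled[validator] = stake - stake * slash_bps // BASIS_POINTS
    return settled


stakes = {"validator-a": 1_000, "validator-b": 1_000, "validator-c": 500}
votes = {"validator-a": True, "validator-b": False}
balances = settle_round(stakes, votes, outcome=True)
print(balances)
```

In this sketch the penalty is deliberately larger than the reward, so a validator that guesses at random loses stake over time. Whether a real deployment uses that asymmetry is a design choice the article leaves open.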

Why does this matter? Because not all AI errors carry the same cost. A small mistake in a casual conversation is harmless. A flawed output in an automated trading bot or medical assistant is not. As AI systems become more autonomous, the cost of each error rises with the stakes of the decisions they make. Verification then provides value that raw generation alone cannot.

At the same time, decentralization is not a magic solution. If all validators think the same way or rely on similar training data, consensus could simply reinforce shared blind spots. Mira’s long-term credibility will depend on diversity — diverse models, diverse evaluators, and transparent methodologies. Trust cannot emerge from uniform thinking; it grows from balanced disagreement resolved through evidence.
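A small calculation shows why diversity matters. Assume, purely for illustration, that each validator is wrong with the same probability `p` and that a claim is misjudged when a strict majority of validators is wrong. If validators err independently, the majority is wrong far less often than any single validator. If they all share the same blind spot (perfect correlation), adding validators changes nothing and the error stays at `p`. The `majority_error` helper and the numbers below are assumptions, not measurements.

```python
from math import comb


def majority_error(n, p):
    """Probability that a strict majority of n independent validators is wrong,
    when each is wrong with probability p. Use odd n to avoid ties."""
    need = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


p = 0.2  # assumed per-validator error rate
independent = majority_error(5, p)  # five validators with unrelated mistakes
correlated = p                      # five validators that always fail together

print(f"independent majority error: {independent:.5f}")  # about 0.058
print(f"fully correlated error:     {correlated:.5f}")   # stays at 0.2
```

Real validators sit somewhere between these two extremes, which is why the benefit of consensus depends on how different the underlying models and evaluators actually are.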

From a broader perspective, Mira feels like infrastructure for an AI-native world. Developers building AI agents, DeFi integrations, or governance tools need a reliability layer they don’t have to design themselves. If Mira can provide that layer, it becomes more than a project — it becomes connective tissue for the ecosystem.

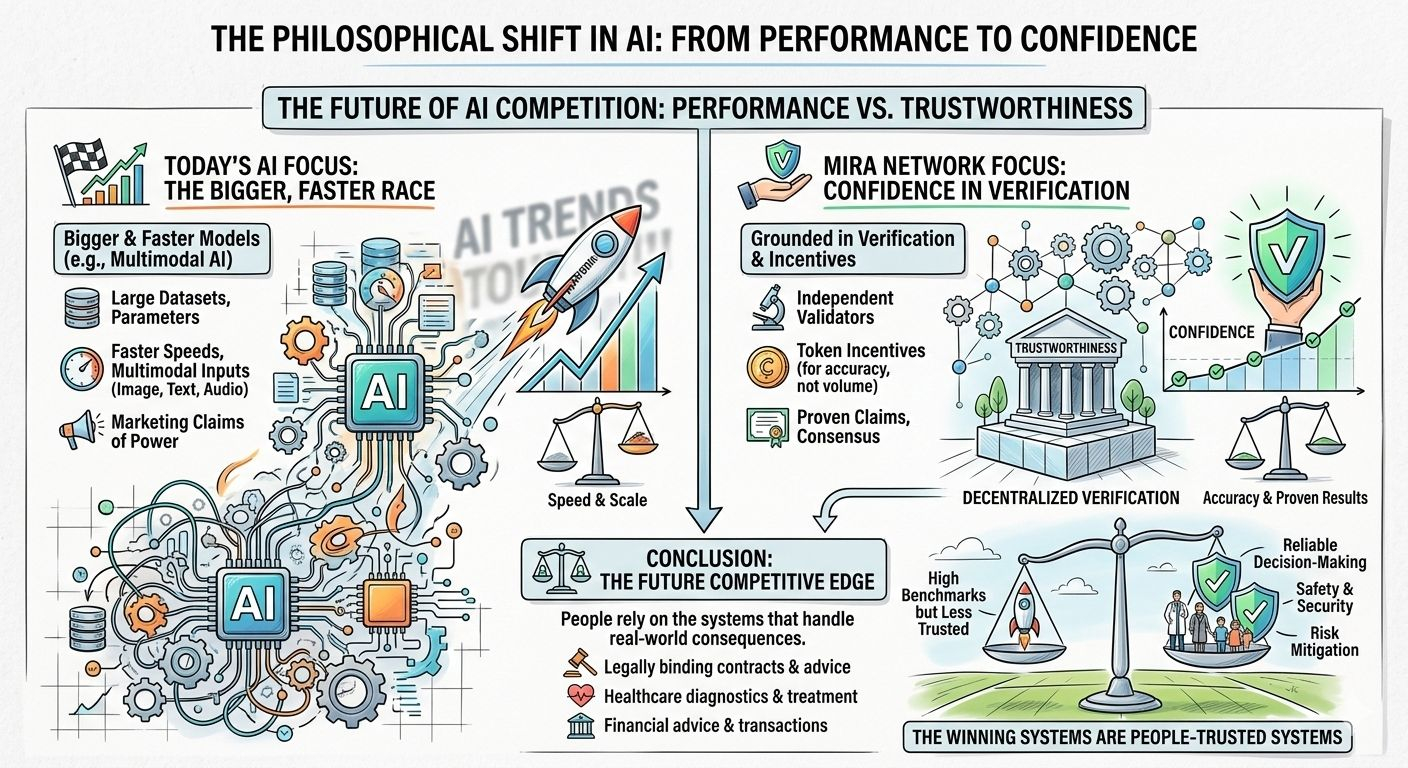

What stands out most is the philosophical shift. Many discussions around AI focus on making models bigger, faster, or more multimodal. Mira focuses on something quieter but arguably more important: confidence. Not confidence in marketing claims, but confidence grounded in verification and incentives.

In the coming years, AI will not just compete on performance benchmarks. It will compete on trustworthiness. The systems that survive will be the ones that people can rely on when decisions carry real consequences.

Mira Network is attempting to build that reliability into the foundation. And if AI is going to shape critical parts of our economy and daily lives, building trust directly into its architecture may be the most human decision we can make.