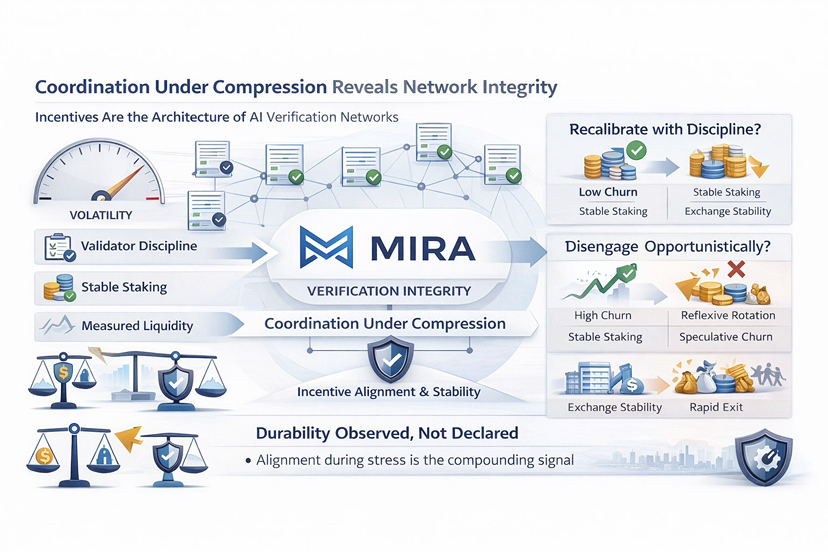

Over the years, I have found that the real test of a network is not growth during expansion but coordination under compression. When incentives tighten and volatility rises, behavior becomes clearer. Participants either recalibrate with discipline or disengage. That divergence reveals structural integrity.

In AI verification networks, incentives are the architecture. If validators are paid to audit and confirm model outputs, their persistence under normalized rewards shows whether verification is economically rational or merely opportunistic. Sustainable systems make honest participation the most efficient strategy, even when short-term upside moderates.
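To make that condition concrete, here is a minimal sketch of the comparison a validator might run. It is a toy model under stated assumptions, not Mira's actual reward or slashing mechanism: the function names and every parameter (`reward`, `audit_cost`, `shortcut_cost`, `p_caught`, `slash`) are hypothetical and chosen only for illustration.

```python
def honest_payoff(reward: float, audit_cost: float) -> float:
    """Expected per-task payoff for a validator that does the full audit.

    Hypothetical model: the validator always earns the reward and pays the audit cost.
    """
    return reward - audit_cost


def shortcut_payoff(reward: float, shortcut_cost: float,
                    p_caught: float, slash: float) -> float:
    """Expected per-task payoff for a validator that confirms without auditing.

    Hypothetical model: with probability p_caught the validator forfeits the
    reward and is slashed; otherwise it keeps the reward.
    """
    return (1 - p_caught) * reward - shortcut_cost - p_caught * slash


def honesty_is_rational(reward: float, audit_cost: float, shortcut_cost: float,
                        p_caught: float, slash: float) -> bool:
    """True when honest auditing is profitable and at least as good as cutting corners."""
    honest = honest_payoff(reward, audit_cost)
    lazy = shortcut_payoff(reward, shortcut_cost, p_caught, slash)
    return honest >= 0 and honest >= lazy


if __name__ == "__main__":
    # Illustrative numbers only: halving the reward keeps honesty rational
    # as long as it still covers the audit cost and shortcuts stay penalized.
    for reward in (10.0, 5.0, 3.0):
        print(reward, honesty_is_rational(reward, audit_cost=4.0, shortcut_cost=1.0,
                                          p_caught=0.5, slash=20.0))
```

In this toy setup, honest participation survives a reward cut only while the reward still covers the audit cost and the expected penalty makes shortcuts unattractive, which is the sense in which persistence under normalized rewards is informative.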

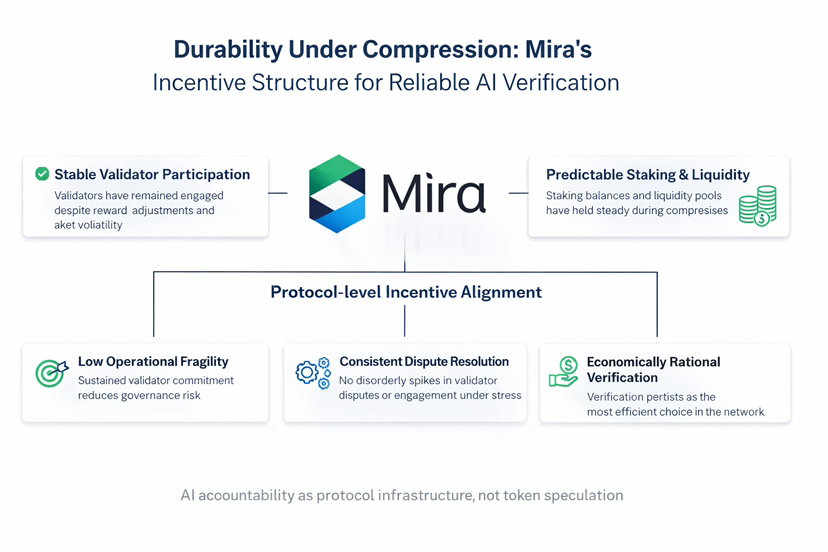

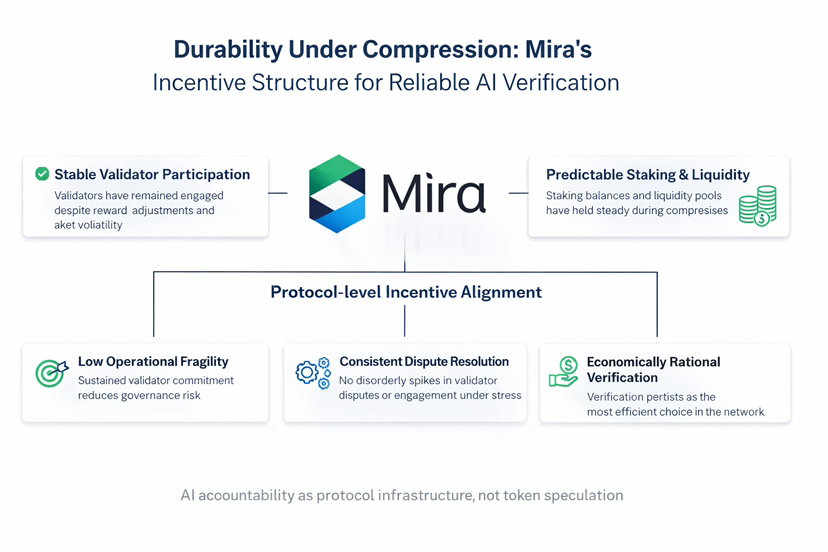

On-chain behavior provides the clearest evidence. Validator participation in Mira showed no material contraction during reward adjustments. Staking balances remained stable rather than rotating reflexively. Liquidity depth held, without abrupt withdrawals during volatility. Exchange flows did not show the disorderly spikes that typically signal speculative churn.

Through a long-term capital lens, these signals matter. Low churn reduces operational fragility. Stable staking dampens governance risk. Measured liquidity behavior supports predictable execution. When dispute frequency does not expand under stress, it implies that verification incentives are aligned and economically bound.

Mira's design, as I assess it, positions AI accountability as protocol infrastructure rather than as token expression. Verification is embedded in the system's logic and enforced economically. This shifts trust from promise to mechanism.

Durability in AI verification will not be declared. It will be observed. And in systems analysis, observable coordination, especially when incentives compress, is the only signal that compounds.