Machine intelligence has evolved through three distinct phases: first it became remarkably fast, then dramatically cheaper, and finally ubiquitous. Yet throughout this evolution, one critical attribute remained absent: accountability.

This deficiency, the chasm between generated outputs and verifiable proof, is the silent failure point in most AI implementations. It does not arrive as catastrophic collapse or warning sirens, but as gradual erosion: engineering teams inserting manual oversight stages, legal departments appending liability disclaimers, and compliance officers mandating human sign-off before any AI-suggested action proceeds.

We constructed sophisticated computational systems, then surrounded them with human supervisors functioning as protective barriers.

Mira proposes an alternative methodology.

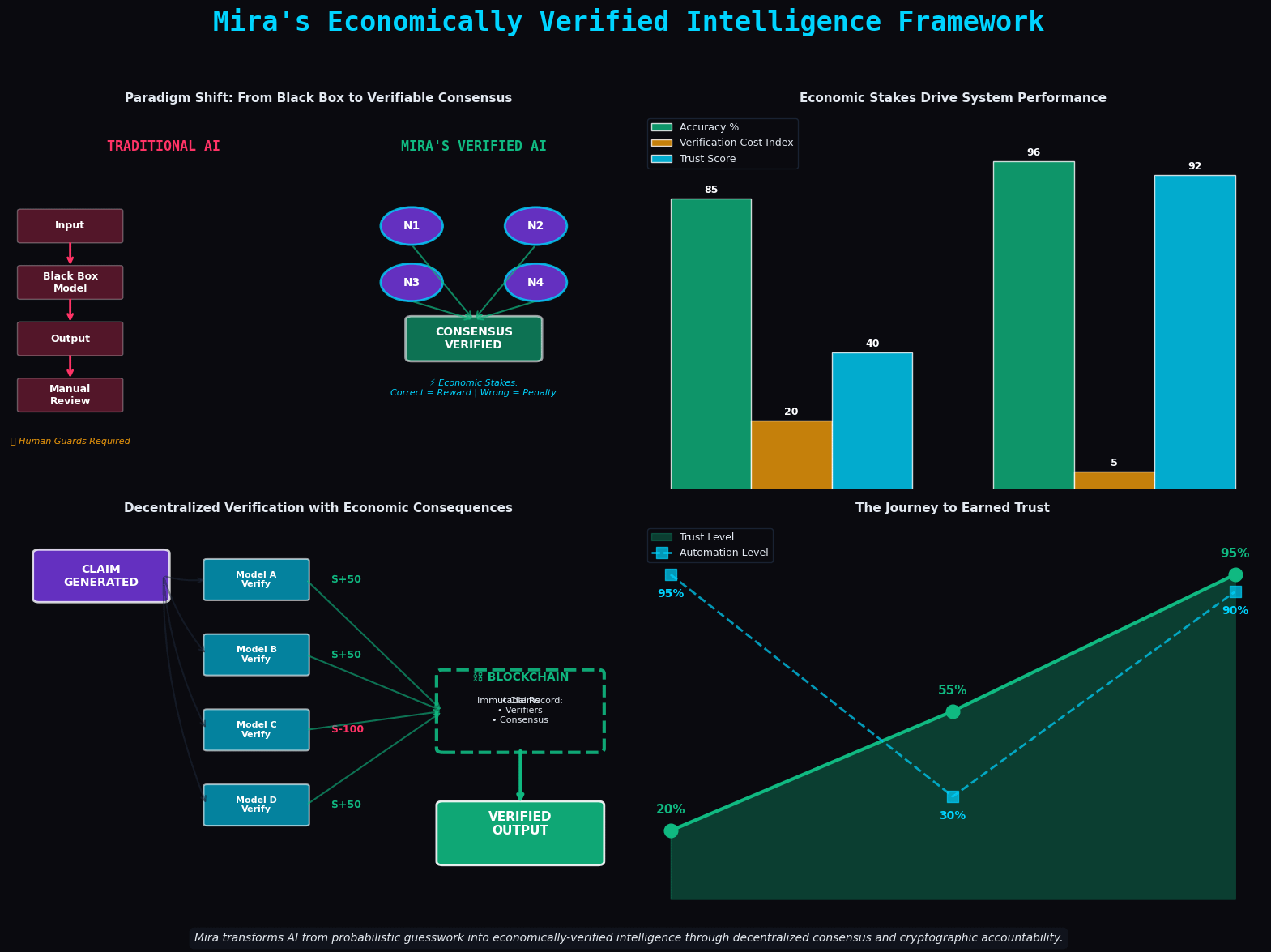

The foundational principle is not that artificial intelligence requires greater cognitive capacity. Rather, it is that intelligence without verifiability is an inherently incomplete architecture. A predictive model that is 96% accurate but cannot say which 4% of its outputs are wrong is not a system you can delegate to; it is a system that requires constant supervision.

What transforms when we conceptualize an AI response not as a definitive solution, but as an assemblage of testable assertions?

Everything changes.

Assertions can be separated, cross-examined against one another, distributed across nodes operating diverse models, and evaluated not through surface-level plausibility—does this appear reasonable?—but through distributed agreement. Do multiple independent systems, with financial exposure at risk, concur?
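To make the idea concrete, here is a minimal sketch of splitting a response into claims and putting each one to a vote. It is an illustration only, not Mira's actual protocol: the sentence-based `split_into_claims`, the `Verifier` type, and the two-thirds `threshold` are all assumptions made for the example, and each verifier stands in for an independent node running its own model.

```python
from collections import Counter
from typing import Callable, Optional

# Hypothetical verifier: takes one claim, returns True (holds) or False (does not).
Verifier = Callable[[str], bool]

def split_into_claims(response: str) -> list[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [s.strip() for s in response.split(".") if s.strip()]

def consensus(claim: str, verifiers: list[Verifier],
              threshold: float = 2 / 3) -> Optional[bool]:
    """Return the majority verdict if its share of votes meets the threshold.

    Returns None when no verdict reaches the threshold, i.e. the claim
    stays unverified instead of being accepted on plausibility alone.
    """
    votes = Counter(v(claim) for v in verifiers)
    verdict, count = votes.most_common(1)[0]
    return verdict if count / len(verifiers) >= threshold else None

def verify_response(response: str,
                    verifiers: list[Verifier]) -> dict[str, Optional[bool]]:
    """Map every extracted claim to its consensus verdict."""
    return {c: consensus(c, verifiers) for c in split_into_claims(response)}
```

The point of returning `None` is that disagreement is itself a result: a claim the nodes cannot agree on is flagged for attention rather than silently passed through.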

This final element carries greater significance than commonly acknowledged. Contemporary AI infrastructures operate without financial repercussions for erroneous outputs. The algorithm incurs no losses. The hosting platform suffers no penalties. A model's confidence and its factual accuracy are not linked; they merely look alike on the surface.

Mira implements consequence-based mechanisms. Validation nodes that verify incorrectly face financial penalties. Nodes that validate accurately receive compensation. Immediately, the optimization target shifts from eloquent expression to factual precision. Because precision generates revenue.
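The incentive can be sketched in a few lines. The numbers and names here are assumptions chosen for illustration (a flat `reward` per correct vote and a `slash_percent` of stake lost per incorrect one); the source does not specify Mira's actual reward or penalty schedule.

```python
def settle(stakes: dict[str, int], votes: dict[str, bool], outcome: bool,
           reward: int = 5, slash_percent: int = 10) -> dict[str, int]:
    """Return updated stakes after one verification round.

    Hypothetical rules: a node whose vote matches the final outcome earns
    a flat reward; a node that voted against it loses a percentage of its
    stake. Nodes that did not vote are left unchanged.
    """
    updated = dict(stakes)
    for node, vote in votes.items():
        if vote == outcome:
            updated[node] += reward
        else:
            # Integer arithmetic keeps the example free of rounding surprises.
            updated[node] -= stakes[node] * slash_percent // 100
    return updated

# Three nodes with equal stake; "c" votes against the consensus outcome.
stakes = {"a": 100, "b": 100, "c": 100}
votes = {"a": True, "b": True, "c": False}
print(settle(stakes, votes, outcome=True))  # {'a': 105, 'b': 105, 'c': 90}
```

Under rules like these, a node that guesses or rubber-stamps loses stake over time, while careful verification compounds.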

The distributed ledger component serves functional rather than decorative purposes. It establishes a tamper-resistant record of which claims were asserted, which parties evaluated them, and what consensus was reached. Validation ceases being transient; it becomes examinable, traceable, and enduring.
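What "tamper-resistant" means in practice can be shown with a hash-chained log, the basic building block behind most ledgers. This is a single-process sketch under stated assumptions, not Mira's on-chain format: the record fields (`claim`, `votes`, `verdict`, `prev`) and the function names are hypothetical, and a real distributed ledger adds replication and consensus on top.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record

def record_hash(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()

def append_record(chain: list[dict], claim: str,
                  votes: dict[str, bool], verdict: bool) -> list[dict]:
    """Return a new chain with one more record linked to the previous hash."""
    prev = chain[-1]["hash"] if chain else GENESIS
    body = {"claim": claim, "votes": votes, "verdict": verdict, "prev": prev}
    return chain + [{**body, "hash": record_hash(body)}]

def verify_chain(chain: list[dict]) -> bool:
    """Check that every record's hash and back-link are intact."""
    prev = GENESIS
    for entry in chain:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev"] != prev or record_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```

Because each record commits to the hash of the one before it, editing any past verdict or vote invalidates the chain from that record onward, which is what makes the validation history auditable after the fact.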

This represents what demanding operational environments genuinely require.

Financial services reject probabilistic accuracy. Healthcare providers cannot accept generally reliable performance. Legal practitioners find "sounds reasonable" insufficient. These domains demand outputs that can be interrogated, decomposed into constituent decisions, and traced back to confirmable origins.

Mira's architectural strategy wagers that artificial intelligence's next advancement lies not in capability expansion, but in trust earned through verification.

Not intelligence supplanting human judgment, but intelligence functioning within sufficiently rigorous frameworks to warrant delegation authority.

When this architecture functions, transformation occurs quietly. No spectacular product launches. Merely organizations progressively eliminating the review checkpoints at the end of AI workflows. Not through negligence, but through confidence that the system has merited such streamlining.