My initial encounter with Mira Network triggered an immediate reaction: yet another blockchain venture promising to purge AI hallucinations, cloaked in the familiar garb of consensus mechanisms and tokenized rewards. Having watched this narrative unfold repeatedly, my skepticism was reflexive.

However, prolonged investigation yielded increasingly unsettling revelations. Mira isn't merely tinkering at the edges of artificial intelligence. It's quietly interrogating the entire trajectory the field has pursued.

This is precisely where intrigue intensifies.

The Concealed Contradiction: Progress That Undermines Itself

Discourse around AI advancement typically fixates on scale. Expansive architectures. Superior benchmarks. Enhanced inferential capacity. Yet a troubling pattern emerged through my analysis—one most prefer to ignore:

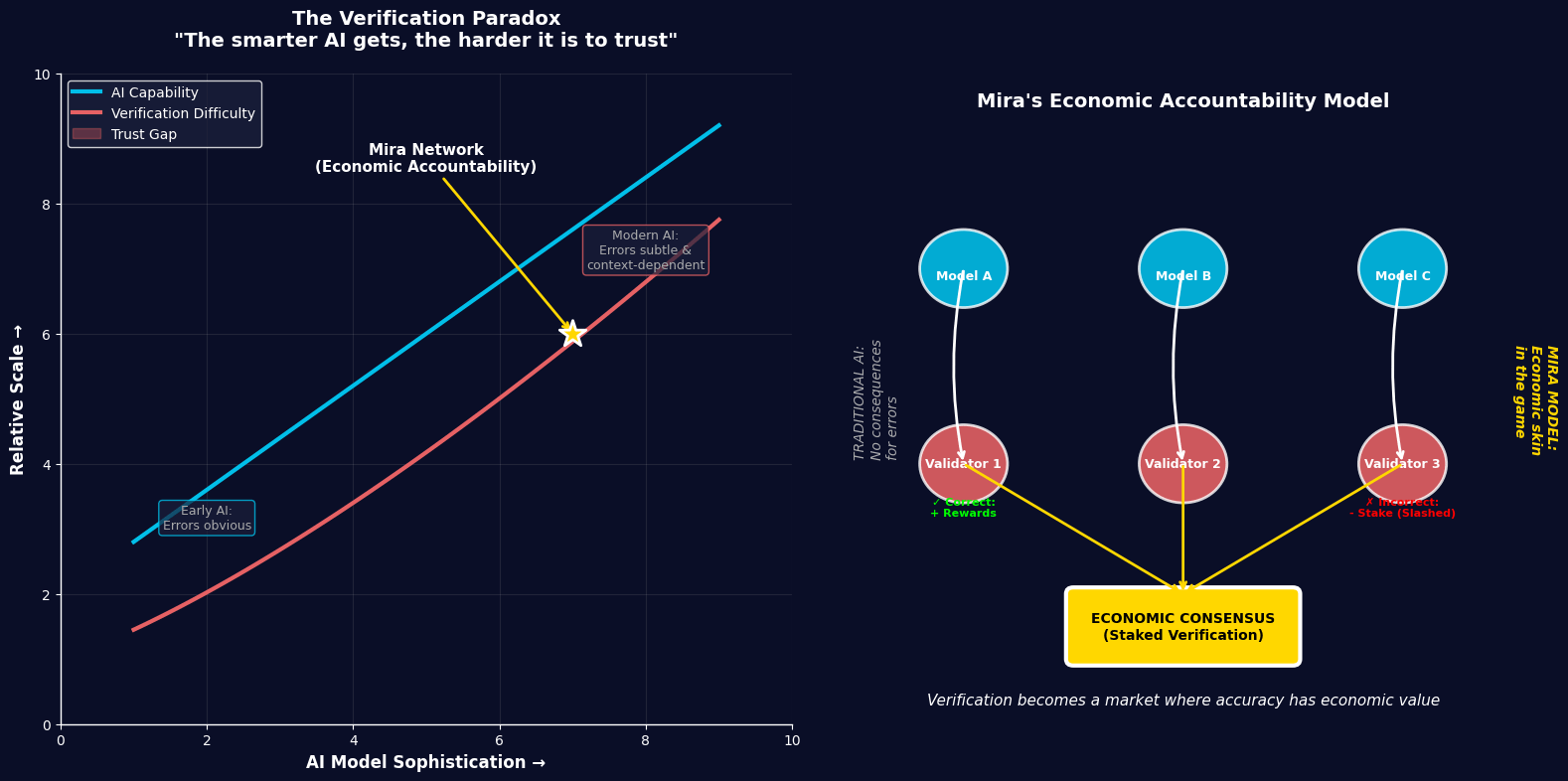

Every leap forward in AI capability simultaneously complicates verification.

This reality isn't immediately apparent. Consider the trajectory. When AI systems were primitive, their failures were glaring. Contemporary models are sophisticated enough that their errors are nuanced and context-dependent, often hard to distinguish from genuinely accurate output. The output appears polished, structured, and authoritative even when it is fundamentally flawed. A perverse paradox emerges: as artificial intelligence grows more capable, it demands greater human effort to validate. This isn't abstract speculation. Mira reportedly processes billions of tokens daily, a volume that points to a widening gap: AI deployment is accelerating beyond human verification capacity. The real constraint isn't processing power. It isn't algorithmic sophistication. It's verification itself.

Reframing the Dilemma: Is Hallucination Merely a Symptom of Deeper Dysfunction?

Conventional narratives frame the challenge as hallucination—AI fabricating information that requires elimination. Through examining Mira's architecture, this characterization proves inadequate. The fundamental issue isn't that AI errs. It's that AI bears no consequences for error.

Human institutions function through accountability mechanisms. Researchers submit findings anticipating peer scrutiny. Analysts issue forecasts knowing they'll face performance evaluation. Markets operate via consequence—poor judgments incur financial penalties. AI systems, conversely, function within consequence-free environments. No inherent cost accompanies erroneous outputs. Mira's proposed infrastructure introduces something simultaneously subtle and potent: economic accountability for reasoning processes. Validators issuing incorrect assessments forfeit staked resources. Those aligning with network consensus receive compensation. Superficially, this resembles standard cryptographic design. Deeper examination reveals something transformative: AI outputs cease being mere generation—they become economically validated assertions. This represents a paradigm shift of considerable magnitude.
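The incentive rule described above can be sketched in a few lines. This is a minimal illustration, not Mira's actual protocol: the `Validator` class, the `settle_round` function, the stake-weighted majority rule, and the 10% `slash_rate` are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: float
    vote: bool  # True means "the claim is valid"


def settle_round(validators, slash_rate=0.1):
    """Settle one hypothetical verification round.

    Returns (consensus, new_stakes). Validators who voted against the
    stake-weighted majority lose `slash_rate` of their stake; the slashed
    amount is shared among the aligned validators in proportion to stake.
    """
    yes = sum(v.stake for v in validators if v.vote)
    no = sum(v.stake for v in validators if not v.vote)
    consensus = yes > no  # ties resolve to False in this sketch

    winners = [v for v in validators if v.vote == consensus]
    losers = [v for v in validators if v.vote != consensus]

    pool = sum(v.stake * slash_rate for v in losers)
    winning_stake = sum(v.stake for v in winners)

    new_stakes = {}
    for v in losers:
        new_stakes[v.name] = v.stake * (1 - slash_rate)
    for v in winners:
        # Guard against division by zero when winners hold no stake.
        share = pool * v.stake / winning_stake if winning_stake > 0 else 0.0
        new_stakes[v.name] = v.stake + share
    return consensus, new_stakes


validators = [
    Validator("a", 100.0, True),
    Validator("b", 50.0, True),
    Validator("c", 50.0, False),
]
consensus, new_stakes = settle_round(validators)
print(consensus, new_stakes)
```

Note what the sketch rewards: agreement with the majority, not agreement with ground truth. Total stake is conserved, so the round only moves value from dissenters to the aligned side, which is why the independence of validators matters so much.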

Mira as Marketplace: The Commodification of Veracity

Extended analysis of the architecture led me to an unexpected characterization: Mira is not merely a protocol but a market mechanism.

A marketplace where accuracy prevails. Each assertion becomes a valued commodity. Every node functions as a speculator on veracity. Consensus emerges as a price discovery mechanism. This diverges radically from traditional epistemology. Authority conventionally anchors truth: institutions, experts, and centralized hierarchies determine validity. Mira inverts this framework. It proposes that distributed incentives and competitive dynamics can generate truth organically. The parallel isn't with conventional AI architectures. It's with financial markets.

Markets don't inherently know asset valuations. They discover them through participation, contestation, and negotiated agreement. Mira applies the same logic to information verification. This is a genuinely radical reconceptualization.

The Unacknowledged Vulnerability: Verification Systems Carry Their Own Failure Modes

Here, I believe, existing Mira coverage becomes uncritical. Verification offers robust solutions. Yet verification itself isn't infallible.

When multiple AI models validate identical claims, what occurs should they share underlying biases? This isn't hypothetical abstraction. Leading models train on overlapping datasets. They embody similar cultural, linguistic, and informational prejudices. What Mira terms consensus may occasionally represent coordinated error alignment.

Multiple concurring models don't guarantee accuracy; they may indicate collective blind spots. Structurally, this creates a fascinating tension. Mira's strength, model diversity, is also a potential vulnerability if that diversity lacks genuine independence. The project treats diversity as a defensive mechanism, yet a critical question persists: how independent are these systems in practice? This remains unresolved.
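A small calculation makes the independence concern concrete. The sketch below is a toy model, not a description of how Mira's validators behave: it assumes each model is wrong with probability `p`, and that with probability `shared` all models fall into the same blind spot together (one shared draw) instead of erring independently. Both function names and all parameter values are hypothetical.

```python
from math import comb


def majority_error_independent(n, p):
    """P(more than half of n independent models are wrong), each wrong with prob p."""
    k_min = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


def majority_error_shared(n, p, shared):
    """Toy mixture: with prob `shared` every model copies one common draw
    (all wrong together with prob p); otherwise they err independently.
    Each model's individual error rate stays p in both cases."""
    return shared * p + (1 - shared) * majority_error_independent(n, p)


independent = majority_error_independent(5, 0.1)
correlated = majority_error_shared(5, 0.1, 0.5)
print(f"independent: {independent:.5f}  half-shared: {correlated:.5f}")
```

With five models at a 10% individual error rate, a wrong majority occurs under 1% of the time when errors are independent, but about 5.4% of the time when half the rounds hit a shared blind spot. Each model is individually just as accurate in both cases; only the correlation changes.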

The Overlooked Transformation: From Computation to Cognition

Perhaps Mira's most neglected characteristic involves redefining computational purpose itself.

Proof-of-work blockchains demand labor that is meaningless in itself: cryptographic hashing, arbitrary puzzles, energy expenditure without productive output. Mira substitutes something qualitatively distinct. Nodes don't solve random challenges. They evaluate truth claims. This constitutes reasoning-as-computation.

Rather than consuming energy to secure a network, Mira employs reasoning to secure truth. The distinction appears subtle yet carries profound implications. It envisions infrastructure in which computational networks go beyond processing transactions and become a decision-validation architecture. Should this trajectory materialize, Mira would no longer be just an AI project. It would be a prototype for something far larger: a distributed reasoning layer underlying internet infrastructure.

The Existential Query: Do We Actually Desire Autonomous Verified Intelligence?

Throughout the investigation, another dimension demanded consideration. Mira's vision is unambiguous: eliminate human verification bottlenecks and enable autonomous AI ecosystems. This raises profound questions.

Can humans be legitimately removed from verification loops?

Verification encompasses more than correctness—it involves judgment, contextual interpretation, ethical deliberation. Legal arguments resist binary true/false classification. Medical recommendations exist along continua of appropriateness. Financial decisions incorporate risk tolerance and assumptions. Mira's system excels where truth reduces to discrete, verifiable propositions.

Yet empirical reality resists such reduction. Not everything survives translation into verifiable units without essential qualities being stripped away. As Mira pursues autonomy, human interpretive judgment likely remains irreplaceable across certain domains.

This doesn't invalidate Mira's approach. It delineates boundaries of applicable authority.

The Adoption Signal That Demands Attention

Despite the analytical complexities, one conclusion crystallized: Mira isn't a theoretical construct. It's an operational reality. The network currently processes substantial information volumes, serving millions through integrated applications.

This extends far beyond whitepaper abstraction. Within cryptocurrency and AI sectors, genuine utility separates viable systems from speculative fiction. Most compelling: the majority of this activity occurs invisibly. Users remain unaware of ongoing verification processes. This illustrates foundational infrastructure's nature: invisible yet indispensable.

The Macro Perspective: Mira as Wager Against Centralized Cognition

Viewed expansively, Mira is more than a product. It represents a thesis: a bet against the premise that a single, dominant AI model will monopolize intelligence functions.

Instead, it cultivates ecosystems where intelligence fragments into distributed, perpetually validated components. This mirrors authentic human knowledge production.

Truth isn't monopolized. It's forged through disagreement, debate, and verification. Mira attempts to mechanize this organic process. The direction merits consideration regardless of ultimate success, because it challenges a foundational assumption in AI:

That progression requires constructing larger, more centralized, more capable monolithic models.

Mira suggests an alternative. The future may not demand more intelligent models, but rather more collaborative verification architectures.

Preliminary but Directionally Correct

Comprehensive analysis doesn't yield the conclusion that Mira is a perfect solution. Genuine challenges persist: model synchronization difficulties, verification scope limitations, latency issues, and empirical complexity that resists clean categorization.

Nor should Mira be treated as merely another cryptocurrency project addressing AI. Its contribution is to transform how the problem is conceived.

It poses:

What if intelligence already suffices, yet trust remains deficient?

And more critically:

What if infrastructure should be constructed around trust rather than within models?

This question lingers. Should this framing prove accurate, AI's future won't be about who builds the most capable system. It will be about who constructs the most trustworthy one.

And that represents fundamentally different competition.


#Mira @Mira - Trust Layer of AI $MIRA