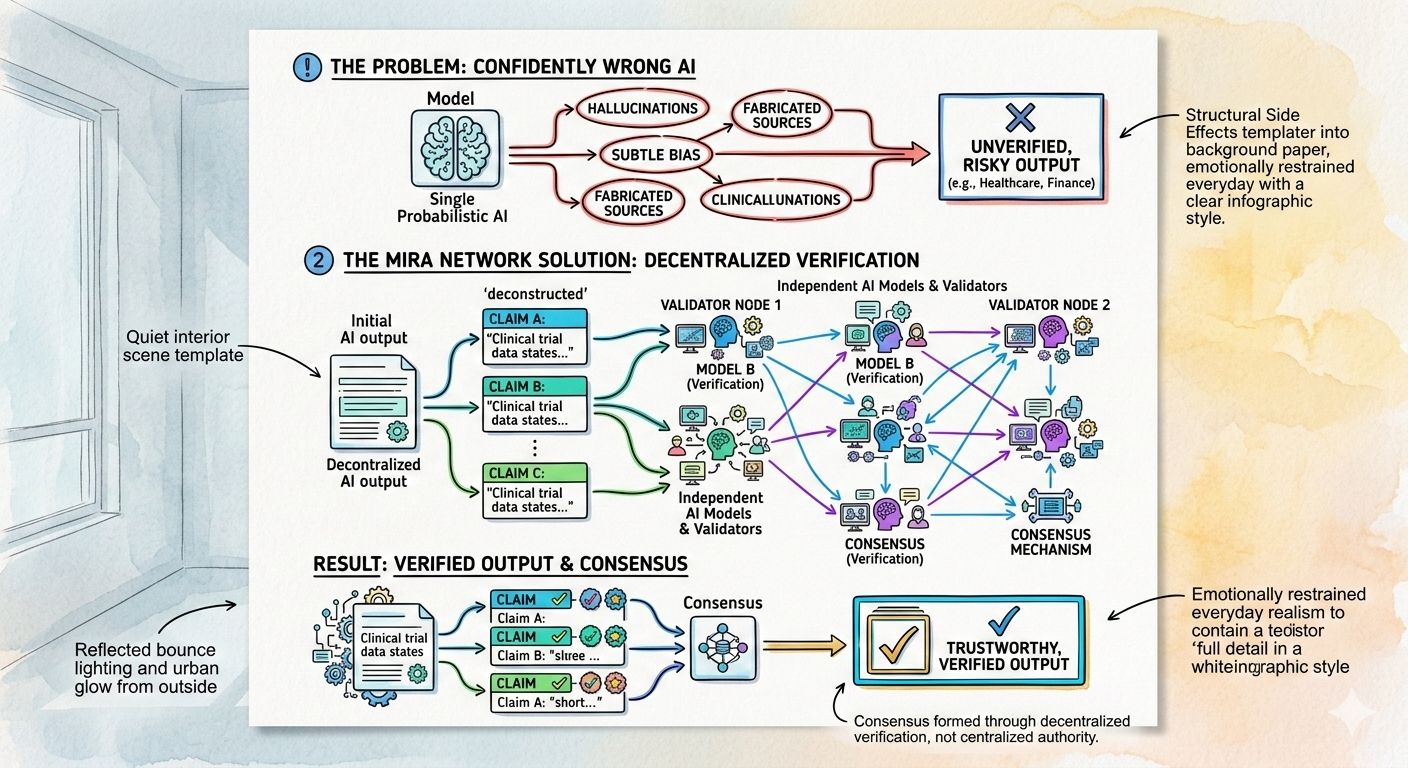

Artificial intelligence is impressive. It writes, analyzes, summarizes, codes, and even reasons in ways that felt impossible just a few years ago. But anyone who has used it seriously knows the uncomfortable truth: it can be confidently wrong. Hallucinations, subtle bias, and fabricated sources are not rare edge cases. They are structural side effects of probabilistic systems. When AI is used for entertainment, an occasional error might be acceptable. When it’s used for finance, healthcare, governance, or autonomous systems, the same error becomes a serious problem.

Mira Network is built around a simple but powerful idea: instead of trusting AI outputs blindly, verify them. Rather than assuming a single model should be perfect, Mira treats every response as a collection of claims that can be checked. The network breaks down complex outputs into smaller, testable components and distributes them across independent AI models and validators. Consensus is then formed through decentralized verification, not centralized authority.
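
To make the idea concrete, here is a minimal sketch of claim-level verification. It is not Mira's actual protocol: the sentence-based splitter, the stub verifiers, the `KNOWN_FACTS` set, and the two-thirds threshold are all assumptions chosen for illustration. In a real network the verifiers would be independent AI models rather than lookups.

```python
from fractions import Fraction
from typing import Callable, Dict, List

# A verifier is any callable that judges a single claim as True or False.
Verifier = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive decomposition (assumption): treat each sentence as one claim."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_claim(claim: str, verifiers: List[Verifier],
                 threshold: Fraction = Fraction(2, 3)) -> bool:
    """Accept a claim only if at least `threshold` of the verifiers approve it."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = [v(claim) for v in verifiers]
    return Fraction(sum(votes), len(votes)) >= threshold


def verify_output(output: str, verifiers: List[Verifier]) -> Dict[str, bool]:
    """Check every claim in an output independently."""
    return {c: verify_claim(c, verifiers) for c in split_into_claims(output)}


# Hypothetical stand-ins for independent models.
KNOWN_FACTS = {"Paris is the capital of France"}


def strict_a(claim: str) -> bool:
    return claim in KNOWN_FACTS


def strict_b(claim: str) -> bool:
    return claim in KNOWN_FACTS


def lenient(claim: str) -> bool:
    return True  # a careless verifier that approves everything


result = verify_output(
    "Paris is the capital of France. The Moon is made of cheese",
    [strict_a, strict_b, lenient],
)
print(result)
```

The careless verifier approves both claims, but the false one still fails because it only collects one of three votes. That is the point of splitting an answer into claims: one bad sentence can be rejected without discarding the rest.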

This shift matters. AI models are not designed to “know” truth; they generate the output that is statistically most likely. Mira acknowledges that reality and moves the trust layer outside the model itself. By anchoring verification results on-chain, the network creates a transparent and tamper-resistant record of how a conclusion was validated. Trust becomes something earned collectively, not assumed individually.
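
What "tamper-resistant" means in practice can be shown with a toy hash-linked log. This is only a sketch of the general technique blockchains build on, not Mira's on-chain format. The record fields and the function names `append_record` and `is_intact` are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first record


def record_hash(body: dict) -> str:
    """Deterministic SHA-256 over the record's JSON form."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(log: list, claim: str, verdict: bool) -> None:
    """Append a verification result that commits to the previous record."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"claim": claim, "verdict": verdict, "prev_hash": prev_hash}
    log.append({**body, "hash": record_hash(body)})


def is_intact(log: list) -> bool:
    """Recompute every hash and link; any edit to past records breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: entry[k] for k in ("claim", "verdict", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != record_hash(body):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_record(log, "Paris is the capital of France", True)
append_record(log, "The Moon is made of cheese", False)
print(is_intact(log))
```

Flipping a stored verdict after the fact changes that record's hash, so `is_intact` returns `False` and the change is detectable by anyone who replays the log.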

The $MIRA token is the economic backbone of this system. Validators stake tokens to participate in verification, which means they have something at risk if they behave dishonestly or carelessly. Accurate validation is rewarded, while poor performance can lead to penalties. This staking mechanism turns verification into a marketplace where incentives are aligned with reliability. Instead of relying on corporate reputation or closed review systems, the network relies on open economic accountability.
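
The incentive loop can be sketched as a single settlement round. The numbers and rules below are assumptions made for illustration, not Mira's published parameters: a stake-weighted majority decides the verdict, validators who agree with it earn a flat reward, and those who disagree lose a percentage of their stake. Agreement with the majority stands in for "accuracy" here, which is a simplification.

```python
from typing import Dict


def settle_round(votes: Dict[str, bool], stakes: Dict[str, int],
                 reward: int = 5, slash_percent: int = 10) -> bool:
    """Settle one verification round and update stakes in place.

    Returns the consensus verdict. A tie resolves to False (reject the claim).
    """
    yes_weight = sum(stakes[name] for name, vote in votes.items() if vote)
    no_weight = sum(stakes[name] for name, vote in votes.items() if not vote)
    consensus = yes_weight > no_weight

    for name, vote in votes.items():
        if vote == consensus:
            stakes[name] += reward  # reward agreement with the consensus
        else:
            stakes[name] -= stakes[name] * slash_percent // 100  # slash dissent
    return consensus


# Hypothetical validators with stakes in whole token units.
stakes = {"alice": 100, "bob": 100, "carol": 50}
votes = {"alice": True, "bob": True, "carol": False}

consensus = settle_round(votes, stakes)
print(consensus, stakes)
```

After this round, the two validators on the winning side each gain 5 tokens and the dissenting validator loses 10% of a 50-token stake. Repeated over many rounds, careless validators lose both stake and voting weight.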

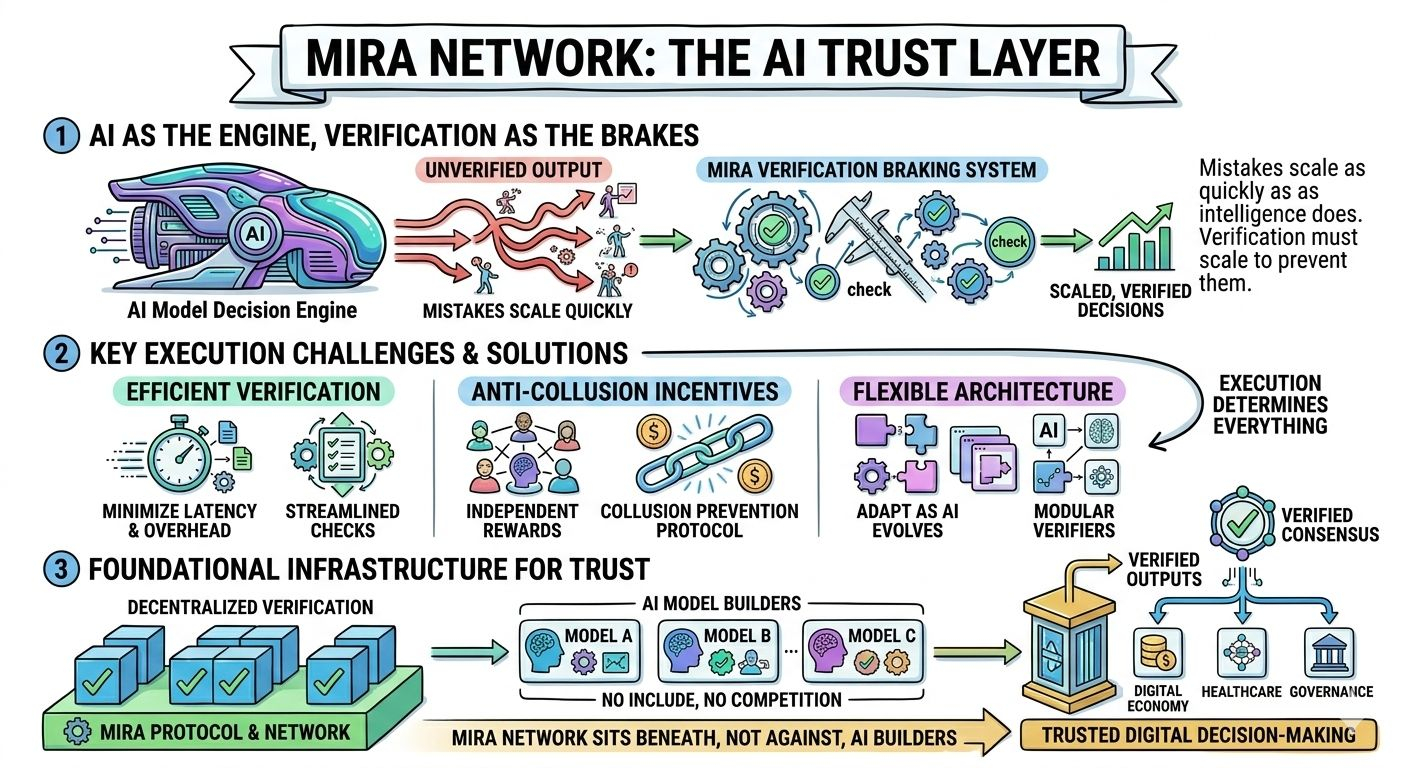

What makes this approach interesting is how naturally it fits into the current moment. AI is expanding rapidly into real-world infrastructure. Businesses are automating workflows. Developers are building autonomous agents. Regulators are asking difficult questions about transparency and accountability. In this environment, a decentralized verification layer is not just a technical upgrade; it’s a structural necessity.

Mira is not trying to compete with AI model builders. It is positioning itself as the trust layer that can sit beneath them. If AI becomes the engine of digital decision-making, verification becomes the braking system. Without it, mistakes scale as quickly as intelligence does.

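Of course, execution will determine everything. Verification must be efficient enough that checking an answer does not cost more in time or money than the answer is worth. Incentives must be carefully designed to prevent collusion, since validators who coordinate their votes could push a false claim through consensus. The network must remain flexible enough to adapt as AI architectures evolve. But if those pieces come together, Mira could become part of the foundational infrastructure behind how intelligent systems are trusted.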

We are entering a phase where intelligence is abundant but certainty is scarce. The next evolution of AI will not be defined only by smarter models, but by stronger guarantees. Mira Network represents a step toward that future, one where trust is not assumed but systematically verified.