Mira Network and Why I Stopped Believing That “Probably Correct” Is Good Enough

The more I rely on AI in my daily workflows, the more I notice something uncomfortable. It sounds right almost all the time. Structured sentences, a confident tone, clear explanations. But sounding right and being right are two different things. That gap is exactly where Mira Network starts to make sense to me.

Most AI systems today run on trust. You query a model, it answers, and you either accept the answer or verify it by hand. The burden falls on the user. Mira flips this structure. It does not try to make a single model smarter. It builds a decentralized verification layer that evaluates what the model says after the fact.

Instead of treating an AI output as a single block of text, Mira breaks it down into individual claims. These claims are distributed across independent AI validators in the network. Each validator examines them separately, and consensus is reached through blockchain coordination combined with economic incentives. Accuracy therefore rests not on a single authority but on distributed agreement.
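To make the idea concrete, here is a minimal sketch of claim-level verification. It is not Mira's actual protocol or API: the sentence-based splitter, the `verify_output` function, the two-thirds threshold, and the toy validators are all assumptions chosen for illustration.

```python
from typing import Callable, Dict, List

# A validator is modeled as any function that judges one claim (hypothetical interface).
Validator = Callable[[str], bool]


def split_into_claims(output: str) -> List[str]:
    """Naive decomposition: treat each sentence as one claim (an assumption)."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(
    output: str,
    validators: List[Validator],
    threshold: float = 2 / 3,  # assumed supermajority, not a documented Mira value
) -> Dict[str, bool]:
    """Return, for each claim, whether enough validators approved it."""
    if not validators:
        raise ValueError("at least one validator is required")
    results: Dict[str, bool] = {}
    for claim in split_into_claims(output):
        # Each validator judges the claim independently of the others.
        votes = [validate(claim) for validate in validators]
        results[claim] = sum(votes) / len(votes) >= threshold
    return results


# Toy validators: two check against a tiny fact set, one approves everything.
KNOWN_FACTS = {"Paris is the capital of France"}


def careful(claim: str) -> bool:
    return claim in KNOWN_FACTS


def gullible(claim: str) -> bool:
    return True


answer = "Paris is the capital of France. The Moon is made of cheese."
report = verify_output(answer, [careful, gullible, careful])
for claim, accepted in report.items():
    print(f"{'ACCEPTED' if accepted else 'REJECTED'}: {claim}")
```

The point of the sketch is that one unreliable validator cannot push a false claim through on its own, because each claim is judged separately and needs broad agreement.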

The blockchain layer is not decoration here. It provides transparency and immutability, so validation decisions are recorded publicly. Validators stake value behind their assessments, which means there are consequences for approving incorrect information. This economic pressure changes the dynamics of trust. Truth becomes aligned with incentives instead of resting on reputation.
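The staking mechanic can be sketched in a few lines. This is an illustration under stated assumptions, not Mira's real settlement logic: the `Vote` record, the stake-weighted majority rule, and the 10% `slash_rate` are hypothetical, and treating the majority as correct is itself a simplification.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Vote:
    validator: str   # hypothetical validator identifier
    stake: float     # value the validator puts behind its assessment
    approves: bool   # True if the validator judged the claim correct


def settle(votes: List[Vote], slash_rate: float = 0.1) -> Tuple[bool, Dict[str, float]]:
    """Decide a claim by stake-weighted majority and slash the losing side.

    Both the majority rule and slash_rate are assumptions for illustration.
    """
    total = sum(v.stake for v in votes)
    if total <= 0:
        raise ValueError("total stake must be positive")
    approving = sum(v.stake for v in votes if v.approves)
    accepted = approving * 2 > total  # strict stake-weighted majority

    new_stakes: Dict[str, float] = {}
    for v in votes:
        if v.approves == accepted:
            new_stakes[v.validator] = v.stake  # sided with consensus: stake intact
        else:
            new_stakes[v.validator] = v.stake * (1 - slash_rate)  # penalized
    return accepted, new_stakes


votes = [
    Vote("val-a", 100.0, False),
    Vote("val-b", 50.0, False),
    Vote("val-c", 40.0, True),  # approves a claim the others reject
]
accepted, stakes = settle(votes)
print("claim accepted:", accepted)
print("stakes after settlement:", stakes)
```

Here the validator that approved the rejected claim loses part of its stake, which is the "consequence" that ties a validator's vote to its own money rather than to its reputation.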

What makes this architecture relevant is the shift toward AI agents that act autonomously. When AI only drafts emails or summarizes articles, small errors are manageable. But if AI systems start executing financial transactions, managing contracts, or operating in regulated environments, you cannot accept probabilistic accuracy. You need verifiable outputs.

Mira assumes that hallucinations will not disappear entirely, and it designs around that assumption. That strikes me as practical. Of course, challenges remain. Verification adds latency, and complex reasoning has to be decomposed carefully. Validator diversity must be maintained to prevent shared bias.

Still, the principle is clear. Intelligence without verification does not scale in high-stakes environments. Mira positions itself as the reliability layer for AI, turning uncertain outputs into consensus-validated information. It is not flashy, but it addresses a fundamental problem that will only become more important as AI autonomy grows.

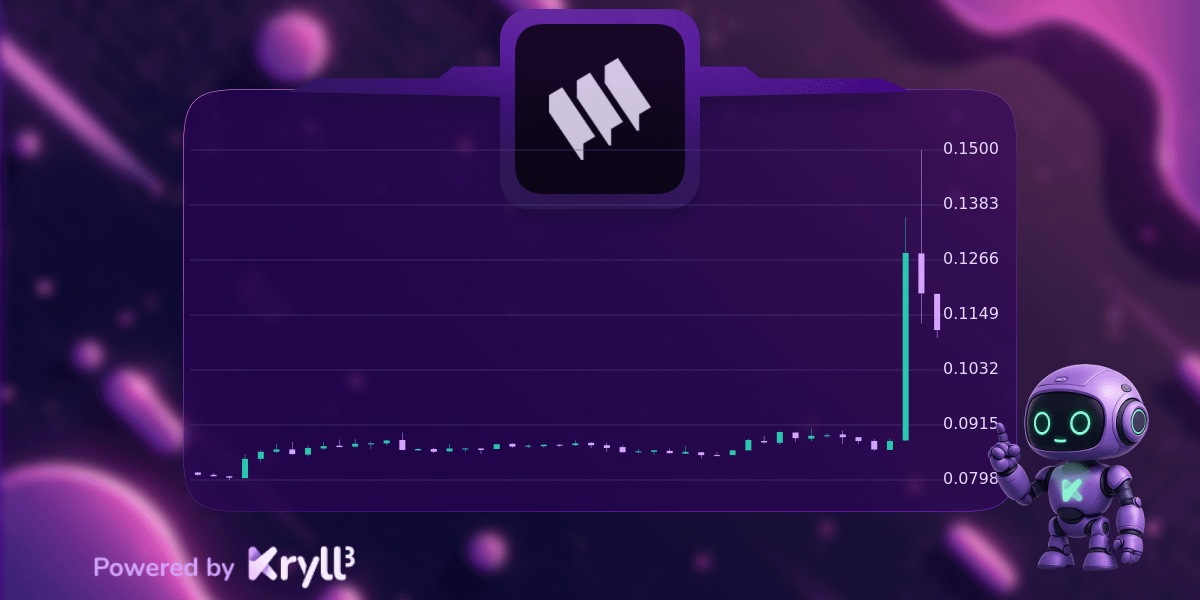

#Mira $MIRA @Mira - Trust Layer of