I first encountered Mira while exploring decentralized AI protocols and initially dismissed it as yet another blockchain wrapper around existing models. After all, plenty of projects claim to "decentralize" AI without addressing the core computational hurdles. My skepticism faded as I dug into its architecture, particularly how it orchestrates distributed AI inference. What stood out was Mira's methodical breakdown of complex outputs into independently verifiable units, which enables scalable, trustless computation and feels like a genuine step toward reliable AI in Web3.

AI systems today grapple with hallucinations: confident but incorrect outputs that erode trust in high-stakes applications like financial analysis or medical advice. Picture an AI agent summarizing market trends, where one wrong fact could cascade into poor decisions. The problem is sharper in Web3's AI integration narrative, where modular designs demand verifiable data availability and economic security, yet centralized models often fall short on transparency and bias mitigation.

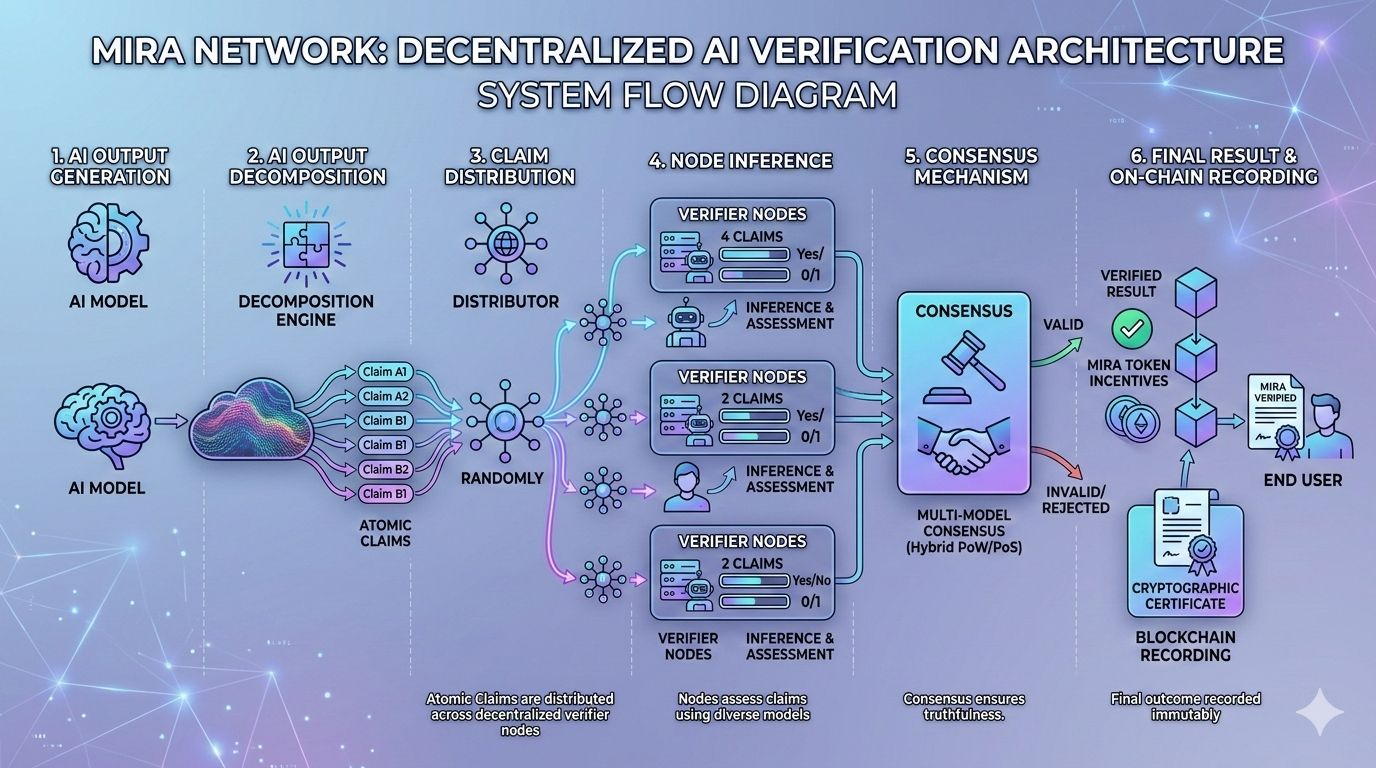

Mira approaches this by first decomposing AI-generated content into atomic claims through a process called binarization. These bite-sized facts are then distributed across a network of verifier nodes, each running independent AI models. The nodes perform inference computations to evaluate claims, voting on their validity before a consensus mechanism aggregates results and records them on the blockchain.
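To make the pipeline concrete, here is a minimal Python sketch of decompose, vote, and aggregate. It is an illustration under stated assumptions, not Mira's implementation: `binarize` is a naive sentence splitter standing in for Mira's model-driven binarization, the verifiers are toy functions, and the two-thirds acceptance `threshold` is a hypothetical parameter. For simplicity every verifier sees every claim; a real network would distribute claims across nodes and record the results on-chain.

```python
import re
from typing import Callable

# Hypothetical verifier interface: a node's model judges one claim as valid (True) or not (False).
Verifier = Callable[[str], bool]


def binarize(text: str) -> list[str]:
    """Naive stand-in for binarization: treat each sentence as one atomic claim.
    Mira's actual decomposition is model-driven; this regex split is only illustrative."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def verify_claim(claim: str, verifiers: list[Verifier], threshold: float = 2 / 3) -> bool:
    """Collect one independent vote per verifier; accept only at or above the (assumed) threshold."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = [verifier(claim) for verifier in verifiers]
    return sum(votes) / len(votes) >= threshold


def verify_output(text: str, verifiers: list[Verifier], threshold: float = 2 / 3) -> dict[str, bool]:
    """Binarize the output, then verify every claim (here, every verifier sees every claim)."""
    return {claim: verify_claim(claim, verifiers, threshold) for claim in binarize(text)}


# Toy verifiers for illustration: two check a tiny fact set, one always agrees (a "hallucinating" node).
KNOWN_FACTS = {"Paris is the capital of France."}


def fact_checker(claim: str) -> bool:
    return claim in KNOWN_FACTS


def always_yes(claim: str) -> bool:
    return True


result = verify_output(
    "Paris is the capital of France. The Moon is made of cheese.",
    [fact_checker, fact_checker, always_yes],
)
print(result)
# {'Paris is the capital of France.': True, 'The Moon is made of cheese.': False}
```

The single always-agreeing node cannot push the false claim through, because it is outvoted on that claim alone; splitting the output first means one bad sentence is rejected without discarding the correct one.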

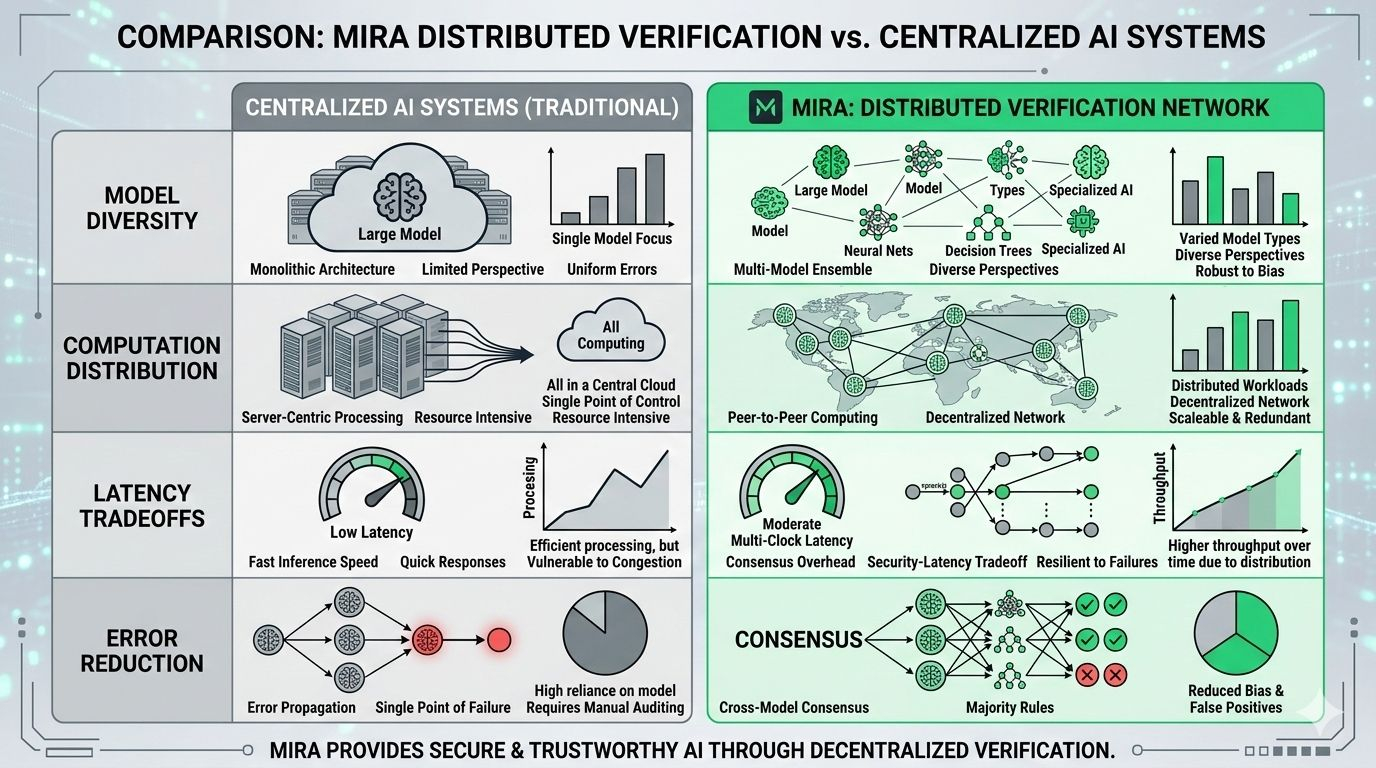

Contrast this with centralized systems like OpenAI's GPT series, where verification relies largely on internal safeguards that outside parties cannot independently audit. Mira's distributed model adds redundancy through diverse models (e.g., GPT-4, Claude, Llama) and shifts incentives from proprietary control to staked participation. The trade-off is latency: each consensus round must wait for votes from multiple verifiers, whereas a centralized model responds immediately.
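The latency side of that trade-off can be reasoned about with a small sketch. Assume (hypothetically; the post does not specify Mira's quorum rule) that a round closes once a two-thirds quorum of verifiers has replied. The round then takes as long as the quorum-th fastest reply, not the slowest one. `quorum_size`, `quorum_latency`, and the latency figures below are illustrative, not measured data.

```python
def quorum_size(n_verifiers: int) -> int:
    """Votes needed for an assumed two-thirds quorum: ceil(2n / 3), computed with integers."""
    return (2 * n_verifiers + 2) // 3


def quorum_latency(latencies_ms: list[float], quorum: int) -> float:
    """A round can close once `quorum` verifiers have replied, i.e. at the quorum-th fastest reply."""
    if not 1 <= quorum <= len(latencies_ms):
        raise ValueError("quorum must be between 1 and the number of verifiers")
    return sorted(latencies_ms)[quorum - 1]


# Hypothetical per-verifier response times in milliseconds (not measured data).
latencies = [120.0, 340.0, 95.0, 800.0, 410.0]
q = quorum_size(len(latencies))          # 4 of 5
print(q, quorum_latency(latencies, q))   # 4 410.0
```

Under these assumptions the slowest node (800 ms) does not gate the round, but the round is still several times slower than the fastest single model, before counting any consensus messaging or on-chain recording overhead, which this sketch ignores.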

Digging deeper, Mira's strength lies in error reduction that compounds with scale: if verifiers err independently, the probability that a majority of them hallucinates on the same claim falls roughly exponentially as verifiers are added, producing more robust outputs for AI agents. This comes at a cost, though. Intensive GPU demands could strain network growth if delegator participation wanes, and the whole argument rests on genuine model diversity: if nodes run models trained on similar datasets, their errors become correlated and shared biases can slip through consensus.
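The "exponential" claim and its caveat can both be checked numerically. If each of n verifiers is independently wrong with probability p < 1/2, the chance that a strict majority is wrong is a binomial tail, and Hoeffding's inequality bounds it by exp(-2n(1/2 - p)^2). The sketch below computes that tail exactly, plus a deliberately simple correlation model (an assumption for illustration, not Mira's analysis): with probability `rho` all verifiers share one common-mode judgment, as they might if trained on similar data.

```python
from math import comb


def majority_error(p: float, n: int) -> float:
    """P(a strict majority of n independent verifiers is wrong), each wrong with probability p."""
    k_min = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


def correlated_majority_error(p: float, n: int, rho: float) -> float:
    """Toy correlation model (an assumption): with probability rho all verifiers share one
    common-mode judgment (wrong with probability p); otherwise they err independently."""
    return rho * p + (1 - rho) * majority_error(p, n)


for n in (1, 3, 5, 9):
    print(n, round(majority_error(0.1, n), 6), round(correlated_majority_error(0.1, n, 0.5), 6))
```

With p = 0.1, the independent error falls from 0.1 with one verifier to under 0.001 with nine, while the correlated version never drops below rho * p = 0.05 no matter how many verifiers join. That floor is the quantitative form of the model-diversity risk.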

The decentralized AI space faces ongoing battles with compute scarcity and regulatory ambiguity, especially as models grow more power-hungry. Mira's transparent architecture, emphasizing open verification logs, builds long-term value by enabling auditable AI ecosystems, positioning it as essential infrastructure rather than a fleeting experiment.

To assess similar protocols, consider the "Verification Compute Framework," a checklist for evaluating how decentralized networks handle AI verification:

1. Decomposition Efficiency: How effectively does the protocol break outputs down for parallel processing?
2. Node Diversity: Are multiple models integrated to minimize shared errors?
3. Compute Scaling: What mechanisms exist for GPU delegation and throughput?
4. Consensus Integrity: Does the mechanism balance speed with security against collusion?
5. Auditability: Are verifications recorded on-chain for transparency?
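If you want to compare several protocols side by side, the checklist can be captured as a small data structure. This is a hypothetical sketch: the 0/1/2 scoring scale (absent, partial, strong), the class name, and the example scores are assumptions for illustration and do not rate Mira or any real project.

```python
from dataclasses import dataclass, fields


@dataclass
class VerificationComputeAssessment:
    """One field per framework criterion, each scored 0 (absent), 1 (partial), or 2 (strong).
    The scoring scale is a hypothetical convention, not part of the framework itself."""
    decomposition_efficiency: int
    node_diversity: int
    compute_scaling: int
    consensus_integrity: int
    auditability: int

    def __post_init__(self) -> None:
        # Reject scores outside the assumed 0-2 scale.
        for f in fields(self):
            if getattr(self, f.name) not in (0, 1, 2):
                raise ValueError(f"{f.name} must be 0, 1, or 2")

    def total(self) -> int:
        """Sum of all criterion scores (maximum 10)."""
        return sum(getattr(self, f.name) for f in fields(self))

    def gaps(self) -> list[str]:
        """Criteria that are not yet rated strong, in checklist order."""
        return [f.name for f in fields(self) if getattr(self, f.name) < 2]


# Scores for a purely hypothetical protocol.
example = VerificationComputeAssessment(
    decomposition_efficiency=2, node_diversity=1, compute_scaling=1,
    consensus_integrity=2, auditability=0,
)
print(example.total(), example.gaps())
# 6 ['node_diversity', 'compute_scaling', 'auditability']
```

Listing gaps rather than only a total keeps the weak criteria visible, which matters because a high aggregate score can hide a missing audit trail.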

For those engineering AI-Web3 integrations, how might Mira's binarization evolve to handle multimodal data like images or code in real-time economies?

@Mira - Trust Layer of AI #Mira $MIRA