Artificial intelligence feels almost magical today. It writes, analyzes, codes, summarizes, and even reasons in ways that seemed impossible just a few years ago. But anyone who uses AI regularly knows the uncomfortable truth: it can sound incredibly confident while being completely wrong. In casual use, that gap between intelligence and reliability is a minor inconvenience; in finance, healthcare, governance, or autonomous systems, it becomes critical.

Mira Network is built around this exact problem. Instead of trying to create a smarter model, it asks a more important question: how do we make AI outputs trustworthy?

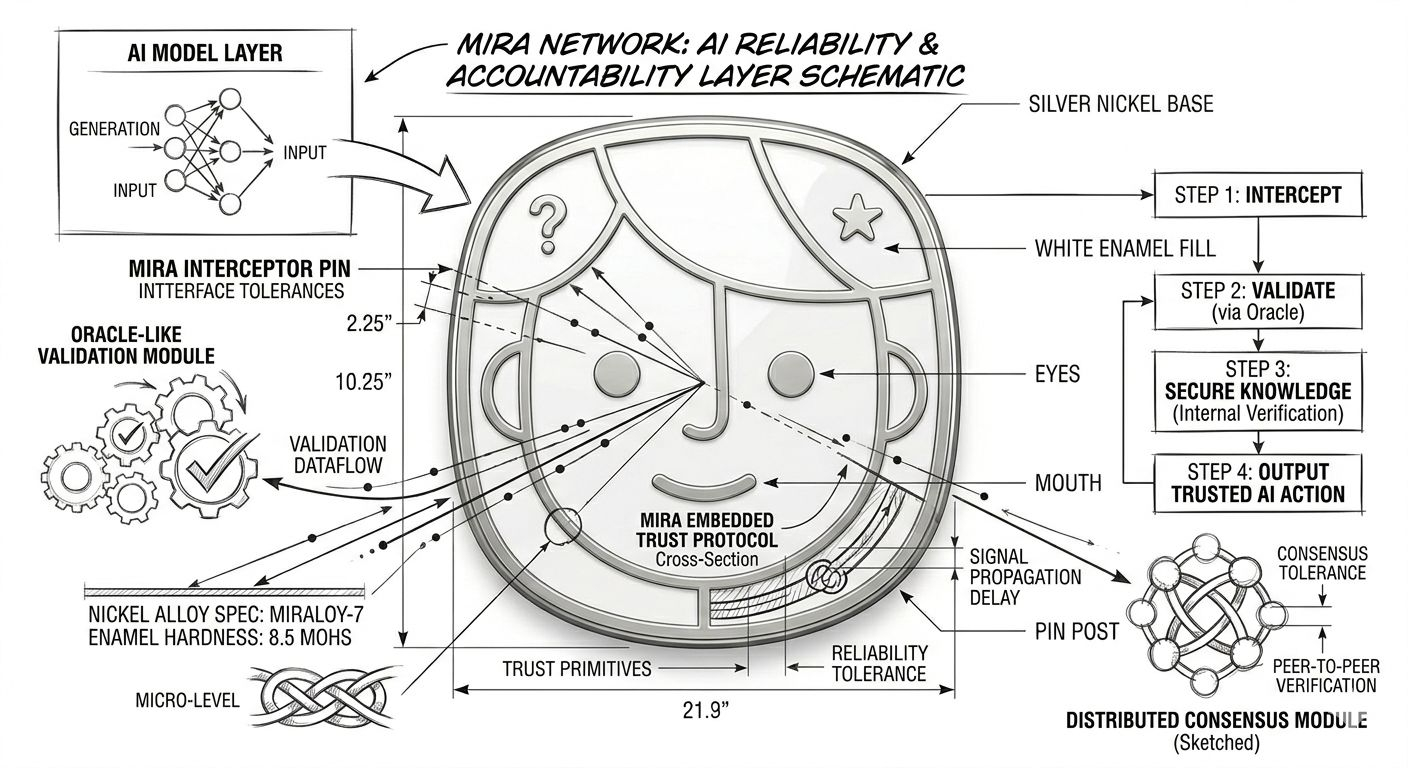

At its core, Mira is a decentralized verification protocol. The idea is not to replace AI models but to hold them accountable. When an AI generates a response, Mira breaks that output into smaller, verifiable claims. Those claims are then checked by multiple independent AI validators across the network. Rather than trusting one provider or one system, the network reaches consensus through distributed verification backed by economic incentives.
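To make this flow concrete, here is a minimal Python sketch. It is not Mira's implementation: the function names (`decompose`, `verify_claim`, `verify_output`, `make_validator`), the sentence-splitting stand-in for claim extraction, and the two-thirds agreement threshold are all illustrative assumptions. Each toy validator checks a claim against its own set of known facts, standing in for an independent AI model.

```python
from fractions import Fraction
from typing import Callable

# Hypothetical validator: returns True if it judges a claim correct.
Validator = Callable[[str], bool]


def decompose(output: str) -> list[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_claim(claim: str, validators: list[Validator],
                 threshold: Fraction = Fraction(2, 3)) -> bool:
    """Accept a claim if at least `threshold` of the validators vote it valid."""
    if not validators:
        raise ValueError("at least one validator is required")
    yes_votes = sum(1 for v in validators if v(claim))
    # Exact rational comparison avoids floating-point edge cases at the threshold.
    return Fraction(yes_votes, len(validators)) >= threshold


def verify_output(output: str, validators: list[Validator]) -> dict[str, bool]:
    """Check every extracted claim independently and report a verdict per claim."""
    return {claim: verify_claim(claim, validators) for claim in decompose(output)}


def make_validator(known_true: set[str]) -> Validator:
    """Toy validator that accepts only claims found in its own fact set."""
    return lambda claim: claim in known_true


validators = [
    make_validator({"Paris is the capital of France", "Water boils at 100 C at sea level"}),
    make_validator({"Paris is the capital of France"}),
    make_validator({"Paris is the capital of France", "Water boils at 100 C at sea level"}),
]

answer = ("Paris is the capital of France. Water boils at 100 C at sea level. "
          "The Moon is made of cheese.")
result = verify_output(answer, validators)
print(result)
```

Here the first claim passes with three of three votes, the second with two of three, and the third fails with none. In a real network each validator would be a separate model queried remotely, and claim extraction would itself be model-driven rather than a split on periods.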

This approach changes how we think about AI trust. Today, we trust AI largely because we trust the company behind it. Mira shifts that trust toward a transparent, economically secured system. Verification results are recorded on-chain, creating an immutable audit trail. A validated claim is not just “believed”: it is backed by validators' staked tokens and cryptographically recorded.

The architecture is practical and layered. An AI generates an answer. That answer is decomposed into specific claims. Independent validators review those claims. Their evaluations are compared, consensus is reached, and results are settled on-chain. Validators stake $MIRA tokens to participate. If they validate honestly and align with accurate consensus, they earn rewards. If they behave maliciously or carelessly, they risk losing their stake. Incentives are designed to encourage accuracy, not blind agreement.
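The staking logic can be sketched the same way. The following Python example is a hypothetical illustration, not Mira's actual settlement rules: the `ValidatorAccount` type, the flat reward of 10 units, the 20% slash rate, and the stake-weighted simple-majority rule are all assumptions made for the example. Validators who vote with the consensus outcome earn a reward; those who vote against it lose a share of their stake.

```python
from dataclasses import dataclass


@dataclass
class ValidatorAccount:
    name: str
    stake: int  # staked tokens, in hypothetical whole units


def settle_round(accounts: list[ValidatorAccount], votes: dict[str, bool],
                 reward: int = 10, slash_pct: int = 20) -> bool:
    """Settle one claim: a stake-weighted majority decides, then reward or slash."""
    total = sum(a.stake for a in accounts)
    yes_stake = sum(a.stake for a in accounts if votes[a.name])
    # Claim is accepted only if strictly more than half of all stake voted "valid".
    consensus = 2 * yes_stake > total

    for a in accounts:
        if votes[a.name] == consensus:
            a.stake += reward  # voted with consensus: earn a flat reward
        else:
            a.stake -= a.stake * slash_pct // 100  # voted against: lose part of stake
    return consensus


accounts = [ValidatorAccount("alice", 100), ValidatorAccount("bob", 50),
            ValidatorAccount("carol", 30)]
votes = {"alice": True, "bob": True, "carol": False}
outcome = settle_round(accounts, votes)
print(outcome, [(a.name, a.stake) for a in accounts])
```

With Alice (100) and Bob (50) voting "valid" against Carol (30), the stake-weighted majority accepts the claim: Alice ends at 110, Bob at 60, and Carol is slashed to 24. A rule that only rewards agreement with the majority cannot, by itself, distinguish honest agreement from copying other validators, which is why the incentive design has to target accuracy rather than blind agreement.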

The $MIRA token plays a central role in this system. Validators stake it, applications use it to pay for verification services, and holders use it to participate in governance decisions. Its value is meant to rest on actual network usage rather than on speculation alone. As more applications require verified AI outputs, demand for verification increases, and that demand feeds directly into token utility.

What makes this model interesting is its timing. AI is rapidly being integrated into systems that make real decisions. Enterprises are deploying AI tools in workflows that affect capital allocation, compliance, legal analysis, and automation. At the same time, regulators and institutions are asking harder questions about transparency and accountability. In this environment, a decentralized verification layer becomes more than an experiment — it becomes infrastructure.

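There are real challenges. Checking each claim with several validators multiplies the compute cost of a single answer. Validator diversity matters: if most validators run similar models trained on similar data, they can share the same blind spots and agree on the same wrong answer. Scalability and latency must be carefully optimized, since every verification round adds time before a result is final. But these are engineering problems, not conceptual weaknesses. The foundation remains strong: intelligence without verification cannot support autonomy at scale.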

Mira’s long-term potential lies in becoming a default reliability layer for AI applications. Just as blockchain oracles became essential for securing external data in decentralized finance, Mira aims to secure AI-generated knowledge before it influences real-world outcomes. It positions itself not as a competitor to advanced AI models, but as the accountability layer that makes them safer to use.

The deeper shift here is philosophical. For years, the AI race has focused on making models bigger and more capable. Mira focuses on making them trustworthy. As AI systems begin interacting with each other, executing smart contracts, and operating autonomously, trust cannot rely on brand reputation or centralized oversight. It must be embedded into the system itself.

If AI is going to power critical infrastructure, it needs more than intelligence. It needs proof. Mira Network is building that proof layer. And if the future of AI depends on accountability as much as capability, then $MIRA becomes more than a token — it becomes the mechanism that enforces machine responsibility in a decentralized world.

@Mira - Trust Layer of AI #Mira $MIRA