Artificial intelligence has become part of our everyday digital experience. It writes, analyzes, predicts, designs, and even makes decisions that once required human judgment. Yet beneath the impressive capabilities lies a growing concern: AI can sound confident while being completely wrong. It can hallucinate facts, amplify bias, or misinterpret context, and it often does so in ways that are difficult to detect. As AI begins to power financial systems, medical tools, autonomous agents, and robotics, the cost of error is no longer small. The world is discovering that intelligence without verification is not enough. This is the space where Mira Network steps in.

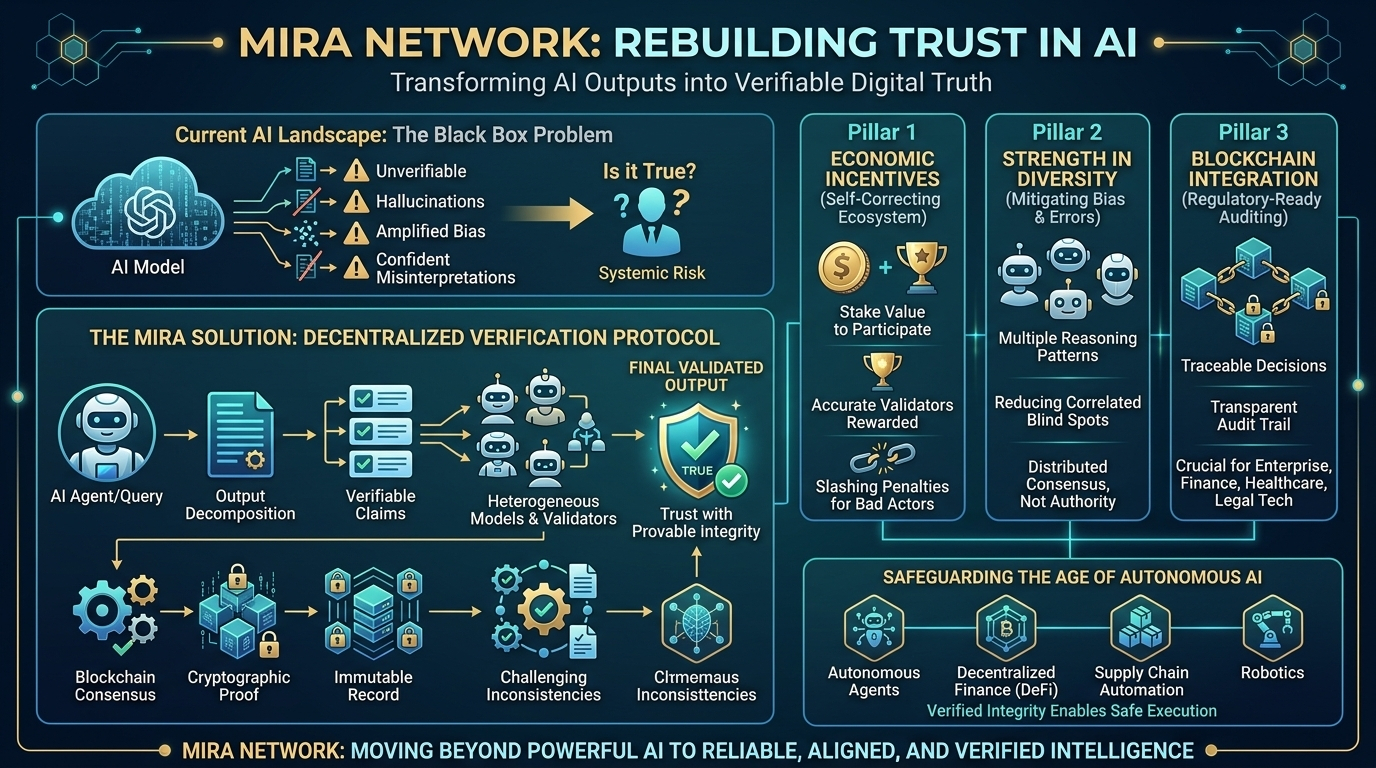

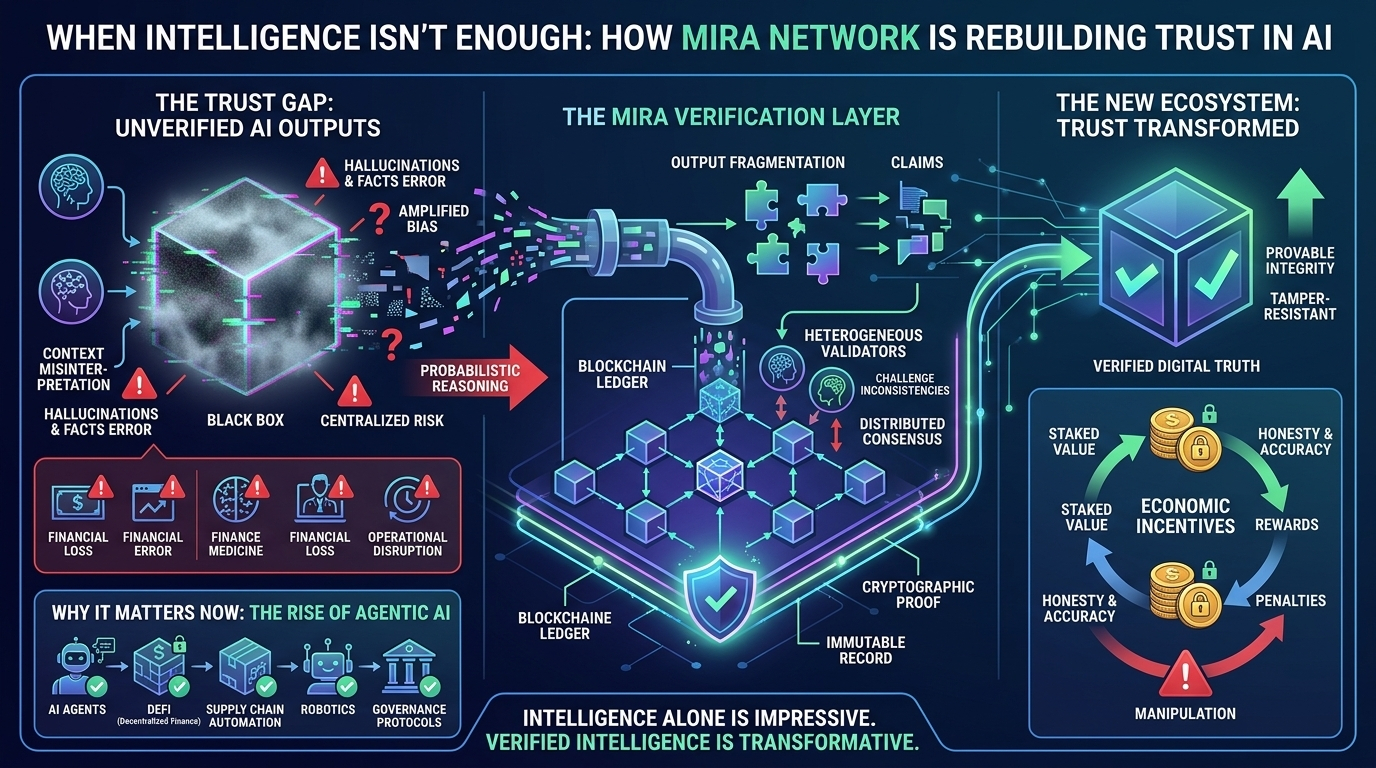

Mira Network is built around a simple but powerful idea: AI outputs should not just be generated; they should be proven. Instead of asking users to trust a single model or a centralized provider, Mira introduces a decentralized verification protocol that turns AI responses into information backed by cryptographic proof of verification. In practical terms, an AI-generated answer is not treated as final until it has been independently reviewed and confirmed by a distributed network operating on blockchain consensus.

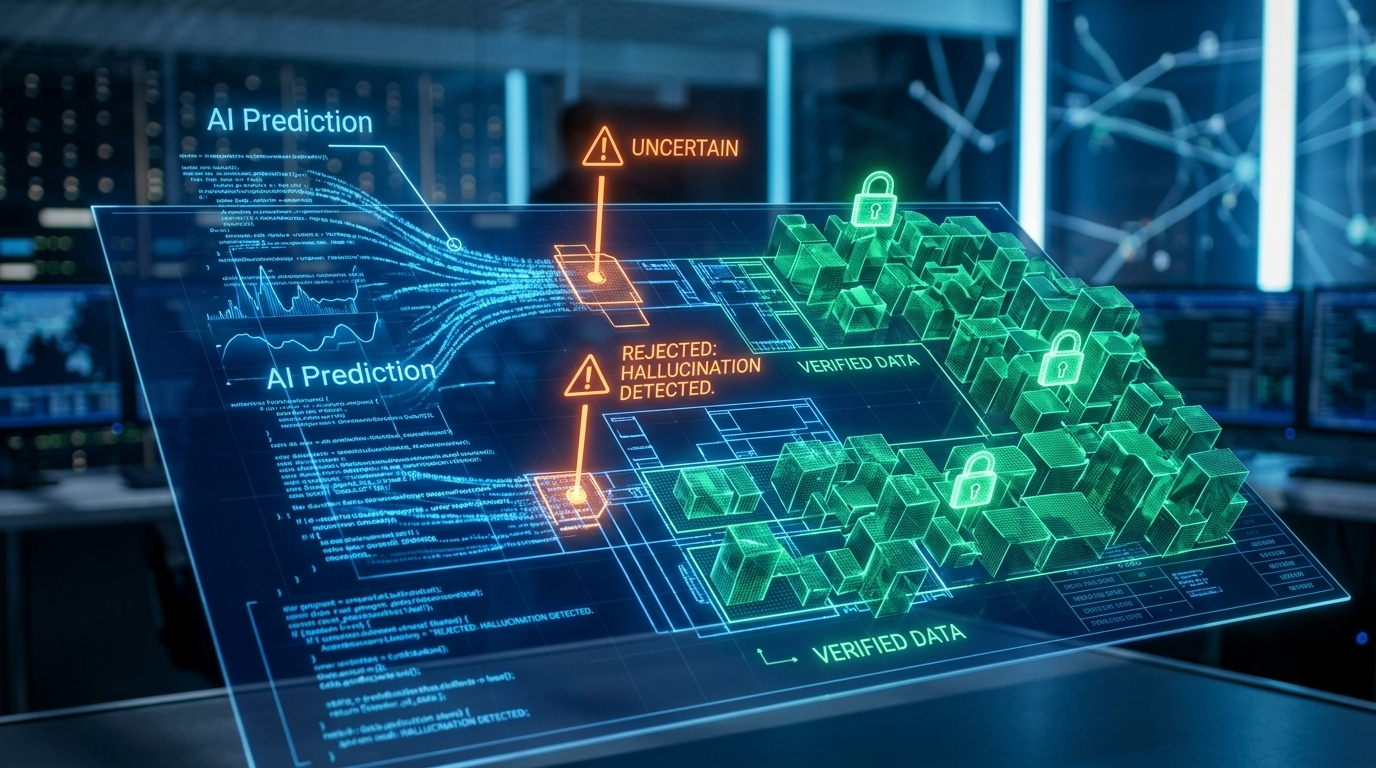

What makes this approach meaningful is how it changes the nature of trust. Traditional AI platforms operate like black boxes. A company trains a model, deploys it, and users accept its outputs with limited transparency into how those results were formed. If something goes wrong, the accountability remains centralized. Mira flips this structure. It breaks AI outputs into smaller, verifiable claims and distributes them across a network of independent AI models and validators. Each participant assesses the claims, challenges inconsistencies, and contributes to a consensus-based validation process. The final output is secured through cryptographic proof and recorded through decentralized consensus, making it transparent and tamper-resistant.
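
The article does not specify how Mira splits outputs into claims or which voting rule its network applies. As a rough illustration of the idea, the Python sketch below treats each sentence as a claim, asks several toy verifiers about each one, and accepts a claim only when at least two-thirds of them judge it valid. The sentence-splitting rule, the `Verifier` type, the toy verifiers, and the 2/3 threshold are all hypothetical choices made for this example.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable

# A verifier is modeled as any function that judges a single claim.
# This interface is hypothetical; Mira's real verifier API is not described here.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_against: int
    verified: bool


def split_into_claims(output: str) -> list[str]:
    """Naive decomposition: each sentence becomes one claim (an assumption)."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(output: str, verifiers: list[Verifier],
                  threshold: float = 2 / 3) -> list[ClaimResult]:
    """Ask every verifier about every claim; a claim passes only if the share
    of 'valid' votes reaches the (hypothetical) supermajority threshold."""
    results = []
    for claim in split_into_claims(output):
        votes = Counter(verifier(claim) for verifier in verifiers)
        verified = votes[True] / len(verifiers) >= threshold
        results.append(ClaimResult(claim, votes[True], votes[False], verified))
    return results


# Toy verifiers standing in for independent models.
KNOWN_FACTS = {"Paris is the capital of France"}


def fact_checker(claim: str) -> bool:
    return claim in KNOWN_FACTS


def lenient_model(claim: str) -> bool:
    return True  # accepts everything, i.e. a badly calibrated verifier


results = verify_output(
    "Paris is the capital of France. The Moon is made of cheese.",
    [fact_checker, fact_checker, lenient_model],
)
for r in results:
    print(r.claim, "->", "verified" if r.verified else "rejected")
```

Here the badly calibrated `lenient_model` votes for the false claim, but it is outvoted, so only the first claim is reported as verified.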

This system introduces economic incentives that encourage honesty and accuracy. Validators stake value to participate, so they have something to lose if they act dishonestly. Those who consistently verify claims accurately are rewarded, earning both financial returns and reputation. Those who attempt manipulation risk penalties against their stake. Over time, this creates a self-correcting ecosystem in which reliability is economically enforced rather than merely promised through marketing.
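
To make the incentive loop concrete, here is a minimal sketch of stake-based settlement, assuming a validator gains a small percentage of its stake when its vote matches the final consensus and loses a larger percentage when it does not. The `Validator` class, `settle_round`, and both rates are illustrative assumptions, not Mira's published parameters.

```python
from dataclasses import dataclass

# Hypothetical parameters: the article does not state actual reward or penalty sizes.
REWARD_RATE = 0.01  # stake growth for voting with the final consensus
SLASH_RATE = 0.10   # stake lost for voting against the final consensus


@dataclass
class Validator:
    name: str
    stake: float
    reputation: int = 0


def settle_round(validators: dict[str, Validator],
                 votes: dict[str, bool], consensus: bool) -> None:
    """Reward validators who matched consensus and slash those who did not."""
    for name, vote in votes.items():
        validator = validators[name]
        if vote == consensus:
            validator.stake += validator.stake * REWARD_RATE
            validator.reputation += 1
        else:
            validator.stake -= validator.stake * SLASH_RATE
            validator.reputation -= 1


validators = {n: Validator(n, stake=1000.0) for n in ("alice", "bob", "mallory")}
# Mallory repeatedly votes against the consensus outcome.
for _ in range(5):
    settle_round(validators, {"alice": True, "bob": True, "mallory": False}, consensus=True)

for v in validators.values():
    print(f"{v.name}: stake={v.stake:.2f}, reputation={v.reputation}")
```

After five rounds, the validators who matched consensus have grown their stake by about 5%, while the one who kept voting against it has lost roughly 41%. One caveat of this simple rule is that it rewards agreement with the majority rather than truth itself, so an honest validator who happens to be in the minority is penalized too; it only works if most of the stake is held by honest participants.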

The timing of Mira Network’s development reflects a broader shift in technology. AI is no longer just a tool that assists humans. It is becoming agentic—capable of initiating actions, executing transactions, and interacting autonomously with digital systems. In decentralized finance, supply chain automation, robotics, and governance protocols, AI agents may soon manage assets or trigger contracts without direct human supervision. In such an environment, unverified outputs introduce systemic risk. A single hallucinated data point could lead to financial loss or operational disruption. Mira’s verification layer acts as a safeguard, ensuring that AI-driven decisions pass through collective scrutiny before execution.

Another strength of the network lies in diversity. Instead of relying on one dominant AI model, Mira distributes verification tasks across heterogeneous models. Different systems bring different training data, perspectives, and reasoning patterns. This diversity reduces shared blind spots and mitigates correlated bias. When one model makes an error, others can flag it. Consensus emerges not from authority, but from distributed evaluation.
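
The value of diversity can be made concrete with a small probability calculation. Suppose five verifiers each judge a claim wrongly 10% of the time. If their errors are independent, a majority (three or more) is wrong with probability of about 0.0086, under 1%. If they all share one blind spot, they fail together and the majority is wrong 10% of the time, no better than a single model. The Python sketch below computes both cases, using a hypothetical mixing parameter `c` for the probability that the verifiers share a blind spot; the independence assumption and the numbers are illustrative only.

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Chance that more than half of n verifiers are wrong, assuming each errs
    independently with probability p (an idealizing assumption)."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k)
               for k in range(n // 2 + 1, n + 1))


def mixed_error(n: int, p: float, c: float) -> float:
    """Toy model: with probability c the verifiers share one blind spot and
    fail together (error p, as if they were a single model); otherwise they
    err independently."""
    return c * p + (1 - c) * majority_error(n, p)


for c in (0.0, 0.5, 1.0):
    print(f"shared blind spot prob {c:.1f}: "
          f"majority wrong with prob {mixed_error(5, 0.1, c):.5f}")
```

Even a 50% chance of a shared blind spot pushes the error rate from under 1% to about 5.4%, which is why correlated errors, not just individual accuracy, matter for this kind of design.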

The integration with blockchain infrastructure adds another layer of credibility. Once a claim is verified and finalized, it becomes part of an immutable record. For enterprises and institutions operating under regulatory scrutiny, this auditability is critical: decisions informed by AI can be traced back to verifiable consensus, offering a transparent record of how each output was validated. In industries such as finance, healthcare, and legal technology, this kind of accountability is not optional; it is essential.
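
The tamper-evidence behind an audit trail like this can be illustrated with a hash chain, the basic linking structure blockchains build on: each record stores the hash of the previous one, so changing any past record invalidates every link after it. The following sketch is a simplified, single-machine illustration; the `AuditLog` class and its record fields are hypothetical. On its own, a hash chain only makes tampering detectable; it is replication under decentralized consensus that makes rewriting the whole chain impractical.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(body: dict) -> str:
    """Hash a record deterministically (sorted keys) with SHA-256."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def append(self, claim: str, verified: bool, votes_for: int, votes_total: int) -> str:
        body = {
            "claim": claim,
            "verified": verified,
            "votes_for": votes_for,
            "votes_total": votes_total,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": record_hash(body)}
        self.entries.append(entry)
        return entry["hash"]

    def is_intact(self) -> bool:
        """Recompute every hash and link; any edit to a past record breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or record_hash(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("Paris is the capital of France", True, 3, 3)
log.append("The Moon is made of cheese", False, 1, 3)
print(log.is_intact())             # True
log.entries[1]["verified"] = True  # someone rewrites history
print(log.is_intact())             # False
```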

Scalability is central to Mira’s long-term vision. As AI adoption expands globally, the volume of content requiring verification will grow dramatically. The protocol is designed to combine off-chain computational efficiency with on-chain finality: heavy verification work can run off-chain while final results are anchored on-chain, allowing high throughput without sacrificing security. A modular architecture is meant to let the system evolve alongside advances in AI models and blockchain infrastructure.
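
The article does not detail how Mira divides work between off-chain and on-chain components. One common pattern for this kind of split is to process a batch of results off-chain and commit only a Merkle root on-chain; anyone can then check that an individual result belongs to the batch using a short inclusion proof. The sketch below is a generic Merkle tree under that assumption, not Mira's actual scheme; the batch contents and function names are hypothetical.

```python
import hashlib


def h(data: bytes) -> bytes:
    """SHA-256 digest used for both leaves and interior nodes."""
    return hashlib.sha256(data).digest()


def _pad(level: list[bytes]) -> list[bytes]:
    """Duplicate the last node when a level has an odd number of nodes."""
    return level + [level[-1]] if len(level) % 2 else level


def _parent(level: list[bytes]) -> list[bytes]:
    """Hash adjacent pairs of an even-length level into the next level up."""
    return [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: list[bytes]) -> bytes:
    """Compute the root that would be committed on-chain for a batch."""
    if not leaves:
        raise ValueError("cannot commit an empty batch")
    level = [h(leaf) for leaf in leaves]
    while len(level) > 1:
        level = _parent(_pad(level))
    return level[0]


def merkle_proof(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Collect (sibling_hash, sibling_is_left) pairs from leaf to root."""
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        level = _pad(level)
        sibling = index ^ 1  # the other node in this pair
        proof.append((level[sibling], sibling < index))
        level = _parent(level)
        index //= 2
    return proof


def verify_proof(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the path to the root using only the leaf and its proof."""
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


batch = [b"claim-1:verified", b"claim-2:verified", b"claim-3:rejected"]
root = merkle_root(batch)  # only these 32 bytes would need to be stored on-chain
proof = merkle_proof(batch, 2)
print(root.hex())
print(verify_proof(batch[2], proof, root))             # True
print(verify_proof(b"claim-3:verified", proof, root))  # False
```

The on-chain footprint stays at 32 bytes no matter how large the batch is, and each proof grows only logarithmically with batch size. Duplicating the last node on odd-sized levels follows Bitcoin's convention; a production design would also separate leaf hashes from interior-node hashes (for example with distinct prefixes) to rule out second-preimage tricks.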

Governance within Mira Network is structured to remain decentralized while staying adaptable. Token holders participate in shaping protocol parameters and upgrades, so the system can evolve in response to technological progress and emerging challenges. This participatory model guards against stagnation and keeps the network aligned with its community rather than with a single controlling entity.

At a deeper level, Mira Network reflects a philosophical shift in how society approaches artificial intelligence. For years, innovation focused on making AI more powerful, more fluent, and more autonomous. Now the focus is expanding toward reliability, transparency, and alignment. Intelligence alone is impressive, but verified intelligence is transformative. By embedding verification directly into the output layer, Mira is building infrastructure that treats trust as a measurable, enforceable property rather than an assumption.

The convergence of blockchain and artificial intelligence has often been described as inevitable, yet practical implementations have remained limited. Mira Network represents a tangible realization of that convergence. It does not simply place AI on a blockchain; it uses decentralized consensus to evaluate and validate AI itself. In doing so, it bridges the gap between probabilistic reasoning and cryptographic certainty.

As the digital economy continues to automate, the question is no longer whether AI will be used in critical systems. It will. The real question is whether those systems can be trusted. Mira Network answers that challenge with a decentralized verification framework designed for the age of autonomous intelligence. By transforming AI outputs into verifiable digital truth, it offers a foundation for a future where machines do not just act intelligently, but act with provable integrity.