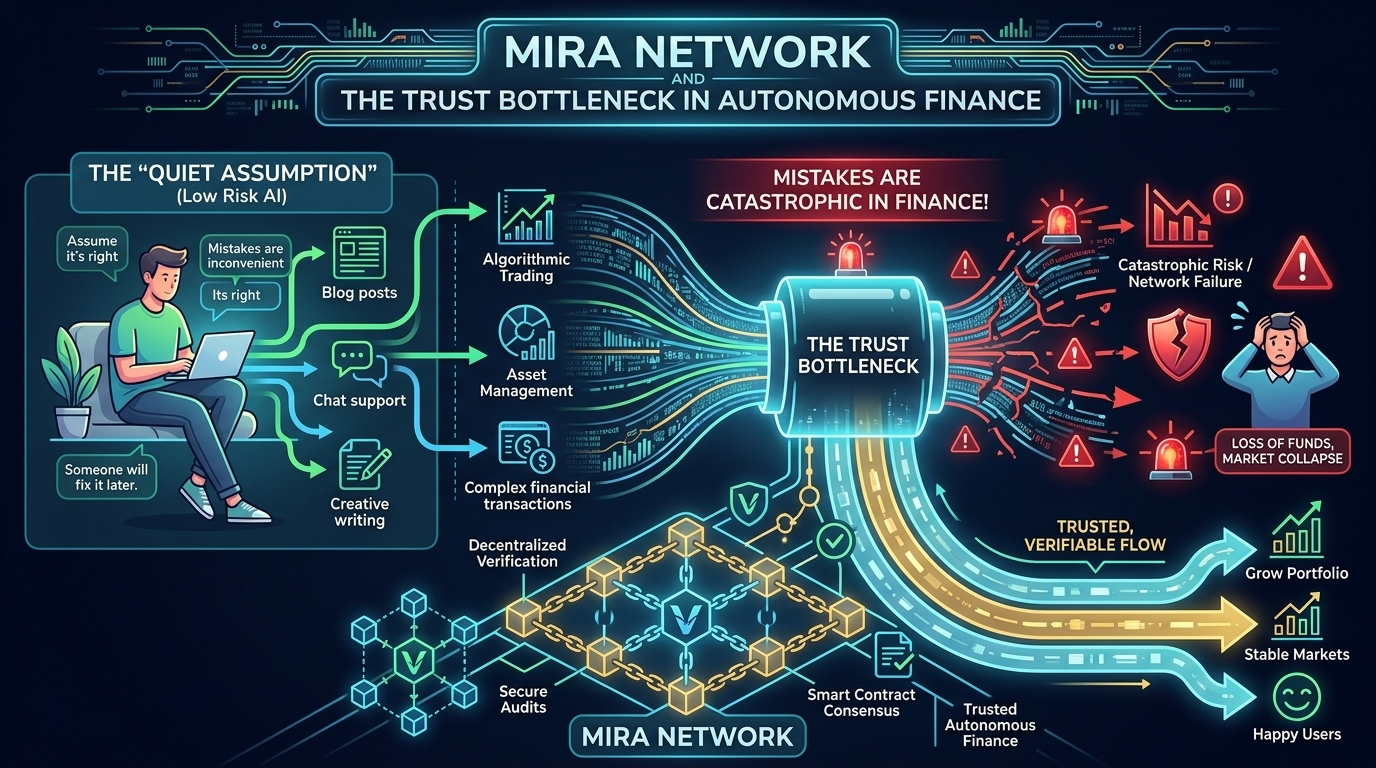

Most AI systems operate on a quiet assumption: the model is probably right, and if it’s wrong, someone will fix it later. In low-risk environments like drafting content or generating support replies, that logic holds. Mistakes are inconvenient, not catastrophic.

But finance is different.

When AI begins executing autonomous DeFi strategies on-chain, synthesizing complex research for investment theses, or shaping DAO governance decisions, “probably right” becomes a liability. Capital moves. Votes pass. Markets react. There is no pause button for review once transactions settle on a blockchain.

This is the trust bottleneck.

The challenge isn’t that AI models are inherently flawed. It’s that their reliability is opaque. A language model can produce a confident answer without providing a measurable signal of contextual accuracy. In high-stakes systems, that ambiguity creates structural risk.

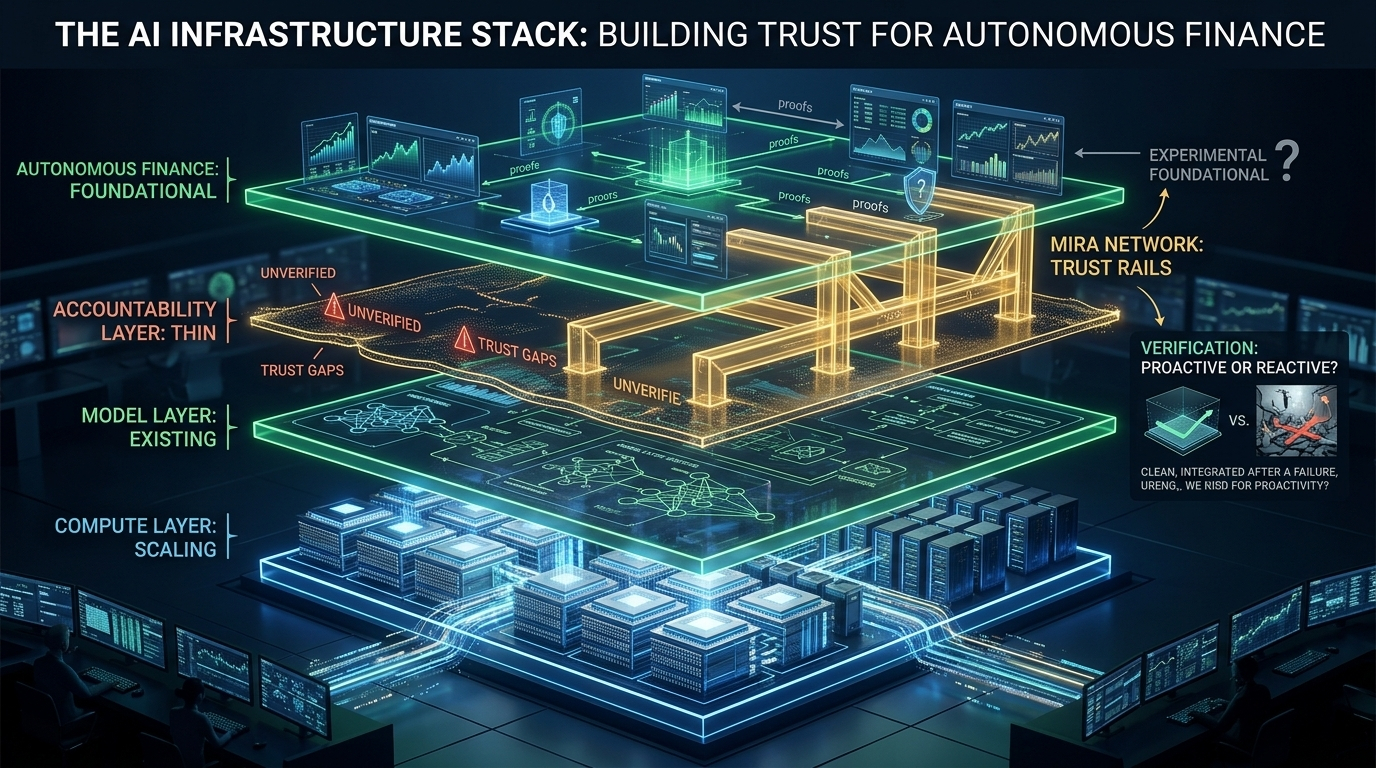

As AI capability accelerates, accountability infrastructure has not kept pace. We have compute. We have increasingly powerful models. What’s missing is a robust verification layer.

Decentralized verification networks offer a path forward. Instead of accepting outputs at face value, they decompose AI responses into discrete, reviewable claims. Independent validators assess those claims. Agreement with consensus is rewarded. Unsupported divergence carries economic consequences. Incentives shape diligence.
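To make the incentive loop concrete, here is a minimal sketch of how a single claim might be settled. It is an illustration only, not Mira Network's or any other network's actual protocol: the `Vote` type, the `settle_claim` function, the two-thirds quorum, and the reward and penalty amounts are all assumptions chosen for this example.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class Vote:
    validator: str   # hypothetical validator identifier
    claim_id: str    # which decomposed claim is being reviewed
    verdict: bool    # True = the validator found the claim supported


def settle_claim(
    votes: List[Vote],
    stakes: Dict[str, float],
    reward: float = 1.0,    # assumed payout for agreeing with consensus
    penalty: float = 2.0,   # assumed slash for diverging from consensus
) -> Tuple[Optional[bool], Dict[str, float]]:
    """Settle one claim; return (consensus verdict or None, updated stakes)."""
    updated = dict(stakes)  # leave the caller's stakes untouched
    if not votes:
        return None, updated

    tally = Counter(v.verdict for v in votes)
    verdict, count = tally.most_common(1)[0]

    # Assumed rule: at least two-thirds of votes must agree.
    # Integer arithmetic avoids floating-point edge cases.
    if count * 3 < len(votes) * 2:
        return None, updated  # no consensus, so nobody is paid or slashed

    for v in votes:
        if v.verdict == verdict:
            updated[v.validator] += reward
        else:
            updated[v.validator] -= penalty
    return verdict, updated


votes = [
    Vote("alice", "claim-1", True),
    Vote("bob", "claim-1", True),
    Vote("carol", "claim-1", False),
]
stakes = {"alice": 10.0, "bob": 10.0, "carol": 10.0}
verdict, new_stakes = settle_claim(votes, stakes)
```

Setting the penalty higher than the reward is one way to make careless or dishonest voting cost more than careful voting earns. A real network would also need to handle questions this sketch ignores, such as what counts as "unsupported" divergence and how to keep validators from colluding.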

For Web3 ecosystems, this architecture has another advantage: auditability. When verification is anchored to blockchain records, every review becomes traceable. Who validated the output? When? On what basis? Transparency turns AI results from opaque predictions into defensible artifacts.
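A rough sketch of what a traceable review record could look like follows. It uses an in-memory, hash-chained list as a stand-in for on-chain storage. The field names and the `append_record` and `verify_log` helpers are hypothetical and do not reflect any specific network's record format.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder hash for the first record


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same body always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_record(log, validator, claim_id, verdict, basis, timestamp):
    """Append a review record that answers who, when, and on what basis."""
    body = {
        "validator": validator,   # who validated the output
        "timestamp": timestamp,   # when
        "claim_id": claim_id,
        "verdict": verdict,
        "basis": basis,           # on what basis (for example, a source reference)
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record["hash"]


def verify_log(log) -> bool:
    """Return True only if no record was altered, removed, or reordered."""
    prev = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev_hash"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


log = []
append_record(log, "alice", "claim-1", True, "matches cited filing", 1700000000)
append_record(log, "bob", "claim-1", True, "independent recomputation", 1700000060)
intact = verify_log(log)
```

Because each record commits to the hash of the one before it, changing any earlier verdict invalidates every later hash. That property is what lets an auditor check the history instead of taking it on faith.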

This shift reframes the adoption curve for AI in finance. The limiting factor is no longer model intelligence. It’s institutional trust.

Verification layers don’t just improve accuracy; they make AI outputs survivable under scrutiny. They enable autonomous systems to operate in environments where credibility is non-negotiable.

The AI infrastructure stack is still maturing. The model layer exists. The compute layer scales. The accountability layer remains thin.

Projects like Mira Network are positioning themselves to close that gap by building the trust rails that autonomous finance needs to move from experimental to foundational.

In infrastructure markets, the systems that become default workflows tend to win. The open question is whether markets will prioritize verification proactively, or only after a failure makes its absence undeniable.