@Quack AI Official Agent Infrastructure & Economy: Reputation as the Core of Autonomous Trust

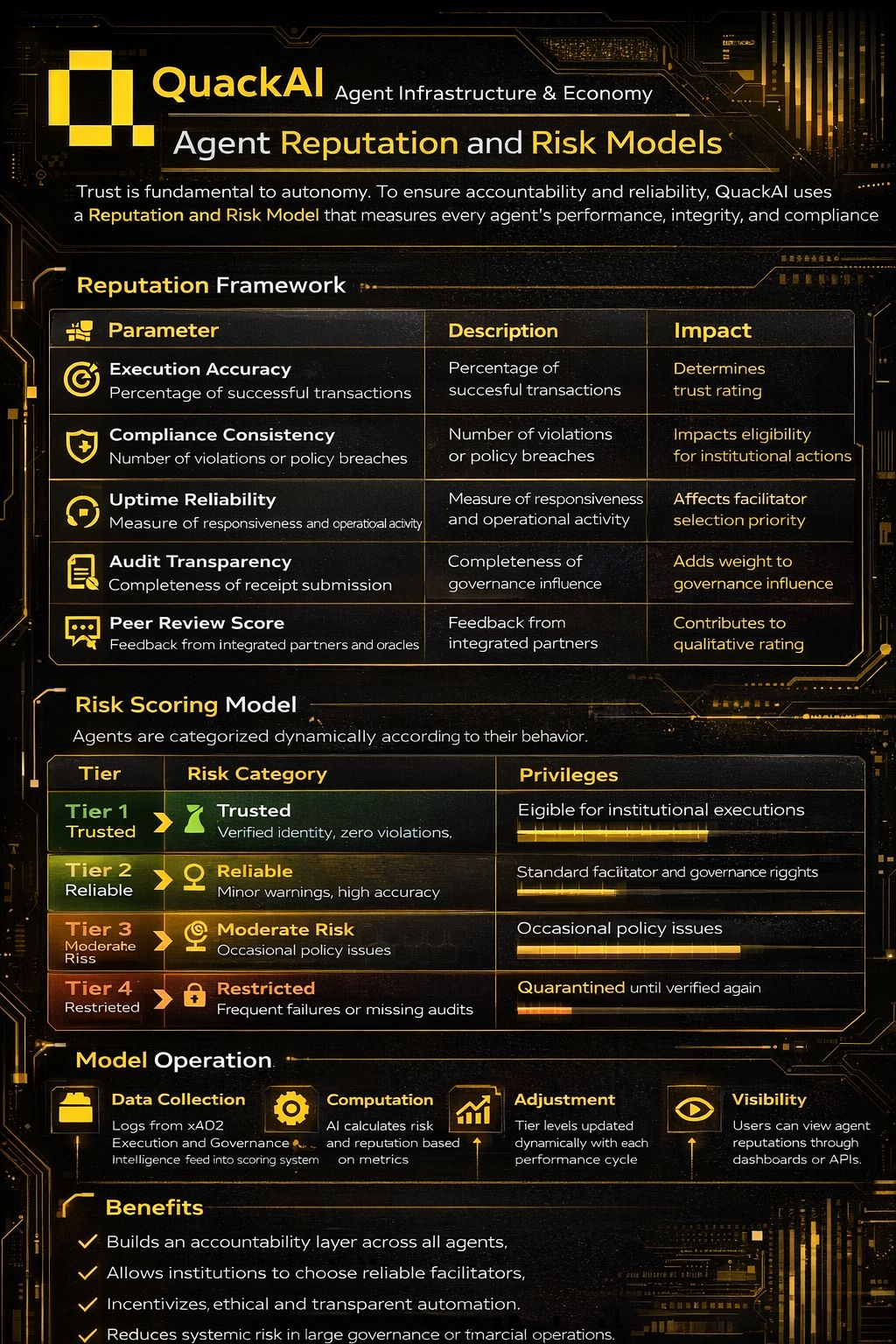

Autonomy without accountability is systemic risk. QuackAI addresses this through a structured Agent Reputation and Risk Model that continuously measures performance, integrity, and compliance.

The reputation framework evaluates five core parameters: execution accuracy (transaction success rate), compliance consistency (policy violations), uptime reliability (operational responsiveness), audit transparency (receipt completeness), and peer review score (partner and oracle feedback). These metrics form a quantifiable trust index for every agent.
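The post does not say how the five metrics are combined into the trust index. As a minimal sketch, assume each metric is normalized to a 0-to-1 range and weighted equally. The field names, the equal weights, and the 0.25-per-violation penalty below are hypothetical and are not QuackAI's published formula.

```python
from dataclasses import dataclass

# Hypothetical weights: the post does not say how the metrics are combined,
# so this sketch assumes an equal-weighted average.
WEIGHTS = {
    "execution_accuracy": 0.2,
    "compliance_consistency": 0.2,
    "uptime_reliability": 0.2,
    "audit_transparency": 0.2,
    "peer_review": 0.2,
}

# Assumed penalty: each policy violation removes 0.25 from compliance, floored at 0.
VIOLATION_PENALTY = 0.25


@dataclass
class AgentMetrics:
    successful_txs: int
    total_txs: int
    policy_violations: int
    uptime_fraction: float   # share of time the agent was responsive, 0 to 1
    complete_receipts: int
    expected_receipts: int
    peer_score: float        # partner and oracle feedback, assumed already 0 to 1


def normalized(m: AgentMetrics) -> dict[str, float]:
    """Map raw observations onto a 0-to-1 scale per metric."""
    # An agent with no history scores 0 on ratio metrics (conservative assumption).
    return {
        "execution_accuracy": m.successful_txs / m.total_txs if m.total_txs else 0.0,
        "compliance_consistency": max(0.0, 1.0 - VIOLATION_PENALTY * m.policy_violations),
        "uptime_reliability": m.uptime_fraction,
        "audit_transparency": (
            m.complete_receipts / m.expected_receipts if m.expected_receipts else 0.0
        ),
        "peer_review": m.peer_score,
    }


def trust_index(m: AgentMetrics) -> float:
    """Weighted sum of the normalized metrics; 1.0 is a perfect record."""
    scores = normalized(m)
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)
```

Consider an agent with 98 of 100 successful transactions, no violations, 99% uptime, complete receipts, and a 0.9 peer score. For that agent, `trust_index` returns approximately 0.974. A weighted sum keeps each metric's contribution explicit, so the index can be re-prioritized by changing the weights without touching the scoring logic.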

Based on behavior and performance history, agents are dynamically categorized into four tiers:

Tier 1 – Trusted: Verified identity, zero violations, strong audit history. Eligible for institutional execution.

Tier 2 – Reliable: High accuracy with minor warnings. Full facilitator and governance participation.

Tier 3 – Moderate Risk: Policy inconsistencies. Limited reward access.

Tier 4 – Restricted: Repeated failures or missing audits. Temporarily quarantined.
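The tier descriptions are qualitative. One way to make them executable is a rule cascade that checks the most restrictive condition first. In this sketch, the record fields and the thresholds are assumptions for illustration only. The assumed thresholds are 95% accuracy, up to two violations treated as "minor warnings", and three consecutive failures.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    TRUSTED = 1
    RELIABLE = 2
    MODERATE_RISK = 3
    RESTRICTED = 4


@dataclass
class AgentRecord:
    identity_verified: bool
    policy_violations: int
    execution_accuracy: float   # transaction success rate, 0 to 1
    audit_completeness: float   # share of complete receipts, 0 to 1
    consecutive_failures: int
    missing_audits: int


# Hypothetical thresholds; the post describes the tiers only qualitatively.
HIGH_ACCURACY = 0.95
STRONG_AUDIT = 0.99
MINOR_WARNING_LIMIT = 2       # assumption: up to 2 violations count as "minor warnings"
REPEATED_FAILURE_LIMIT = 3


def assign_tier(r: AgentRecord) -> Tier:
    # Restricted is checked first: repeated failures or missing audits override everything.
    if r.consecutive_failures >= REPEATED_FAILURE_LIMIT or r.missing_audits > 0:
        return Tier.RESTRICTED
    # Trusted: verified identity, zero violations, strong audit history.
    if r.identity_verified and r.policy_violations == 0 and r.audit_completeness >= STRONG_AUDIT:
        return Tier.TRUSTED
    # Reliable: high accuracy with at most a few minor warnings.
    if r.execution_accuracy >= HIGH_ACCURACY and r.policy_violations <= MINOR_WARNING_LIMIT:
        return Tier.RELIABLE
    # Everything else shows policy inconsistencies.
    return Tier.MODERATE_RISK
```

Because Tier 4 is checked first, a single missing audit quarantines an agent even when its other metrics look strong. This matches the description of Restricted as covering "repeated failures or missing audits."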

The model operates in cycles: execution and governance logs feed the scoring engine, an AI model recalculates each agent's reputation, tiers adjust automatically, and the results remain visible through dashboards and APIs.
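The cycle can be sketched as a small engine. Each round, it aggregates the logs, updates a running score per agent, and re-derives the tier. The post attributes the recalculation step to an AI model but gives no details. This sketch substitutes a plain exponential moving average as a stand-in. The log format, smoothing factor, starting score, and tier cut-offs are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class LogEntry:
    """Hypothetical log record: one execution or governance event."""
    agent_id: str
    success: bool     # did the execution succeed?
    violation: bool   # did it breach a policy?


# Hypothetical score cut-offs for tiers 1 to 3; anything lower is tier 4.
TIER_CUTOFFS = [(0.9, 1), (0.75, 2), (0.5, 3)]


def tier_for(score: float) -> int:
    for cutoff, tier in TIER_CUTOFFS:
        if score >= cutoff:
            return tier
    return 4


@dataclass
class ReputationEngine:
    alpha: float = 0.3           # assumed weight given to the newest cycle
    initial_score: float = 0.5   # assumed starting reputation for unseen agents
    scores: dict[str, float] = field(default_factory=dict)

    def run_cycle(self, logs: list[LogEntry]) -> dict[str, dict]:
        # 1. Aggregate this cycle's logs per agent.
        clean = defaultdict(int)
        total = defaultdict(int)
        for entry in logs:
            total[entry.agent_id] += 1
            if entry.success and not entry.violation:
                clean[entry.agent_id] += 1
        # 2. Stand-in for the AI recalculation step: an exponential moving average (assumption).
        for agent, n in total.items():
            cycle_rate = clean[agent] / n
            prev = self.scores.get(agent, self.initial_score)
            self.scores[agent] = (1 - self.alpha) * prev + self.alpha * cycle_rate
        # 3 and 4. Re-derive tiers and return a snapshot a dashboard or API could serve.
        return {a: {"score": round(s, 4), "tier": tier_for(s)} for a, s in self.scores.items()}
```

Take a new agent with ten clean executions in its first cycle. Its score moves from the assumed starting value of 0.5 to 0.65 (Tier 3). A second clean cycle lifts it to about 0.755 (Tier 2). With a moving average, reputation builds over several cycles rather than being granted by one good round.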

The outcome is structural trust. Institutions can select facilitators with measurable reliability. Agents are incentivized toward ethical automation. Systemic risk is reduced at scale.

In QuackAI, reputation is not symbolic — it is computational, dynamic, and enforceable.

$Q