The current surge in generative AI has created a paradox: we have more information than ever, but less certainty about what is actually true. In the high-stakes world of on-chain finance and autonomous legal agents, "close enough" isn't good enough. This is where @Mira - Trust Layer of AI shifts from a niche utility to essential infrastructure. By functioning as a decentralized verification layer, Mira ensures that AI outputs are not just generated, but rigorously cross-examined.

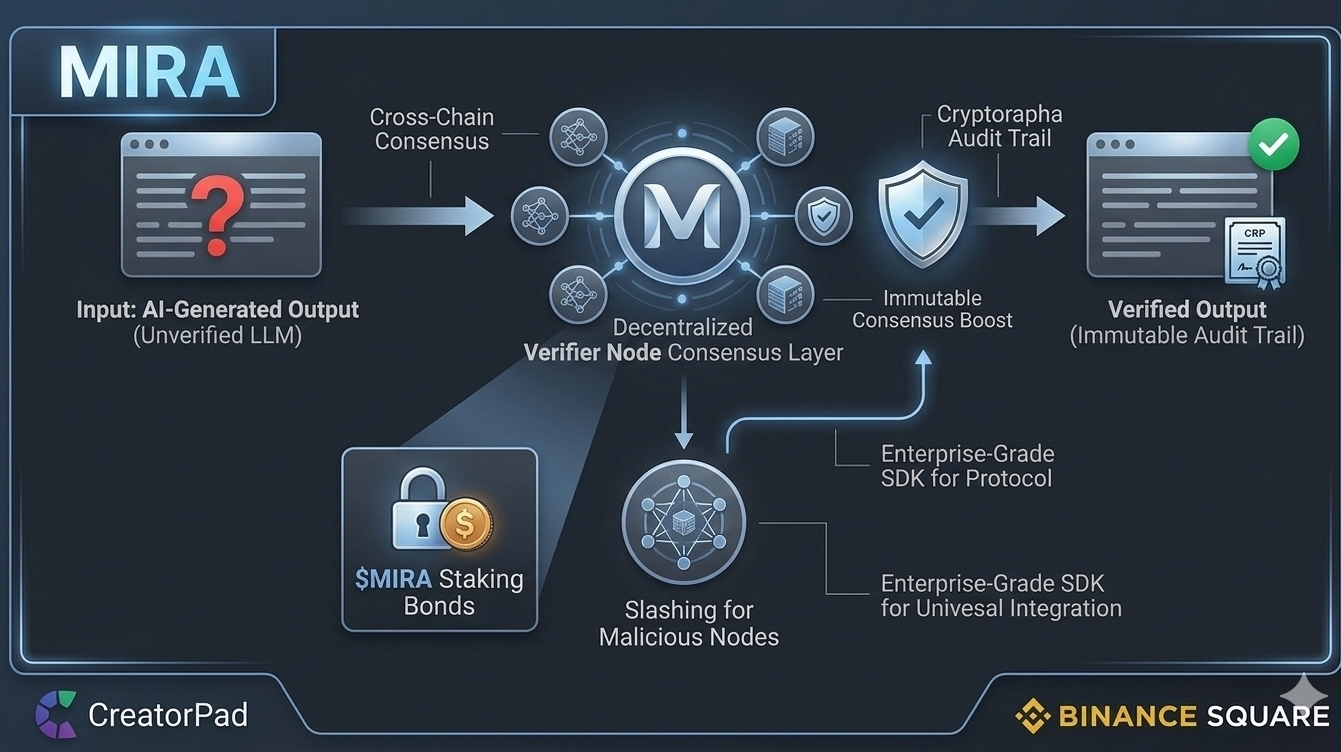

Instead of a single "black box" model providing an answer, $MIRA decomposes responses into atomic claims. These claims are then verified through a multi-model consensus involving independent nodes. This systematic approach effectively neutralizes the risk of hallucinations, those confident but incorrect fabrications that plague current LLMs, because a false claim has to get past several independent verifiers instead of just one. For developers in the Base ecosystem, this means they can finally deploy AI-driven dApps with a cryptographic guarantee of accuracy, moving past the experimental phase into enterprise-grade reliability.
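To make the claim-by-claim idea concrete, here is a minimal Python sketch of the flow described above: split a response into atomic claims, then accept each claim only if enough independent verifiers agree. Everything in it is an assumption for illustration, not Mira's actual API: the names `split_into_claims`, `verify_claim`, and `verify_response` are hypothetical, the sentence splitter is deliberately naive, the 2/3 threshold is a placeholder, and the toy lambdas stand in for real, independent models.

```python
from typing import Callable, Dict, List

# A verifier is any function that judges one claim (hypothetical stand-in for a model node).
Verifier = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive decomposition: treat each sentence as one atomic claim."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> bool:
    """Accept the claim only if the share of approving verifiers meets the threshold."""
    votes = [verifier(claim) for verifier in verifiers]
    return sum(votes) / len(votes) >= threshold


def verify_response(
    response: str, verifiers: List[Verifier], threshold: float = 2 / 3
) -> Dict[str, bool]:
    """Map every atomic claim in the response to its consensus verdict."""
    return {
        claim: verify_claim(claim, verifiers, threshold)
        for claim in split_into_claims(response)
    }


# Toy verifiers: two that check for specific keywords, one faulty node that approves everything.
toy_verifiers: List[Verifier] = [
    lambda claim: "Paris" in claim,
    lambda claim: "France" in claim,
    lambda claim: True,
]

result = verify_response(
    "Paris is the capital of France. The Moon is made of cheese.",
    toy_verifiers,
)
```

In this toy run the first claim is approved by all three verifiers, while the second is approved only by the faulty always-yes node and is rejected. That is the point of the design: one unreliable model cannot push a claim through on its own.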

As we approach the Q2 2026 roadmap, the focus is clearly shifting toward the #Mira token’s role in securing this honesty. Through a sophisticated staking and slashing mechanism, the protocol creates real economic consequences for inaccurate data. With the current CreatorPad campaign reaching its peak, the narrative is no longer just about "making AI," but about "making AI trustworthy." Those who understand that verification is the next big alpha are the ones positioning themselves for the long term. 🚀🛡️
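For readers wondering what "real economic consequences" could look like in practice, here is a rough Python sketch of a staking and slashing round. It is an assumption-heavy illustration and not the protocol's actual rules: the `Node` class, the `settle_round` function, the simple-majority rule, and the 10% slash rate are all hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Node:
    name: str
    stake: float  # tokens the node has locked up as collateral


def settle_round(nodes: List[Node], votes: Dict[str, bool], slash_rate: float = 0.1) -> bool:
    """Return the majority verdict and slash every node that voted against it.

    Assumption: a strict majority of approvals means the claim is accepted;
    a tie counts as rejection.
    """
    approvals = sum(votes[node.name] for node in nodes)
    verdict = approvals * 2 > len(nodes)
    for node in nodes:
        if votes[node.name] != verdict:
            # A dissenting node loses a fixed fraction of its stake.
            node.stake -= node.stake * slash_rate
    return verdict


nodes = [Node("a", 100.0), Node("b", 100.0), Node("c", 100.0)]
verdict = settle_round(nodes, {"a": True, "b": True, "c": False})
```

Here nodes `a` and `b` keep their full stake, while node `c`, which voted against the majority, loses 10% of its stake. A real design would also need rewards for honest nodes and protection against a colluding majority, which this sketch leaves out.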