When Machines Start Checking Each Other: Inside Mira Network’s Approach to Trustless AI

Not long ago, asking an AI for information felt like asking the smartest student in the room for help. The answer would come quickly, confidently, and often in impressive detail. But every now and then, something felt off. A date was wrong, a quote was fabricated, or a statistic appeared from nowhere. The confidence remained, even when the accuracy didn’t.

This strange mix of brilliance and uncertainty is one of the defining traits of modern artificial intelligence. AI language models are remarkably good at generating text and connecting ideas, but they don’t truly “know” things in the human sense. They generate text by predicting the most likely next word from patterns learned during training. Most of the time that works beautifully. Occasionally, it produces something that sounds convincing but simply isn’t true.

The challenge becomes more serious as AI moves into real workflows. When machines begin summarizing research, assisting with financial analysis, or powering automated agents that interact with other systems, a single confident mistake can ripple through an entire chain of decisions. The question is no longer whether AI can generate answers; it clearly can. The deeper question is whether those answers can be trusted.

Mira Network approaches this problem from a direction that feels almost philosophical: instead of trying to build the perfect AI, it assumes that no single model should be trusted on its own. The network treats AI outputs the way a careful researcher treats a source—with skepticism and verification.

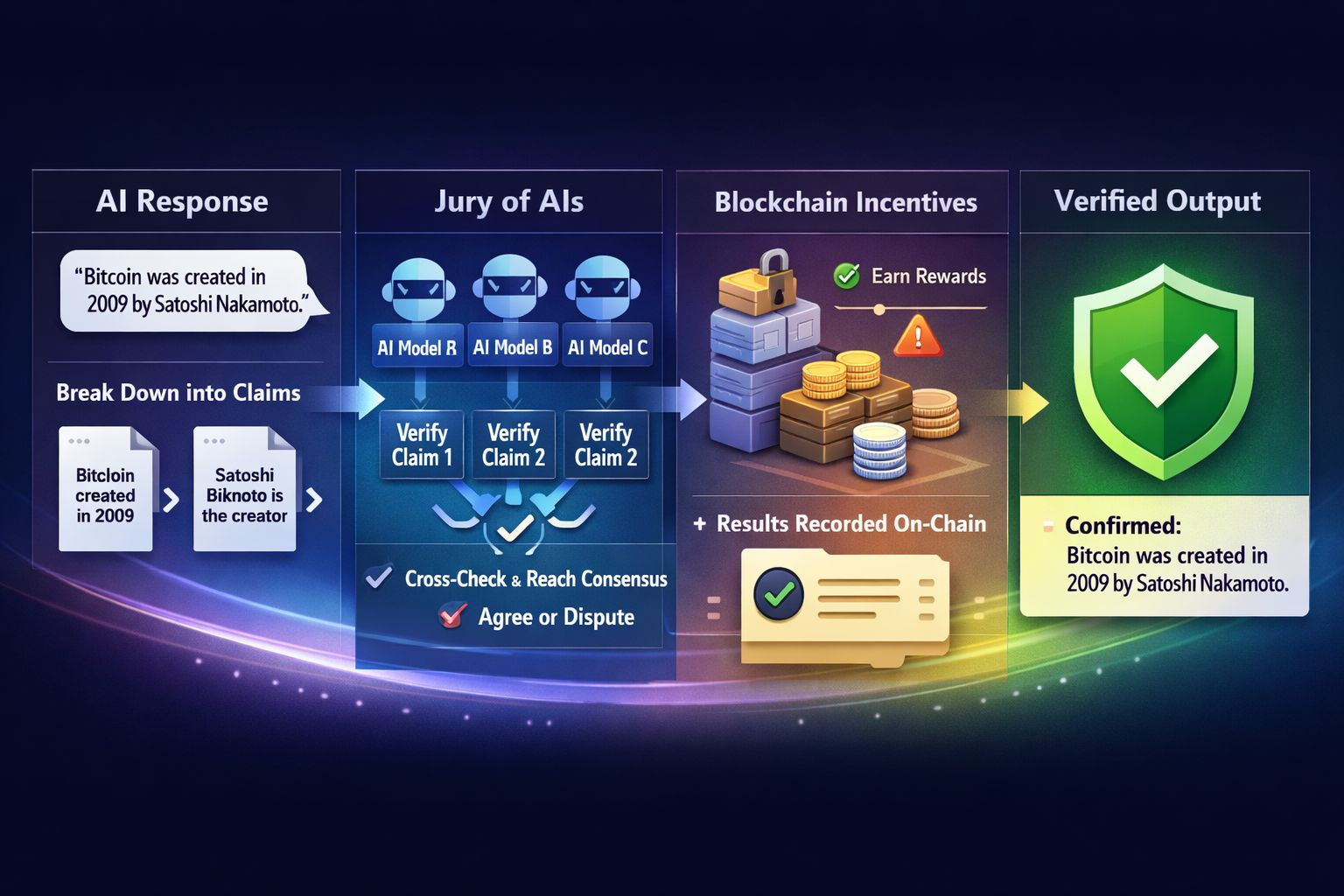

When an AI produces a response, Mira doesn’t simply accept the paragraph as a finished product. The system breaks the response into smaller factual statements. A single sentence might contain several claims—about a date, a person, a location, or a relationship between events. Each of those pieces becomes its own unit that can be checked independently.

This step may sound simple, but it changes the entire process. Rather than asking “Is this answer correct?”, the network asks a series of smaller questions: Is this date correct? Does this person exist? Did this event actually happen? By shrinking a complex answer into individual claims, the system turns AI output into something that can actually be tested.
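
To make the decomposition step concrete, here is a minimal Python sketch. The `Claim` type and `split_into_claims` function are hypothetical names for illustration, not part of any Mira API, and the rule-based splitting (on sentence ends and semicolons) is a deliberately naive stand-in: a production system would more plausibly use a language model to extract claims.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One independently checkable statement extracted from a response."""
    claim_id: int
    text: str


def split_into_claims(response: str) -> list[Claim]:
    """Toy decomposer (hypothetical): split on sentence ends and semicolons.

    This only illustrates the shape of the output; it does not resolve
    pronouns or split compound sentences the way a real extractor would.
    """
    # Split after ., ! or ? followed by whitespace, or at a semicolon.
    pieces = re.split(r"(?<=[.!?])\s+|;\s*", response.strip())
    claims: list[Claim] = []
    for piece in pieces:
        piece = piece.strip().rstrip(".!?").strip()
        if piece:  # skip empty fragments
            claims.append(Claim(claim_id=len(claims), text=piece))
    return claims


response = ("The Eiffel Tower opened in 1889; it stands in Paris. "
            "It was designed by Gustave Eiffel's company.")
for claim in split_into_claims(response):
    print(claim.claim_id, claim.text)
```

Running it prints three numbered claims. The second one, `it stands in Paris`, shows why real decomposition is harder than splitting text: the pronoun has to be resolved to "the Eiffel Tower" before the claim can be checked on its own.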

Once those claims are isolated, the next step begins: multiple independent AI models review them. Instead of relying on the same system that produced the answer, Mira sends each claim to several other models, which evaluate whether it appears accurate based on their own training and reasoning.

The easiest way to imagine this process is to think of it as a jury of machines. One AI offers a statement. Several others evaluate it. Each gives its opinion about whether the claim holds up. The network then looks for agreement across those different perspectives.
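
The jury idea can be sketched as a vote over independent verifiers. This is an illustration under stated assumptions, not Mira's actual protocol: each verifier is modeled as a plain function returning `True` or `False`, the 2/3 supermajority threshold is a hypothetical parameter, and the three "models" are toy stand-ins with deliberately different blind spots.

```python
from fractions import Fraction
from typing import Callable, Sequence

# Hypothetical: a verifier maps a claim to True (holds) or False (does not).
Verifier = Callable[[str], bool]


def jury_verdict(claim: str, verifiers: Sequence[Verifier],
                 threshold: Fraction = Fraction(2, 3)) -> str:
    """Return 'valid', 'invalid', or 'no consensus' for a single claim.

    The default 2/3 supermajority is an assumed parameter for illustration.
    """
    if not verifiers:
        raise ValueError("need at least one verifier")
    yes_votes = sum(1 for verify in verifiers if verify(claim))
    share_yes = Fraction(yes_votes, len(verifiers))  # exact, no float rounding
    if share_yes >= threshold:
        return "valid"
    if 1 - share_yes >= threshold:
        return "invalid"
    return "no consensus"


# Toy stand-ins for independent models, each with different blind spots.
def knows_dates(claim: str) -> bool:
    return "1889" in claim


def knows_landmarks(claim: str) -> bool:
    return "Eiffel Tower" in claim and "1901" not in claim


def agrees_with_everything(claim: str) -> bool:
    return True


jury = [knows_dates, knows_landmarks, agrees_with_everything]
print(jury_verdict("The Eiffel Tower opened in 1889", jury))
print(jury_verdict("The Eiffel Tower opened in 1901", jury))
```

This prints `valid` and then `invalid`: the model that agrees with everything is outvoted on the false date. Using `Fraction` keeps the threshold comparison exact, so a 2-of-3 vote is never accidentally rejected by floating-point rounding.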

This approach matters because every AI model carries its own biases, blind spots, and training quirks. When several different systems examine the same claim, the chance that they all make the same mistake drops significantly, provided their errors are not strongly correlated (for example, because they were trained on the same flawed data). Reliability begins to emerge from collective evaluation rather than from the authority of a single model.
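
A back-of-the-envelope calculation shows why diversity helps, and also where the argument depends on its assumptions. Suppose, purely for illustration, that each claim is either true or false, that each of k verifiers independently gets it wrong with the same probability p, and that the network follows the majority (k odd). Then:

```latex
P(\text{all } k \text{ wrong}) = p^{k},
\qquad
P(\text{majority wrong}) = \sum_{i=(k+1)/2}^{k} \binom{k}{i}\, p^{i} (1-p)^{k-i}
```

With k = 3 and p = 0.1, the chance that all three are wrong is 0.1³ = 0.001, and the chance that the majority is wrong is 3(0.1)²(0.9) + (0.1)³ = 0.027 + 0.001 = 0.028, compared with 0.1 for a single model. These numbers are hypothetical, not measurements of Mira's validators. If the models' errors are correlated, the real gain is smaller; in the extreme case where they all share the same error, adding verifiers does not help at all.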

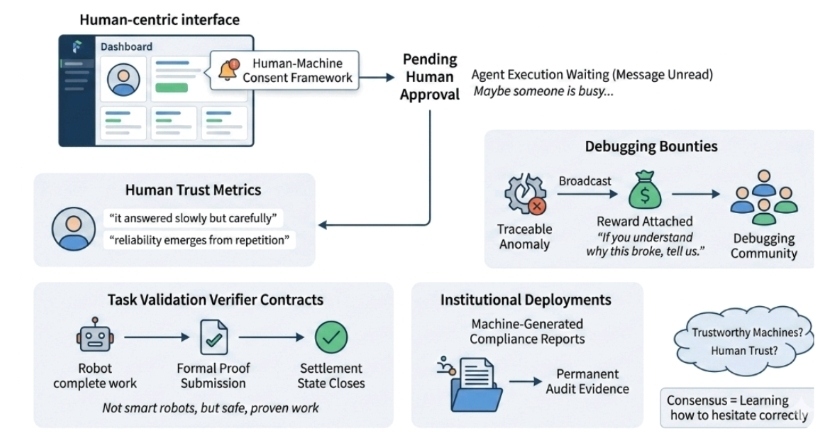

Of course, any system that depends on participants needs a way to keep them honest. Mira uses blockchain infrastructure to provide that layer of accountability. Validators, the participants who run verification models, stake tokens in order to take part in the process. If their evaluations align with the broader network consensus, they earn rewards; if they consistently produce unreliable results, their stake can be penalized.
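
The incentive rule described above can be sketched as a small settlement function. Every number here is a hypothetical parameter (the reward per matching vote, the 10% penalty, the 10-round window, and the 50% tolerance for disagreement), and the `Validator` class is illustrative; Mira's actual reward and penalty rules may differ. The sketch penalizes only sustained disagreement, matching the idea that validators are punished for consistently unreliable results rather than for a single miss.

```python
from dataclasses import dataclass, field

# Hypothetical parameters, chosen only for illustration.
REWARD_PER_MATCH = 1     # tokens earned when a vote matches consensus
SLASH_PERCENT = 10       # share of stake removed when a penalty applies
WINDOW = 10              # number of recent rounds considered
MAX_MISS_PERCENT = 50    # tolerated disagreement rate within the window


@dataclass
class Validator:
    name: str
    stake: int
    recent: list[bool] = field(default_factory=list)  # True = matched consensus

    def settle(self, vote: bool, consensus: bool) -> None:
        """Update stake after one verification round."""
        matched = vote == consensus
        # Keep only the most recent WINDOW outcomes.
        self.recent = (self.recent + [matched])[-WINDOW:]
        if matched:
            self.stake += REWARD_PER_MATCH
        misses = self.recent.count(False)
        window_full = len(self.recent) == WINDOW
        # Integer comparison: misses / WINDOW > MAX_MISS_PERCENT / 100.
        if window_full and misses * 100 > MAX_MISS_PERCENT * WINDOW:
            self.stake -= self.stake * SLASH_PERCENT // 100
            self.recent.clear()  # start a fresh window after a penalty


honest = Validator("honest", stake=100)
careless = Validator("careless", stake=100)
for _ in range(10):
    honest.settle(vote=True, consensus=True)
    careless.settle(vote=False, consensus=True)
print(honest.stake, careless.stake)
```

After ten rounds this prints `110 90`. One consequence of the design is worth noting: because the reward tracks agreement with consensus rather than ground truth, a validator who is right while the majority is wrong would also be penalized in this sketch, a trade-off any real scheme has to weigh.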

The blockchain also acts as a permanent record of how verification decisions were made. Each claim, each evaluation, and each outcome is recorded in a way that can be audited. Instead of asking users to trust the system blindly, the network provides a transparent trail showing how conclusions were reached.
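
The auditability idea can be illustrated with a hash chain, the basic structure that makes blockchain records tamper-evident. This is a simplified, single-machine sketch: `AuditLog` and its fields are hypothetical, and it leaves out everything that makes a real blockchain decentralized (replication, consensus, signatures). It only shows why an after-the-fact edit to a recorded verdict is detectable.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(body: dict) -> str:
    # Canonical JSON (sorted keys) so identical records always hash identically.
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditLog:
    """Hypothetical append-only log of verification outcomes."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, claim: str, votes: dict[str, bool], outcome: str) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"claim": claim, "votes": votes, "outcome": outcome,
                "prev_hash": prev}
        entry = {**body, "hash": record_hash(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link to the previous entry."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or record_hash(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("The Eiffel Tower opened in 1889",
           {"model_a": True, "model_b": True, "model_c": True}, "valid")
log.append("The Eiffel Tower opened in 1901",
           {"model_a": False, "model_b": False, "model_c": True}, "invalid")
print(log.verify())                     # True
log.entries[0]["outcome"] = "invalid"   # tamper with an earlier verdict
print(log.verify())                     # False
```

Because each entry's hash covers the previous entry's hash, changing any recorded vote or outcome breaks the chain from that point on, which is what lets an outside auditor detect the edit.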

An interesting shift begins to appear when you look at the system from a broader perspective. Traditionally, improving AI reliability meant improving the model itself—better training data, larger architectures, more computing power. Mira’s design suggests a different path: reliability might come not from perfect models, but from systems that constantly question each other.

It’s a concept that echoes how human knowledge often works. Science advances through peer review. Journalism relies on fact-checking. Courts rely on multiple perspectives before reaching a verdict. In each case, trust does not come from a single voice but from a process of verification.

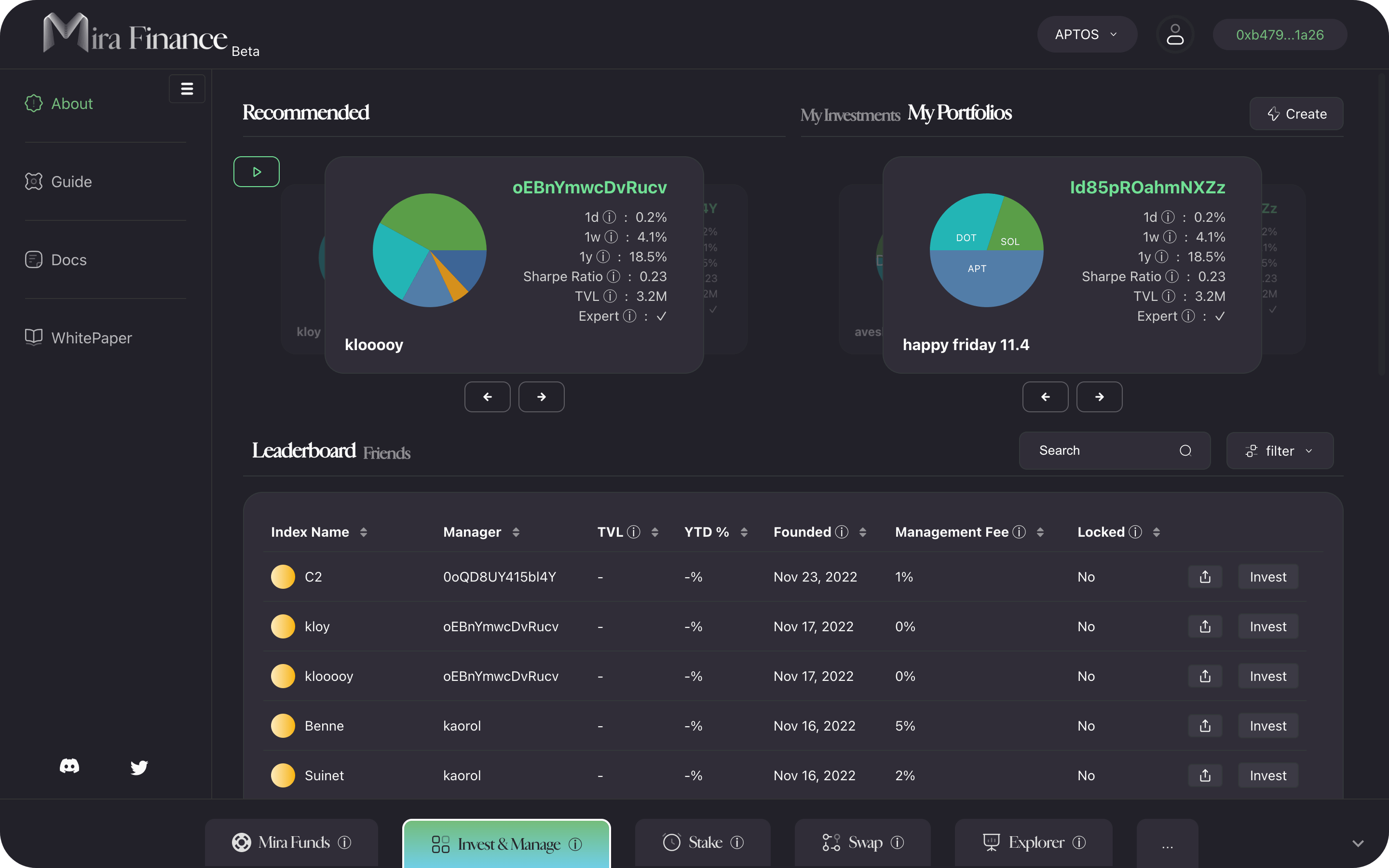

Recent developments around the network show that the project is moving toward becoming infrastructure for developers building AI applications. Tools have been introduced that allow applications to route AI outputs through Mira’s verification process automatically. Instead of delivering a response directly to the user, an application can pass it through the network to test the claims first.
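
From an application developer's point of view, routing output through verification amounts to a middleware step between the model and the user. The sketch below does not use Mira's actual SDK: `deliver_with_verification` and the stub functions are hypothetical placeholders, with `check_claim` standing in for whatever network call a real integration would make. Delivering the answer with a flag is just one possible policy; an application could instead block or regenerate it.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Delivery:
    text: str
    verified: bool
    flagged_claims: list[str] = field(default_factory=list)


def deliver_with_verification(
    prompt: str,
    generate: Callable[[str], str],
    extract_claims: Callable[[str], list[str]],
    check_claim: Callable[[str], str],
) -> Delivery:
    """Hypothetical middleware: check every claim before the user sees it."""
    answer = generate(prompt)
    flagged = [c for c in extract_claims(answer) if check_claim(c) != "valid"]
    # Policy choice (assumed): deliver the answer, but mark it if anything failed.
    return Delivery(answer, verified=not flagged, flagged_claims=flagged)


# Stubs standing in for a real model and a real verification service.
def fake_model(prompt: str) -> str:
    return "The Eiffel Tower opened in 1889. It is 900 meters tall."


def naive_extract(text: str) -> list[str]:
    return [s.strip() for s in text.split(".") if s.strip()]


KNOWN_FALSE = {"It is 900 meters tall"}  # the real tower is about 330 m


def fake_check(claim: str) -> str:
    return "invalid" if claim in KNOWN_FALSE else "valid"


result = deliver_with_verification("Tell me about the Eiffel Tower",
                                   fake_model, naive_extract, fake_check)
print(result.verified, result.flagged_claims)
```

Here the height claim fails the check, so the script prints `False ['It is 900 meters tall']` and the application knows not to present the answer as verified.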

There have also been efforts to scale the system so it can handle large volumes of verification requests, which becomes essential if AI systems begin relying on verification as a routine step. Some developers are experimenting with micro-payment mechanisms where applications pay small fees each time they request verification, effectively turning reliability into a service layer.
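
A pay-per-verification model can be sketched as simple metering: the client is charged per claim checked, and the fee is divided among the validators that did the work. The fee level, the even split, and the names `ClientAccount` and `split_fee` are all hypothetical; the text describes micro-payments only as an experiment, so no specific pricing scheme should be read into this. Amounts are kept as integers in the smallest token unit to avoid floating-point rounding.

```python
from dataclasses import dataclass

FEE_PER_CLAIM = 5  # hypothetical price per claim, in the smallest token unit


class InsufficientFunds(Exception):
    """Raised when a client cannot pay for a verification request."""


@dataclass
class ClientAccount:
    balance: int
    requests_paid: int = 0

    def charge_for(self, claims: list[str]) -> int:
        """Deduct the fee for verifying `claims` and return the amount charged."""
        cost = FEE_PER_CLAIM * len(claims)
        if cost > self.balance:
            raise InsufficientFunds(f"need {cost}, have {self.balance}")
        self.balance -= cost
        self.requests_paid += 1
        return cost


def split_fee(cost: int, validator_ids: list[str]) -> dict[str, int]:
    """Divide a fee evenly; the first validators receive any remainder."""
    share, remainder = divmod(cost, len(validator_ids))
    return {v: share + (1 if i < remainder else 0)
            for i, v in enumerate(validator_ids)}


account = ClientAccount(balance=12)
cost = account.charge_for(["claim 1", "claim 2"])
print(cost, account.balance)                         # 10 2
print(split_fee(cost, ["val_a", "val_b", "val_c"]))  # {'val_a': 4, 'val_b': 3, 'val_c': 3}
try:
    account.charge_for(["claim 3"])
except InsufficientFunds as err:
    print("rejected:", err)                          # rejected: need 5, have 2
```

Splitting with `divmod` guarantees that the validator payouts always add up to exactly what the client paid, so no fraction of a token is created or lost.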

This emerging idea, that verification itself could become a market, is one of the more intriguing aspects of the project. As AI-generated content spreads across industries, the ability to demonstrate whether a statement is trustworthy may become just as valuable as the ability to generate the statement in the first place.

Seen from this angle, Mira Network is not trying to compete with large AI models. Instead, it sits beside them, acting as a system that evaluates their outputs. The models create information; the network questions it.

In a world where machines can produce knowledge faster than humans can review it, the future of trustworthy AI may depend on systems where intelligence is constantly verified rather than blindly trusted.