There is a quiet shift happening in crypto that most people still think belongs to the future. In reality, it is already unfolding.

AI agents are no longer theoretical tools or experimental prototypes. They already operate on blockchains today: managing wallets, rebalancing DeFi positions, moving liquidity between protocols and executing trades automatically. What analysts once predicted for 2027 has already begun to take shape.

But the arrival of AI agents inside financial systems has introduced a problem that traditional blockchain infrastructure was never designed to solve.

When a human makes a trade, responsibility is clear. We know who made the decision. When a smart contract performs an action, the logic is deterministic and visible on-chain. Anyone can inspect the contract code, provided its source has been published, and understand how the decision was made.

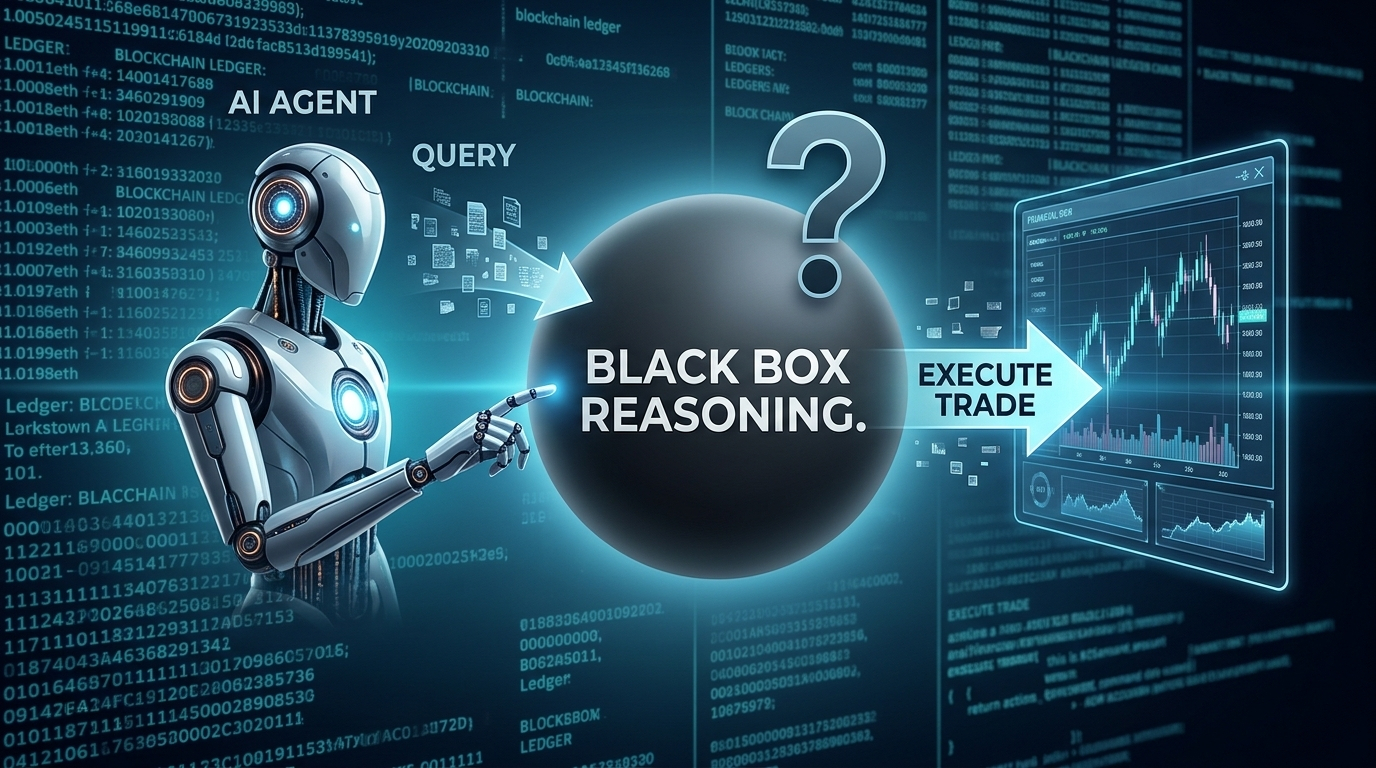

AI agents introduce a new layer of complexity. Their decisions are often shaped by large language models that analyze information and generate responses dynamically. An agent might ask a model about market conditions, risk exposure or optimal trade size, and the answer then becomes an input to the decision.

The problem is simple but serious. Once that information enters the system, there is no reliable mechanism to verify where it came from, how accurate it was or who validated it. The decision happens, the trade executes, and the reasoning disappears into a black box.

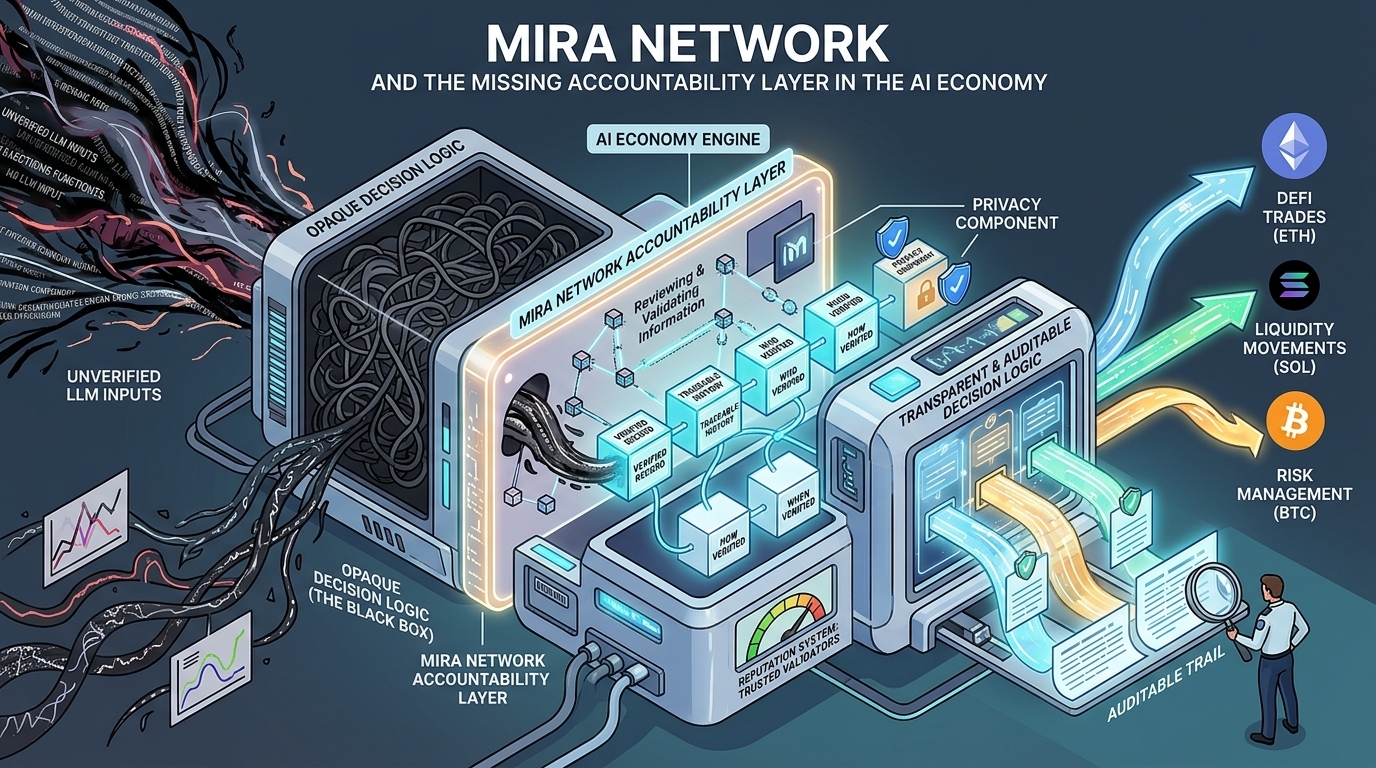

This is the gap that Mira Network is attempting to close.

Instead of allowing AI systems to rely on unverified outputs, Mira Network introduces a verification layer for the information that feeds AI-driven decisions. When an AI agent queries a language model for insight or analysis, the response does not simply move forward unchecked. It enters a verification process where the information is reviewed and validated by participants in the network.
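To make the idea concrete, here is a minimal sketch of what "reviewed and validated by participants" could look like as a simple quorum check. Everything in it is an assumption for illustration: the function name `is_verified`, the verifier IDs and the two-thirds threshold are hypothetical and are not taken from Mira Network's actual protocol.

```python
from typing import Mapping


def is_verified(votes: Mapping[str, bool], num: int = 2, den: int = 3) -> bool:
    """Return True when at least num/den of the verifiers approved the output.

    `votes` maps a hypothetical verifier ID to that verifier's verdict.
    The 2/3 default is an illustrative assumption, not a documented parameter.
    """
    if not votes:
        # No verifier has looked at the output, so it cannot count as verified.
        return False
    approvals = sum(1 for approved in votes.values() if approved)
    # Integer arithmetic avoids floating-point edge cases at the exact threshold.
    return approvals * den >= num * len(votes)
```

In this sketch, an agent would only act on a model response once `is_verified` returns `True` for it; otherwise the response is held back.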

Once verified, the information becomes a certified record. Each piece of data carries a traceable history that shows who verified it, how the verification was performed and when it occurred. That record is then written permanently to the blockchain.
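A record like this can be pictured as a small data structure plus a fingerprint of its contents. The sketch below is only an illustration under stated assumptions: the class `VerificationRecord`, its field names and the choice of SHA-256 over canonical JSON are hypothetical, not Mira Network's published format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Tuple


@dataclass(frozen=True)
class VerificationRecord:
    """Hypothetical shape of a certified record: who, how and when."""

    claim: str                 # the verified statement or model output
    verifiers: Tuple[str, ...] # who verified it (illustrative IDs)
    method: str                # how the verification was performed
    verified_at: str           # when it occurred, as an ISO 8601 UTC string

    def digest(self) -> str:
        """Deterministic SHA-256 fingerprint of the record's contents."""
        # Sorted keys and fixed separators give the same bytes for the same record.
        payload = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()
```

Under this assumption, the digest is what would be anchored on-chain. Changing any field, even the timestamp, produces a different digest, which is what makes later tampering detectable.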

The difference may seem subtle at first, but it changes the nature of AI-driven systems entirely.

Instead of relying on opaque model outputs, AI agents begin to operate using verified information that can be audited and traced. If a decision later proves to be flawed, investigators can follow the chain of reasoning that led to it. The system does not simply show that a trade occurred. It reveals why it happened and who validated the underlying information.

This level of transparency is becoming increasingly important as regulators begin paying attention to automated decision-making systems. Financial authorities around the world are preparing frameworks for AI-driven markets, and one of their primary concerns is accountability. Regulators want to understand not just what actions an AI system took, but why those actions occurred.

Mira Network provides the structure needed to answer those questions. Every decision supported by its verification layer produces a record that can be reviewed from beginning to end. A compliance officer does not need to be a cryptography expert to understand the chain of events. The system organizes the information in a way that is both secure and interpretable.

Another important part of the design is the reputation system built into the network. Participants who verify information are evaluated over time based on the quality and consistency of their work. Those who demonstrate reliability gradually build stronger reputations, making their verifications more trusted within the system.

Over time, this creates a decentralized network of trusted validators rather than a system controlled by a single authority.
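One simple way to model reputation that builds gradually is an exponential moving average of a verifier's track record, with votes weighted by that score. This is a sketch under assumptions: the function names, the `rate` parameter and the weighting rule are hypothetical and do not describe Mira Network's actual scoring.

```python
from typing import Mapping


def update_reputation(current: float, was_correct: bool, rate: float = 0.1) -> float:
    """Move a reputation score in [0, 1] a small step toward 1.0 or 0.0.

    `rate` controls how quickly history is forgotten (illustrative default).
    """
    target = 1.0 if was_correct else 0.0
    return (1.0 - rate) * current + rate * target


def weighted_approval(votes: Mapping[str, bool], reputations: Mapping[str, float]) -> float:
    """Share of total reputation, among those who voted, that approved."""
    total = sum(reputations[verifier] for verifier in votes)
    if total == 0:
        # Nobody with any reputation voted, so there is no meaningful approval.
        return 0.0
    approving = sum(reputations[verifier] for verifier, ok in votes.items() if ok)
    return approving / total
```

With this kind of rule, a single good or bad verification only nudges the score, so a strong reputation has to be earned over many rounds, and a long-reliable verifier's approval counts for more than a newcomer's.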

The architecture is also designed to work across major blockchain ecosystems. Whether AI agents are operating on Bitcoin, Ethereum or Solana, Mira Network can attach verification records to the decisions those agents make. As AI participation expands across chains, the accountability layer remains consistent.

There is also an important privacy component. The system allows companies to incorporate sensitive or proprietary data into AI decision processes without exposing the raw data itself. AI agents can rely on verified insights derived from private information while the underlying data remains protected.

This capability could become critical for institutions that want to deploy AI agents in financial environments but cannot risk exposing confidential datasets.
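The article does not say how this protection is implemented. One common, generic technique for referencing private data without revealing it is a salted hash commitment, sketched below. The function names `commit` and `check_commitment` are hypothetical, and this is not a claim about the mechanism Mira Network actually uses.

```python
import hashlib
import hmac
import secrets
from typing import Tuple


def commit(private_data: bytes) -> Tuple[str, bytes]:
    """Return (commitment, salt); only the commitment would be published."""
    # A random salt keeps small or guessable datasets from being brute-forced.
    salt = secrets.token_bytes(32)
    commitment = hashlib.sha256(salt + private_data).hexdigest()
    return commitment, salt


def check_commitment(commitment: str, salt: bytes, private_data: bytes) -> bool:
    """Let an authorized auditor confirm which data a commitment refers to."""
    candidate = hashlib.sha256(salt + private_data).hexdigest()
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(candidate, commitment)
```

In this sketch, the company keeps the raw data and the salt private, while the published commitment ties a verified insight to one specific dataset that can be revealed to an auditor later if required.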

The challenge facing the AI economy is not simply about improving the accuracy of models. Even the most advanced model cannot create trust on its own. What markets require is a system that records how decisions were formed and who validated the information behind them.

Without that structure, AI-driven financial systems risk becoming impossible to audit or regulate.

Mira Network is designed to provide that missing layer.

As AI agents continue to expand their role across blockchain systems, the question will not only be how intelligent they become, but whether their decisions can be verified and understood. The long-term sustainability of the AI economy may depend less on the power of its models and more on the infrastructure that makes their decisions accountable.
