I’ve noticed something after working around AI systems for a while. People rarely question answers that arrive quickly and sound confident. It happens everywhere. Someone asks a model about a historical event, a medical topic, or even something trivial like how many satellites orbit the Earth. The response appears instantly, written in clean paragraphs, often with references. Most readers assume it’s reliable.

And usually… it is.

But not always.

I remember testing an AI model months ago while researching a technical topic. The explanation looked perfect at first glance. Smooth language. Logical flow. A few citations sprinkled in. Only after digging deeper did I realize one of the core claims was simply wrong. Not maliciously wrong. Just… confidently incorrect.

That moment stuck with me. Not because the model failed. Models fail all the time. What stayed with me was how easily the error could have spread if nobody checked it.

This is the uncomfortable part of modern AI systems. They generate information extremely well. But generation is not the same thing as verification.

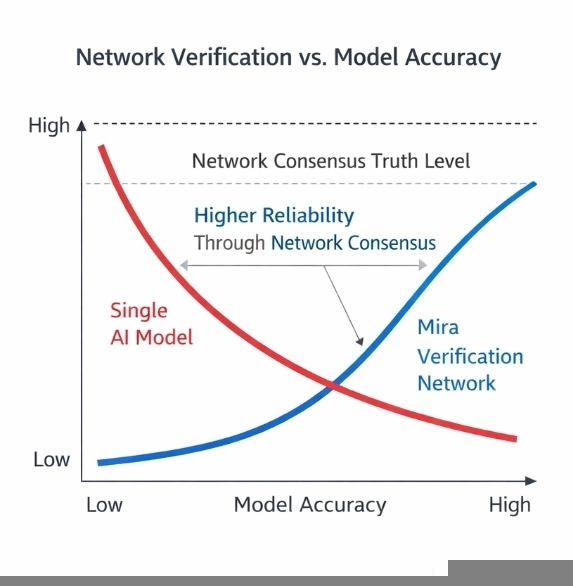

For years the industry tried to close that gap by improving the models themselves. Larger datasets, better reinforcement learning, stronger benchmarks. Every improvement helped a little. Yet the underlying structure remained the same: one system produces the answer and users decide whether to trust it.

The more I think about it, the more fragile that setup feels.

Because reliability may not be something a single model can fully guarantee.

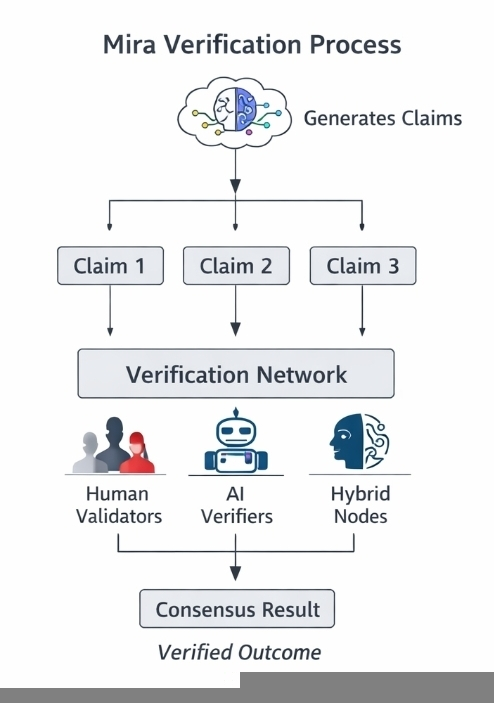

This is where the design philosophy behind Mira Network becomes interesting. Instead of asking a model to prove its own accuracy, the system assumes something different from the beginning: truth might need to emerge from a network rather than from a single machine.

At first that idea sounds theoretical. But the mechanics are actually fairly practical.

Imagine an AI model responding to a complex question. Instead of treating the output as one finished answer, the system separates it into smaller claims. Each claim becomes something that can be evaluated on its own. A statistic. A factual statement. A logical inference.

Now picture those claims moving through a verification layer.

Multiple validators examine them. Some might be automated systems designed to cross-check sources or run structured tests. Others might be participants who stake tokens and review the claims carefully. Each validator produces an evaluation. Agreement starts forming. Disagreement appears too.

Eventually the network reaches something like consensus.

Not perfect truth. But a collective judgement about whether the claim holds up.
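To make that flow concrete, here is a minimal sketch of how I picture it. It is not Mira Network's actual protocol or API. The `Vote` type, the `consensus` function, the two-thirds threshold, and the example claims are all assumptions I made up for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Vote:
    validator: str  # hypothetical validator id
    verdict: str    # "valid" or "invalid"


def consensus(votes, threshold=2 / 3):
    """Return the verdict backed by at least `threshold` of the votes.

    Falls back to "unresolved" when no verdict reaches the threshold.
    The two-thirds default is an assumption, not a documented parameter.
    """
    if not votes:
        return "unresolved"
    counts = Counter(v.verdict for v in votes)
    verdict, count = counts.most_common(1)[0]
    return verdict if count / len(votes) >= threshold else "unresolved"


# One model answer, already split into independently checkable claims.
# The claim ids and votes are invented examples.
claims = {
    "claim-1": [Vote("a", "valid"), Vote("b", "valid"), Vote("c", "valid")],
    "claim-2": [Vote("a", "valid"), Vote("b", "invalid"), Vote("c", "invalid")],
    "claim-3": [Vote("a", "valid"), Vote("b", "invalid")],
}

results = {claim_id: consensus(votes) for claim_id, votes in claims.items()}
print(results)
```

The part I find useful is the "unresolved" branch. A split vote does not get forced into a yes or a no, which matches the idea of a collective judgement rather than perfect truth.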

This idea reminds me a little of how blockchains solved transaction verification years ago. No single node decides whether a transaction is valid. Instead, independent validators check the same information and converge on a shared result.

Mira Network seems to apply a similar coordination principle to information itself.

What makes this different from traditional fact-checking is the incentive layer. Verification isn’t just voluntary work or centralized moderation. Participants in the network may stake tokens when validating claims. If their assessments consistently diverge from accurate outcomes, they risk losing part of that stake.

That detail changes behavior.

When people have something at risk, they tend to slow down. They double-check things. Careless validation becomes expensive.
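A toy version of that incentive might look like the sketch below. Everything in it is my own assumption: the `settle` function, the 10% slash rate expressed in basis points, and the choice to slash after a single disagreement. The idea as I understand it is about validators who consistently diverge, so a real system would presumably track behavior over many claims rather than one.

```python
def settle(stakes, votes, outcome, slash_bps=1000):
    """Return updated stakes after one claim has been settled.

    Validators who voted against `outcome` lose `slash_bps` basis points
    of their stake (1000 bps = 10%). Validators who did not vote are
    left untouched. Stakes are whole token units to avoid float rounding.
    """
    updated = {}
    for validator, stake in stakes.items():
        vote = votes.get(validator)
        if vote is not None and vote != outcome:
            updated[validator] = stake - stake * slash_bps // 10_000
        else:
            updated[validator] = stake
    return updated


# Hypothetical validators and stakes, purely for illustration.
stakes = {"a": 100, "b": 100, "c": 50, "d": 80}
votes = {"a": "valid", "b": "invalid", "c": "valid"}  # "d" abstained

new_stakes = settle(stakes, votes, outcome="valid")
print(new_stakes)
```

Even in this simplified form you can see why careless validation gets expensive: every wrong call shrinks the stake a validator brings to the next one.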

Still, incentives alone don’t solve everything. Coordination problems rarely disappear that easily.

One concern I keep returning to is scale. AI systems generate enormous volumes of information. Think about search engines, chat assistants, automated research tools. If every meaningful claim required verification, the network would need to process a constant stream of claims, each one checked by multiple validators.

That’s not trivial.

Another concern is social behavior inside decentralized systems. Validators might start following majority opinions rather than independently analyzing claims. We’ve seen that dynamic in governance voting across many crypto networks. Incentives push participants toward safety. Sometimes that means aligning with the crowd.

So the system still depends on a delicate balance. Enough incentives to encourage honest verification. Enough independence to prevent blind consensus.

The open question is whether a decentralized network can maintain that balance over time.

I keep thinking about how this compares to the way trust formed on the early internet. Back then credibility came from institutions. Universities, journals, media organizations. Later it shifted toward platforms and reputation systems. Ratings, reviews, follower counts.

AI introduces a different problem. Machines are now generating enormous volumes of information faster than humans can evaluate it.

If that trend continues, relying on institutional verification alone probably won’t scale.

That’s where Mira Network’s approach starts to make sense conceptually. Instead of relying on a single authority or a single model, it distributes verification across a network of participants who evaluate claims collectively.

Accuracy becomes something the network arrives at together.

But even while writing this, I’m aware that the idea could unfold in many ways. Systems like this often look elegant in theory and messy in practice. Economic incentives can create strange behaviors. Verification markets might reward speed instead of careful analysis. Or developers might bypass the verification layer entirely if it slows their applications down.

All of that is possible.

Still, the underlying shift in perspective feels important.

For a long time the conversation around AI reliability focused on building better models. Mira Network seems to suggest a different path: building better verification systems around those models.

Maybe that’s the real lesson here.

AI models generate information. Networks evaluate it.

And if that model-network relationship becomes common in the future, the systems deciding what information we trust might look less like individual machines and more like coordinated ecosystems of participants trying, sometimes imperfectly, to agree on what is actually true.