I spend a lot of time watching the quieter parts of the crypto and AI world, where the real ideas usually grow before anyone notices them. I try to look beyond the excitement and the announcements and understand what problems people are actually trying to solve. Over time I have noticed something strange: the louder the space becomes, the less people seem to talk about the foundations that everything depends on. So I focus on the moments where a project feels less like noise and more like someone quietly trying to repair something that has been broken for a long time.

Most conversations about artificial intelligence and robotics revolve around progress. Faster systems, smarter models, machines that can see, hear, and understand the world better than they could yesterday. And honestly, some of those advances are incredible. Watching machines learn to navigate spaces or understand language still carries a sense of wonder.

But the longer I watch this space, the more I feel a small tension beneath all that progress.

The machines are improving, yet the systems around them still feel fragile.

A robot moving inside a lab or a warehouse is one thing. It operates inside a controlled environment where most variables are known. The people who built it also control the data, the rules, and the network it lives in. When something goes wrong, the same organization owns the explanation.

But the real world does not work like that.

Machines are slowly stepping outside those controlled environments. Delivery robots crossing streets. Automated systems working across supply chains. AI agents making decisions that affect businesses, customers, and sometimes entire communities.

When that happens, a deeper question appears quietly in the background.

How do we trust what these systems are doing?

Not trust in the emotional sense, but in the practical sense. When a machine makes a decision, who can actually verify how that decision happened? When data moves between systems, who can confirm it has not been altered? When something goes wrong, who can trace the chain of events clearly enough to understand the truth?

This is where I started paying attention to Fabric Protocol.

At first I approached it with the same caution I usually feel when I hear about new crypto infrastructure. The space has produced enough promises to make anyone skeptical. Grand visions are easy to write. Building something that quietly solves a difficult problem is much harder.

But the idea behind Fabric kept pulling my attention back to it.

The focus is not on making robots smarter. It is on creating an environment where machines can operate in a way that people can verify and understand.

That difference feels subtle, but it carries emotional weight when you think about it long enough.

Because intelligence without accountability creates discomfort. People might admire what machines can do, but they hesitate when those machines begin making decisions that cannot be explained.

Fabric approaches this problem from a different direction by focusing on verifiable computing. Instead of asking people to simply trust that a system behaved correctly, the system produces evidence that can be checked.

Imagine a robot performing a task. Instead of that action disappearing into a private database somewhere, the computation and the data surrounding that decision leave a trace that can be verified later. The record becomes part of a shared infrastructure where different participants can confirm what actually happened.
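To make that idea concrete for myself, I sketched the simplest version of a verifiable trace I could think of: a hash-chained log, where each record commits to the one before it. This is not Fabric's actual design, which I have not seen in code; it is only an illustration of tamper evidence in general. Every name here (`append_record`, `verify_log`, the `robot-7` actor, the record fields) is hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # stand-in "previous hash" for the very first record


def record_hash(body: dict) -> str:
    # Canonical JSON so identical content always produces the same hash.
    encoded = json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_record(log: list, actor: str, action: str, data: dict) -> dict:
    # Each record stores the hash of its predecessor, forming a chain.
    prev = log[-1]["hash"] if log else GENESIS
    body = {"actor": actor, "action": action, "data": data, "prev": prev}
    entry = dict(body, hash=record_hash(body))
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    # Recompute every hash and check every link; any edit breaks the chain.
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev"] != prev or record_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


log = []
append_record(log, "robot-7", "pick", {"item": "A12"})
append_record(log, "robot-7", "deliver", {"dock": 3})
print(verify_log(log))  # the untouched log verifies

log[0]["data"]["item"] = "B99"  # quietly rewrite history
print(verify_log(log))  # the altered log no longer verifies
```

A chain like this only shows that a record was not changed after the fact. It says nothing about whether the robot reported honestly in the first place, and it still lives on one machine. Sharing the record across participants and proving the computation itself are the harder parts, and they are exactly what a protocol like this would need to address.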

There is something quietly reassuring about that idea.

Not because it promises perfection, but because it respects the fact that mistakes and disagreements will happen. Verification gives people a way to resolve those moments with evidence instead of assumptions.

The use of a public ledger in this context feels less like a financial tool and more like a shared memory. A place where actions, data, and computations can be recorded in a form that does not belong to any single participant.

Once that layer exists, machines begin to feel less like isolated tools and more like participants in a system that people can observe.

That shift might sound technical, but emotionally it changes the relationship between humans and machines.

Right now many AI systems operate like black boxes. They produce answers, decisions, or actions, but the path leading to those outcomes often feels hidden. When the results are correct, people celebrate the intelligence. When the results are wrong, confusion appears.

Fabric seems to be exploring a world where those paths are visible enough to verify.

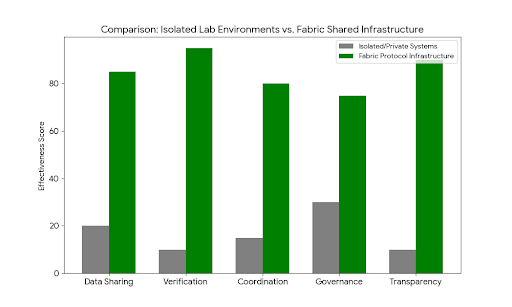

Another thing that keeps returning to my mind is how fragmented robotics development has been for years. Different companies build machines that rarely communicate with each other. Data sits inside isolated systems. Every new project begins almost from scratch because knowledge cannot easily travel between environments.

It feels inefficient, almost lonely in a technological sense.

The idea of a shared infrastructure where machines, data, and computation can coordinate begins to soften that fragmentation. Instead of isolated islands of innovation, systems could slowly become connected pieces of a broader network.

That possibility carries a quiet sense of relief when I think about the future of automation.

Because the world machines operate in is already complex. Cities, logistics networks, hospitals, and factories are full of unpredictable situations. If every robotic system remains isolated, the friction between them will only grow.

Infrastructure that allows collaboration could reduce that friction in ways people might not immediately notice.

Fabric also seems to approach governance as part of the system rather than something added later. That detail might sound technical, but emotionally it addresses one of the deepest fears people have about autonomous technology.

The fear that machines will operate without clear rules.

If governance mechanisms exist within the infrastructure itself, rules can evolve as technology changes. Communities and participants can adjust how systems behave without tearing everything apart and starting over.

It creates the feeling that the system is alive enough to adapt.

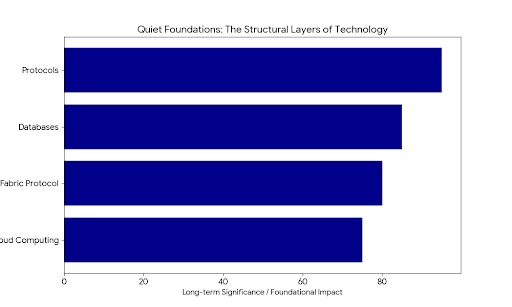

The longer I watch developments in AI and robotics, the more I realize something important. The most meaningful breakthroughs rarely appear in the spotlight. They emerge quietly in the layers that people rarely talk about.

Communication protocols were the foundation of the internet long before social media existed. Database systems shaped modern software long before people started talking about cloud platforms.

Fabric feels like it belongs to that quieter category.

It is less about creating impressive demonstrations and more about building the invisible structures that future machines might depend on. It is the kind of infrastructure people only recognize years later, when they realize everything has been built on top of it.

And maybe that is why it keeps sitting in the back of my mind.

Because when machines begin sharing our environments, making decisions, and coordinating tasks across industries and cities, intelligence alone will not be enough.

What people will really be searching for is the quiet confidence that the systems guiding those machines can actually be trusted.