Most people assume AI’s biggest challenge is intelligence. In reality, the challenge has always been trust. Big models can produce confident answers, but confidence does not equal correctness. Subtle errors (misread facts, fabricated citations, incomplete reasoning) slip through quietly and rarely announce themselves.

These errors aren’t catastrophic individually. But in financial systems, smart contracts, or autonomous networks, even small oversights can cascade into real-world consequences. That’s exactly why relying on a single model is fragile.

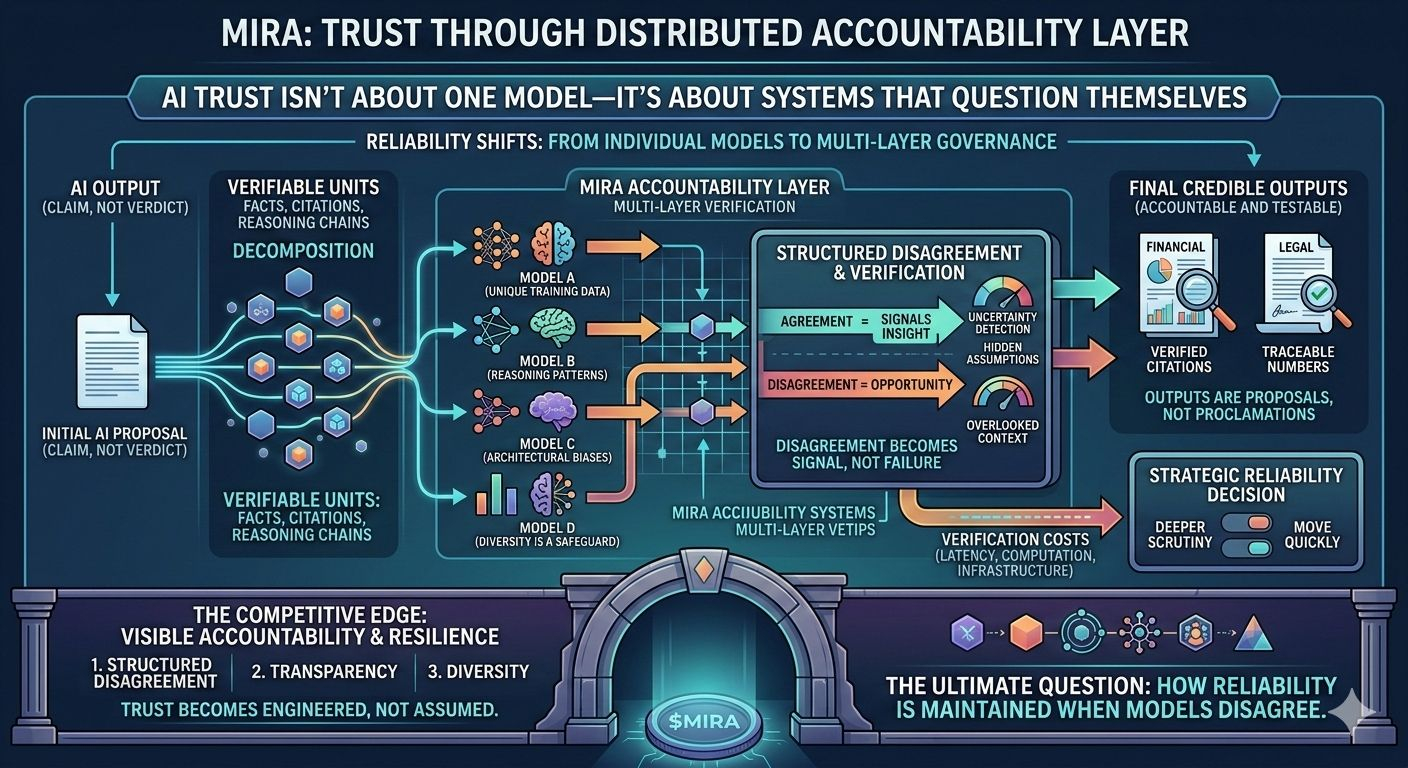

Mira introduces a new way: treat every output as a claim, not a verdict. Multiple independent models evaluate the same claim, each bringing its own training data, reasoning patterns, and architectural biases. Agreement signals insight, while disagreement signals opportunity: a chance to detect uncertainty, hidden assumptions, or overlooked context.
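To make the idea concrete, here is a minimal sketch of several verifiers voting on one claim. It is not Mira’s actual API: `evaluate_claim`, `ConsensusResult`, the three labels, and the 0.6 threshold are all assumptions chosen for illustration, and the verifiers are stand-in functions rather than real models.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical interface: a verifier takes a claim and returns
# "supported", "refuted", or "uncertain".
Verifier = Callable[[str], str]


@dataclass
class ConsensusResult:
    verdict: str            # majority label, or "disputed" if agreement is too low
    agreement: float        # share of verifiers that backed the majority label
    votes: Dict[str, str]   # raw vote per verifier, kept for auditing


def evaluate_claim(
    claim: str,
    verifiers: Dict[str, Verifier],
    threshold: float = 0.6,  # illustrative value, not a recommended setting
) -> ConsensusResult:
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = {name: verify(claim) for name, verify in verifiers.items()}
    label, count = Counter(votes.values()).most_common(1)[0]
    agreement = count / len(votes)
    # Low agreement is reported as its own outcome instead of being hidden.
    verdict = label if agreement >= threshold else "disputed"
    return ConsensusResult(verdict, agreement, votes)


# Stand-in verifiers; in a real system each would wrap a different model.
demo_verifiers = {
    "model_a": lambda claim: "supported",
    "model_b": lambda claim: "supported",
    "model_c": lambda claim: "refuted",
}
result = evaluate_claim("Q3 revenue was $4.2M", demo_verifiers)
strict = evaluate_claim("Q3 revenue was $4.2M", demo_verifiers, threshold=0.9)
```

With two of three verifiers agreeing, the default threshold accepts the majority label, while the stricter 0.9 threshold returns "disputed". The raw votes stay attached to the result so a disagreement can be inspected rather than discarded.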

In practice, outputs are broken down into verifiable units. A complex financial summary becomes traceable numbers. A legal interpretation transforms into a chain of reasoning. AI doesn’t magically become smarter—its claims become accountable and testable.
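As a rough illustration of what “verifiable units” could look like, the sketch below splits a summary into sentences and pulls out the figures in each one. The post does not describe how Mira decomposes outputs, so the sentence-level split, the regular expressions, and the names `ClaimUnit` and `split_into_units` are assumptions. The splitter is deliberately naive and would mishandle abbreviations such as “U.S.”.

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass
class ClaimUnit:
    text: str            # one sentence from the original output
    figures: List[str]   # numeric figures found in that sentence


# Split after sentence-ending punctuation that is followed by whitespace.
_SENTENCE_END = re.compile(r"(?<=[.!?])\s+")
# Match figures such as 12%, $4,200,000, or 31.5%.
_FIGURE = re.compile(r"\$?\d[\d,]*(?:\.\d+)?%?")


def split_into_units(output: str) -> List[ClaimUnit]:
    units = []
    for sentence in _SENTENCE_END.split(output.strip()):
        if sentence:
            units.append(ClaimUnit(sentence, _FIGURE.findall(sentence)))
    return units


summary = "Revenue rose 12% to $4,200,000. Costs were flat. Margin reached 31.5%."
units = split_into_units(summary)
for unit in units:
    print(unit.figures, "<-", unit.text)
```

Each unit can then be checked on its own, so a wrong margin figure does not hide behind a correct revenue figure.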

Trust shifts away from individual models and toward multi-layer governance. Outputs are credible not because a model produced them, but because independent systems reached compatible conclusions. Transparency is critical: overlapping datasets or similar architectures can bias consensus, so diversity is a reliability safeguard.
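One simple way to keep similar models from dominating a vote is to let each model family count only once. The sketch below assumes you can label every verifier with a family (shared architecture or training data); the function name `family_weighted_verdict` and the family labels are hypothetical, and this is one possible safeguard, not a description of how Mira weighs votes.

```python
from collections import Counter, defaultdict
from typing import Dict, Tuple


def family_weighted_verdict(
    votes: Dict[str, str],
    families: Dict[str, str],
) -> Tuple[str, float]:
    """Collapse votes so each model family counts once, then take the majority."""
    if not votes:
        raise ValueError("at least one vote is required")
    by_family = defaultdict(list)
    for model, label in votes.items():
        by_family[families[model]].append(label)
    # Each family casts its own internal majority label as a single vote.
    family_votes = [
        Counter(labels).most_common(1)[0][0] for labels in by_family.values()
    ]
    label, count = Counter(family_votes).most_common(1)[0]
    return label, count / len(family_votes)


# Three closely related models agree; two unrelated models disagree with them.
votes = {
    "a1": "supported", "a2": "supported", "a3": "supported",
    "b1": "refuted", "c1": "refuted",
}
families = {
    "a1": "family_a", "a2": "family_a", "a3": "family_a",
    "b1": "family_b", "c1": "family_c",
}
raw_label = Counter(votes.values()).most_common(1)[0][0]
label, agreement = family_weighted_verdict(votes, families)
```

A raw head count favors "supported" three to two, but once the three related models are treated as one voice, the independent families lean "refuted". That gap is exactly the kind of bias the paragraph above warns about.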

Verification has costs—latency, computation, and infrastructure. Applications integrating these layers must decide which claims need deeper scrutiny and which can move quickly. Reliability is no longer a passive attribute; it’s a strategic decision embedded in system design.
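That trade-off can be written down as an explicit policy. The sketch below maps a claim’s stakes to a verification plan; the tiers, verifier counts, thresholds, and per-call cost are made-up illustrative values, and `plan_for` and `estimated_cost` are hypothetical helpers rather than part of any real Mira interface.

```python
from dataclasses import dataclass
from enum import Enum


class Stakes(Enum):
    LOW = "low"        # e.g. a casual chat answer
    MEDIUM = "medium"  # e.g. a summary a person will review
    HIGH = "high"      # e.g. an input to a financial transaction


@dataclass(frozen=True)
class VerificationPlan:
    verifier_count: int         # how many independent models to consult
    agreement_threshold: float  # share of verifiers that must agree
    escalate_on_dispute: bool   # hand disputed claims to a human reviewer


# Illustrative policy table; real values would come from measured risk and cost.
POLICY = {
    Stakes.LOW: VerificationPlan(1, 1.0, False),
    Stakes.MEDIUM: VerificationPlan(3, 0.66, False),
    Stakes.HIGH: VerificationPlan(5, 0.8, True),
}


def plan_for(stakes: Stakes) -> VerificationPlan:
    return POLICY[stakes]


def estimated_cost(plan: VerificationPlan, cost_per_call: float) -> float:
    """Total verifier spend for one claim, assuming one call per verifier."""
    return plan.verifier_count * cost_per_call


high = plan_for(Stakes.HIGH)
low = plan_for(Stakes.LOW)
print(estimated_cost(high, 0.5), estimated_cost(low, 0.5))
```

Keeping the policy in one table makes the reliability decision visible and reviewable, instead of leaving it scattered across application code.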

The competitive edge for the next generation of AI won’t come from who answers fastest or sounds smartest. It will come from visible accountability, structured disagreement, and resilience in the face of errors.

Mira’s multi-model governance isn’t just a feature—it’s an accountability layer for machine intelligence. Outputs become proposals, not proclamations. Disagreement becomes signal, not failure. Trust becomes engineered, not assumed.

The ultimate question isn’t whether models agree. It’s who interprets disagreement, which safeguards activate, and how reliability is maintained. This is the world where AI can truly be trusted.

#Mira

@Mira - Trust Layer of AI