I’ve been watching the quieter edges of the AI and crypto world, where real systems usually begin forming long before anyone calls them important. I try to look beyond the excitement, beyond the token announcements and the predictions about robots taking over industries. When I slow down and pay attention to how the deeper infrastructure is evolving, I notice something different. So I focus on the pieces most people ignore at first: the layers underneath the technology, where trust, coordination, and responsibility quietly start taking shape.

The more time I spend observing this space, the more one uncomfortable thought keeps coming back to me.

Machines are getting smarter every year. But the systems that are supposed to manage them still feel unprepared.

Robots can already move packages in warehouses, scan crops in fields, inspect buildings, and navigate city streets. AI agents can analyze data, coordinate logistics, and make decisions faster than most human teams.

Yet something about the structure around these machines still feels incomplete.

Not technically incomplete.

Structurally incomplete.

Because once machines start participating in real economic activity, the most important question is no longer what they can do.

The question becomes something far more human.

Can we trust what they did?

That question carries more weight than most people realize.

Imagine a robot inspecting critical equipment inside a factory. If it reports that everything looks safe and later something fails, people will not ask how advanced the robot was. They will ask a much simpler question.

What actually happened?

Who verified the result?

Where is the evidence?

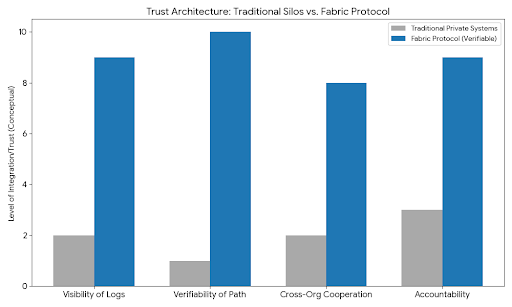

Right now those answers often live inside private systems owned by the company that built the machine. Logs sit on internal servers. Data is stored behind corporate infrastructure. If something goes wrong, outsiders have to trust whatever explanation is provided.

That model works while machines remain isolated tools.

But the moment machines begin interacting with different companies, public environments, and shared infrastructure, that quiet assumption begins to feel fragile.

This is where I first started paying attention to Fabric Protocol.

Not because it promised something louder or more futuristic than other projects.

Actually, the opposite.

It felt like someone was quietly working on a problem that most people had not fully acknowledged yet.

The coordination layer for machines.

The more I looked at it, the more it seemed less like a robotics platform and more like a system designed to help the world understand what machines are actually doing.

That difference might sound small, but emotionally it changes everything.

Because trust in machines will not come from believing they are intelligent.

Trust comes from knowing their actions can be verified.

Fabric approaches this idea through something called verifiable computing. Instead of simply producing results, machines operating within the system can generate a proof of how those results were produced.

Not just the final answer.

The path that led to it.

That proof can be anchored to a public ledger where it becomes part of a shared record instead of a private log.

The moment you really sit with that idea, it starts to feel powerful in a quiet way.

It means that when a robot performs a task, the evidence of that task does not disappear inside a single organization. It becomes something other participants can observe and verify.
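To make that idea concrete for myself, I find it helps to sketch it. The Python below is only an illustration under loud assumptions: it is not Fabric's API, and it swaps real verifiable computing (which typically relies on cryptographic proof systems) for a much simpler hash-chain commitment. `PublicLedger`, `trace_commitment`, `verify`, and the machine ID `inspector-01` are all hypothetical names I made up for the example.

```python
import hashlib
import json


def digest(obj) -> str:
    """Deterministic SHA-256 hex digest of a JSON-serializable object."""
    data = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(data).hexdigest()


def trace_commitment(steps) -> str:
    """Fold every step into a hash chain, so the final value commits to the
    whole path that led to the result, not just the final answer."""
    head = "0" * 64  # fixed starting value for the chain
    for step in steps:
        head = digest({"prev": head, "step": step})
    return head


class PublicLedger:
    """Hypothetical stand-in for a shared ledger: an append-only list."""

    def __init__(self):
        self._entries = []

    def anchor(self, machine_id: str, commitment: str) -> int:
        """Record a commitment and return its position as a receipt."""
        self._entries.append({"machine_id": machine_id, "commitment": commitment})
        return len(self._entries) - 1

    def lookup(self, index: int) -> dict:
        """Return a copy of the entry so callers cannot mutate the record."""
        return dict(self._entries[index])


def verify(ledger: PublicLedger, index: int, machine_id: str, claimed_steps) -> bool:
    """Any outside party can recompute the commitment from the claimed steps
    and compare it with what was anchored earlier."""
    entry = ledger.lookup(index)
    return (
        entry["machine_id"] == machine_id
        and entry["commitment"] == trace_commitment(claimed_steps)
    )


# Assumed example data: a robot inspecting one piece of equipment.
steps = [
    {"action": "scan", "target": "valve-7", "reading": 41.2},
    {"action": "compare", "threshold": 50.0, "within_limit": True},
    {"action": "report", "status": "safe"},
]

ledger = PublicLedger()
receipt = ledger.anchor("inspector-01", trace_commitment(steps))

# The original log checks out against the anchored commitment.
honest = verify(ledger, receipt, "inspector-01", steps)

# A log edited after the fact no longer matches.
tampered_steps = [dict(s) for s in steps]
tampered_steps[0]["reading"] = 12.0
tampered = verify(ledger, receipt, "inspector-01", tampered_steps)

print(honest, tampered)  # True False
```

The point of the sketch is small. Once the commitment sits in a shared record, anyone holding the claimed steps can recompute it, and a quietly edited log no longer matches. A real system would also need signatures and a proof that the steps were actually executed, which this does not attempt.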

That matters more than people think.

Because the world we are moving toward will not be filled with robots working alone inside controlled spaces.

Machines will interact with supply chains, transportation systems, buildings, financial infrastructure, and other machines built by completely different organizations.

Each interaction creates a moment where trust has to exist between parties who may not know each other.

Without shared verification, every one of those interactions carries uncertainty.

With verification, the interaction becomes something closer to cooperation.

Another thing I noticed while watching Fabric evolve is the way it treats autonomous agents.

Most digital systems were designed for humans. Accounts belong to people. Interfaces are built for human attention. Permissions are granted through human identity.

But robots and AI agents do not behave like humans.

They do not log in occasionally and perform a task.

They operate constantly.

They generate streams of decisions and data every second.

Trying to fit that behavior into traditional systems often feels awkward. It is like forcing a new kind of life into an environment that was never designed for it.

Fabric seems to recognize that shift.

Instead of treating machines as secondary tools, the network allows them to act as participants.

Agents can coordinate tasks. They can verify results. They can interact with other agents within a structure where rules exist beyond any single company.

When I think about the future robot economy people talk about, this detail starts to feel extremely important.

The real transformation will not come from individual robots becoming brilliant.

It will come from networks of machines cooperating with each other while humans oversee the larger system.

That cooperation requires something deeper than intelligence.

It requires shared infrastructure.

Another quiet detail that stayed with me is the governance structure behind the protocol. It is supported by a foundation rather than controlled by a single corporation.

That choice changes the emotional tone of the system.

When infrastructure belongs entirely to one company, every participant eventually wonders whether the rules will change to benefit that company.

But when a system is built as a public network, the incentives feel different.

The rules evolve slowly. Sometimes frustratingly slowly.

But they evolve through participation rather than ownership.

For something as sensitive as machines operating across industries and public environments, that neutrality might matter more than people realize right now.

The strange part about all of this is how invisible these problems still are.

Most conversations around AI focus on capability. People talk about smarter models, faster robotics, and automation replacing jobs.

Those discussions grab attention because they feel dramatic.

But the real challenge quietly lives somewhere deeper.

How do we create systems where machines can act in the real world while humans still understand and trust the consequences of those actions?

The longer I watch how AI, robotics, and decentralized infrastructure are evolving together, the more I feel a strange shift happening.

At first the future looked like it would be defined by intelligent machines.

Now it feels like it may be defined by the networks that make those machines accountable to the world around them.

And the more I sit with that thought, the more I realize the most important technology of the robot age might not be the machines themselves, but the quiet systems that allow everyone else to trust what those machines actually did.