I’ve been watching the quieter layers of the AI world, where the real problems slowly reveal themselves. I’m looking past the excitement around powerful models and impressive demos and focusing on something that feels far more fragile underneath it all. I’ve been noticing how easily people trust answers that sound intelligent, and I keep returning to the strange tension between how confident artificial intelligence sounds and how uncertain its knowledge can actually be.

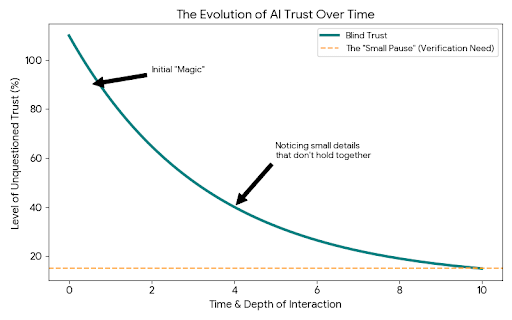

The first time you spend real time with modern AI tools, it feels almost magical. You type a question and seconds later a full explanation appears. It can break down complex topics, write long paragraphs, summarize research, even mimic reasoning that feels thoughtful and structured.

At first it feels like the future has quietly arrived.

But the longer you sit with it, the more subtle doubts begin to appear.

You start noticing small details that do not quite hold together. A reference that cannot be found. A statistic that looks believable but turns out to be slightly wrong. A confident explanation built on a weak assumption.

What makes these moments unsettling is not the mistake itself. Humans make mistakes constantly. What makes it uncomfortable is the certainty in the tone. The answer arrives with no hesitation. No visible doubt. It feels finished even when something inside it is quietly broken.

And that creates a strange emotional reaction the longer you use these systems.

You want to trust them. You want to believe the intelligence you are interacting with understands what it is saying. But part of you begins to pause before accepting anything too quickly.

That small pause has become more common for me the more I watch the evolution of artificial intelligence.

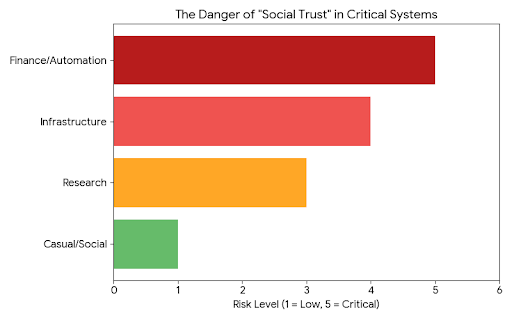

Right now most AI systems operate on a kind of social trust. The model produces an answer and the user decides whether it feels correct. Sometimes people double-check. Sometimes they do not. When the topic is casual, the risk is small.

But the moment AI starts touching financial decisions, research, automation, or infrastructure, that casual trust begins to feel dangerous.

The strange thing is that the entire industry seems focused on making AI more powerful, while the question of reliability still feels unfinished.

Bigger models appear. Faster models appear. Smarter reasoning techniques appear. But the basic dynamic remains the same. A single system produces an answer and everyone else hopes the answer is right.

The more I observe this pattern, the more it feels like we are solving the wrong problem first.

Maybe intelligence alone is not enough.

Maybe the missing layer is verification.

That idea started lingering in my mind when I began quietly studying what Mira Network is trying to build. At first glance it looks like another project sitting somewhere between artificial intelligence and blockchain technology. That combination has appeared many times before and often feels forced.

But the longer I sat with the concept, the more it started to feel like it was addressing something deeper.

Instead of assuming AI outputs should be trusted, Mira treats them as something that needs to be questioned.

That shift might sound small, but it changes the entire relationship between humans and machines.

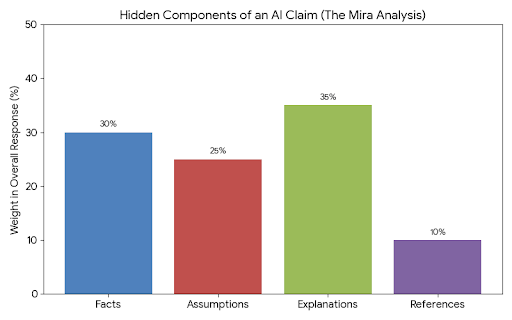

When an AI generates a long response, it is not just producing one statement. Hidden inside that response are many separate claims. Facts, assumptions, explanations, references. Humans often read the paragraph as a single block of information and accept it if the overall tone feels convincing.

Mira approaches it differently.

The system breaks the content into individual claims that can be examined one by one.

Suddenly the answer is no longer a finished truth. It becomes something closer to a set of ideas waiting to be tested.

Different AI models in the network participate in examining those claims. Instead of one voice declaring the answer, multiple voices evaluate whether each piece of information actually holds up.
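To make the idea concrete for myself, I wrote a minimal sketch of what "break the answer into claims and let several evaluators look at each one" could look like. Everything here is my own assumption for illustration, not Mira's actual implementation: the sentence-based splitting, the `Verifier` type, and the three toy verifiers are hypothetical stand-ins for real models.

```python
import re
from typing import Callable, Dict, List

# Hypothetical verifier type: takes one claim and returns True if it
# judges the claim to be supported. A real verifier would be a model.
Verifier = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naively treat each sentence as one claim (an assumption for illustration)."""
    parts = re.split(r"(?<=[.!?])\s+", response.strip())
    return [p for p in parts if p]


def collect_votes(claims: List[str], verifiers: List[Verifier]) -> Dict[str, List[bool]]:
    """Ask every verifier about every claim and record the individual votes."""
    return {claim: [verify(claim) for verify in verifiers] for claim in claims}


# Toy verifiers standing in for independent models (hypothetical).
def skeptic_a(claim: str) -> bool:
    return "flat" not in claim.lower()


def skeptic_b(claim: str) -> bool:
    return "flat" not in claim.lower()


def credulous(claim: str) -> bool:
    return True  # accepts everything, like a reader convinced by tone alone


response = "Paris is the capital of France. The Earth is flat."
claims = split_into_claims(response)
votes = collect_votes(claims, [skeptic_a, skeptic_b, credulous])

for claim, ballot in votes.items():
    print(f"{claim!r}: {sum(ballot)} of {len(ballot)} verifiers agree")
```

The point of the sketch is only the shape: a single confident paragraph becomes a list of separate claims, and each claim ends up with a ballot instead of a verdict from one voice.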

Watching this structure unfold feels strangely familiar.

It begins to resemble the way humans search for truth.

In science, a discovery is not accepted immediately. Other researchers test it. Question it. Challenge it. Sometimes they confirm it. Sometimes they prove parts of it wrong. Over time a clearer picture emerges.

That process exists because knowledge becomes stronger when it survives scrutiny.

Artificial intelligence has mostly been operating without that layer.

Right now a model produces information and the responsibility for questioning it falls entirely on the person reading it. That works when AI is just helping write emails or summarize documents. But as soon as machines start interacting with other machines, the system becomes fragile.

A flawed piece of information can travel quickly through automated systems. Decisions get made. Processes move forward. And the original error quietly multiplies.

That possibility creates a quiet tension beneath all the excitement around AI.

We are building machines that can generate knowledge faster than humans ever could. But we have not yet built a shared system for verifying that knowledge at the same speed.

That is the part of Mira that keeps pulling my attention back.

Instead of chasing the dream of a perfect model, the project assumes imperfection will always exist. Errors will happen. Hallucinations will happen. Bias will appear.

So the real question becomes something simpler.

How do you catch the mistake before it spreads?

The answer Mira explores is surprisingly grounded. Turn verification into a network process. Let independent participants evaluate claims. Use incentives to encourage honest validation. Allow consensus to form around what information survives examination.
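A rough sketch of how consensus and incentives might fit together, under assumptions that are entirely mine: a two-thirds supermajority threshold, a flat reward and penalty, and the function names `reach_consensus` and `settle` are all hypothetical and do not describe Mira's actual protocol.

```python
from fractions import Fraction
from typing import Dict


def reach_consensus(votes: Dict[str, bool],
                    threshold: Fraction = Fraction(2, 3)) -> str:
    """Return 'accepted', 'rejected', or 'unresolved' for a single claim.

    The two-thirds threshold is an assumed value for illustration.
    """
    if not votes:
        return "unresolved"
    total = len(votes)
    yes = sum(votes.values())
    if Fraction(yes, total) >= threshold:
        return "accepted"
    if Fraction(total - yes, total) >= threshold:
        return "rejected"
    return "unresolved"


def settle(votes: Dict[str, bool], outcome: str, stakes: Dict[str, int],
           reward: int = 1, penalty: int = 1) -> Dict[str, int]:
    """Reward evaluators who voted with the consensus and penalize the rest.

    If no consensus formed, stakes are returned unchanged.
    """
    if outcome == "unresolved":
        return dict(stakes)
    consensus_vote = outcome == "accepted"
    return {
        name: stakes[name] + (reward if vote == consensus_vote else -penalty)
        for name, vote in votes.items()
    }


# Three hypothetical evaluators vote on one claim.
claim_votes = {"evaluator_a": True, "evaluator_b": True, "evaluator_c": False}
starting_stakes = {"evaluator_a": 10, "evaluator_b": 10, "evaluator_c": 10}

outcome = reach_consensus(claim_votes)
new_stakes = settle(claim_votes, outcome, starting_stakes)
print(outcome, new_stakes)
```

The "unresolved" outcome matters as much as the other two. A claim that cannot gather a supermajority either way is not declared true or false; it simply has not survived examination yet.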

Blockchain begins to make sense inside that structure.

For years blockchains have been used to help strangers agree on financial records without trusting a central authority. Mira seems to be experimenting with the idea that the same logic could apply to information itself.

Instead of trusting a single AI model, trust emerges from the interaction between many evaluators.

What fascinates me about this direction is how calm it feels compared to the usual noise in the crypto world.

Most projects talk about speed, scale, or disruption. The conversation often revolves around how quickly something can grow or how big the network might become.

Mira feels quieter.

It feels like someone noticed a weakness that everyone else was stepping around and decided to address it directly.

The reliability of machine-generated knowledge.

And the more I watch artificial intelligence evolve, the more that problem feels impossible to ignore, because the future many people imagine involves autonomous systems making decisions, negotiating transactions, managing infrastructure, and interacting with each other constantly.

If those systems are built on information that has never been properly verified, the entire structure rests on unstable ground.

The longer I observe this space, the clearer one thought becomes.

The real breakthrough in artificial intelligence might not come from the machine that produces the most answers, but from the system that finally learns how to question them.