I’m watching the Fabric network the way you watch a busy intersection that doesn’t have traffic lights yet. Everyone approaches with their own expectations, slows down slightly, reads the behavior of others, and decides whether to move forward or hesitate. I keep seeing those small decisions play out across the system. No single movement feels dramatic on its own, but together they begin forming a rhythm. I’m waiting to see whether that rhythm becomes stable or keeps shifting as more participants step in and the pressure on coordination increases.

I’ve noticed that the interesting part isn’t the infrastructure itself. It’s the behavior that starts forming around it. When machines, data, and human decision-making are tied together inside a shared environment, responsibility becomes blurry very quickly. If a robotic action produces an unexpected result, the question isn’t just what happened. It’s who interprets what happened, and whose interpretation becomes accepted by everyone else. I focus on those moments because they reveal where real influence begins to gather.

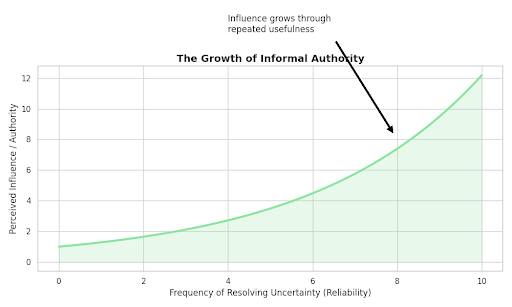

I keep seeing reliability quietly turn into authority. Some participants become the ones who step in when things stall or when confusion spreads through the network. They clarify a data signal, resolve a coordination issue, or stabilize a process that others were hesitant to touch. Over time people start watching them more closely. Not because the system formally gives them control, but because they repeatedly show up at the moments when uncertainty needs to be resolved. Influence in systems like this rarely arrives through design; it grows through repeated usefulness.

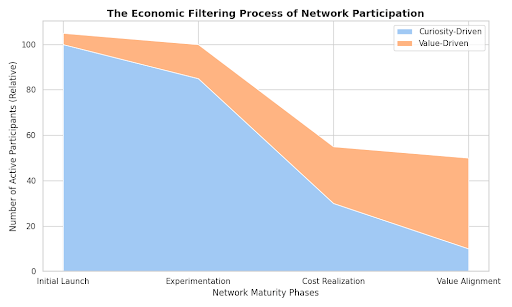

I’m also tracking how economic pressure slowly reshapes participation. Operating machines, running computation, and maintaining data flows all carry real costs. In the beginning, many participants experiment with the system simply because the idea is interesting. But curiosity alone doesn’t sustain infrastructure. Eventually the participants who remain active are the ones who can carry those costs long enough to see value appear. That quiet filtering process changes the character of the network over time.

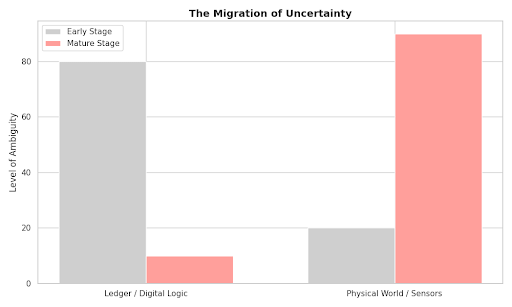

I’ve noticed how uncertainty migrates instead of disappearing. A ledger might make agreements transparent, and verification might make certain processes measurable, but once machines begin interacting with the physical world, the system inherits a different kind of ambiguity. Sensors don’t always agree with each other. Environments behave differently from one location to another. I keep seeing people step into the role of interpreters, translating machine signals into something the rest of the network can understand and act on.

Governance becomes most visible when those interpretations collide. For long stretches it sits quietly in the background. Then a disagreement surfaces over responsibility, resource allocation, or how to respond to a machine’s behavior, and suddenly everyone is paying attention to the rules again. I’ve noticed that governance rarely eliminates tension in these moments. It usually shifts the tension somewhere else in the system, sometimes in ways that only become clear later.

I’m watching how different types of participants react to that shifting pressure. Some move closer to the infrastructure layer, trying to stay near the systems that actually keep things running. Others invest their energy in governance discussions, knowing that the way rules are interpreted eventually shapes the entire network. A few focus almost entirely on data and coordination flows, understanding that whoever helps interpret machine behavior will quietly influence how the system evolves.

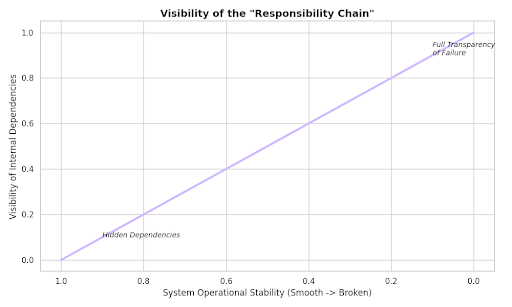

Dependencies are also forming that most people don’t notice right away. A robotic action might look simple from the outside, but behind it sits a long chain of data inputs, computational checks, and human decisions. When everything works, that chain remains hidden. But when something breaks, the entire sequence suddenly becomes visible, and the network has to figure out where responsibility actually sits.

I’m waiting to see how the system behaves when multiple stresses appear at the same time. Early on, participants tend to be patient. People tolerate inefficiencies because they believe the coordination mechanism will mature. But patience has limits. If the operational burden grows faster than the benefits, participants start asking harder questions about whether incentives are truly aligned.

I also pay attention to something less technical: how people feel about their position in the system. If participants believe risk and reward are balanced, they tend to keep contributing even when things get messy. But if they begin to suspect that some actors are quietly accumulating influence without carrying the same level of risk, the atmosphere shifts. Trust becomes thinner, and coordination requires more effort.

From where I’m standing, Fabric doesn’t look like a finished structure yet. It feels more like a live environment where incentives, machines, and human judgment are constantly negotiating with each other. I keep watching those negotiations closely. Not because they always produce clear answers, but because the way people respond to uncertainty usually tells you far more about a system than the design ever could.