@Fabric Foundation I keep coming back to a simple question: when a robot makes a decision in the real world, how do I know where that decision came from? That is why Fabric Protocol has drawn attention in open robotics. The official material presents it as a way to build robots with public records around coordination, oversight, identity, and incentives, while the organization behind it says its goal is to make machine behavior predictable and observable. What makes the timing interesting is that it is arriving just as open robotics is building on shared software stacks and coordination frameworks, and as Fabric itself has moved from whitepaper language to a clearer 2026 rollout plan.

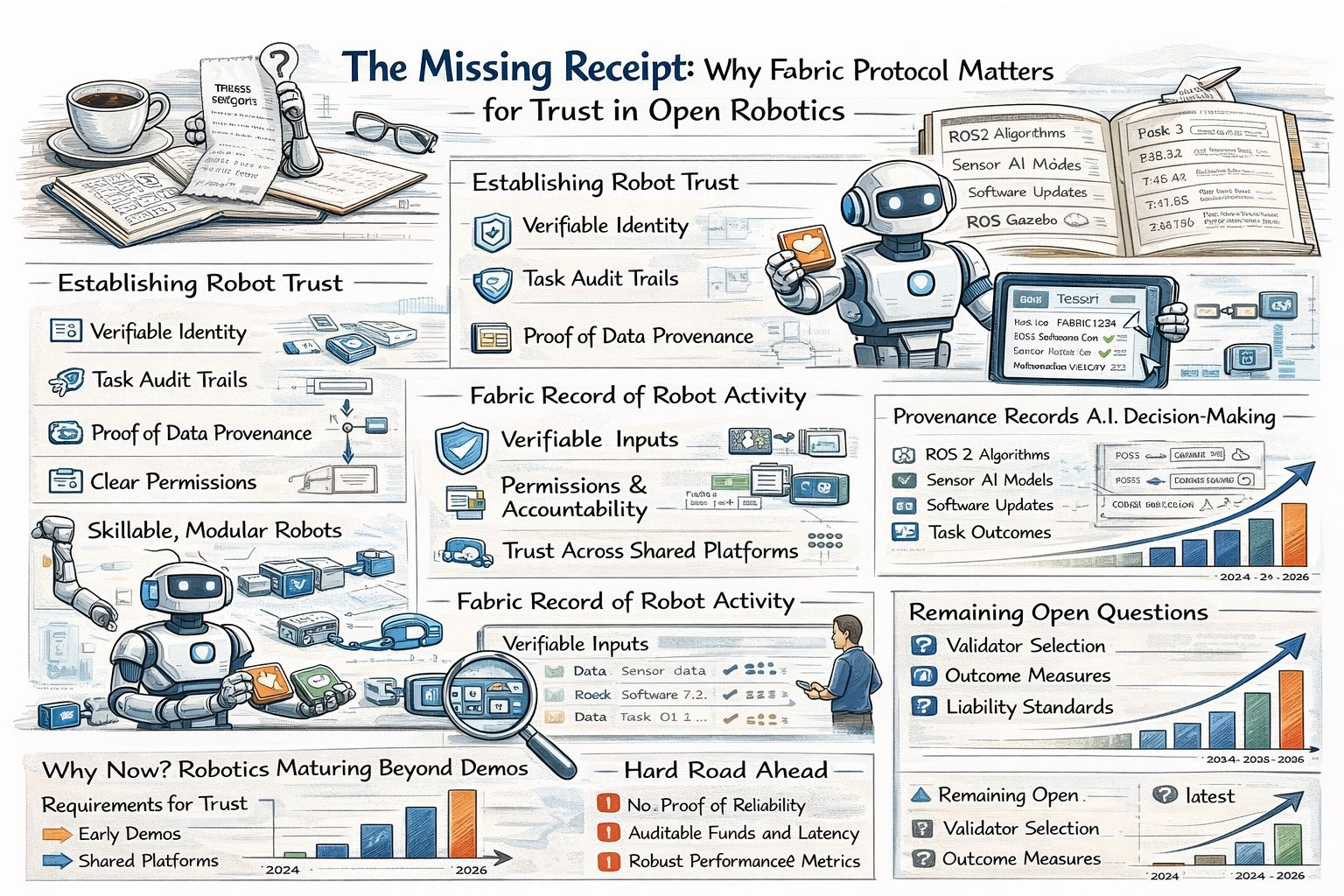

When I talk about data provenance here, I do not mean an abstract compliance phrase. I mean the plain record behind a robot’s knowledge and action. I want to know where the data came from, how it was handled, which software version shaped it, what task the machine was given, and whether anyone checked the outcome later. In plain English, provenance is the receipt. That matters more in robotics than in many other AI settings because a bad output does not just create a strange answer on a screen. It can lead to a failed grasp, a wrong turn, or a safety problem in a warehouse aisle or hospital corridor. Researchers have argued that authenticity, consent, and provenance remain fragmented across AI systems and that current fixes are too isolated to support trustworthy development at scale.
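To make the "receipt" idea concrete, here is a minimal Python sketch of one provenance entry that answers the five questions above: source, handling, software version, task, and later review. The class and its field names are hypothetical illustrations, not Fabric Protocol's actual schema. The SHA-256 digest is simply one common way to fingerprint a record so that later copies can be compared.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class ProvenanceRecord:
    """Hypothetical 'receipt' for one piece of robot data (not Fabric's schema)."""
    source: str                        # where the data came from, e.g. a sensor ID
    handling_steps: tuple[str, ...]    # how it was processed, in order
    software_version: str              # which software build shaped it
    task_id: str                       # what task the robot was given
    reviewed_by: Optional[str] = None  # who checked the outcome later, if anyone

    def digest(self) -> str:
        """Stable SHA-256 fingerprint of the record's contents."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def is_reviewed(self) -> bool:
        return self.reviewed_by is not None


rec = ProvenanceRecord(
    source="lidar-front-01",
    handling_steps=("downsample", "remove_outliers"),
    software_version="nav-stack 2.3.1",
    task_id="pick-aisle-7",
)
print(rec.is_reviewed())   # False
print(len(rec.digest()))   # 64

# Adding a reviewer produces a new record with a different fingerprint.
reviewed = replace(rec, reviewed_by="safety-auditor")
print(reviewed.digest() != rec.digest())  # True
```

Freezing the dataclass means a record cannot be edited in place; recording a later review creates a new record instead of silently changing the old one.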

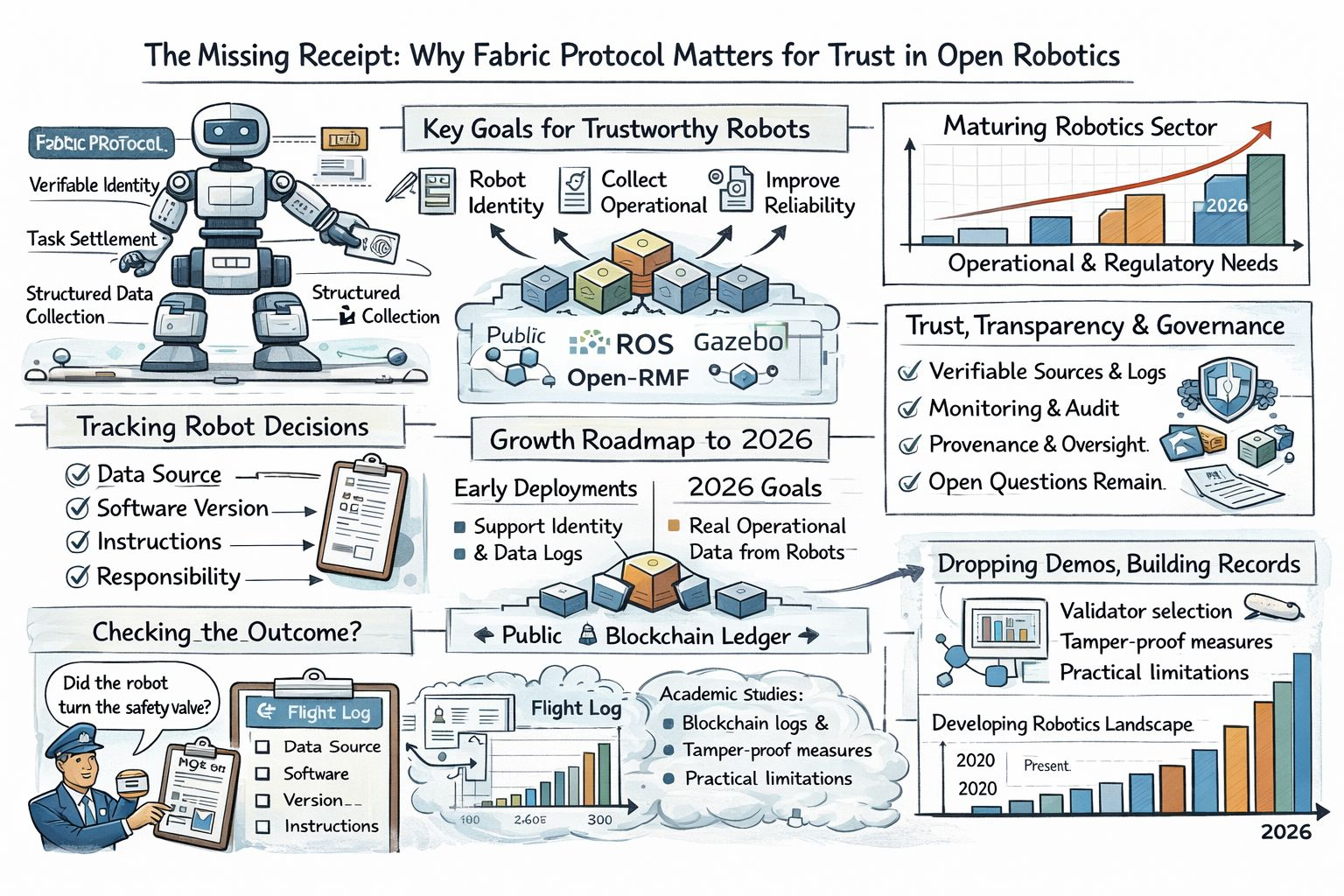

What I find genuinely useful in Fabric's framing is that it treats reliability as a system problem rather than only a model problem. The project's roadmap says its early deployments are meant to support robot identity, task settlement, and structured data collection. It also lays out 2026 goals that include collecting real operational data from active robot use, widening participation across platforms and environments, and improving coverage, quality, validation, reliability, and throughput over time. Its broader mission language makes the same point more simply: if different operators, communities, and institutions are supposed to trust a robot, it needs a verifiable identity, clear permissions, and a history that can be inspected. That matters because open robotics has long been good at sharing code but much less consistent at sharing trust.
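The identity, permission, and history requirements can be sketched as a hash-chained, append-only log. This is a generic tamper-evidence technique, assumed here for illustration; it is not Fabric's implementation, and `RobotLedger`, `record_task`, and `verify` are hypothetical names. A real system would also need cryptographic signatures to make the identity itself verifiable, which this sketch leaves out.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _hash_entry(prev_hash: str, body: dict) -> str:
    # Each entry's hash commits to the previous hash, so edits to history are detectable.
    payload = json.dumps({"prev": prev_hash, "body": body}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass
class RobotLedger:
    robot_id: str        # hypothetical stand-in for a verifiable identity
    permissions: set[str]  # task types this robot is allowed to perform
    entries: list[dict] = field(default_factory=list)

    GENESIS = "0" * 64   # placeholder "previous hash" for the first entry

    def record_task(self, task_type: str, outcome: str) -> None:
        # Refuse to log work the robot was never permitted to do.
        if task_type not in self.permissions:
            raise PermissionError(f"{self.robot_id} is not permitted to do {task_type!r}")
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"robot_id": self.robot_id, "task_type": task_type, "outcome": outcome}
        self.entries.append({"body": body, "prev": prev, "hash": _hash_entry(prev, body)})

    def verify(self) -> bool:
        # Recompute every hash in order; any mismatch means the history was altered.
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != _hash_entry(prev, entry["body"]):
                return False
            prev = entry["hash"]
        return True


ledger = RobotLedger("robot-042", {"deliver", "inspect"})
ledger.record_task("deliver", "completed")
ledger.record_task("inspect", "completed")
print(ledger.verify())  # True

ledger.entries[0]["body"]["outcome"] = "failed"  # tamper with an earlier entry
print(ledger.verify())  # False
```

Editing any earlier entry makes its stored hash stop matching, so `verify` reports the tampering; that is the difference between a history that is merely stored and one that can be inspected.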

Still, I do not think provenance should be confused with proof of reliability. A ledger can show that a dataset was logged or that a task was marked complete, but it cannot rescue a bad sensor, a biased labeler, or a weak evaluation process. To Fabric's credit, the whitepaper does not pretend everything is solved. It openly lists unresolved questions around validator selection, the definition of sub-economies, and the need for measures of success that are harder to game than simple revenue. I tend to trust projects a little more when they admit where the fog still is, because reliability in robotics usually breaks at the messy edges, not inside clean diagrams.
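The gap between provenance and reliability is easy to show in code. In this minimal sketch, a faulty reading is logged faithfully, so an integrity check passes, while a separate plausibility check catches the bad data. The sensor name and the 0.05 to 30 m rated range are hypothetical values chosen for illustration.

```python
import hashlib
import json


def fingerprint(entry: dict) -> str:
    """SHA-256 of the entry; a matching fingerprint shows it was not altered after logging."""
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode("utf-8")).hexdigest()


def plausible_distance(meters: float, sensor_min: float = 0.05, sensor_max: float = 30.0) -> bool:
    """Hypothetical sanity check: is the reading inside the sensor's assumed rated range?"""
    return sensor_min <= meters <= sensor_max


# A faulty sensor reports a negative distance, and the entry is logged faithfully.
entry = {"sensor": "lidar-front-01", "distance_m": -3.2, "task": "dock"}
logged_hash = fingerprint(entry)

integrity_ok = fingerprint(entry) == logged_hash      # True: the record is untouched
reading_ok = plausible_distance(entry["distance_m"])  # False: the data itself is wrong

print(integrity_ok, reading_ok)  # True False
```

The ledger answers "was this changed?" while only validation answers "was this right?", which is why both are needed.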

There is also a useful reality check from outside this specific project. Academic work on robotics and distributed record-keeping has argued that immutable records, access control, and provenance can improve trust in robotic systems, while also warning about practical limits. A separate study combining robotics middleware with a blockchain framework found that auditable teleoperation is technically feasible, reported experimental latencies in the hundreds of milliseconds, and discussed the tradeoffs of bringing blockchain into real robotic control. That is not the same thing as Fabric Protocol, and I do not want to blur the two. Still, it shows that the core idea is not imaginary. Parts of this trust layer are already being explored in real robotic workflows.

So why is this topic trending now? My read is that robotics has finally moved beyond the stage where clever demos are enough. Once machines are expected to work across organizations, update skills, collect feedback, and operate in public, provenance stops sounding academic and starts sounding like infrastructure. Fabric’s recent roadmap has helped push that argument into view, but the deeper reason is that the field itself is maturing. Maturity brings records, traceability, and difficult questions about trust. My own view is simple. I will take this kind of work seriously when a developer, an operator, a regulator, or an ordinary user can inspect a robot’s history as easily as reading a flight log. If Fabric helps make that normal, it will matter. If it cannot, the idea will remain interesting but incomplete.