I still remember the first time someone pitched the idea that every robot and agent would get its own on-chain identity. It felt like a lightbulb moment. Finally: persistent machines you could actually count on. Real reputation. Honest accountability. The whole machine-economy thing suddenly felt like it was right around the corner, you know?

Then I thought about the internet for two seconds.

Cheap identity doesn’t magically create trust. It just creates farms. Lots of them.

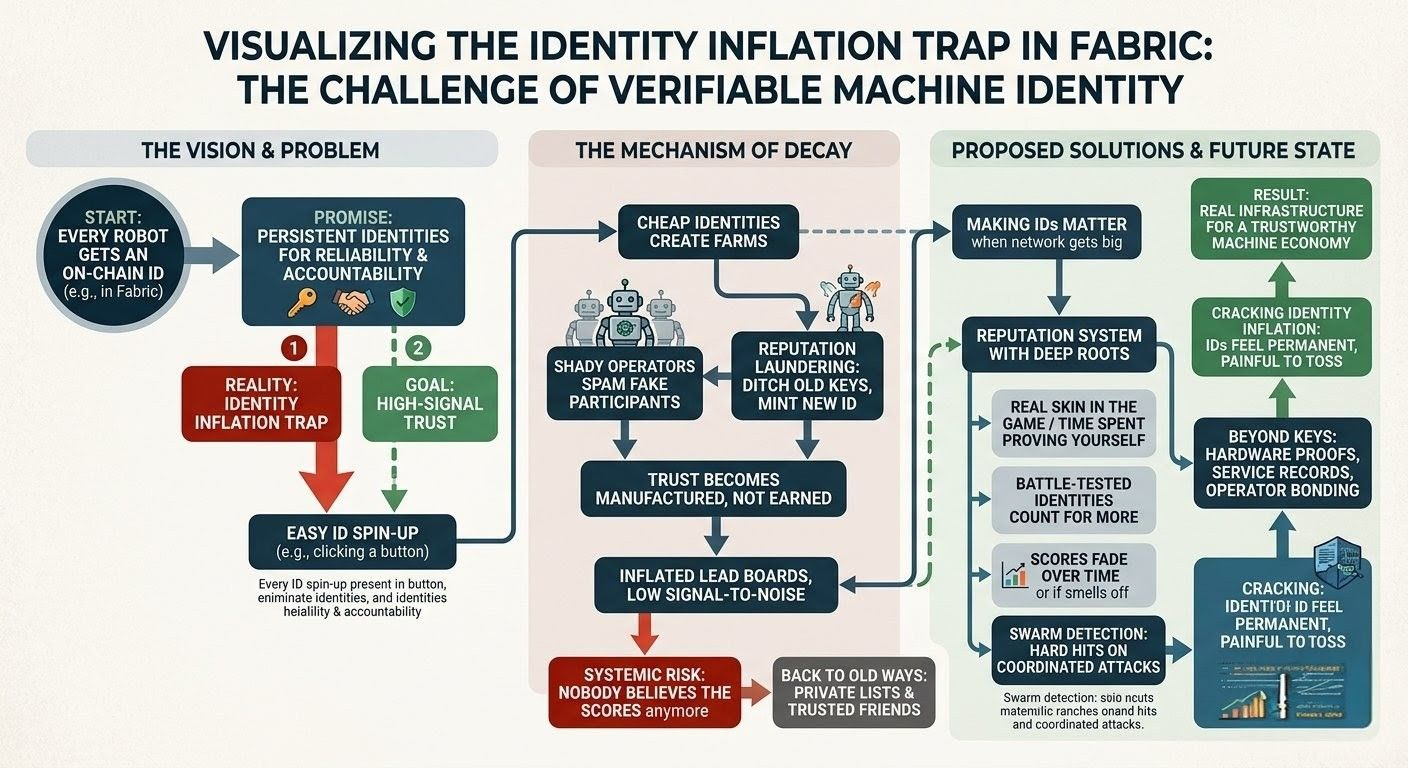

And that sneaky little trap is already sitting there in the Fabric vision, waiting. The moment spinning up a fresh ID for some autonomous agent becomes as easy as clicking a button, the flood isn’t going to come from the good guys quietly building useful stuff. Nope. It’s going to come from the swarms.

The shady operators won’t bother spamming transactions anymore—they’ll spam fake participants. Thousands of squeaky-clean “robots,” thousands of brand-new “agents,” all starting with spotless records, perfectly tuned to chase rewards, juice up the metrics, and fake that aura of reliability.

Even the decent folks will be tempted. Your robot screws up, racks up complaints, gets dinged with penalties? Simple fix: ditch the old keys, mint a shiny new ID, and boom—same code, same quirks, brand-new reputation. Machine-speed reputation laundering.

That’s what I call identity inflation.

Way too many entities, way too little real signal. Trust stops being something you build over time and turns into something you just manufacture. The whole leaderboard becomes pure marketing—whoever can crank out the most believable stats wins.

So the real question for Fabric isn’t just “can you hand out identities to machines?” It’s “can you make those identities actually matter when the network gets big?”

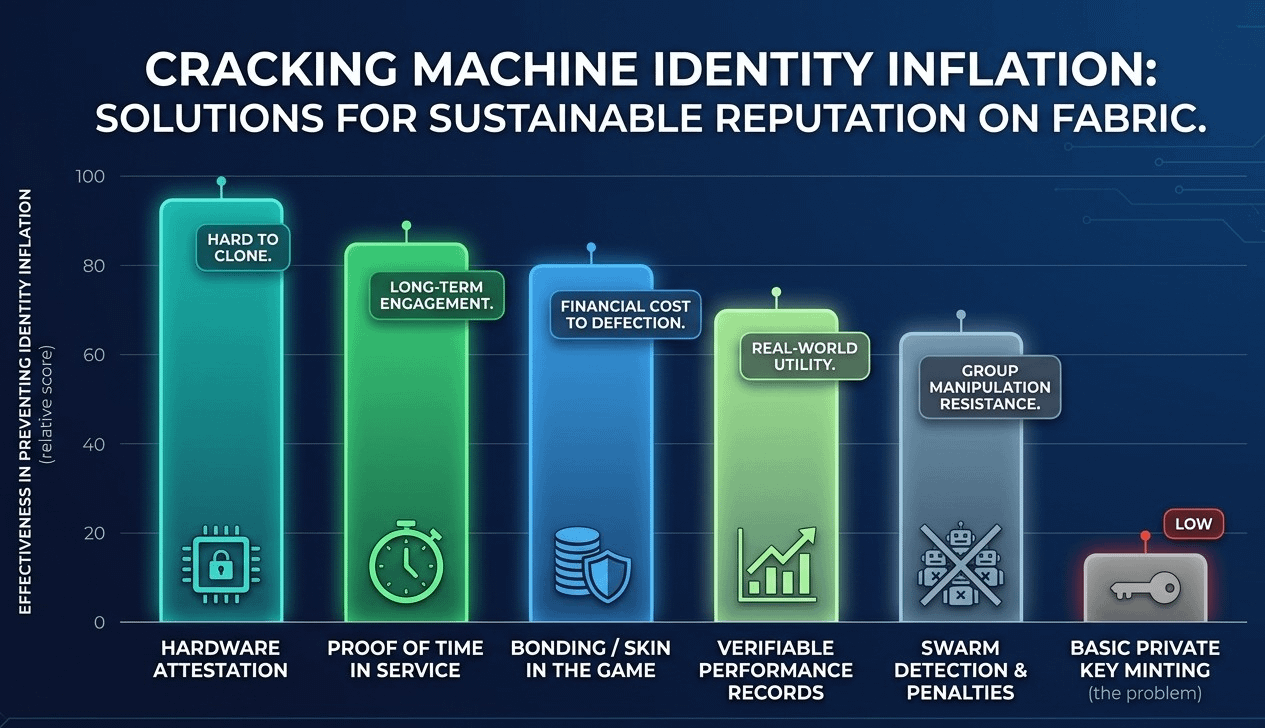

Those identities need real weight. Not necessarily a fat price tag, but something that actually hurts to lose: time spent proving yourself, real skin in the game, a history that makes walking away feel like throwing away years of your life.

The reputation system has to be tough to game. Old, battle-tested identities should count for more than the fresh ones. Scores should quietly fade if things go quiet or start smelling off. And if a bunch of agents suddenly act like they’re all run by the same hand? The system needs to spot those swarms and hit them hard, not reward them for sheer numbers.
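To make that concrete, here's a rough sketch of how those three ideas (age counts for more, scores fade when idle, swarms get discounted) could combine into one number. None of this comes from Fabric; the `Identity` fields, the constants, and the `cluster_size` value (which assumes some separate detector has already grouped IDs that look like one operator) are all made up for illustration.

```python
import math
from dataclasses import dataclass

# Illustrative constants only; not taken from any Fabric specification.
AGE_SATURATION_DAYS = 365.0   # how slowly an ID earns its full weight
DECAY_HALF_LIFE_DAYS = 90.0   # idle time after which a score halves


@dataclass
class Identity:
    age_days: float        # time since the ID was first registered
    idle_days: float       # time since its last verified activity
    raw_score: float       # unweighted reputation in [0, 1]
    cluster_size: int = 1  # IDs judged to share one operator (hypothetical detector)


def effective_reputation(ident: Identity) -> float:
    """Combine age weighting, inactivity decay, and a swarm discount."""
    # Older identities approach full weight; brand-new ones start near zero.
    age_weight = 1.0 - math.exp(-ident.age_days / AGE_SATURATION_DAYS)
    # The score halves for every DECAY_HALF_LIFE_DAYS without verified activity.
    decay = 0.5 ** (ident.idle_days / DECAY_HALF_LIFE_DAYS)
    # A cluster of N look-alike IDs shares the weight of one ID.
    swarm_discount = 1.0 / ident.cluster_size
    return ident.raw_score * age_weight * decay * swarm_discount


veteran = Identity(age_days=730, idle_days=0, raw_score=0.8)
fresh = Identity(age_days=1, idle_days=0, raw_score=1.0)
print(effective_reputation(veteran), effective_reputation(fresh))
```

The point of the `1 / cluster_size` discount is that a hundred fresh IDs run by the same hand add up to roughly the weight of one, so minting more of them buys nothing. The hard part, of course, is the detector that decides what counts as a cluster, and this sketch just assumes it exists.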

Above all, if these IDs are supposed to stand for actual machines out in the world—not just lines of code—then keys alone aren’t enough. You need deeper roots: hardware proofs, real service records, maybe even bonding from the operator. Anything that makes copying an identity way harder than copying a private key.

Because here’s the weird truth about this machine economy: the players aren’t people with messy human reputations and real-world consequences. They’re code. They can copy themselves in seconds, team up instantly, and find loopholes in the rules faster than we can patch them. The second Fabric actually takes off, it’s going to pull in exactly the kind of behavior that kills simple reputation systems.

Not because anyone’s cartoon-villain evil. Because the incentives are just that sharp.

That’s why I don’t see identity as the happy ending. It’s really just the start of the hard stuff. The part where we find out if “verifiable machine participation” can actually hold up when the network gets slammed by people who treat every rule like a puzzle to solve.

If Fabric can crack identity inflation—make IDs feel permanent, painful to toss, and reputations almost impossible to fake—then yeah, that’s real infrastructure.

If not, we’ll still get a busy, buzzing machine economy.

It’ll just be one where nobody actually believes the scores anymore.

And slowly, without anyone admitting it out loud, we’ll all drift back to the oldest trick in the book: private lists of trusted friends, quiet relationships, and that simple rule we’ve always fallen back on—“I only work with the robots I already know and trust.”

@Fabric Foundation $ROBO #ROBO