The emergence of blockchain-based credential verification and token distribution systems reflects a growing need for scalable, secure mechanisms to authenticate identities and allocate digital assets across diverse networks. These infrastructures are no longer experimental curiosities; they are actively being implemented by projects in decentralized finance, digital identity, and enterprise blockchain settings. Understanding their operational design, incentives, and trade-offs is critical for anyone building, integrating, or regulating digital credential and token ecosystems.

At its core, the infrastructure for credential verification and token distribution seeks to address two interlinked problems. First, the digital environment requires a reliable mechanism to validate claims about identity, qualifications, or permissions. Traditional systems rely on centralized authorities to issue credentials, which creates bottlenecks, single points of failure, and limited interoperability. Second, the distribution of tokens—whether for governance, rewards, or access rights—requires a transparent, auditable system that aligns incentives and ensures compliance with predefined rules. Bridging credential verification with token allocation allows projects to enforce policies programmatically, such as granting tokens only to verified participants, or restricting certain actions to credentialed accounts.

Technically, these systems often employ a layered architecture. The base layer is typically a public or permissioned blockchain that records immutable transaction data. Smart contracts at this layer encode the rules for credential issuance, validation, and token distribution. Off-chain components are frequently used to handle identity verification checks, document validation, or attestations from trusted authorities. Zero-knowledge proofs and cryptographic commitments are increasingly integrated to preserve privacy while maintaining verifiability. This combination allows the system to confirm that a participant meets certain criteria without exposing unnecessary personal data.
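To make the idea of a cryptographic commitment concrete, here is a minimal sketch of a salted hash commitment in Python. It is an illustration under stated assumptions, not a description of any particular project's scheme: the function names `commit` and `verify_opening` are hypothetical, and a salted SHA-256 hash stands in for whatever commitment construction a real system uses. Opening the commitment reveals the value, so this is not a zero-knowledge proof; it only shows how a value can be fixed publicly without being published.

```python
import hashlib
import hmac
import secrets
from typing import Tuple


def commit(value: str) -> Tuple[str, bytes]:
    """Return (commitment_hex, salt) for a private value.

    Only the commitment would be published (for example, on-chain);
    the holder keeps the value and the salt private.
    """
    salt = secrets.token_bytes(16)  # random salt hides low-entropy values
    digest = hashlib.sha256(salt + value.encode("utf-8")).hexdigest()
    return digest, salt


def verify_opening(commitment_hex: str, value: str, salt: bytes) -> bool:
    """Check that (value, salt) opens the published commitment."""
    candidate = hashlib.sha256(salt + value.encode("utf-8")).hexdigest()
    # Constant-time comparison of the two hex strings.
    return hmac.compare_digest(candidate, commitment_hex)
```

A holder would call `commit("over-18")`, publish only the returned digest, and later disclose the value and salt to a party that needs to check the claim.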

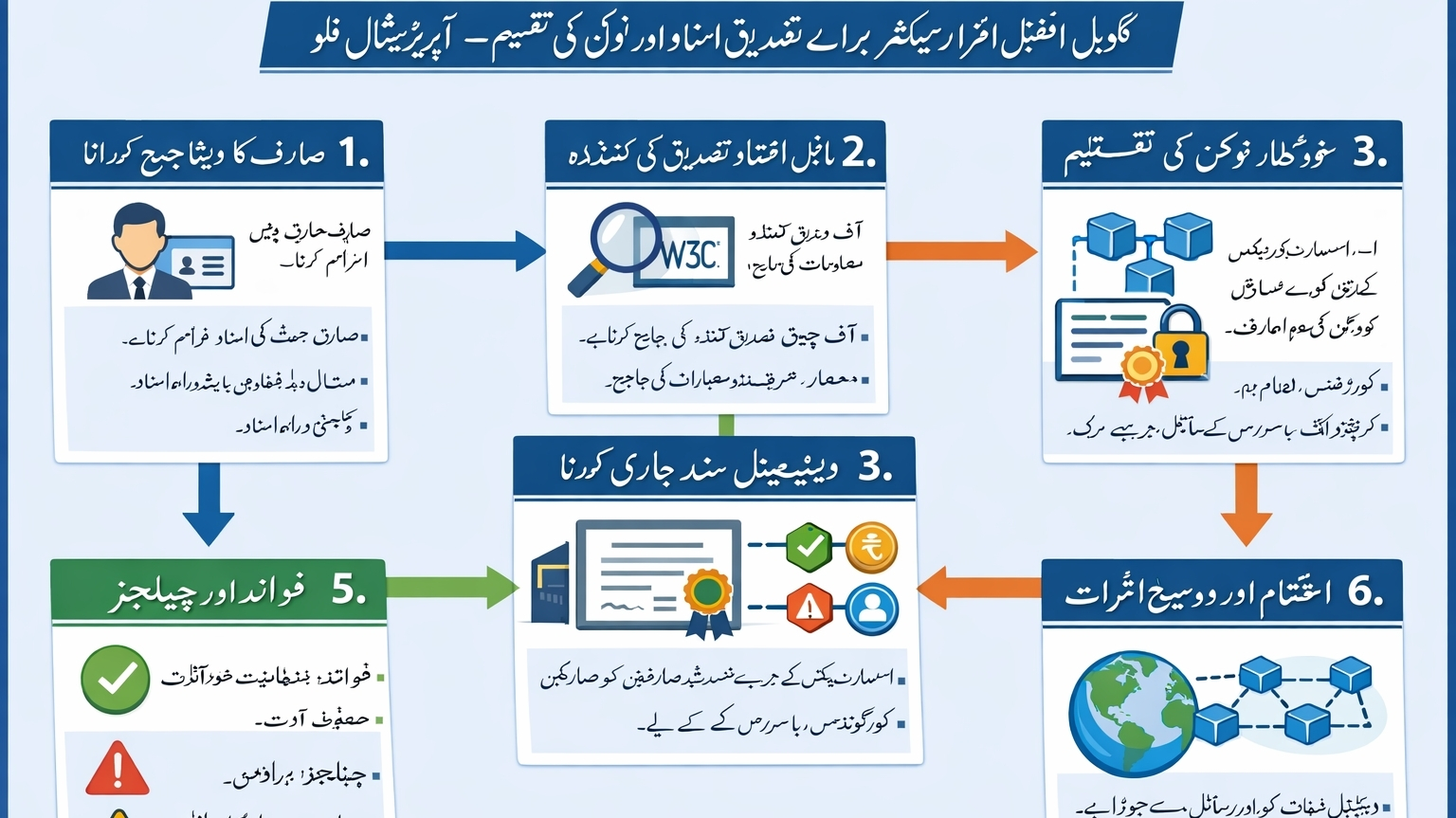

From an operational perspective, credential verification usually involves a multi-step flow. A user submits identifying information or proof of a claim to a trusted verifier. The verifier validates the submission against predefined standards and then issues a digital credential, often a verifiable credential (VC) that follows the W3C Verifiable Credentials Data Model. The credential is cryptographically signed and anchored on-chain, which makes later tampering detectable. Once a credential is confirmed, the system can trigger token distribution events automatically, such as releasing governance tokens to verified contributors or granting access tokens for platform services. This integration reduces manual intervention and ties token allocation to verifiable merit or eligibility.
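The flow described above (issue, sign, anchor, then distribute) can be sketched in a few functions. This is a simplified model, not a real deployment: the ledger is an in-memory set rather than a blockchain, all names (`issue_credential`, `anchor`, `distribute`, `VERIFIER_KEY`) are hypothetical, and an HMAC is used as a stand-in for the asymmetric digital signature a real verifier would apply, purely so the example runs with the standard library.

```python
import hashlib
import hmac
import json
from typing import Dict, Set

# Hypothetical demo key. A real verifier would sign with a private key
# and publish the matching public key; HMAC is only a stand-in here.
VERIFIER_KEY = b"demo-verifier-key"


def canonical(obj: dict) -> bytes:
    """Serialize deterministically so hashes and proofs are reproducible."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def issue_credential(subject: str, claim: str) -> dict:
    """Verifier step: attach a proof to the validated claims."""
    claims = {"subject": subject, "claim": claim}
    proof = hmac.new(VERIFIER_KEY, canonical(claims), hashlib.sha256).hexdigest()
    return {"claims": claims, "proof": proof}


def anchor(credential: dict, ledger: Set[str]) -> str:
    """Record the credential's hash on the (simulated) ledger."""
    digest = hashlib.sha256(canonical(credential)).hexdigest()
    ledger.add(digest)
    return digest


def distribute(credential: dict, ledger: Set[str], balances: Dict[str, int],
               claimed: Set[str], amount: int) -> bool:
    """Release tokens only for a valid, anchored, not-yet-claimed credential."""
    claims = credential["claims"]
    expected = hmac.new(VERIFIER_KEY, canonical(claims), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, credential["proof"]):
        return False  # proof does not match the claims
    digest = hashlib.sha256(canonical(credential)).hexdigest()
    if digest not in ledger or digest in claimed:
        return False  # never anchored, or already used
    claimed.add(digest)
    subject = claims["subject"]
    balances[subject] = balances.get(subject, 0) + amount
    return True
```

In this sketch a credential can be redeemed once; a second call with the same credential, or a call with altered claims, returns `False` and leaves balances unchanged.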

These design choices introduce specific trade-offs. Relying on off-chain verifiers can centralize risk, because a compromise or bias at the verification stage undermines trust in everything downstream. Conversely, purely on-chain verification can be computationally expensive and less flexible in handling real-world documents. Token distribution mechanics face challenges of their own: rigid allocation rules may fail to accommodate legitimate edge cases, while fully dynamic, automated models can be exploited if economic incentives are not carefully calibrated. Deployed systems suggest that hybrid models, which combine automated on-chain logic with human oversight for exceptional cases, tend to offer a practical balance between efficiency and resilience.
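One way to picture a hybrid model is as a triage step that settles routine claims automatically and escalates unusual ones to a person. The sketch below is illustrative only: the `Claim` fields, the `Decision` values, and the `MAX_AUTO_AMOUNT` threshold are assumptions chosen for the example, not rules taken from any deployed system.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"   # handled automatically
    REJECT = "reject"     # handled automatically
    REVIEW = "review"     # escalated to a human reviewer


@dataclass
class Claim:
    account: str
    credential_valid: bool
    requested: int


# Hypothetical threshold above which a person must sign off.
MAX_AUTO_AMOUNT = 1_000


def triage(claim: Claim) -> Decision:
    """Apply fixed rules first; escalate only the exceptional cases."""
    if not claim.credential_valid or claim.requested <= 0:
        return Decision.REJECT
    if claim.requested > MAX_AUTO_AMOUNT:
        return Decision.REVIEW
    return Decision.APPROVE
```

Keeping the escalation rule explicit and narrow is the design point: the automated path stays predictable, and human judgment is reserved for cases the fixed rules were not written for.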

The implications for market participants are concrete. For end users, these systems affect accessibility and trust: a well-designed credential-verification system can streamline participation, allowing verified individuals to claim tokens or access services without repeating know-your-customer (KYC) procedures. For developers, integrating with such infrastructure imposes requirements for interoperability, secure data handling, and adherence to tokenomics constraints. For organizations managing tokens at scale, these systems provide an auditable trail that can support compliance with regulatory frameworks, including anti-money-laundering (AML) and KYC standards, without requiring full exposure of personal information.

The principal strength of these infrastructures is automation coupled with verifiable accountability. Immutable record-keeping on a blockchain allows credential issuance and token allocations to be independently audited, which enhances transparency and reduces disputes. Furthermore, by linking credentials to tokens, projects can implement sophisticated access controls, incentivization schemes, and governance mechanisms that reflect verified participation rather than anonymous speculation.

However, limitations are evident and must be acknowledged. Scalability remains a concern: high-throughput verification processes or token distributions involving millions of users can encounter latency or transaction-fee issues on public blockchains. Privacy trade-offs are also inherent; even with cryptographic safeguards, metadata leakage or linkage attacks could expose patterns of participation. The reliance on trusted verifiers, while operationally pragmatic, introduces dependency on centralized entities whose governance and accountability must be carefully monitored. Additionally, token distribution linked to credentials can inadvertently create exclusionary effects if verification criteria are overly strict or biased, highlighting the need for inclusive design and periodic review of eligibility rules.

From a systemic perspective, these infrastructures illustrate broader trends in crypto and digital identity landscapes. Projects are increasingly seeking composable, interoperable credential systems that can function across multiple chains and applications. This trend reflects an understanding that the value of digital assets and permissions is maximized when they are portable, verifiable, and seamlessly integrated into existing protocols. Observations of live deployments suggest that modularity—where credential verification and token allocation are decoupled but interoperable—enhances flexibility while preserving security guarantees.
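The modularity point can be illustrated with two small interfaces that know nothing about each other and a claim function that composes them. This is a sketch of the design idea only: `CredentialVerifier`, `TokenAllocator`, and the in-memory implementations are hypothetical names invented for the example, and a real system would back them with on-chain contracts or external services.

```python
from typing import Dict, Protocol, Set


class CredentialVerifier(Protocol):
    """Anything that can answer whether an account holds a valid credential."""
    def is_verified(self, account: str) -> bool: ...


class TokenAllocator(Protocol):
    """Anything that can credit tokens to an account."""
    def allocate(self, account: str, amount: int) -> None: ...


class AllowlistVerifier:
    """Toy verifier backed by a fixed set of verified accounts."""
    def __init__(self, verified: Set[str]) -> None:
        self._verified = set(verified)

    def is_verified(self, account: str) -> bool:
        return account in self._verified


class InMemoryAllocator:
    """Toy allocator that tracks balances in a dictionary."""
    def __init__(self) -> None:
        self.balances: Dict[str, int] = {}

    def allocate(self, account: str, amount: int) -> None:
        self.balances[account] = self.balances.get(account, 0) + amount


def claim(verifier: CredentialVerifier, allocator: TokenAllocator,
          account: str, amount: int) -> bool:
    """Compose the two modules: allocate only if verification passes."""
    if not verifier.is_verified(account):
        return False
    allocator.allocate(account, amount)
    return True
```

Because `claim` depends only on the two interfaces, either side can be replaced (a different chain, a different verification provider) without touching the other.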

Practically, for an individual user interacting with such a system, the experience can be straightforward: a verified credential may allow them to claim governance tokens, participate in a community vote, or access premium services. For a builder, the infrastructure provides a framework where token distribution can be automated based on verifiable criteria, reducing operational overhead and mitigating risks of erroneous allocations. From a market standpoint, the credibility of the verification process directly influences token utility and the perceived legitimacy of the ecosystem, creating an incentive for projects to invest in robust, transparent verification protocols.

In conclusion, infrastructures for credential verification and token distribution represent a significant shift in the way digital assets and permissions are managed. By anchoring verification on blockchain records and automating token flows, these systems improve transparency, efficiency, and alignment of incentives. Understanding their design, operational mechanics, and trade-offs is essential for participants across the crypto ecosystem, from end users to developers and regulators. While challenges in scalability, privacy, and governance persist, these systems connect identity verification directly to asset management, a model increasingly relevant in decentralized finance, digital identity, and enterprise blockchain applications. Stakeholders who evaluate these mechanisms carefully will be better placed to judge both their practical and their systemic implications.