I remember the exact moment it started to bother me.

It wasn’t anything dramatic. Just my younger brother sitting across from me late at night, phone in his hand, scrolling through a verification screen he didn’t fully understand. He looked up and asked, “Bhai, if I don’t allow this, will it still work?”

There was something in the way he said it: not fear exactly, but a quiet dependence. Like he already knew the answer. Like the system wasn’t really giving him a choice, just the illusion of one.

I told him to just accept it.

And even as I said it, something in me felt off.

Because what I was really saying was: give it what it wants, or you don’t get to participate.

That moment stayed with me longer than it should have. Not because of the app, or the permissions, or even the data itself, but because of how normal it felt. How easily we’ve learned to hand things over just to keep moving. No questions, no resistance. Just small, repeated acts of surrender that don’t feel like surrender anymore.

That’s where this idea of zero-knowledge systems starts to pull at me.

The promise is simple, almost comforting: you can prove something without revealing everything. You don’t have to expose yourself just to exist inside a system. You can keep your data, your identity, your edges intact and still function.

It sounds like relief.

But I’ve learned to be careful with things that sound like relief.

Because relief, in systems like these, is rarely where the story ends.

On paper, zero-knowledge proofs feel like a correction to something that has quietly gone wrong. For years, we’ve been building systems that ask for more than they need, store more than they should, and expose more than anyone can truly control. So the idea that you could reverse that, that you could prove without revealing, feels like someone finally noticed the discomfort.

But noticing discomfort and resolving it are not the same thing.

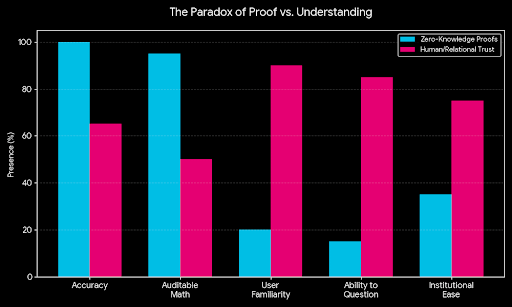

What I keep coming back to is this: proof is not the same as trust.

A system can prove that something is true. It can do it perfectly, mathematically, without error. But that doesn’t mean the person on the other side will accept it. It doesn’t mean an institution will be comfortable relying on it. It doesn’t mean a confused user will feel safe using it.

Because trust is not built on proofs alone.

It’s built on understanding, on familiarity, on the ability to question something when it feels wrong.

And zero-knowledge, by design, removes that ability to “look inside.”

That’s the part no one really sits with.

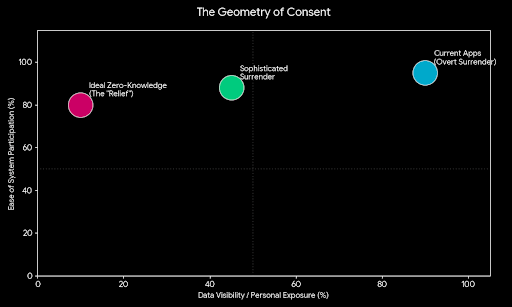

We say we want privacy, but what we often mean is: we want control over what is seen. Not the complete absence of visibility, but the ability to decide it. Zero-knowledge systems take a different path. They say: you don’t need to see anything, just trust the outcome.

And maybe that’s correct.

But it also feels like asking people to trust in a different direction than they’re used to.

I think about my brother again in that moment. If the system had told him, “Don’t worry, everything is verified, but you can’t see how,” would he have felt better? Or worse?

I’m not sure.

Because there’s a strange kind of anxiety that comes from not being able to trace something back. Even if it’s secure. Even if it’s private. There’s a human instinct to want to understand what’s happening, especially when it involves your identity, your access, your place inside something.

And when that instinct is blocked, something subtle shifts.

Not rejection. Just unease.

Then there’s the other side of it: the institutions, the systems behind the systems.

They don’t operate on comfort. They operate on risk.

And risk, in their world, is not just about being wrong. It’s about being unable to explain why something was accepted or rejected. A zero-knowledge proof might say, “This is valid.” But what happens when someone asks, “Why?”

What happens when a regulator, or a manager, or a compliance officer needs more than just a yes or no?

Do they accept the boundary? Or do they start pushing against it?

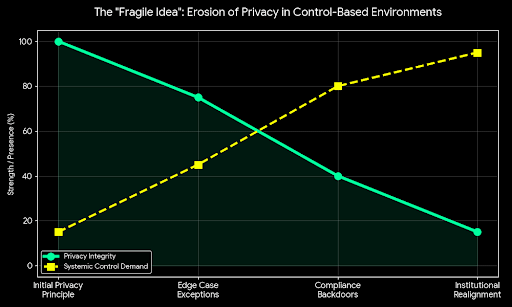

Because I’ve seen how these things usually go. Not in theory, but in practice. A system starts with strong principles: privacy, minimal exposure, clean verification. And then, slowly, exceptions begin to appear. Not because the system failed, but because the environment around it couldn’t fully accept it.

A little more visibility gets added “just in case.” A small override is introduced “for edge cases.” A backdoor of explanation appears “for compliance.”

Each step feels reasonable.

But together, they start to change the shape of the system.

And that’s the part that makes me pause.

Not because I think the idea is flawed, but because I’ve seen how fragile good ideas become when they enter environments built on control.

Still, I can’t shake the feeling that something about this matters deeply.

Because beneath all the complexity, all the hesitation, all the unanswered questions, there’s a very simple human desire sitting at the center of it.

The desire to exist without constantly proving yourself in ways that expose you.

The desire to be trusted without being fully seen.

The desire to participate without feeling like you’re slowly giving pieces of yourself away.

That’s not a technical problem. That’s a human one.

And maybe that’s why this doesn’t feel easy to resolve.

Because even if the technology gets it right (the proofs perfect, the systems secure, the design elegant), the real question remains: will people accept a kind of trust they cannot verify for themselves?

Or will they keep reaching for visibility, even when it costs them something they can’t get back?

I don’t have a clean answer for that.

I just remember my brother, staring at that screen, waiting for me to tell him what to do.

And I wonder what it would take for him to feel like he actually had a choice.

I want to believe systems like this are a step in that direction.

I’m just not sure yet whether they change the experience, or simply reshape the surrender in a quieter, more sophisticated way, especially in something like a zero-knowledge blockchain.