I’ve been paying attention to how verification actually feels in real situations, not how it’s supposed to work on paper. The more I look at it, the more it feels off, like something we all accepted without really questioning. Every time I try to prove something small, it somehow turns into me revealing way more than I intended. And I just go along with it, because that’s how every system seems to work.

At first, it doesn’t bother you.

You upload a document.

You share your details.

You pass the check.

Done.

But then it happens again somewhere else.

And again.

And again.

Same information.

Different platforms.

And you start to realize… none of it is really contained.

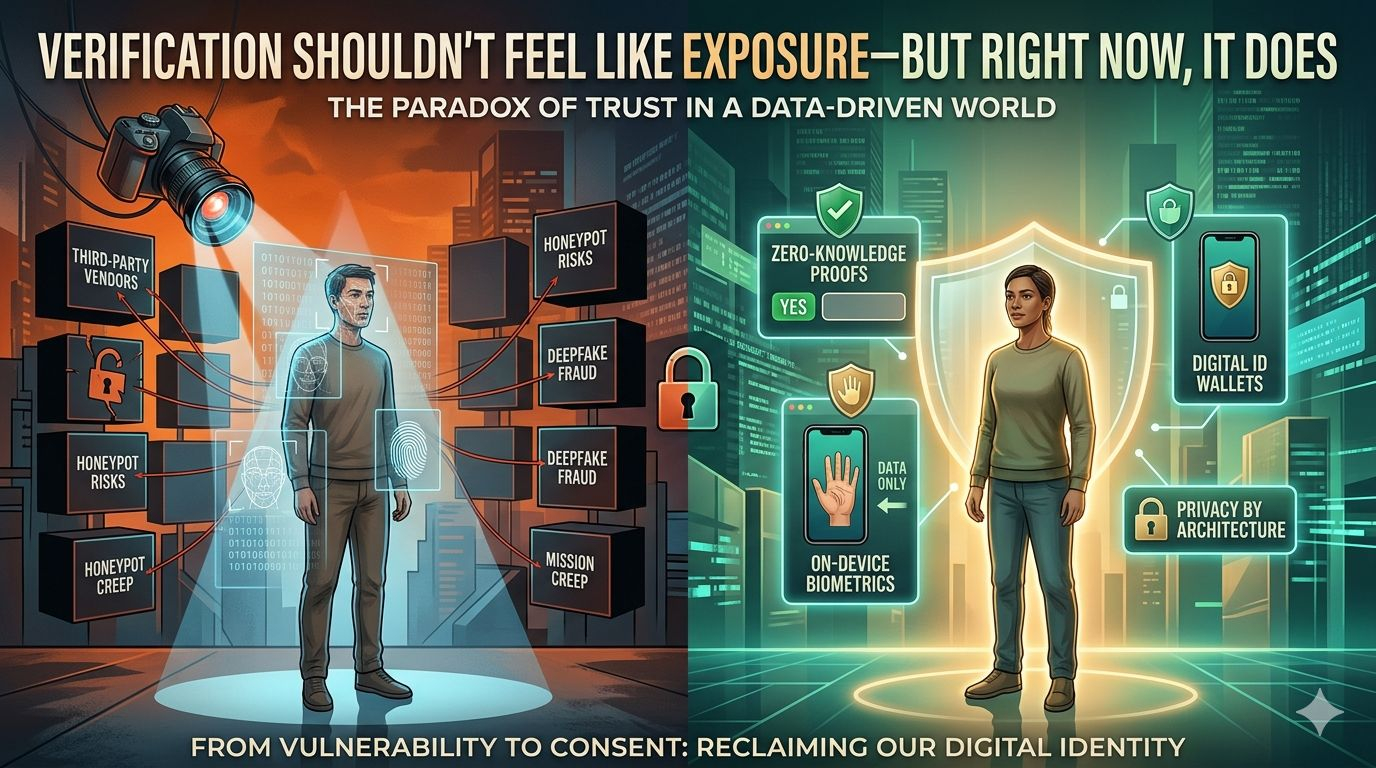

Your data isn’t just being used.

It’s being left behind.

Stored in places you don’t track.

Processed by systems you don’t see.

Sometimes copied into layers you didn’t even agree to.

Nothing breaks immediately.

That’s why it feels fine.

But slowly, it starts to feel heavier.

Like every “simple verification” is quietly expanding your exposure without you noticing.

And the strange part is, most of this isn’t even necessary.

That’s what keeps bothering me.

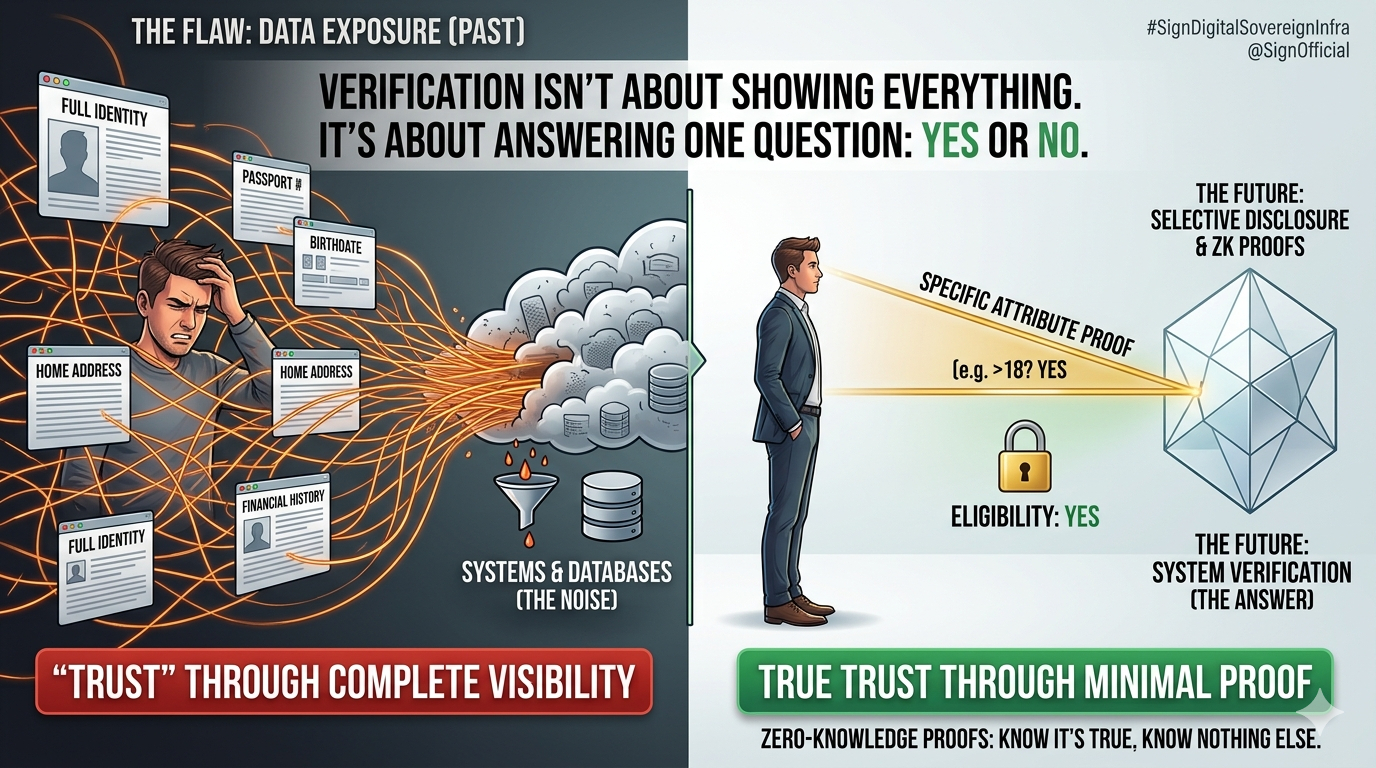

Because when you strip it down, verification isn’t about showing everything.

It’s about answering one question.

That’s it.

Are you eligible?

Yes or no.

Are you over a certain age?

Yes or no.

Do you meet the condition?

Yes or no.

But instead of just answering that, systems ask for the full story behind it.

Not because they need it.

But because that’s how they were built.

And we just adapted to it.

The more I think about it, the more it feels like a design flaw we normalized.

Because there’s a difference between proving something and exposing everything.

And right now, most systems don’t separate the two.

They treat visibility as a requirement for trust.

But it isn’t.

You don’t need to see all the data to know something is true.

You just need proof that it is.

That shift sounds simple, but it changes the whole experience.

Instead of handing over your identity, you prove one attribute.

Instead of exposing your history, you confirm one condition.

And nothing else moves.

That’s where things start to feel different.

Lighter.

More controlled.

Like you’re not losing something every time you pass a check.

And when I look at ideas like selective disclosure or zero-knowledge proofs, what stands out isn’t the tech… it’s the feeling.

You’re still being verified.

But you’re not being opened up.

The system gets what it needs.

But it doesn’t take what it doesn’t need.
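
To make that a little less abstract, here is a rough sketch of one way selective disclosure can work, using salted hashes. It is only an illustration under loud assumptions: the function names (`issue`, `present`, `verify`), the claim names, and the sample values are all made up, and the HMAC with a shared `ISSUER_KEY` is just a stand-in for a real issuer signature (a real system would use public-key signatures so the verifier never holds the issuer’s secret). It is also not a zero-knowledge proof, only the simpler idea of revealing one field while the rest stays hidden behind hashes.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical stand-in for an issuer's signing key. A real deployment would
# use an asymmetric signature so the verifier only needs a public key.
ISSUER_KEY = secrets.token_bytes(32)


def _digest(salt: str, name: str, value) -> str:
    """Hash one claim together with its random salt."""
    payload = json.dumps([salt, name, value]).encode()
    return hashlib.sha256(payload).hexdigest()


def _tag(digests: list) -> str:
    """Authenticate the list of digests (stand-in for a signature)."""
    body = json.dumps(digests).encode()
    return hmac.new(ISSUER_KEY, body, hashlib.sha256).hexdigest()


def issue(claims: dict) -> tuple[dict, dict]:
    """Issuer: commit to each claim separately and sign only the digests."""
    salts = {name: secrets.token_hex(16) for name in claims}
    digests = sorted(_digest(salts[n], n, v) for n, v in claims.items())
    credential = {"digests": digests, "tag": _tag(digests)}
    return credential, salts


def present(credential: dict, claims: dict, salts: dict, name: str) -> dict:
    """Holder: reveal exactly one claim and its salt, nothing else."""
    return {
        "credential": credential,
        "name": name,
        "value": claims[name],
        "salt": salts[name],
    }


def verify(presentation: dict) -> bool:
    """Verifier: check the issuer tag, then check the one revealed claim."""
    cred = presentation["credential"]
    if not hmac.compare_digest(_tag(cred["digests"]), cred["tag"]):
        return False
    revealed = _digest(
        presentation["salt"], presentation["name"], presentation["value"]
    )
    return revealed in cred["digests"]


# Made-up sample data, purely for illustration.
claims = {"name": "Alex Doe", "birth_date": "1990-04-12", "over_18": True}
credential, salts = issue(claims)
shown = present(credential, claims, salts, "over_18")
print(verify(shown))
```

The verifier ends up holding one answer, `over_18`, plus a few opaque hashes. The name and the birth date never leave the holder.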

That’s such a small shift on the surface.

But it fixes something deeper.

Because the real problem was never verification.

It was how much of you had to be exposed just to get through it.

And the more systems move into sensitive areas like identity, money, access… the less sustainable that model becomes.

People don’t push back immediately.

They adjust.

They tolerate.

Until one day it just feels like too much.

And when that happens, the system doesn’t break all at once.

It just becomes something people trust less, use less, rely on less.

Quietly.

That’s why this matters more than it looks.

Because good systems don’t make you feel like you’re giving something up every time you interact with them.

They take what they need.

And leave the rest untouched.

And maybe that’s the real shift here.

Not better verification.

Just less unnecessary exposure.