There’s something I used to believe was pretty straightforward:

whoever stakes more → has more influence.

And most of the time… that doesn’t end well.

Back in early 2025, we saw governance votes being steered by just a handful of large wallets. It didn’t take a crowd: a small group with enough tokens could shape the outcome. When capital is big enough, “truth” sometimes takes a back seat.

So when I first came across SIGN using staking to validate models, my initial reaction was:

“Here we go again — another playground for whales.”

But the deeper I looked, the more I realized the core mechanism here is actually different.

SIGN doesn’t use staking to vote on what’s right or wrong.

It uses staking as a financial commitment to belief.

You don’t just “support” a model — you put money behind it.

If the model is wrong? You pay for it. Not with reputation, but with real assets.

The key lies in one mechanism: slashing.

Without slashing, staking is just a formality.

With strong enough slashing, staking becomes accountability.

Think of it like co-signing a loan.

You’re not just saying “I trust this person” — you’re putting your own money at risk. If they fail, you take the hit.
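To make the idea concrete, here is a minimal sketch of stake-with-slashing. It is not SIGN’s actual contract logic: the `ModelPool` class, the `SLASH_FRACTION` and `REWARD_RATE` values, and the all-or-nothing `settle` step are assumptions I made up purely for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical parameters, not taken from SIGN's documentation.
SLASH_FRACTION = 0.5  # share of a stake lost when the backed model is judged wrong
REWARD_RATE = 0.1     # payout on a stake when the backed model is judged right


@dataclass
class ModelPool:
    """Tracks how much each staker has committed to a single model."""
    stakes: dict[str, float] = field(default_factory=dict)

    def stake(self, staker: str, amount: float) -> None:
        if amount <= 0:
            raise ValueError("stake must be positive")
        self.stakes[staker] = self.stakes.get(staker, 0.0) + amount

    def settle(self, model_was_correct: bool) -> None:
        # Everyone who backed the model shares its fate: reward or slash.
        factor = 1 + REWARD_RATE if model_was_correct else 1 - SLASH_FRACTION
        for staker in self.stakes:
            self.stakes[staker] *= factor


pool = ModelPool()
pool.stake("alice", 100.0)
pool.settle(model_was_correct=False)
print(pool.stakes["alice"])  # 50.0: half of the stake is gone
```

Set `SLASH_FRACTION` to 0 and the stake stops meaning anything. That is the “formality” case.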

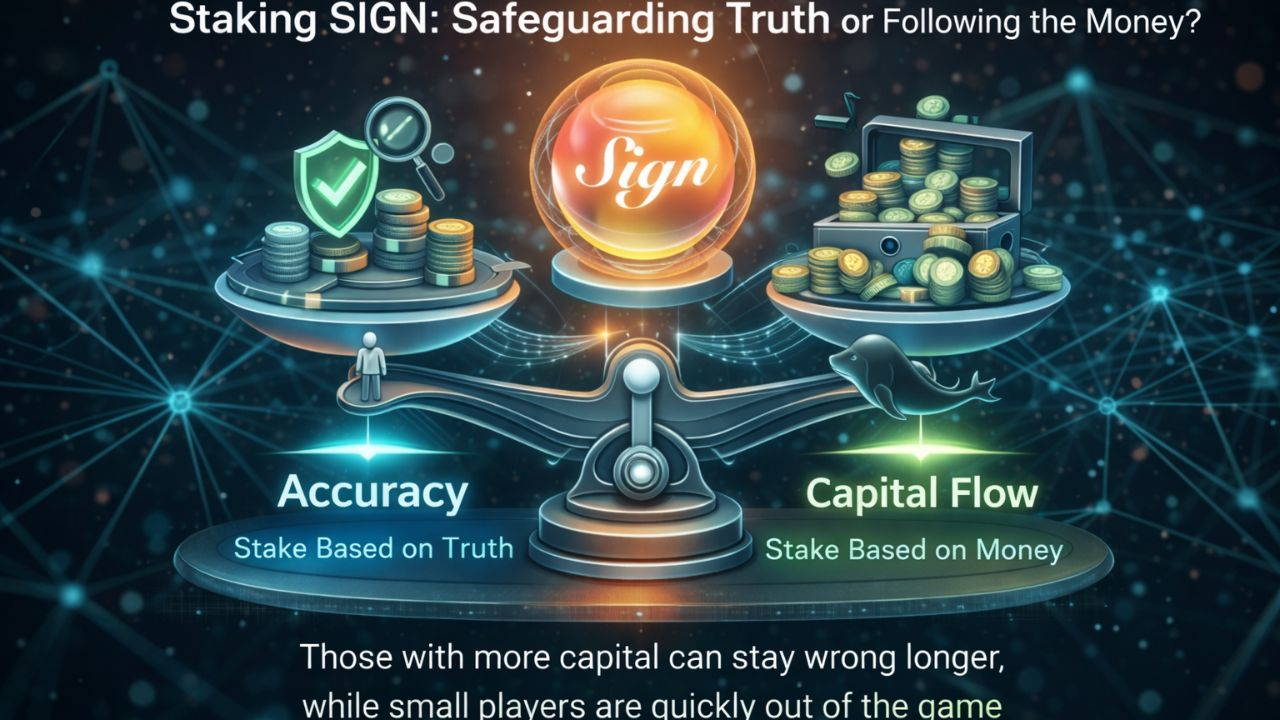

⚖️ SIGN sits between two extremes that have already failed

No staking → open participation → spam & Sybil attacks

Heavy staking → whale dominance → centralization

SIGN tries to balance both:

👉 Stake to create responsibility

👉 Keep the system open for multiple models to compete

Sounds great in theory. But reality is messier.

⚠️ Problems start to appear in practice

Two likely scenarios:

1. Whales shape “truth”

A model gets heavily staked — not necessarily because it’s right, but because it has strong capital backing. Over time, it becomes the default standard.

2. Fragmentation

Smaller participants spread their stake across many models → too many options, but none strong enough to build consensus.

Neither outcome is ideal.

One leads to soft centralization, the other to chaos.

🧠 The most important insight

In this system:

It’s not that those who stake more are more correct.

It’s that they can afford to be wrong longer.

Large holders can keep backing a flawed model for extended periods.

Smaller players? One mistake and they’re out.
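A bit of toy arithmetic shows how wide that gap gets. This assumes a simplified setup that is mine, not SIGN’s: every round a staker commits a fixed `stake_per_round`, loses `slash_fraction` of it when wrong, and drops out once the balance can no longer cover one full stake.

```python
def wrong_rounds_survived(balance: float, stake_per_round: float,
                          slash_fraction: float) -> int:
    """Count consecutive wrong rounds a staker can fund before dropping out.

    Hypothetical model: a fixed stake each round, slashed by a fixed fraction.
    """
    if stake_per_round <= 0 or not 0 < slash_fraction <= 1:
        raise ValueError("need a positive stake and a slash fraction in (0, 1]")
    rounds = 0
    while balance >= stake_per_round:
        balance -= stake_per_round * slash_fraction  # loss from one wrong round
        rounds += 1
    return rounds


small = wrong_rounds_survived(balance=100, stake_per_round=100, slash_fraction=0.5)
whale = wrong_rounds_survived(balance=10_000, stake_per_round=100, slash_fraction=0.5)
print(small, whale)  # 1 199
```

Same stake size, same slashing rule, same mistake. The only difference is how many times each side can repeat it.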

And from here, a subtle but dangerous shift happens:

👉 People start staking based on capital flow

👉 Not based on accuracy

At that point, the system no longer reflects truth. It reflects where the money is going.

🚧 What SIGN needs to address

For this model to truly work, a few things are critical:

Limit the influence of large stakes per model

Increase the speed and severity of slashing

Introduce weighting based on historical accuracy

Reduce the ability for capital to “sustain being wrong”
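Two of these ideas, the per-model cap and accuracy weighting, can be sketched in a few lines. Treat this as one possible shape, not SIGN’s design: the `effective_weight` function, the `cap` value, and the `accuracy` score (a historical hit rate between 0 and 1) are all hypothetical.

```python
def effective_weight(stake: float, accuracy: float, cap: float) -> float:
    """Influence a staker gets on one model (hypothetical formula).

    Stake above `cap` adds nothing, and the capped stake is scaled by the
    staker's historical accuracy in the range [0, 1].
    """
    if not 0 <= accuracy <= 1:
        raise ValueError("accuracy must be between 0 and 1")
    return min(stake, cap) * accuracy


CAP = 10_000  # assumed per-model ceiling on counted stake

whale = effective_weight(stake=1_000_000, accuracy=0.5, cap=CAP)
small = effective_weight(stake=8_000, accuracy=0.75, cap=CAP)
print(whale, small)  # 5000.0 6000.0
```

Here a staker with 125x less capital but a better track record ends up with more say. A cap like this only holds if large holders cannot split their stake across many wallets, so it does not solve the Sybil problem by itself.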

🎯 Conclusion

At first, I thought staking was about power.

But looking closer, it feels more like high-risk insurance.

You take a side with your capital.

If you’re right → you earn

If you’re wrong → you pay

But here’s the catch:

those with more money can always afford more “insurance.”

👉 SIGN succeeds if:

The cost of being wrong is high and lands fast

👉 SIGN fails if:

Large capital can afford to stay wrong for too long

And once you see it this way,

it’s hard to look at staking as just “belief” ever again.

@SignOfficial #SignDigitalSovereignInfra $SIGN