Sign Protocol is one of those projects I didn’t take seriously at first.

I’ve seen this pattern too many times. Take a vague problem—trust, identity, verification—wrap it in clean language, add some cryptography, and call it infrastructure. Most of it doesn’t survive contact with real systems. It either becomes niche or quietly disappears when things get complicated.

Still, I kept looking at this one. Not because of the branding, but because the problem it’s pointing at is real—and it’s worse than most people admit.

I’ve worked around systems that execute perfectly and still fail completely. Payments go through. Access gets granted. State changes happen exactly as designed. No errors. No downtime. Everything looks healthy.

Then someone asks for proof.

That’s where it breaks.

Not because the data doesn’t exist, but because it doesn’t hold together. Logs are scattered. Context is missing. Decisions can’t be reconstructed cleanly. You end up stitching together partial records and hoping nobody asks deeper questions.

It’s a mess.

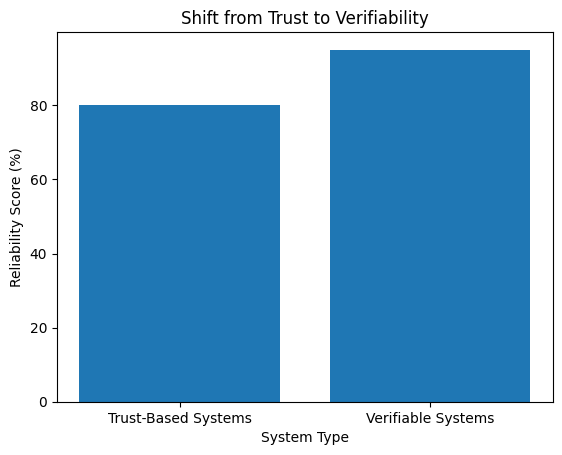

The industry likes to talk about trust as if it’s something you can engineer directly. You can’t. What you can do is make systems that explain themselves—clearly, consistently, and without relying on someone’s memory or authority.

Most systems don’t do that.

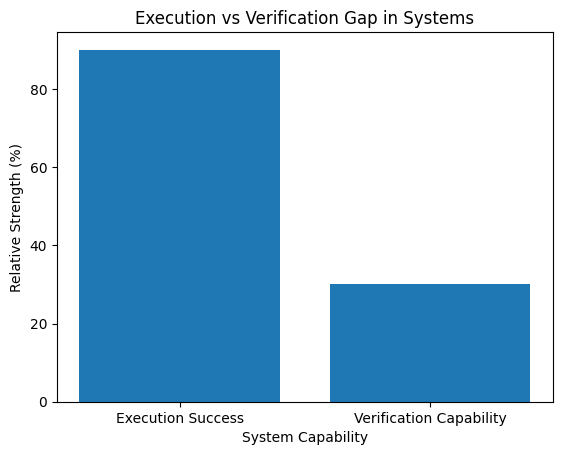

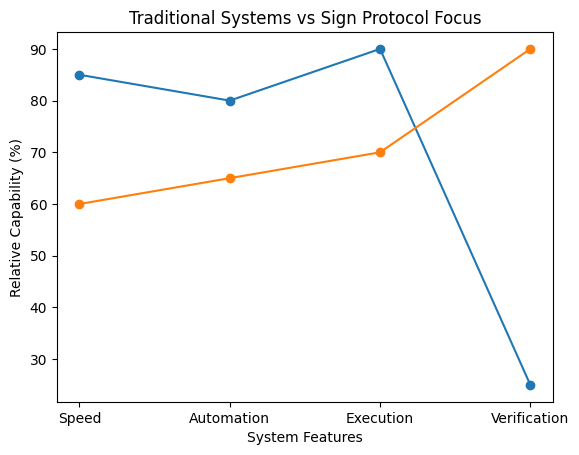

They’re built to run, not to justify their behavior later. And those are two very different requirements.

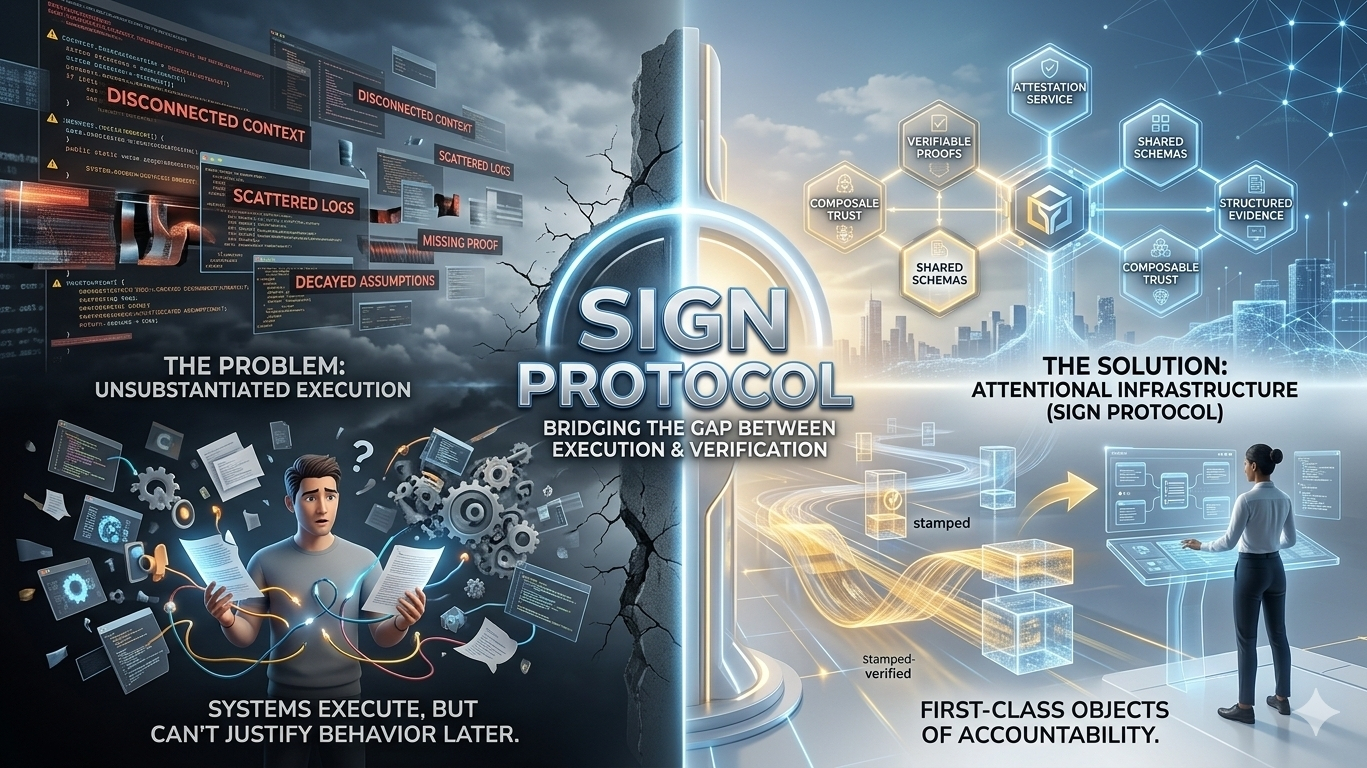

What Sign Protocol is trying to do is narrow that gap. Not by adding another application layer, but by forcing structure onto something that’s usually left loose: evidence.

Every action becomes something you can attest to. Not just “this happened,” but “this happened under these conditions, verified by this party, using this schema.” That last part matters more than people think. Without structure, data is just noise.
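To make that concrete, here is a minimal Python sketch of what such a record might contain. It is not Sign Protocol's actual data model or API. The field names, the `access-grant-v1` schema ID, and the demo key are all made up for illustration, and HMAC stands in for the public-key signature a real system would use.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Attestation:
    schema_id: str   # which shared schema the data follows (hypothetical ID)
    attester: str    # the party vouching for the claim
    subject: str     # what or whom the claim is about
    data: dict       # the claim itself, including the conditions it was made under
    issued_at: int   # Unix timestamp


def canonical_bytes(att: Attestation) -> bytes:
    # Sorted keys and fixed separators so identical content always serializes identically.
    return json.dumps(asdict(att), sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign(att: Attestation, key: bytes) -> str:
    # Assumption: HMAC-SHA256 as a stand-in for a real digital signature scheme.
    return hmac.new(key, canonical_bytes(att), hashlib.sha256).hexdigest()


def verify(att: Attestation, signature: str, key: bytes) -> bool:
    # Constant-time comparison of the recomputed tag against the supplied one.
    return hmac.compare_digest(sign(att, key), signature)


key = b"demo-key"  # illustrative only
att = Attestation(
    schema_id="access-grant-v1",
    attester="ops-team",
    subject="user-123",
    data={"role": "admin", "policy": "policy-7"},
    issued_at=1700000000,
)
sig = sign(att, key)

# Changing any field, including the conditions, invalidates the signature.
tampered = Attestation(att.schema_id, att.attester, att.subject,
                       {"role": "owner", "policy": "policy-7"}, att.issued_at)
print(verify(att, sig, key), verify(tampered, sig, key))
```

The point of the sketch is that the conditions and the schema reference are part of what gets signed, so they cannot be quietly reinterpreted later.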

I’ve seen teams assume logs are enough. They aren’t. Logs tell you that something happened. They rarely tell you whether it should have happened.

That distinction causes problems everywhere.

Token distributions are a good example. On paper, they’re straightforward. Define eligibility, execute distribution, move on. In reality, I’ve seen disputes weeks later because nobody could clearly prove why certain wallets qualified and others didn’t. The system executed fine. The logic didn’t hold up under scrutiny.
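One way to avoid that kind of dispute is to record the rule, the inputs, and the reason alongside every decision. The sketch below is a toy in Python. The rule ID, the thresholds, and the wallet addresses are invented for the example and do not describe any real distribution.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EligibilityRecord:
    wallet: str
    rule_id: str    # hypothetical identifier for the rule version that was applied
    inputs: dict    # the facts the rule actually looked at
    eligible: bool
    reason: str     # human-readable explanation of the outcome


RULE_ID = "airdrop-rule-v1"  # made-up name for illustration


def evaluate(wallet: str, balance: int, first_seen_block: int,
             min_balance: int = 100, snapshot_block: int = 1_000_000) -> EligibilityRecord:
    # Capture every input so the decision can be re-derived later without the original system.
    inputs = {
        "balance": balance,
        "first_seen_block": first_seen_block,
        "min_balance": min_balance,
        "snapshot_block": snapshot_block,
    }
    if first_seen_block > snapshot_block:
        return EligibilityRecord(wallet, RULE_ID, inputs, False, "wallet first seen after snapshot")
    if balance < min_balance:
        return EligibilityRecord(wallet, RULE_ID, inputs, False, "balance below minimum")
    return EligibilityRecord(wallet, RULE_ID, inputs, True, "met all conditions")


records = [
    evaluate("0xabc", balance=150, first_seen_block=900_000),
    evaluate("0xdef", balance=50, first_seen_block=900_000),
    evaluate("0x123", balance=500, first_seen_block=1_200_000),
]
for r in records:
    print(r.wallet, r.eligible, r.reason)
```

Weeks later, the question "why did this wallet not qualify?" has an answer that does not depend on anyone's memory.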

Same story with identity systems. KYC gets done, but it doesn’t travel well. Every platform redoes it because there’s no shared, verifiable layer of proof. You end up with duplicated effort and inconsistent standards.

Audits are another one. A report says something was reviewed. Maybe it was. Maybe it wasn’t thorough. There’s usually no structured, machine-verifiable trail that shows what was actually checked.

Again, execution isn’t the problem. Verification is.

Sign Protocol’s approach is to treat attestations as first-class objects: structured, queryable, and anchored so that they outlive the system that produced them. That’s the part I find interesting. Not the idea of signing data, which we’ve had for years, but the insistence on making it usable later.

Because that’s where most designs fall apart.

They assume the system boundary is permanent. It isn’t. Data moves. Systems integrate. Teams change. Months later, someone new needs to understand what happened, and the original context is gone.

If the evidence isn’t structured properly, it’s effectively lost—even if it technically still exists.

I like that Sign leans into schemas. It’s not exciting, but it’s necessary. Shared structure is the only way different systems can interpret the same piece of data without ambiguity. Otherwise, you’re back to custom logic and implicit assumptions.
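As a rough illustration of why shared structure matters, here is a small Python sketch of schema checking. The schema format and the field names (`wallet`, `kyc_level`, `verified`) are assumptions for the example and not Sign Protocol's schema definition language.

```python
# A hypothetical shared schema: field name -> required Python type.
KYC_SCHEMA = {"wallet": str, "kyc_level": int, "verified": bool}


def validate(data: dict, schema: dict) -> list:
    """Return a list of problems; an empty list means the data matches the schema."""
    errors = []
    for field, expected in schema.items():
        if field not in data:
            errors.append(f"missing field: {field}")
        elif type(data[field]) is not expected:
            # Exact type check, so a bool is not silently accepted where an int is required.
            errors.append(f"wrong type for {field}: expected {expected.__name__}")
    for field in data:
        if field not in schema:
            errors.append(f"unexpected field: {field}")
    return errors


good = {"wallet": "0xabc", "kyc_level": 2, "verified": True}
bad = {"wallet": "0xabc", "kyc_level": "2", "note": "checked manually"}

print(validate(good, KYC_SCHEMA))
print(validate(bad, KYC_SCHEMA))
```

Any system holding the same schema reaches the same verdict on the same data, which is exactly what custom logic and implicit assumptions fail to guarantee.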

And assumptions don’t scale.

There’s also a practical angle here that people tend to overlook. As systems get more interconnected—across chains, services, and jurisdictions—the cost of blind trust goes up. You can’t rely on reputation when everything is composable and loosely coupled.

You need something you can verify independently.

Not “we checked this.”

Not “this is compliant.”

Actual evidence you can inspect.

That’s a higher bar than most systems are built for.

I don’t think Sign Protocol magically solves this. No single layer does. The reality is messier. Adoption is hard. Standards take time. And most teams won’t prioritize this until they’re forced to—usually after something goes wrong.

But the direction makes sense.

If anything, the industry has spent too long optimizing for execution speed while treating verification as an afterthought. That imbalance is starting to show. More complexity, more integrations, more scrutiny—and the same weak audit trails underneath.

At some point, that stops being acceptable.

What I see in Sign Protocol isn’t a finished answer. It’s a push toward making systems accountable in a way they currently aren’t. Less reliance on trust, more emphasis on verifiable context.

That’s not flashy. It doesn’t demo well. But it’s the kind of thing that quietly becomes essential once systems mature.

And if you’ve ever had to explain a system’s behavior weeks after the fact, with incomplete logs and too many assumptions, you already know why this matters.
