I used to believe that if systems were transparent enough, trust would follow naturally.

It felt intuitive: put everything on chain, make it verifiable, remove ambiguity, and the system would take care of itself. For a while, I leaned into that idea. Transparency looked like the cleanest solution to broken coordination and weak accountability.

But over time, something didn’t hold.

The most transparent systems weren’t always the most usable. In some cases, they were the least adopted. Users hesitated to engage, institutions avoided deeper integration, and participation often remained shallow: visible, but not sustained.

That’s where the discomfort started to build.

When I stepped back, the issue wasn’t transparency itself, it was how rigidly it was being applied.

Most architectures treated data in extremes. Either everything was pushed on chain for maximum visibility, or everything stayed off chain under opaque control. There was very little nuance in how information was structured, accessed, or verified.

And that created a quiet friction.

Users don’t naturally want permanent exposure of their activity. Institutions can’t operate under full public visibility due to compliance and operational constraints. At the same time, fully off-chain systems often lack credibility because verification depends on trust rather than proof.

So what we ended up with were systems that looked decentralized but behaved selectively centralized. Interfaces controlled access, auditability was inconsistent, and privacy often meant obscurity instead of intentional design.

The ideas sounded important, but they didn’t translate into practice.

At some point, I stopped focusing on whether a system was “more decentralized” or “more transparent.”

Instead, I started asking a different question:

Does this system reduce friction, or does it quietly introduce more of it?

That shift moved my attention from architecture to behavior. I began observing how systems were actually used: whether interactions repeated, whether integrations deepened, whether users needed to think about the system at all.

The systems that worked didn’t demand awareness. They operated in the background, embedding themselves into workflows without forcing users to constantly manage exposure, permissions, or verification.

That’s when my perspective started to change.

When I came across @SignOfficial and its $SIGN framework, it didn’t immediately feel like a breakthrough.

If anything, at first, it felt understated.

But upon reflection, what stood out wasn’t what it claimed, it was the question it quietly introduced:

Can a system be both verifiable and private, without forcing users to choose between the two?

That question sits at the center of most real-world systems, not just in crypto.

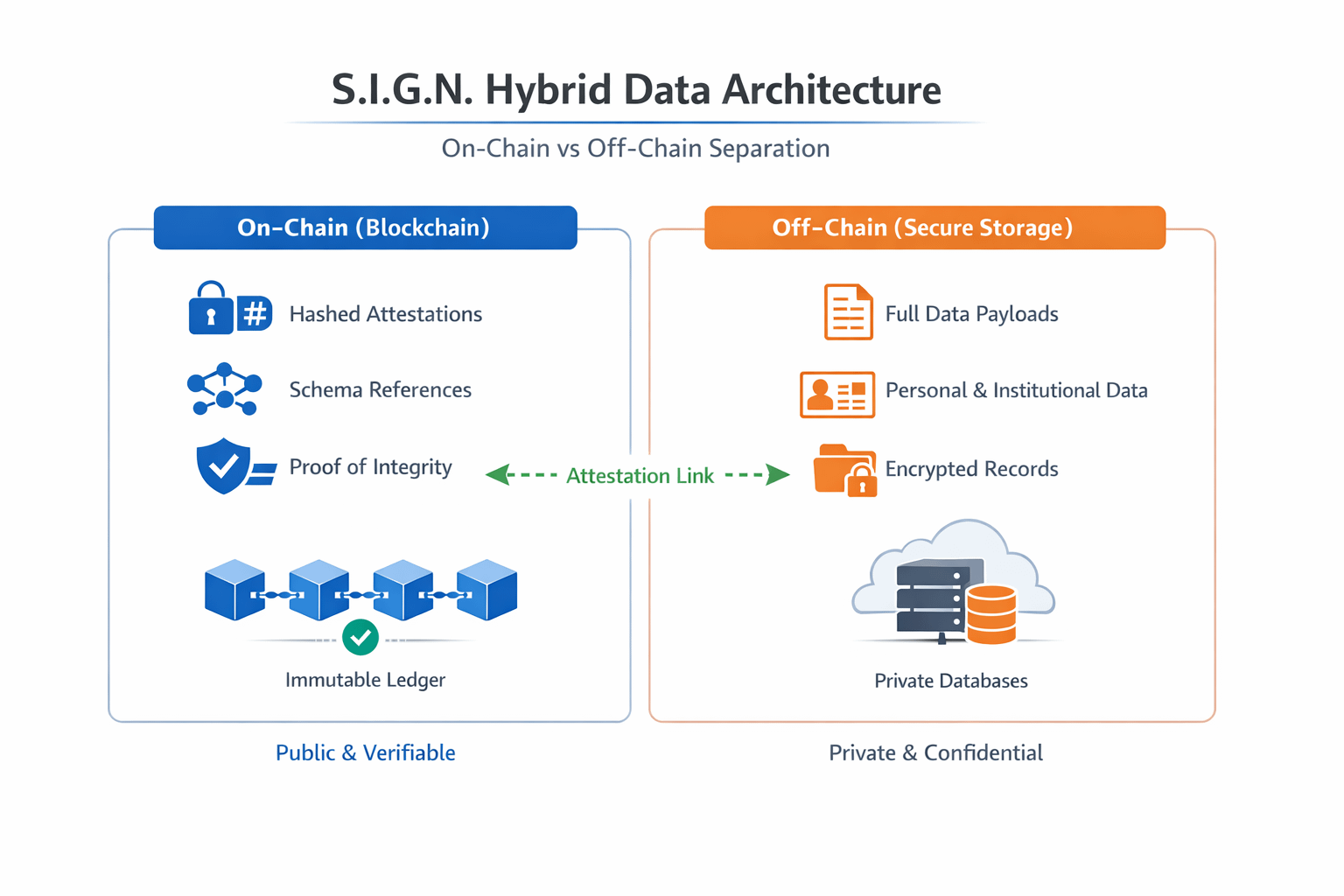

The #SignDigitalSovereignInfra security and privacy model doesn’t try to maximize transparency or privacy in isolation. Instead, it separates where each one belongs.

Not all data needs to be on chain. And not all verification requires exposure.

In this model, attestations (structured proofs of claims) are anchored on chain in a minimal form. What is stored publicly are verifiable references: hashes, schema-linked records, and integrity proofs that ensure data has not been altered.

The underlying data (the sensitive context, personal details, or institutional records) remains off chain.

But importantly, it doesn’t disappear. It becomes selectively accessible.

What makes this design practical is how it structures information.

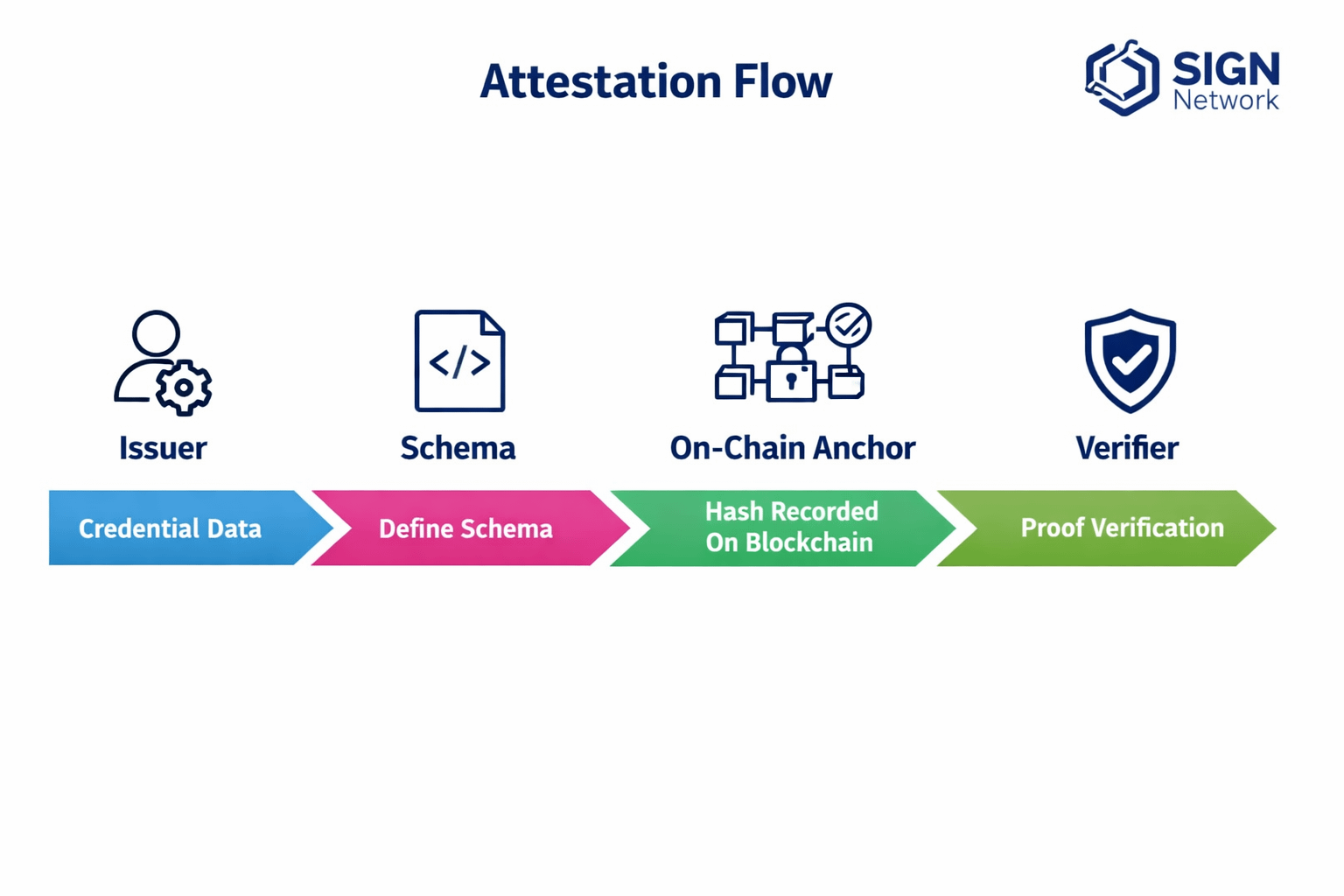

Attestations are defined through schemas. These schemas standardize what a claim represents, how it is issued, and how it can be interpreted across systems. This allows attestations to be reused rather than recreated, forming a consistent layer of verifiable context.

On chain, the system maintains cryptographic hashes of attestations, schema references, and proof of issuance and integrity. This creates a verifiable anchor without exposing raw data.

Off chain, it maintains full data payloads, sensitive attributes, and contextual records linked to identity.
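To make that split concrete, here is a minimal sketch of the idea, not Sign's actual implementation. The two dictionaries stand in for the public and private layers, and every name in it (`issue`, `verify_integrity`, `kyc-v1`, `att-1`) is a hypothetical I made up for illustration. The only assumption is that the public anchor is a SHA-256 hash over a canonical JSON encoding of the payload.

```python
import hashlib
import json


def canonical_hash(payload: dict) -> str:
    """Hash a payload deterministically (sorted keys, compact separators)."""
    encoded = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


# Hypothetical stand-ins for the two storage layers.
on_chain = {}   # public: schema reference and payload hash only
off_chain = {}  # private: full payloads


def issue(attestation_id: str, schema_id: str, payload: dict) -> None:
    """Keep the raw payload off chain and publish only a verifiable anchor."""
    off_chain[attestation_id] = payload
    on_chain[attestation_id] = {
        "schema_id": schema_id,
        "payload_hash": canonical_hash(payload),
    }


def verify_integrity(attestation_id: str, disclosed_payload: dict) -> bool:
    """Check a selectively disclosed payload against the public anchor."""
    return on_chain[attestation_id]["payload_hash"] == canonical_hash(disclosed_payload)


issue("att-1", "kyc-v1", {"name": "Alice", "country": "AE"})
print(verify_integrity("att-1", {"country": "AE", "name": "Alice"}))  # True
print(verify_integrity("att-1", {"name": "Alice", "country": "SG"}))  # False
```

The public record never contains the name or country, yet anyone who is later shown the payload can confirm it is the one that was attested.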

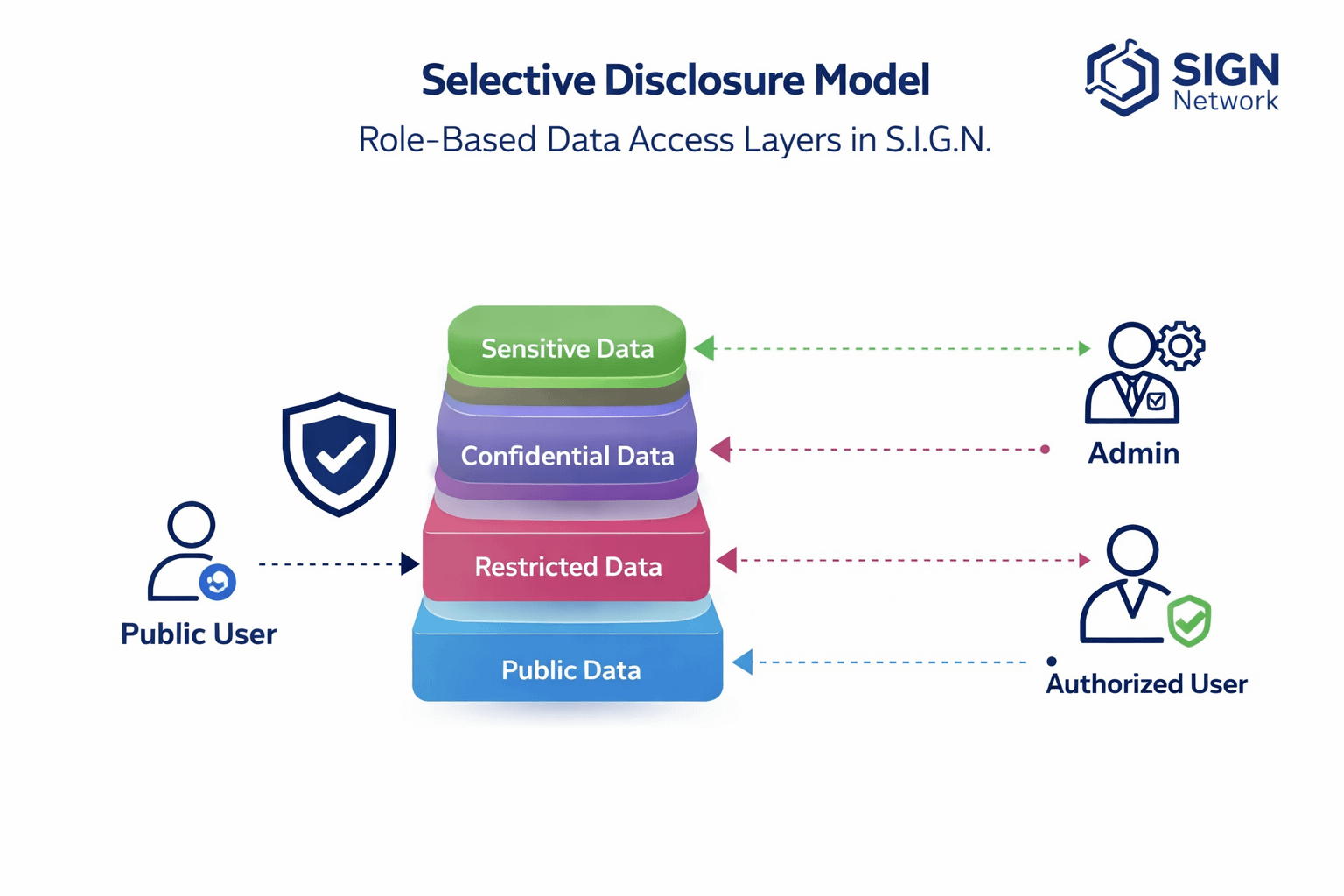

Access to this data is not public by default. It is controlled.

Permissions are tied to identity and role, allowing authorized parties, such as auditors, institutions, or counterparties, to access underlying data when required. This enables lawful auditability without forcing universal disclosure.

Each attestation remains traceable and non-repudiable. Actions can be verified, origins can be validated, and audit trails can be reconstructed without exposing sensitive information to the public layer.
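A rough way to picture role-based access is a private store that checks the requester's role before releasing a payload and logs every attempt. This is only a sketch under my own assumptions: the `Store` and `Record` classes, the role names, and the log format are all hypothetical and not taken from Sign's documentation.

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    payload: dict
    allowed_roles: set = field(default_factory=set)


@dataclass
class Store:
    """Hypothetical off-chain store with role-gated reads and an access log."""
    records: dict = field(default_factory=dict)
    access_log: list = field(default_factory=list)

    def read(self, attestation_id: str, requester: str, role: str) -> dict:
        record = self.records[attestation_id]
        granted = role in record.allowed_roles
        # Log every attempt, granted or not, so access itself is auditable.
        self.access_log.append((requester, role, attestation_id, granted))
        if not granted:
            raise PermissionError(f"role '{role}' may not read {attestation_id}")
        return record.payload


store = Store()
store.records["att-1"] = Record({"name": "Alice"}, allowed_roles={"auditor"})

print(store.read("att-1", requester="audit-firm", role="auditor"))  # {'name': 'Alice'}
try:
    store.read("att-1", requester="anyone", role="public")
except PermissionError as err:
    print("denied:", err)
```

The design choice worth noticing is that denial is the default: a role sees nothing unless it was explicitly listed for that record.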

Trust, in this model, is not just about data, it’s about who issues it.

Issuers create attestations based on defined schemas, and their credibility becomes part of the system. Verifiers rely on both the integrity of the data and the reputation of the issuer. This separation introduces a more realistic trust model, one that mirrors how institutions already operate.
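In code terms, a verifier's decision has two independent checks: does the data match its anchor, and is the issuer one this verifier accepts for this schema? The sketch below is illustrative only. The `TRUSTED_ISSUERS` registry, the issuer names, and the `accept` function are hypothetical, and the issuer is a plain string here, whereas a real system would authenticate it with a cryptographic signature.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    schema_id: str
    issuer: str        # assumption: already authenticated elsewhere (e.g. by signature)
    payload_hash: str


# Hypothetical per-verifier policy: which issuers are credible for which schema.
TRUSTED_ISSUERS = {
    "kyc-v1": {"bank-a", "regulator-x"},
}


def accept(att: Attestation, expected_hash: str) -> bool:
    """Accept only if both the data integrity and the issuer check pass."""
    integrity_ok = att.payload_hash == expected_hash
    issuer_ok = att.issuer in TRUSTED_ISSUERS.get(att.schema_id, set())
    return integrity_ok and issuer_ok


good = Attestation("kyc-v1", "bank-a", "abc123")
unknown_issuer = Attestation("kyc-v1", "random-site", "abc123")

print(accept(good, "abc123"))            # True
print(accept(unknown_issuer, "abc123"))  # False: intact data, untrusted issuer
print(accept(good, "tampered"))          # False: trusted issuer, hash mismatch
```

Intact data from an unknown issuer is rejected just as firmly as altered data from a known one, which is the separation the model depends on.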

Security also extends beyond the protocol itself. It depends on how operators manage keys, enforce access controls, and structure data flows. The system provides the framework, but its reliability depends on disciplined implementation.

What I found interesting is that this model doesn’t feel entirely new, it feels familiar.

Financial systems validate transactions without exposing every detail. Identity systems confirm credentials without revealing full personal data. Audit systems allow selective access rather than permanent visibility.

Sign follows the same logic, but replaces institutional trust with cryptographic guarantees and portable attestations.

What changes isn’t just infrastructure, it’s interaction.

Users don’t need to repeatedly prove the same information. Institutions don’t need to over-collect data. Systems can interoperate through shared verification rather than repeated disclosure.

This approach aligns with a broader shift in digital systems.

Trust is no longer built through visibility alone. In many environments, excessive transparency reduces participation rather than increasing it. People are more aware of data exposure, and institutions are under pressure to balance access with responsibility.

At the same time, regions building new digital infrastructure, particularly in the Middle East and parts of Asia, require systems that support both compliance and scale. These systems cannot rely on full transparency or full opacity. They require structured disclosure.

A model that separates verification from exposure fits naturally into this direction.

Not because it is novel, but because it is compatible with how real systems function.

Markets tend to reward visibility: metrics, announcements, and surface-level activity.

But systems like Sign operate differently. Their value is not in being seen, but in being used repeatedly, quietly, and across contexts.

There is a gap between attention and utility.

Markets price expectations. Infrastructure earns relevance through integration, repetition, and dependency.

And those signals emerge slowly.

Even with strong design, adoption is not guaranteed.

If identity is not embedded into real workflows, it remains optional. If attestations are not reused across applications, they remain isolated events. If schemas are not standardized or adopted widely, composability weakens.

There is also the problem of thresholds.

A single interaction rarely justifies structured verification. But repeated interactions across services, institutions, and ecosystems create compounding value.

If that threshold of repeated usage is not reached, the system risks remaining technically sound but practically underutilized.

What this framework ultimately made me reconsider is the relationship between systems and behavior.

Technology does not create trust on its own. It creates conditions under which trust can emerge.

Privacy is not just protection, it is confidence in participation.

Transparency is not just visibility, it is accountability with boundaries.

Balancing the two is not about maximizing features. It is about aligning systems with how people actually interact.

At this point, I look for different signals.

Not announcements, but integrations.

Not one-time usage, but repeated interaction across contexts.

Not theoretical capabilities, but systems that quietly become part of infrastructure.

If applications begin requiring identity-linked attestations, if issuers establish credibility over time, if verifiers rely on these attestations consistently, and if operators sustain secure and disciplined environments, those are the signals that matter.

Everything else is secondary.

I no longer think transparency alone builds trust.

And I no longer think privacy alone protects it.

The systems that last are the ones that structure both, carefully, deliberately, and without demanding attention.

Because the difference between an idea that sounds necessary and infrastructure that becomes necessary is simple:

It gets used again.