I’ve started to notice a pattern in myself whenever a new infrastructure project shows up claiming to “fix trust.” I stop paying attention. Not immediately, but gradually, like slowly closing a tab I never meant to open in the first place. It’s not that I think the problems aren’t real. It’s that I’ve heard the language too many times. Everything promises to unify, to streamline, to eliminate friction. And yet, in practice, friction just relocates. It doesn’t disappear.

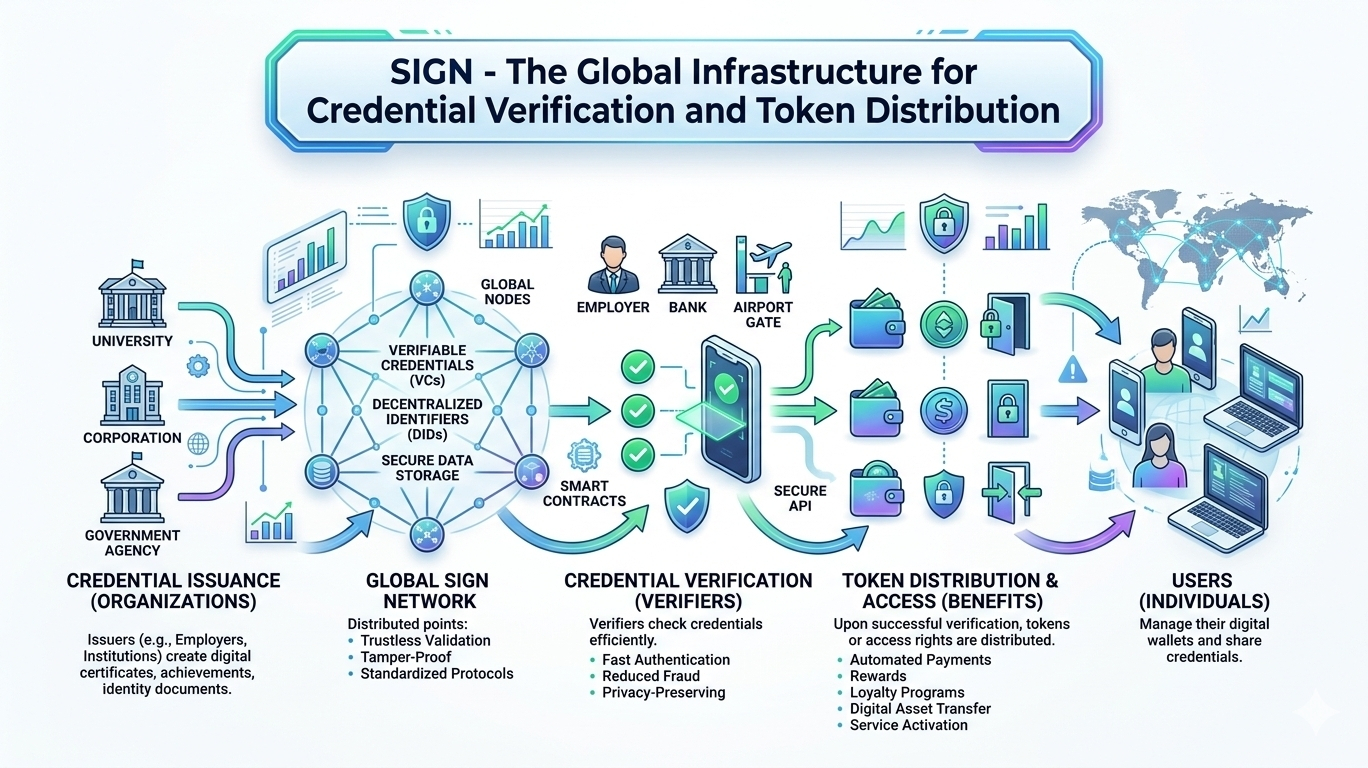

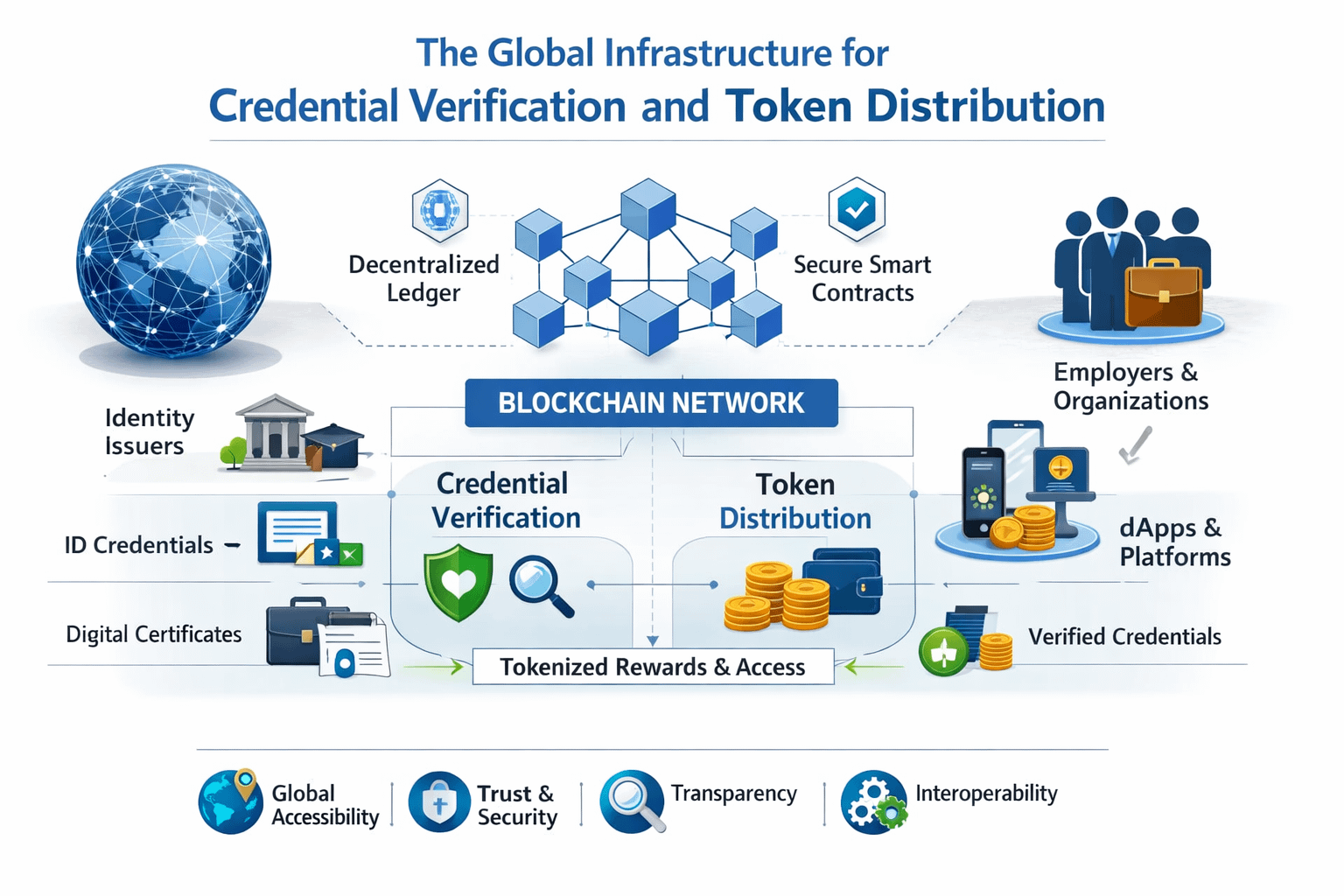

So when I first came across something like SIGN, positioned as infrastructure for credential verification and token distribution, I instinctively categorized it the same way. Another attempt to standardize trust. Another layer that assumes the problem is lack of verification, or lack of data integrity, or lack of coordination between systems. I didn’t think much of it.

But then I started thinking about something more mundane. A document I had to submit more than once. The same identity proof, verified by one authority, then rejected or rechecked by another—not because it was incorrect, but because it didn’t “fit” the second system’s expectations. It wasn’t legible in the same way. The truth hadn’t changed. Only the context had.

That’s where the friction actually lives, I think. Not in whether something is true, but in whether that truth can travel.

And that’s where my attention shifted, almost reluctantly. Because what I began to notice isn’t that systems fail to verify things. They do that constantly, often redundantly. The real failure is that each system seems to require its own interpretation of truth. Verification becomes localized. Meaning becomes trapped.

I started looking again at what these newer infrastructures are trying to do, and something felt slightly different. Not in what they claim, but in how they frame the problem. The language around attestations, for example, kept coming up. Not just signatures, not just proofs, but structured statements an issuer makes about a subject, anchored in a way that other systems can reference.

It sounds subtle, maybe even semantic. But it began to feel like a shift from proving something once, to making that proof usable elsewhere without losing its shape.
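To make that shift a little more concrete for myself, here is a minimal sketch of what "a statement that keeps its shape" could look like. None of this is SIGN's actual format or API. The field names (`schema`, `issuer`, `subject`, `claim`), the `authority-a` issuer, and the shared-key HMAC signature are all assumptions I made up for illustration. A real system would almost certainly use asymmetric signatures and a published schema registry.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, asdict

# Hypothetical issuer key store. A shared secret is used only to keep the
# sketch stdlib-only; real attestations would use public-key signatures.
ISSUER_KEYS = {"authority-a": b"demo-secret-not-for-production"}


@dataclass(frozen=True)
class Attestation:
    schema: str      # names the shared meaning of the claim fields
    issuer: str      # who is making the statement
    subject: str     # who or what the statement is about
    claim: dict      # the statement itself
    signature: str = ""


def _payload(att: Attestation) -> bytes:
    """Canonical bytes of everything except the signature."""
    body = asdict(att)
    body.pop("signature")
    return json.dumps(body, sort_keys=True).encode()


def issue(schema: str, issuer: str, subject: str, claim: dict) -> Attestation:
    """Sign the statement once, at the point of verification."""
    unsigned = Attestation(schema, issuer, subject, claim)
    sig = hmac.new(ISSUER_KEYS[issuer], _payload(unsigned), hashlib.sha256)
    return Attestation(schema, issuer, subject, claim, sig.hexdigest())


def verify(att: Attestation) -> bool:
    """Any system holding the issuer's key can check the same object."""
    key = ISSUER_KEYS.get(att.issuer)
    if key is None:
        return False
    expected = hmac.new(key, _payload(att), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, att.signature)


att = issue("identity/age-check", "authority-a", "user-123", {"over_18": True})
print(verify(att))  # a second system checks the original, no re-issuing
```

The point of the sketch is the `schema` field as much as the signature: the signature says the statement is intact, while the schema is what lets a second system read it the same way the first one did.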

That distinction stayed with me longer than I expected.

Because if I think about most real-world systems—financial, governmental, even social—they’re not short on verification mechanisms. Banks verify identity. Governments verify citizenship. Platforms verify accounts. The issue isn’t absence of proof. It’s that each verification exists inside its own boundary. Crossing that boundary often means starting over.

And that repetition isn’t just inefficient. It changes behavior. It introduces delays, inconsistencies, and sometimes quiet exclusions. Not because someone is being denied outright, but because their proof doesn’t translate cleanly.

So when I look at something like SIGN now, I don’t see it as trying to “verify better.” That framing feels too narrow. It’s closer to an attempt to preserve the meaning of a verification event as it moves across systems. To make an attestation carry context, not just correctness.

And that raises a different kind of question.

Because if meaning can travel, then trust behaves differently.

In cross-border scenarios, for instance, identity is constantly reinterpreted. A credential issued in one jurisdiction often has to be revalidated—or sometimes reissued—just to be accepted elsewhere. The data is there. The truth is there. But its usability depends on local recognition.

The same applies to compliance. A transaction might meet regulatory standards in one system but fail in another, not because it’s non-compliant in essence, but because the compliance context doesn’t map. So systems compensate by layering additional checks, more documentation, more repetition.

It’s not that they don’t trust the data. It’s that they don’t trust its meaning outside their own frame.

And that’s where this idea starts to feel less technical and more structural.

If infrastructure can reduce how often truth needs to be re-proven, then it’s not just optimizing processes. It’s changing how systems relate to each other. It’s shifting from isolated verification loops to something more continuous—where a verified state can persist, or at least be referenced, across environments.

But that also introduces tension.

Because preserving meaning across systems isn’t neutral. It implies some level of standardization, or at least shared interpretation. And that can conflict with local control. With regulatory nuance. With the ability to reinterpret data based on context.

There’s also the question of visibility. If attestations become portable, what gets exposed along the way? Even with privacy-preserving techniques, there’s metadata. There’s structure. There’s an underlying trace of how something was verified and by whom.
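A small sketch of what I mean by metadata surviving. It assumes a very simple privacy technique, salted hash commitments over each claim value, which is my own stand-in and not how SIGN or any particular system does it. The `redact` and `commit` helpers and the attestation fields are hypothetical.

```python
import hashlib
import secrets


def commit(value: str, salt: bytes) -> str:
    """Salted SHA-256 commitment to a single claim value."""
    return hashlib.sha256(salt + value.encode()).hexdigest()


def redact(attestation: dict) -> tuple[dict, dict]:
    """Hide claim values behind commitments; return (public view, salts)."""
    salts = {name: secrets.token_bytes(16) for name in attestation["claim"]}
    # Everything outside the claim is copied through untouched.
    public = {k: v for k, v in attestation.items() if k != "claim"}
    public["claim_commitments"] = {
        name: commit(str(value), salts[name])
        for name, value in attestation["claim"].items()
    }
    return public, salts


attestation = {
    "schema": "identity/age-check",
    "issuer": "authority-a",
    "issued_at": "2024-01-01T00:00:00Z",
    "claim": {"over_18": True},
}
public_view, salts = redact(attestation)
print(sorted(public_view))  # which fields remain visible to any observer
```

The claim value is hidden, but the issuer, the schema, the timestamp, and even the names of the claim fields are still readable. That residue is the trace I keep thinking about.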

So the trade-off starts to emerge quietly. Efficiency versus control. Portability versus discretion.

And I don’t think systems have resolved that tension yet. They’re just beginning to surface it.

In some regions, especially where digital infrastructure is being built rapidly—parts of the Middle East, emerging markets, even experimental CBDC environments—you can see early versions of this. Pilots that try to unify identity layers, or streamline distribution of funds, or create interoperable credential systems.

They often work, at least in controlled settings. But the gap between pilot and production is still there. Not just technically, but politically. Institutionally. There’s inertia, but also something more subtle—hesitation around what it means to let trust travel too freely.

Because once a system accepts externally generated meaning without reinterpreting it, it gives up a degree of control. And not every system is ready for that.

That’s where my skepticism returns, though it feels different now. Less dismissive, more cautious.

It’s not that these infrastructures won’t work. Some of them probably will, in specific contexts. The question is what happens when they do.

If attestations become widely accepted across systems, does that reduce friction, or does it create new forms of dependency? If verification becomes portable, who defines the standards of meaning? And what happens to systems that don’t—or can’t—align with those standards?

There’s also the possibility that control doesn’t disappear, it just becomes less visible. Embedded in the infrastructure itself. In how attestations are structured, in which entities are trusted to issue them, in how revocation or updates are handled.
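Here is a rough sketch of where that embedded control could sit, assuming each verifying system keeps its own list of trusted issuers and its own view of revocations. The `VerifierPolicy` class, the issuer names, and the attestation ID are hypothetical and only meant to show the shape of the decision.

```python
from dataclasses import dataclass, field


@dataclass
class VerifierPolicy:
    """One system's local rules about which attestations it will accept."""
    trusted_issuers: set
    revoked_ids: set = field(default_factory=set)

    def accepts(self, attestation_id: str, issuer: str) -> tuple[bool, str]:
        # Control point 1: who is allowed to issue meaning at all.
        if issuer not in self.trusted_issuers:
            return False, "issuer not trusted"
        # Control point 2: who can withdraw a statement after the fact.
        if attestation_id in self.revoked_ids:
            return False, "attestation revoked"
        return True, "accepted"


system_a = VerifierPolicy(trusted_issuers={"authority-a", "authority-b"})
system_b = VerifierPolicy(trusted_issuers={"authority-b"})

print(system_a.accepts("att-001", "authority-a"))
print(system_b.accepts("att-001", "authority-a"))

system_a.revoked_ids.add("att-001")
print(system_a.accepts("att-001", "authority-a"))
```

Nothing about the attestation changes between the three calls. Only the lists do, and whoever maintains those lists holds the influence, whether or not anyone sees it.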

So even as repetition decreases, influence might consolidate.

I’m not sure that’s a flaw. But it’s not a neutral outcome either.

What I keep coming back to is that initial realization—that verification isn’t the core problem. Context loss is. And any system that tries to address that is operating at a deeper layer than most infrastructure narratives acknowledge.

It’s not just about moving data. It’s about preserving what that data means as it moves.

And that feels significant in a way that’s hard to fully articulate. Not because it’s revolutionary, but because it’s subtle. It changes how trust behaves, almost invisibly.

But I’m still not entirely convinced where that leads.

Because if meaning starts to travel more freely across systems, it might reduce friction in ways we’ve been trying to solve for years. Or it might expose new kinds of rigidity—where meaning becomes fixed in ways that are harder to adapt, reinterpret, or challenge.

And maybe that’s the part that keeps me from settling into a clear conclusion.

This kind of infrastructure doesn’t just make systems more efficient. It reshapes the boundaries between them.

That probably matters more than it looks.

But I’m still not sure what it becomes once it actually works.
