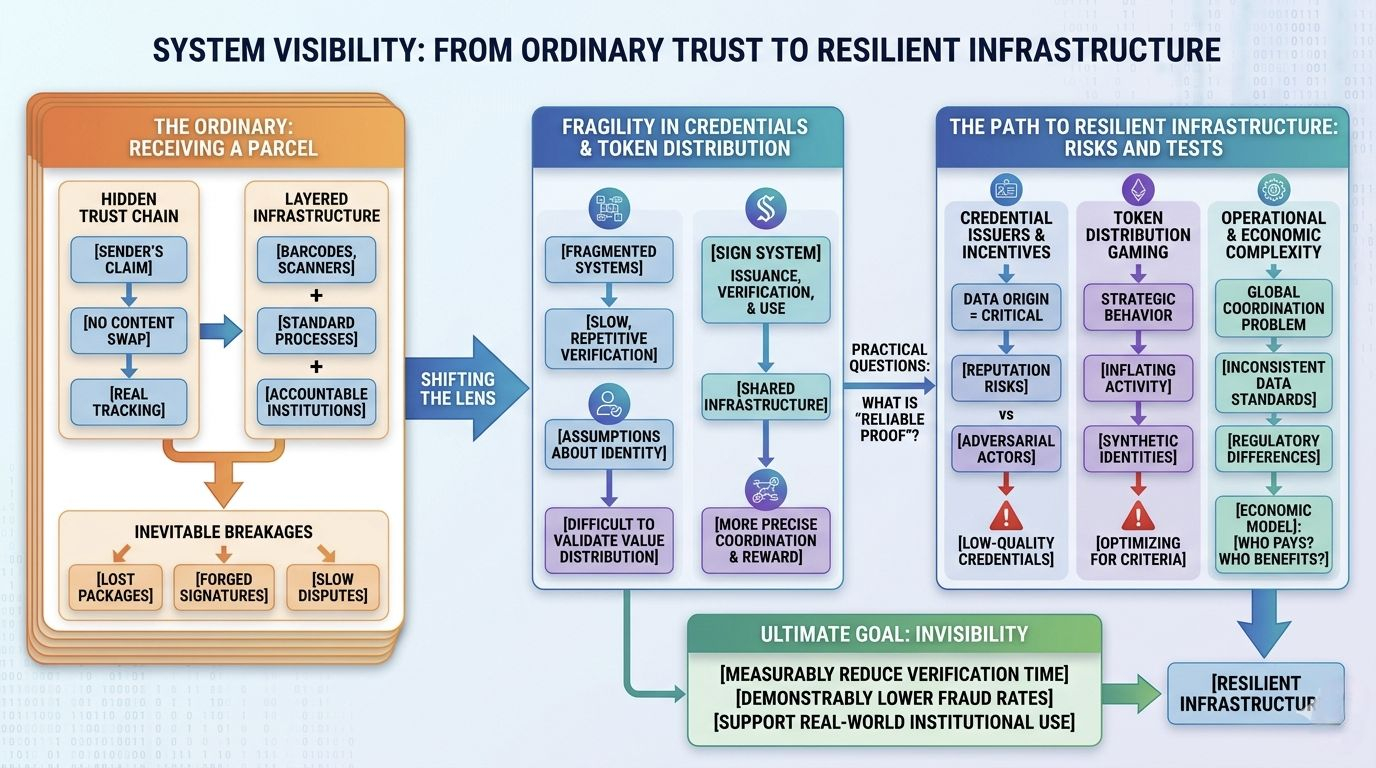

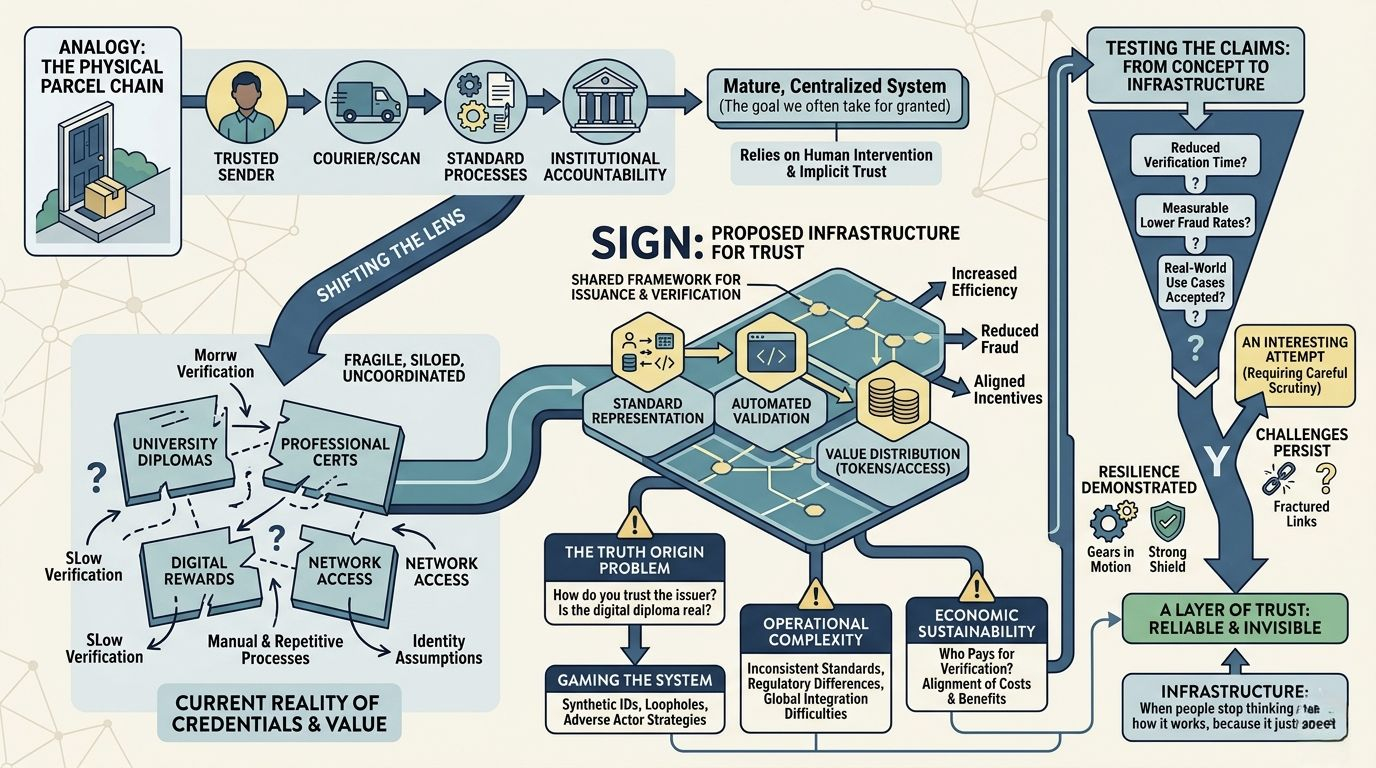

I think about something as ordinary as receiving a parcel. When a package arrives at my door, I rarely question the entire chain behind it. I trust that the sender is who they claim to be, that the courier didn’t swap the contents, and that the tracking system reflects reality. But that trust isn’t magic—it’s the result of layered infrastructure: barcodes, scanning systems, standardized processes, and institutions that are accountable when something goes wrong. And yet, even in this relatively mature system, things break. Packages get lost, signatures are forged, and disputes can take days or weeks to resolve. The system works, but it’s far from perfect—and more importantly, it relies heavily on centralized coordination and human intervention.

When I shift that lens to credential verification and token distribution, the fragility becomes even more apparent. Today, proving something as simple as a degree, a certification, or even participation in a digital network often involves fragmented systems that don’t communicate well with each other. Verification is slow, repetitive, and often manual. At the same time, distributing value—whether in the form of tokens, rewards, or access—relies on assumptions about identity and legitimacy that are difficult to validate at scale.

This is the gap SIGN appears to target: a shared infrastructure where credentials can be issued, verified, and then used as the basis for distributing tokens or other forms of value. On the surface, the idea feels intuitive. If you can reliably prove who someone is or what they’ve done, you can design more precise systems of coordination and reward. In theory, this reduces fraud, increases efficiency, and aligns incentives more clearly.

But I find myself asking a more practical question: what does “reliable proof” actually mean in the real world?

In any credential system, the weakest point is not the technology—it’s the origin of the data. If a university issues a diploma, the credibility of that diploma depends on the institution, not the format in which it’s stored. Digitizing that credential or placing it on a decentralized system doesn’t automatically make it more truthful. It may make it easier to verify, harder to tamper with, and more portable—but it doesn’t solve the fundamental problem of trust in the issuer.

This creates an interesting tension. SIGN can potentially standardize how credentials are represented and verified, but it still depends on a network of issuers whose incentives may not always align. Some may have strong reputations to protect, while others might not. If the system is open, it has to deal with adversarial actors who will attempt to game it—issuing low-quality or even fraudulent credentials that technically meet the system’s requirements but undermine its integrity.
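
To make that distinction concrete for myself, here is a minimal sketch of the two separate questions a verifier has to answer: "was this credential altered?" and "do I trust whoever issued it?" Everything in it is an assumption for illustration: the issuer names, the keys, the `TRUSTED_ISSUERS` allowlist, and the function names are hypothetical, and HMAC from the Python standard library stands in for the public-key signatures a real system would use. It is not how SIGN works.

```python
import hashlib
import hmac
import json

# Hypothetical issuer registry (assumption): issuer name -> signing key.
# A real system would hold public keys, not shared secrets.
ISSUER_KEYS = {
    "established-university": b"key-a",
    "diploma-mill": b"key-b",
}

# Hypothetical allowlist (assumption), maintained by whoever relies on the credential.
TRUSTED_ISSUERS = {"established-university"}


def sign_credential(issuer: str, claim: dict) -> dict:
    """Issue a credential: serialize the claim deterministically and tag it."""
    payload = json.dumps(claim, sort_keys=True).encode()
    tag = hmac.new(ISSUER_KEYS[issuer], payload, hashlib.sha256).hexdigest()
    return {"issuer": issuer, "claim": claim, "tag": tag}


def is_intact(credential: dict) -> bool:
    """Integrity only: was the claim tagged by the named issuer and left unmodified?"""
    key = ISSUER_KEYS.get(credential["issuer"])
    if key is None:
        return False
    payload = json.dumps(credential["claim"], sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, credential["tag"])


def is_trusted(credential: dict) -> bool:
    """Integrity plus a separate, non-cryptographic judgment about the issuer."""
    return is_intact(credential) and credential["issuer"] in TRUSTED_ISSUERS


claim = {"holder": "alice", "degree": "BSc"}
genuine = sign_credential("established-university", claim)
low_quality = sign_credential("diploma-mill", claim)

print(is_intact(genuine), is_trusted(genuine))
print(is_intact(low_quality), is_trusted(low_quality))
```

The credential from the hypothetical diploma mill passes the integrity check just as cleanly as the genuine one. Only the allowlist separates them, and deciding who belongs on that list is a question of reputation and governance, not cryptography.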

Then there’s the question of token distribution. Tying rewards to verified credentials sounds efficient, but it also introduces new forms of gaming. If tokens have real economic value, participants will optimize for whatever criteria the system uses. That could mean inflating activity, creating synthetic identities, or finding loopholes in how credentials are issued and recognized. In other words, the system doesn’t just need to verify truth—it needs to withstand strategic behavior.
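
A small piece of arithmetic shows why synthetic identities matter. The sketch below assumes the simplest possible rule, an equal split of a fixed pool across every credentialed identity. That rule, the function name, and the numbers are my own assumptions for illustration, not a description of SIGN's actual distribution logic.

```python
def pro_rata_share(pool: float, honest: int, synthetic: int) -> dict:
    """Split a fixed pool equally across all identities that hold a credential.

    `honest` and `synthetic` are counts of identities; the rule itself cannot
    tell them apart, which is the whole problem.
    """
    total = honest + synthetic
    if total <= 0:
        raise ValueError("at least one identity is required")
    per_identity = pool / total
    return {
        "per_identity": per_identity,
        "honest_total": per_identity * honest,
        "attacker_total": per_identity * synthetic,
    }


# Hypothetical numbers: a pool of 1000 tokens and 100 honest participants.
print(pro_rata_share(1000, honest=100, synthetic=0))
print(pro_rata_share(1000, honest=100, synthetic=900))
```

Under this rule, 900 synthetic identities cut each honest participant's share from 10 tokens to 1 and send 900 of the 1000 tokens to the attacker. Real distribution schemes are more elaborate, but the pressure is the same: the cheaper a qualifying credential is to obtain, the more of the pool flows to whoever can mint identities at scale.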

I also think about operational complexity. For a system like SIGN to work at a global level, it has to integrate with a wide range of institutions, platforms, and user behaviors. That means dealing with inconsistent data standards, regulatory differences, and varying levels of technical maturity. It’s not just a technical problem—it’s a coordination problem. And coordination at that scale tends to move slowly, especially when there are no immediate incentives for established institutions to change their existing processes.

There’s also an economic layer that can’t be ignored. Who pays for verification? Who benefits from it? If the costs of issuing and verifying credentials fall on one group while the benefits accrue to another, the system may struggle to sustain itself. Infrastructure only persists when the incentives are aligned well enough that participants continue to support it without constant external pressure.

What I find most interesting is not the promise of the system, but whether its claims can be tested in practice. Can it reduce verification time in a measurable way? Can it demonstrably lower fraud rates? Can it support real-world use cases where institutions and users rely on it not just as an experiment, but as a default layer of trust? These are the kinds of questions that move a system from concept to infrastructure.

Because ultimately, infrastructure is defined by invisibility. The best systems are the ones people stop thinking about—not because they’re simple, but because they’re reliable. They handle edge cases, resist abuse, and continue to function under pressure. That’s a high bar, and most systems don’t reach it.

My own view is cautious but curious. SIGN is addressing a real and persistent problem, and the direction makes sense at a conceptual level. But the difficulty lies not in designing the framework—it lies in making it resilient in the face of imperfect data, misaligned incentives, and adversarial behavior. If it can demonstrate that kind of resilience in real-world conditions, then it starts to look less like an idea and more like infrastructure. Until then, I see it as an interesting attempt—one that deserves attention, but also careful scrutiny.

In the end, I don’t see SIGN as a finished solution. I see it as a pressure test for an idea that sounds simple but is genuinely hard to execute. If it works, it won’t be because the concept was elegant, but because it survived contact with reality.

And maybe that’s the real tension here.

Because if trust can truly be turned into infrastructure, then everything built on top of it changes quietly—but permanently.

If it can’t, then this becomes just another system that looked solid… until someone leaned on it.

The difference won’t show up in whitepapers or demos—it will show up the moment the system is pushed to its limits.

And when that moment comes, we won’t be asking what SIGN promises—we’ll be watching what it actually holds together.
