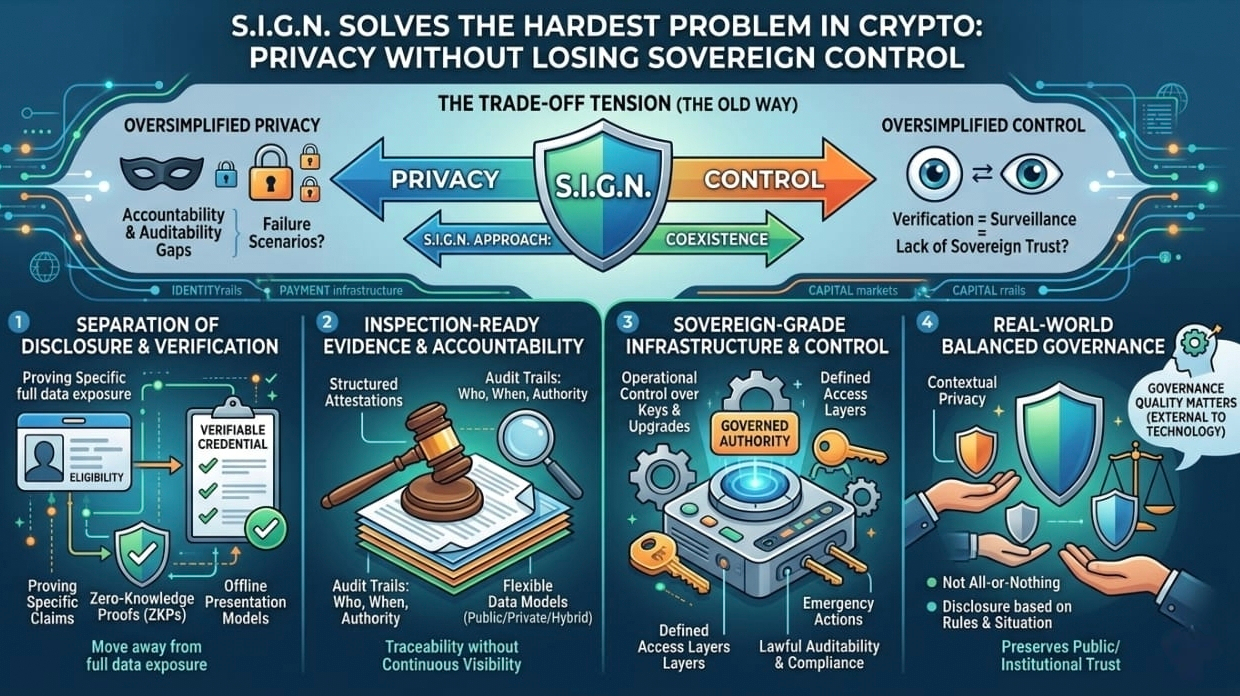

One thing I keep coming back to with SIGN is how calmly it approaches a problem that most systems either avoid or oversimplify: the relationship between privacy and sovereign control. In most architectures I’ve studied, this tension shows up early and gets resolved too quickly. The system picks a side. Either it leans so heavily into privacy that institutions start asking uncomfortable questions about accountability, auditability, and failure scenarios, or it leans so hard into control that “verification” quietly becomes another word for surveillance. And this tradeoff doesn’t stay theoretical for long. It becomes very real in identity systems, payment rails, and public benefits infrastructure, where sensitive data is not optional but foundational.

What made me pause about SIGN is that it doesn’t pretend this tension disappears. The system is framed as sovereign-grade infrastructure for money, identity, and capital, but instead of hiding the complexity, it acknowledges it directly. Privacy by default for sensitive data exists alongside lawful auditability, inspection readiness, and strict operational control over keys, upgrades, and emergency actions. That combination doesn’t feel like a consumer product narrative. It feels like something designed for environments where oversight is required and failure has consequences. That shift in framing alone makes the rest of the architecture easier to take seriously.

The core idea that keeps standing out to me is the separation between disclosure and verification. SIGN does not assume that verifying something requires exposing everything behind it. Instead, it builds around verifiable credentials, decentralized identifiers, selective disclosure, privacy-preserving proofs, revocation systems, and even offline presentation models. In simple terms, the system moves away from constantly querying centralized databases and toward proving only the specific claim that matters in a given moment. You don’t expose a full identity profile, you prove eligibility. You don’t reveal complete histories, you present a signed and valid claim. That shift is where privacy actually remains intact instead of being sacrificed for functionality.
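To make the disclosure-versus-verification split concrete for myself, I sketched a toy version of selective disclosure using salted hashes. This is not SIGN's API or its actual credential format. The names `issue`, `present`, and `verify` are hypothetical, and an HMAC with a shared key stands in for the issuer's digital signature, which a real system would do with asymmetric keys. The point is only that the verifier checks one claim and learns nothing about the others.

```python
import hashlib
import hmac
import json
import secrets
from typing import Optional

# Assumption: a shared secret stands in for the issuer's signing key.
# A real deployment would use an asymmetric signature instead.
ISSUER_KEY = secrets.token_bytes(32)


def _digest(salt: str, name: str, value) -> str:
    """Salted hash of one claim; the digest alone does not reveal the value."""
    payload = json.dumps([salt, name, value]).encode()
    return hashlib.sha256(payload).hexdigest()


def _sign(digests: list) -> str:
    """Toy 'signature' over the list of claim digests."""
    return hmac.new(ISSUER_KEY, json.dumps(digests).encode(), hashlib.sha256).hexdigest()


def issue(claims: dict):
    """Issuer: sign only the claim digests. The holder keeps salts and values."""
    disclosures = {name: (secrets.token_hex(16), value) for name, value in claims.items()}
    digests = sorted(_digest(salt, name, value) for name, (salt, value) in disclosures.items())
    credential = {"digests": digests, "signature": _sign(digests)}
    return credential, disclosures


def present(credential: dict, disclosures: dict, reveal: list) -> dict:
    """Holder: reveal salt and value only for the claims being proven."""
    return {"credential": credential, "revealed": {n: disclosures[n] for n in reveal}}


def verify(presentation: dict) -> Optional[dict]:
    """Verifier: check the signature, then check each revealed claim hashes
    to a signed digest. Returns the verified claims, or None on failure."""
    cred = presentation["credential"]
    if not hmac.compare_digest(_sign(cred["digests"]), cred["signature"]):
        return None
    verified = {}
    for name, (salt, value) in presentation["revealed"].items():
        if _digest(salt, name, value) not in cred["digests"]:
            return None
        verified[name] = value
    return verified
```

A holder with claims like `name`, `birth_year`, and `benefit_eligible` could call `present(credential, disclosures, ["benefit_eligible"])`, and the verifier would see only that one claim plus opaque digests for the rest.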

But what makes the design more interesting is that it doesn’t stop at privacy. Many systems would end the conversation there, but SIGN continues by emphasizing something equally important: inspection-ready evidence. Not symbolic proof or abstract assurances, but structured evidence that can answer real questions later. Who approved a decision, under what authority, when it happened, which rules were active at the time, and what supports the claim during an audit. This is where the system starts to feel less like a privacy tool and more like accountability infrastructure. Through structured attestations, schemas, and flexible data models that can exist in public, private, or hybrid forms, it creates a way to preserve both verification and traceability.
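Here is how I picture "inspection-ready evidence" at the smallest possible scale: a structured record that captures who approved something, under what authority, when, and under which rules, chained by hash so later tampering is detectable. The field names and the `Attestation` class are my own illustration, not SIGN's schema, and the raw evidence itself is assumed to live elsewhere (possibly in private storage) with only its hash recorded.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


@dataclass(frozen=True)
class Attestation:
    schema: str           # which schema the record follows (hypothetical name)
    approver: str         # who approved the decision
    authority: str        # under what authority
    issued_at: str        # when it happened (ISO 8601 timestamp)
    ruleset_version: str  # which rules were active at the time
    evidence_hash: str    # hash of the supporting evidence, stored elsewhere
    prev_hash: str        # digest of the previous record in the log

    def digest(self) -> str:
        """Deterministic hash over every field of the record."""
        return hashlib.sha256(json.dumps(asdict(self), sort_keys=True).encode()).hexdigest()


def append(log: list, **fields) -> list:
    """Return a new log with one more attestation linked to the last one."""
    prev = log[-1].digest() if log else GENESIS
    return log + [Attestation(prev_hash=prev, **fields)]


def audit(log: list, expected_head: str) -> bool:
    """Check every link in the chain and compare the final digest with a
    head value the auditor obtained independently (for example, one that
    was anchored publicly at the time)."""
    prev = GENESIS
    for att in log:
        if att.prev_hash != prev:
            return False
        prev = att.digest()
    return prev == expected_head
```

The design choice worth noting is that the log stores a hash of the evidence rather than the evidence itself, so the record can be public while the underlying data stays private until an audit requires it.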

At some point while thinking through this, I realized that sovereign control does not actually require constant visibility into everything. What it requires is control over rules, authority over operators, clearly defined access layers, and the ability to audit when necessary. SIGN seems to reflect that reality. It introduces different operational modes, allowing confidentiality-first environments, transparency-driven systems, or a mix of both depending on context. Instead of forcing everything into a single model, it treats disclosure as something that should be governed and situational. That approach feels much closer to how real-world systems function than the usual all-or-nothing thinking in blockchain design.
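A rough way to think about governed, situational disclosure is as a policy function: the same record yields different views depending on the deployment mode and on who is asking. Everything in this sketch is an assumption on my part, including the three mode names, the `auditor` role, and the choice of which fields count as public in a hybrid setup. It is not how SIGN defines its modes.

```python
from enum import Enum


class Mode(Enum):
    CONFIDENTIAL = "confidential"  # nothing is visible by default
    TRANSPARENT = "transparent"    # everything is visible by default
    HYBRID = "hybrid"              # only an allow-listed subset is visible


# Hypothetical allow-list for hybrid deployments.
HYBRID_PUBLIC_FIELDS = {"status", "ruleset_version"}


def visible_fields(record: dict, mode: Mode, role: str) -> dict:
    """Return the part of a record that a given role may see in a given mode."""
    if role == "auditor":
        # Assumption: lawful audit access sees the full record. In practice
        # this path would itself be authorized and logged.
        return dict(record)
    if mode is Mode.TRANSPARENT:
        return dict(record)
    if mode is Mode.HYBRID:
        return {k: v for k, v in record.items() if k in HYBRID_PUBLIC_FIELDS}
    return {}  # CONFIDENTIAL: no default visibility
```

The rules live in one place and can be changed by whoever governs the system, which is what control over rules means here, as opposed to constant visibility into the data.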

There was also a moment where my own perspective shifted while going through this. I used to think the hardest problem in these systems was making them trustless. But now it feels like the harder problem is making them verifiable without requiring total exposure. In practice, institutions don’t always need to see everything. They need confidence in outcomes, traceability of decisions, and the ability to investigate when something breaks. That is a very different requirement from full transparency, and it changes how systems should be designed from the ground up.

That said, my hesitation doesn’t come from the logic of the architecture itself. On paper, it works. The uncertainty comes from how “lawful auditability” is defined and applied in real deployments. Those boundaries always look clean in documentation, but in reality they depend on governance quality, operator incentives, access policies, and institutional behavior. The system can define what is technically possible, but it cannot guarantee how power will be exercised. That part is always external to the technology, and it is where most real-world outcomes are decided.

Still, I keep coming back to SIGN because it feels like it is aiming at the right problem. It does not confuse privacy with invisibility or control with total exposure. Instead, it treats privacy as selective provability and control as governed authority over systems and rules. That distinction matters because the future of these systems will not be decided by whether they are fully decentralized or fully controlled. It will be decided by whether they can verify what matters, reveal only what is necessary, preserve accountability, and still operate within real institutional constraints. That balance is difficult, but this is one of the few approaches that seems willing to confront it directly rather than avoid it.

@SignOfficial #SignDigitalSovereignInfra $SIGN