Let’s try to understand what the real story is.

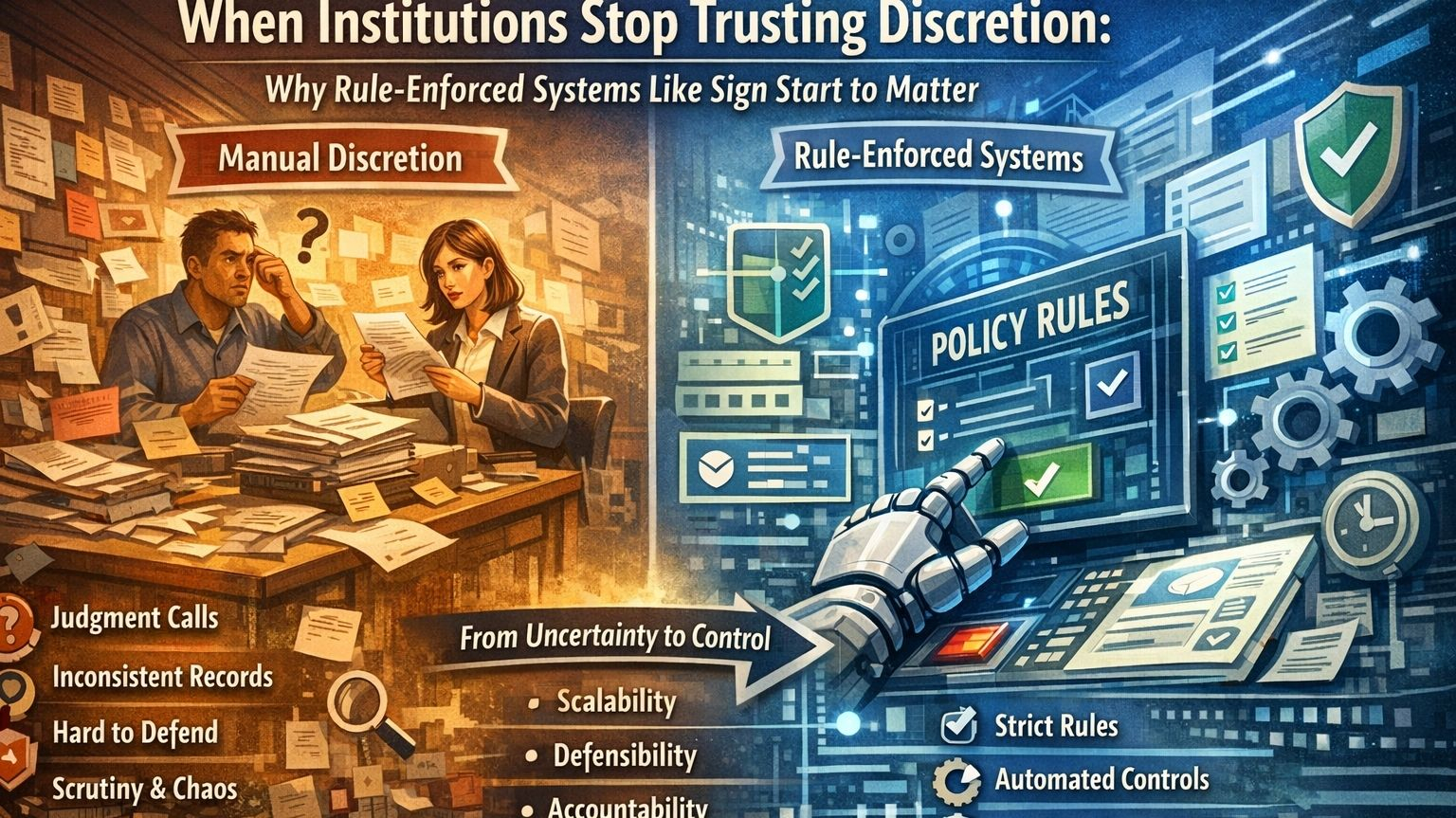

Systems usually become rule-heavy when people stop trusting discretion to hold up over time.

That is the thought I keep coming back to with Sign’s broader architecture. Nobody wakes up one day and decides they want policy controls, eligibility gates, auditable distributions, clawbacks, hard caps, emergency pauses, and versioned rulesets just because structured systems look elegant. Things move in that direction when the older way starts feeling too loose, too hard to defend, or too dependent on judgment calls that become uncomfortable the moment someone asks for an explanation later. In Sign’s case, that pressure seems to show up most clearly in the way S.I.G.N. and TokenTable are framed: infrastructure for rules-driven allocation, distribution, and enforcement in environments where mistakes do not stay small for long.

What makes that interesting is that the project does not really imagine a world without human judgment. It imagines a world where institutions no longer feel safe leaving the most consequential parts of a process unstructured. The logic behind the New Capital System is not only about moving funds in a programmable way. It is about applying eligibility rules, enforcing policy limits, preserving auditability, reducing leakage, preventing duplication, and doing all of that at a scale where manual cleanup starts becoming its own failure mode. That does not read like convenience for convenience’s sake. It reads more like administrative discomfort finally becoming too expensive to ignore.
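Here is a minimal sketch that makes that list a little more concrete. It shows what eligibility rules, policy limits, auditability, and duplicate prevention can look like once they are encoded instead of left to judgment. I wrote it under my own assumptions. The `Ruleset` and `Distributor` names, the score threshold, and the per-entity cap are hypothetical illustrations. They are not TokenTable's actual schema or API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Ruleset:
    # Hypothetical policy parameters for illustration only (not TokenTable's schema).
    version: str
    min_score: int        # eligibility threshold an applicant must meet
    per_entity_cap: int   # maximum cumulative payout per entity


@dataclass
class Distributor:
    rules: Ruleset
    paid: dict = field(default_factory=dict)       # entity_id -> total paid so far
    seen_claims: set = field(default_factory=set)  # claim ids already processed
    audit_log: list = field(default_factory=list)  # one entry per decision, approved or not

    def process(self, claim_id: str, entity_id: str, score: int, amount: int) -> int:
        """Apply the ruleset to one claim, log the outcome, and return the amount paid."""
        if claim_id in self.seen_claims:
            # Duplicate prevention: a claim id is only ever evaluated once.
            reason, payout = "duplicate claim", 0
        elif score < self.rules.min_score:
            # Eligibility gate: below the threshold means no payout.
            reason, payout = "below eligibility threshold", 0
        else:
            # Policy limit: never pay past the per-entity cap.
            already = self.paid.get(entity_id, 0)
            payout = max(0, min(amount, self.rules.per_entity_cap - already))
            reason = "approved" if payout == amount else "capped"
            self.paid[entity_id] = already + payout
        self.seen_claims.add(claim_id)
        # Auditability: every outcome is recorded with the ruleset version that produced it.
        self.audit_log.append({"claim": claim_id, "entity": entity_id, "payout": payout,
                               "reason": reason, "ruleset": self.rules.version})
        return payout


dist = Distributor(Ruleset(version="v1", min_score=50, per_entity_cap=100))
print(dist.process("c1", "alice", score=80, amount=70))  # 70 (approved)
print(dist.process("c2", "alice", score=80, amount=70))  # 30 (capped)
print(dist.process("c1", "alice", score=80, amount=70))  # 0 (duplicate claim)
print(dist.process("c3", "bob", score=20, amount=10))    # 0 (below threshold)
```

The payout arithmetic is almost trivial. The audit log matters more: every claim, including the rejected ones, gets an entry that names its reason and the ruleset version in force. That entry is what answers "why" later.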

That is why I do not think the real question is whether manual decision-making was simply bad. The deeper issue is whether manual discretion became too expensive to explain. A person can make a fair decision and still leave behind a weak reason trail. A team can run a grant or benefits program honestly and still end up with duplicate payouts, uneven treatment, or records that do not hold up well under later review. Once systems get bigger, the value of discretion starts running into the burden of justification. That seems to be one of the tensions Sign is trying to resolve. It is not only asking what should happen. It is asking how institutions will later prove that what happened followed the rules they said they were following.

That shift matters because rule-enforced systems always come with a trade-off. They promise consistency, but they also narrow the room for interpretation. In theory, that can look like fairness. The same input produces the same output. The same threshold gets applied the same way. The same cap, the same schedule, the same conditions. But fairness through rules can become brittle very quickly if the world those rules were written for starts changing faster than the rules themselves. That is why features like versioned rulesets, revocation, clawbacks, emergency pauses, and guardrails do not feel ornamental here. They feel like quiet signs that the people designing this already know rule-based enforcement eventually runs into situations it cannot neatly absorb.

This is also where the idea of “neutral enforcement” starts needing a harder look. A programmable rule can feel neutral because it behaves consistently. But someone still chose that rule. Someone decided the threshold, the categories, the identity linkage, the revocation logic, the per-entity cap, the list of exceptions, or the decision to allow no exceptions at all. Once rules are encoded, power does not disappear. It just changes address. Often it moves away from the person applying the rule in the moment and toward the people who shaped the structure earlier. That may reduce arbitrary discretion on the front line, which can be a real improvement. But it can also centralize a different kind of discretion further upstream, where it becomes less visible in daily practice.

There is another institutional motive here that feels just as important: the fear of later scrutiny. A lot of organizations do not move toward rule-heavy systems because they suddenly became philosophically committed to code. They move there because unstructured judgment becomes hard to defend once oversight arrives. If a regulator asks why one entity was approved and another was not, “our staff exercised reasonable discretion” often sounds weaker than “the decision followed this ruleset, under these constraints, with this evidence attached.” That difference matters. It is not only about automation. It is about defensibility. It is about turning a decision into something that can survive after the people who made it are no longer there to narrate it.
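The phrase "this ruleset, under these constraints, with this evidence attached" describes a data shape, so I will sketch one. In this minimal Python version, each decision carries its ruleset version, the constraints in force, the inputs, the result of each check, and a pointer to evidence. A SHA-256 digest over a canonical JSON encoding then makes any later edit to the record detectable. Everything here is hypothetical: `Ruleset`, `decide`, `verify`, the income and residency rules, and the `doc://` evidence reference are my illustrations, not Sign's data model. The hashing scheme is also my own choice.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Ruleset:
    # Hypothetical eligibility rules for illustration only.
    version: str
    income_ceiling: int       # applicants at or below this income qualify
    requires_residency: bool


def _digest(body: dict) -> str:
    # sort_keys gives a stable encoding, so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def decide(rules: Ruleset, applicant_id: str, income: int, resident: bool, evidence_ref: str) -> dict:
    """Evaluate one applicant and return a self-describing decision record."""
    checks = {
        "income_within_ceiling": income <= rules.income_ceiling,
        "residency_satisfied": resident or not rules.requires_residency,
    }
    record = {
        "applicant": applicant_id,
        "ruleset_version": rules.version,
        "constraints": {"income_ceiling": rules.income_ceiling,
                        "requires_residency": rules.requires_residency},
        "inputs": {"income": income, "resident": resident},
        "checks": checks,
        "approved": all(checks.values()),
        "evidence": evidence_ref,  # pointer to supporting documents (hypothetical format)
    }
    record["digest"] = _digest(record)
    return record


def verify(record: dict) -> bool:
    """Recompute the digest over everything except the digest itself."""
    body = {k: v for k, v in record.items() if k != "digest"}
    return _digest(body) == record["digest"]


v1 = Ruleset("v1", income_ceiling=30_000, requires_residency=True)
v2 = Ruleset("v2", income_ceiling=25_000, requires_residency=True)
a = decide(v1, "A-17", income=28_000, resident=True, evidence_ref="doc://A-17/2024")
b = decide(v2, "A-17", income=28_000, resident=True, evidence_ref="doc://A-17/2024")
print(a["approved"], b["approved"])  # True False
print(verify(a))                     # True
a["approved"] = False                # tamper with the stored outcome
print(verify(a))                     # False
```

The same applicant gets different outcomes under `v1` and `v2`. Because each record names its own version, a new ruleset does not silently rewrite old decisions. A digest like this only shows that a record was not edited afterward, though. It cannot show that the inputs were accurate or that the rule itself was fair.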

Still, rule-driven architecture does not magically reduce misuse. Sometimes it only changes its shape. Fraud can move upstream into identity linkage, policy design, or external inputs. Favoritism can shift into who gets to write or revise the rules. Administrative chaos can shrink at the point of execution and reappear elsewhere, especially once edge cases start piling up and appeals become harder because the system keeps insisting it followed the rule exactly. That is the uncomfortable part of programmable compliance. It can solve one institutional weakness while deepening another. It may reduce arbitrary treatment in one place while making exceptions colder and more politically sensitive in another.

So why did rule-enforced systems start to feel necessary? Probably because too many institutions stopped trusting loose processes to survive scale, repetition, and scrutiny. That is what Sign’s broader architecture seems to be responding to. It is not a rejection of people exactly, but a recognition that people making judgment calls inside poorly documented systems became too hard to defend afterward. That is why what drives this design is not just programmability. It is human inconsistency, the burden of explanation, and anxiety about enforcement. The deeper question is not only what can be automated. It is what kind of decision can still be explained once the moment has passed and the system is forced to answer for itself.