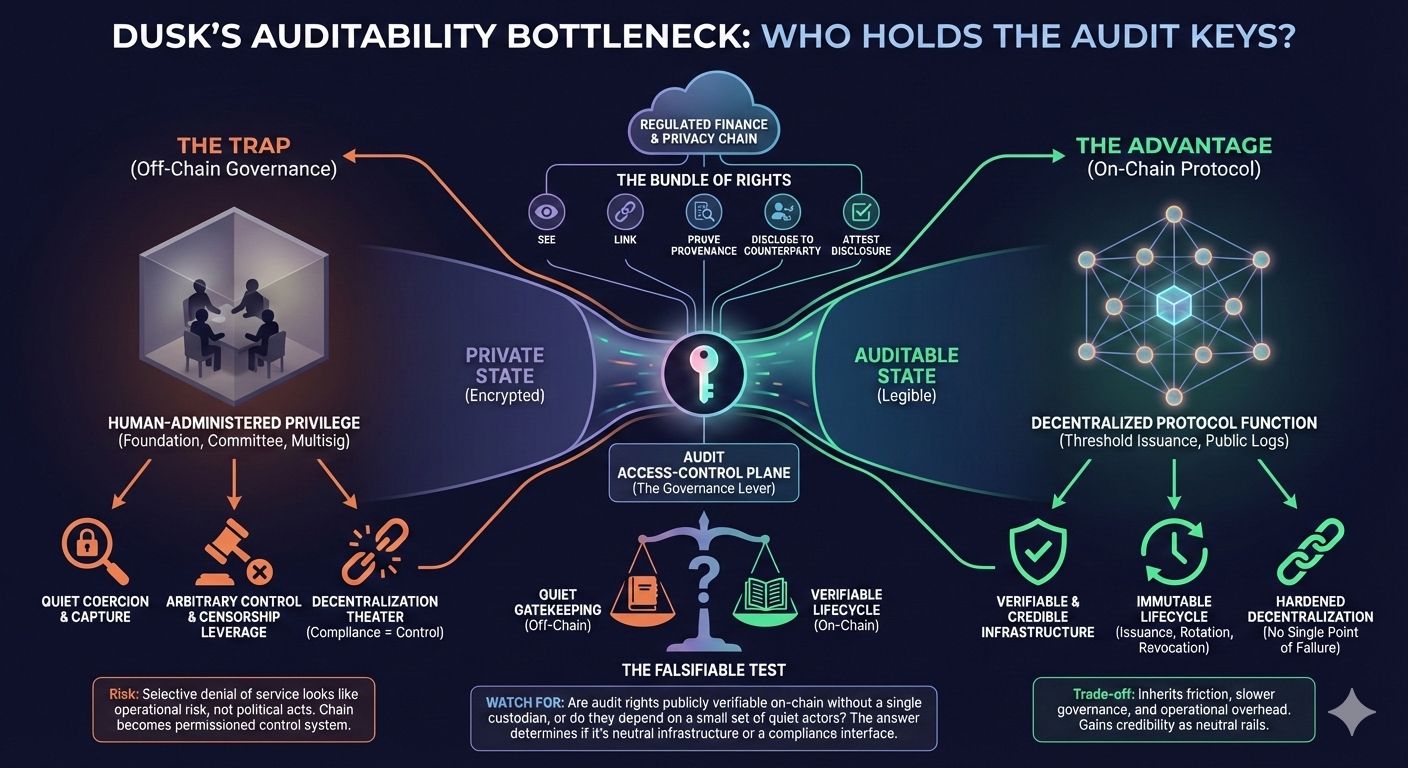

If you tell me a chain is “privacy-focused” and “built for regulated finance,” I don’t start by asking whether the cryptography works. I start by asking a colder question: who can make private things legible, and under what authority. That’s the part the market consistently misprices with Dusk, because it’s not a consensus feature you can point at on a block explorer. It is the audit access-control plane. It decides who can selectively reveal what, when, and why. And once you admit that plane exists, you’ve also admitted a new bottleneck: the system is only as decentralized as the lifecycle of audit rights.

In practice, regulated privacy cannot be “everyone sees nothing” and it cannot be “everyone sees everything.” It has to be conditional visibility. A regulator, an auditor, a compliance officer, a court-appointed party, or some other defined set of actors must be able to answer specific questions about specific flows without turning the whole ledger into a glass box. That means permissions exist somewhere. Whether they take the form of view keys, disclosure tokens, or scoped capabilities, the power is always the same: the ability to move information from private state into auditable state. That ability is not neutral. It’s the closest thing a privacy chain has to an enforcement lever inside the system, because visibility determines whether an actor can be compelled, denied, or constrained under the compliance rules.
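
To make “moving information from private state into auditable state” concrete, here is a minimal Python sketch built on a plain salted hash commitment. This is an illustrative assumption, not Dusk’s actual disclosure mechanism. The names `commit`, `Opening`, and `audit_verify` are hypothetical. The ledger holds only the commitment, and the holder reveals the opening to an auditor, who checks it against what the ledger holds.

```python
import hashlib
import secrets
from dataclasses import dataclass


def commit(amount: int, salt: bytes) -> str:
    """Public commitment to `amount`; hiding as long as the salt stays secret."""
    return hashlib.sha256(salt + amount.to_bytes(16, "big")).hexdigest()


@dataclass(frozen=True)
class Opening:
    """Private data the holder reveals to an auditor (hypothetical structure)."""
    amount: int
    salt: bytes


def audit_verify(public_commitment: str, opening: Opening) -> bool:
    """Auditor-side check: does the disclosed value match what the ledger holds?"""
    return commit(opening.amount, opening.salt) == public_commitment


# The ledger stores only the commitment; the opening never goes on-chain.
salt = secrets.token_bytes(32)
ledger_entry = commit(250_000, salt)

# Selective disclosure: the holder hands the opening to one auditor.
print(audit_verify(ledger_entry, Opening(250_000, salt)))  # True
# A false claim about the amount does not verify.
print(audit_verify(ledger_entry, Opening(999_999, salt)))  # False
```

Note what the sketch leaves out: any rule about who is allowed to receive or demand the opening. That decision sits outside the math, which is exactly where the audit access-control plane lives.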

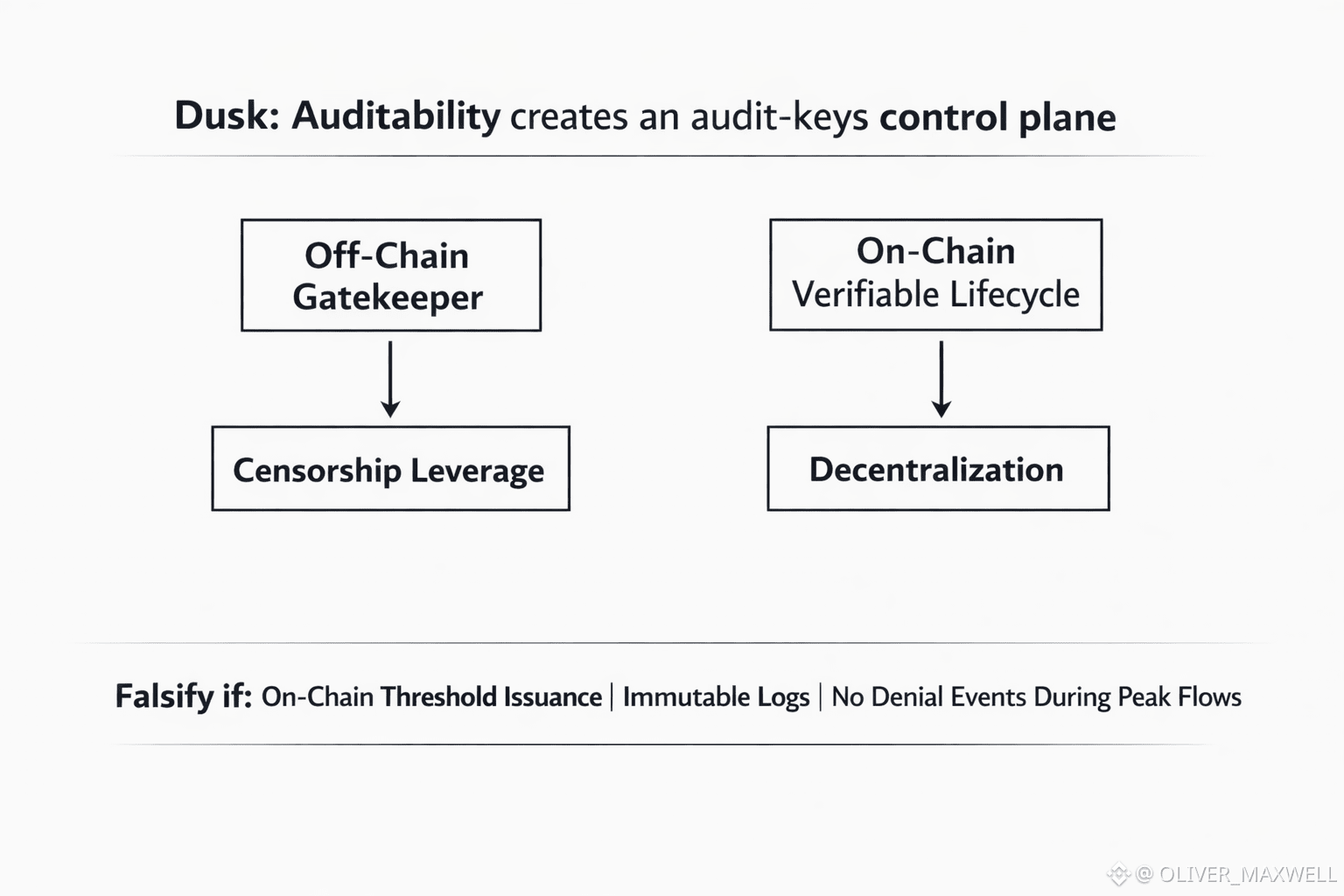

Any selective disclosure scheme needs issuance, rotation, and revocation. Someone gets authorized. Someone can lose authorization. Someone can be replaced. Someone can be compelled. Someone can collude. Someone can be bribed. Even if the chain itself has perfect liveness and a clean consensus protocol, that audit-access lifecycle becomes a parallel governance system. If that governance is off-chain, informal, or concentrated, then “compliance” quietly becomes “control,” and control becomes censorship leverage through denying audit authorization or revoking disclosure capability. In a system built for institutions, the most valuable censorship is not shutting the chain down. It’s selectively denying service to high-stakes flows while everything else keeps working, because that looks like ordinary operational risk rather than an explicit political act.
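
The lifecycle can be sketched as a tiny state machine. The `AuditRegistry` below is hypothetical: its class names, string scopes, and the `actor` argument are assumptions for illustration, not anything Dusk ships. The telling detail is that `actor` is recorded but never checked. The code enforces valid transitions, not legitimate authority to perform them, and that gap is the parallel governance system described above.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    ACTIVE = "active"
    ROTATED = "rotated"
    REVOKED = "revoked"


@dataclass
class AuditGrant:
    grant_id: int
    holder: str
    scope: str
    status: Status = Status.ACTIVE


@dataclass
class AuditRegistry:
    """Hypothetical audit-rights registry; every transition is appended to `history`."""
    grants: dict = field(default_factory=dict)
    history: list = field(default_factory=list)
    next_id: int = 1

    def issue(self, actor: str, holder: str, scope: str) -> int:
        gid = self.next_id
        self.next_id += 1
        self.grants[gid] = AuditGrant(gid, holder, scope)
        self.history.append((actor, "issue", gid))
        return gid

    def rotate(self, actor: str, gid: int, new_holder: str) -> int:
        # Retire the old grant and re-issue the same scope to a new holder.
        old = self._require_active(gid)
        old.status = Status.ROTATED
        self.history.append((actor, "rotate", gid))
        return self.issue(actor, new_holder, old.scope)

    def revoke(self, actor: str, gid: int) -> None:
        self._require_active(gid).status = Status.REVOKED
        self.history.append((actor, "revoke", gid))

    def _require_active(self, gid: int) -> AuditGrant:
        grant = self.grants[gid]
        if grant.status is not Status.ACTIVE:
            raise ValueError(f"grant {gid} is {grant.status.value}")
        return grant


# `actor` is recorded but never checked: valid transitions are enforced,
# legitimate authority to perform them is not.
reg = AuditRegistry()
g1 = reg.issue("compliance-committee", "auditor-a", "fund-x/transfers")
g2 = reg.rotate("compliance-committee", g1, "auditor-b")
reg.revoke("compliance-committee", g2)
print([action for _, action, _ in reg.history])  # ['issue', 'rotate', 'issue', 'revoke']
```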

I think this is where Dusk’s positioning creates both its advantage and its trap. “Auditability built in” sounds like a solved problem, but auditability is not a single switch. It’s a bundle of rights. The right to see. The right to link. The right to prove provenance. The right to disclose to a counterparty. The right to disclose to a third party. The right to attest that disclosure happened correctly. Each of those rights can be scoped narrowly or broadly, time-limited or permanent, actor-bound or transferable. Each can be exercised transparently or silently. And each choice either hardens decentralization or hollows it out.
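
One way to see that auditability is a bundle and not a switch is to encode it. In the hypothetical Python sketch below, each right named above gets one bit. A `Capability` then carries the scoping choices as explicit fields: specific flows or all of them, an expiry or none, actor-bound or bearer-style. The field names and semantics are assumptions for illustration only. The sketch does not model whether a use is exercised transparently or silently.

```python
from dataclasses import dataclass
from enum import Flag, auto
from typing import Optional


class Right(Flag):
    """The bundle of audit rights, one bit each."""
    SEE = auto()
    LINK = auto()
    PROVE_PROVENANCE = auto()
    DISCLOSE_TO_COUNTERPARTY = auto()
    DISCLOSE_TO_THIRD_PARTY = auto()
    ATTEST_DISCLOSURE = auto()


@dataclass(frozen=True)
class Capability:
    holder: str
    rights: Right
    flows: frozenset            # specific flow IDs (narrow); empty set means all flows (broad)
    expires_at: Optional[int]   # None means permanent
    transferable: bool          # True means bearer-style: whoever presents it may use it

    def permits(self, caller: str, right: Right, flow: str, now: int) -> bool:
        if not self.transferable and caller != self.holder:
            return False  # actor-bound capability used by someone else
        if self.expires_at is not None and now >= self.expires_at:
            return False  # time-limited capability has lapsed
        if self.flows and flow not in self.flows:
            return False  # outside the narrow scope
        return right in self.rights


cap = Capability("auditor-a", Right.SEE | Right.LINK, frozenset({"flow-42"}),
                 expires_at=1_000, transferable=False)
print(cap.permits("auditor-a", Right.SEE, "flow-42", now=500))                      # True
print(cap.permits("auditor-a", Right.DISCLOSE_TO_THIRD_PARTY, "flow-42", now=500))  # False
print(cap.permits("auditor-b", Right.SEE, "flow-42", now=500))                      # False
print(cap.permits("auditor-a", Right.SEE, "flow-42", now=1_500))                    # False
```

Every default in a structure like this is a policy decision. An empty `flows` set, a `None` expiry, or `transferable=True` each quietly widens who can make private things legible.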

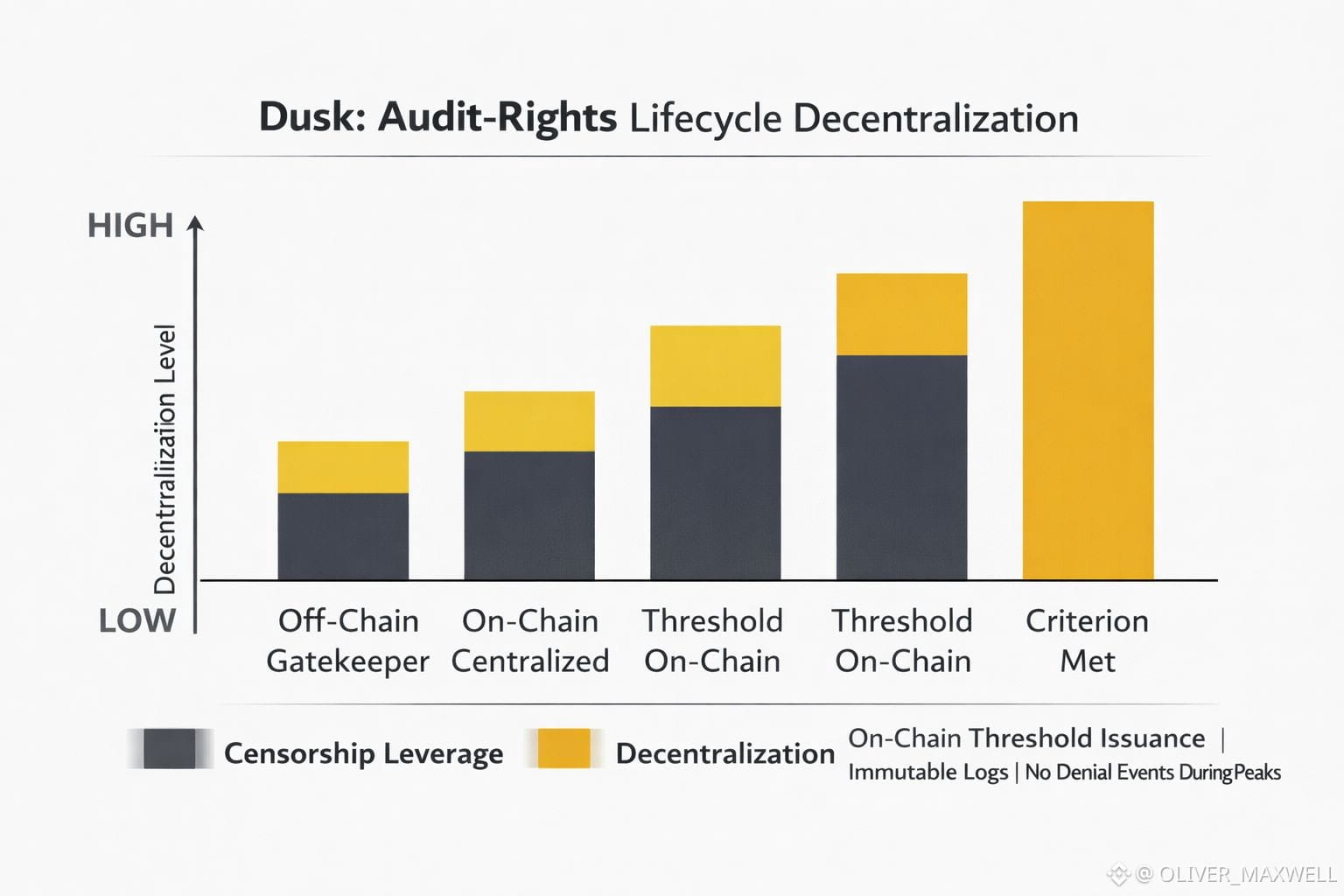

There are two versions of this system. In one version, audit rights are effectively administered by a small, recognizable set of entities: a foundation, a compliance committee, a handful of whitelisted auditors, a vendor that runs the “compliance module,” maybe even just one multisig that can authorize disclosure or freeze the ability to transact under certain conditions. That system can be responsive. It can satisfy institutions that want clear accountability. It can react quickly to regulators. It can reduce headline risk. It can also be captured. It can be coerced. And because much of this happens outside the base protocol, it can be done quietly. The chain remains “decentralized” in the narrow consensus sense while the economically meaningful decision-making funnels through an off-chain choke point.

In the other version, the audit-rights lifecycle is treated as first-class protocol behavior. Authorization events are publicly verifiable on-chain. Rotation and revocation are also on-chain. There are immutable logs for who was granted what scope and for how long. The system uses threshold issuance where no single custodian can unilaterally grant, alter, or revoke audit capabilities. If there are emergency powers, they are explicit, bounded, and auditable after the fact. If disclosure triggers exist, they are constrained by protocol-enforced rules rather than “we decided in a call.” This version is harder to capture and harder to coerce quietly. It also forces Dusk to wear its governance choices in public, which is exactly what many “regulated” systems try to avoid.
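
The second version’s two core properties are threshold authorization and a log that independent observers can check. A minimal Python sketch of both follows, under loud assumptions. “Approval” here is just counting distinct committee member names; a real design would verify threshold signatures or on-chain multisig approvals instead. The hash chain only makes after-the-fact edits detectable. Actual immutability would come from the chain’s consensus, which this sketch does not model. `ThresholdAuditLog` and its methods are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class ThresholdAuditLog:
    """Hypothetical k-of-n authorization with a hash-chained, append-only log."""
    committee: frozenset
    threshold: int
    entries: list = field(default_factory=list)

    def authorize(self, action: str, grant: dict, approvers: set) -> dict:
        valid = set(approvers) & self.committee   # ignore non-members
        if len(valid) < self.threshold:
            raise PermissionError(
                f"only {len(valid)} of {self.threshold} required approvals")
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"action": action, "grant": grant,
                "approvers": sorted(valid), "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify_chain(self) -> bool:
        """Anyone holding the log can recompute it; any edit breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = ThresholdAuditLog(frozenset({"a", "b", "c", "d", "e"}), threshold=3)
log.authorize("issue", {"holder": "auditor-x", "scope": "flow-42", "ttl_days": 30},
              {"a", "b", "c"})
try:
    log.authorize("revoke", {"holder": "auditor-x"}, {"a"})   # one custodian alone
except PermissionError as err:
    print(err)                    # only 1 of 3 required approvals
print(log.verify_chain())         # True
log.entries[0]["grant"]["ttl_days"] = 365   # a quiet after-the-fact edit
print(log.verify_chain())         # False
```

The design choice worth noticing is that the log records who approved and what scope was granted, but not the private transaction content. That is also why it can leak cadence and patterns even when it leaks no amounts.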

That’s the trade-off people miss. If Dusk pushes audit governance on-chain, it gains credibility as infrastructure, because the market can verify that compliance does not equal arbitrary control. But it also inherits friction. On-chain governance is slower and messier. Threshold systems create operational overhead. Public logs, even if they don’t reveal transaction content, can reveal patterns about when audits happen, how often rights are rotated, which types of permissions are frequently invoked, and whether the system is living in a perpetual “exception state.” Worse, every additional control-plane mechanism is an attack surface. If audit rights have real economic impact, then attacking the audit plane becomes more profitable than attacking consensus. You don’t need to halt blocks if you can selectively make high-value participants non-functional.

There’s also a deeper institutional tension that doesn’t get said out loud. Many institutions that Dusk is courting don’t actually want decentralized audit governance. They want a name on the contract. They want a party they can sue. They want a help desk. They want someone who can say “yes” or “no” on a deadline. Dusk can win adoption by giving them that. But if Dusk wins that way, then the chain’s most important promise changes from censorship resistance to service-level compliance. That might be commercially rational, but it should not be priced like neutral infrastructure. It should be priced like a permissioned control system that happens to settle on a blockchain.

So when I evaluate Dusk through this lens, I’m not trying to catch it in a gotcha. I’m trying to locate the true trust boundary. If the trust boundary is “consensus and cryptography,” then the protocol is the product. If the trust boundary is “the people who can grant and revoke disclosure,” then governance is the product. And governance, in regulated finance, is where capture happens. It’s where jurisdictions bite. It’s where quiet pressure gets applied. It’s where the most damaging failures occur, because they look like compliance decisions rather than system faults.

This is why I consider the angle falsifiable, not just vibes. If Dusk can demonstrate that audit rights are issued, rotated, and revoked in a way that is publicly verifiable on-chain, with no single custodian, and with immutable logs that allow independent observers to audit the auditors, then the core centralization fear weakens dramatically. If, across several months that include peak-volume periods, there are no correlated revocations or refused authorizations at the audit-rights interface during high-stakes flows, no pattern where “sensitive” activity reliably gets throttled while everything else runs, and no dependency on a small off-chain gatekeeper to keep the system usable, then the market’s “built-in auditability” story starts to deserve its premium.
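
The “correlated revocations” test can be framed as a simple screen over authorization outcomes, assuming someone can observe them. The Python sketch below is hypothetical throughout. `AuthRequest`, the `high_value` cutoff, and the peak-window flag are illustrative assumptions, and the sample data is made up purely to exercise the function. The screen compares the refusal rate for high-value flows during peak windows against everyone else’s.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuthRequest:
    value: float    # notional value of the flow
    peak: bool      # fell inside a peak-volume window
    refused: bool   # authorization refused or disclosure capability revoked


def refusal_rate(reqs: list) -> float:
    return sum(r.refused for r in reqs) / len(reqs) if reqs else 0.0


def selective_throttling_ratio(reqs: list, high_value: float) -> float:
    """Refusal rate for high-value, peak-window flows divided by everyone else's.

    A ratio near 1.0 is roughly what you would expect if refusals are unrelated
    to stakes; a ratio that stays well above 1.0 for months is the warning sign.
    """
    def is_sensitive(r: AuthRequest) -> bool:
        return r.peak and r.value >= high_value

    sensitive = refusal_rate([r for r in reqs if is_sensitive(r)])
    baseline = refusal_rate([r for r in reqs if not is_sensitive(r)])
    if baseline == 0.0:
        return float("inf") if sensitive > 0 else 1.0
    return sensitive / baseline


# Made-up numbers, only to exercise the function.
reqs = ([AuthRequest(1e3, False, i < 2) for i in range(100)]    # routine: 2 of 100 refused
        + [AuthRequest(5e7, True, i < 3) for i in range(10)])   # sensitive: 3 of 10 refused
print(round(selective_throttling_ratio(reqs, high_value=1e6), 2))  # 15.0
```

A single elevated ratio proves little, because high-value flows can have legitimate reasons to draw more scrutiny. The claim only becomes falsifiable when the ratio is tracked over time against publicly logged authorization events.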

If, instead, the operational reality is that Dusk’s compliance posture depends on a small set of actors who can quietly change disclosure policy, quietly rotate keys, quietly authorize exceptions, or quietly deny service, then I don’t care how elegant the privacy tech is. The decentralization story is already compromised at the layer that matters. You end up with a chain that can sell privacy to users and sell control to institutions, and both sides will eventually notice they bought different products.

That’s the bet I think Dusk is really making, whether it says it or not. It’s betting it can turn selective disclosure into a credible, decentralized protocol function rather than a human-administered privilege. If it succeeds, it earns a rare position: regulated privacy that doesn’t collapse into a soft permissioned system. If it fails, the chain may still grow, but it will grow as compliance infrastructure with a blockchain interface, not as neutral financial rails. And those two outcomes should not be priced the same.