It is easy to talk about churn as if it begins with absence.

A player stops showing up, the login streak breaks, the account goes quiet, and only then does the discussion begin. On paper, that makes sense. A clean date. A visible endpoint. Something easy to count. But the more closely you look at how players drift away from a game like Pixels, the less convincing that framing feels.

People usually do not leave all at once.

What happens instead is quieter. They are still around, technically. They log in. They spend some energy. They touch the game just enough to look active from a distance. But something has already shifted. The rhythm is weaker. The intent is weaker. The return is less certain. A quest sits unfinished. A session ends without a real reason to come back later. The player has not vanished yet, but they are no longer fully inside the game either.

That in-between state matters more than most teams treat it.

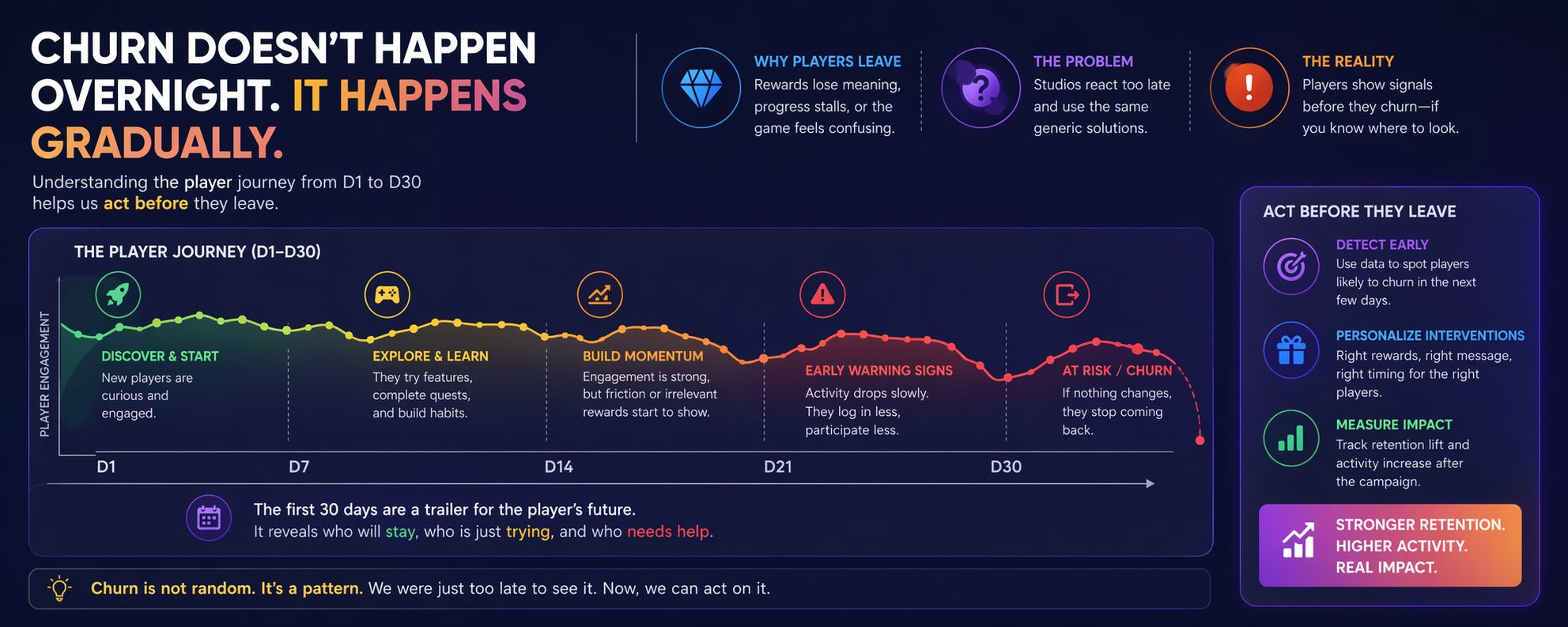

What stands out in the way Stacked looks at this is that it refuses to reduce churn to a single final moment. It pays attention to the stretch from day one to day thirty, which is a far more revealing period than many retention dashboards suggest. Those early weeks do not just show whether someone is active. They start to reveal what kind of relationship that player is building with the game. Some are settling into habit. Some are only visiting out of curiosity. Some clearly want to stay, but keep running into small forms of friction that slowly wear that intention down.

And that distinction matters, because not all departures come from the same place.

A high-value player may leave not because they lost interest in the game itself, but because the rewards no longer connect to what progression means for them. A more casual player may disappear for almost the opposite reason: the game never became legible enough in the first place. One is under-stimulated. The other is under-oriented. Yet a surprising number of studios still respond to both with the same blunt instruments: more events, more bonuses, more noise.

That approach survives because it is convenient, not because it is precise.

The more interesting observation is that players often signal their exit before they actually take it. Their activity does not collapse overnight. It thins out. The graph softens. Engagement becomes inconsistent. The decline is not dramatic enough to feel urgent, which is probably why it gets missed. But that slow drop often says more than the final zero ever could. By the time a player is fully gone, the real process has already happened.
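
To make the idea of a "thinning" signal concrete, here is a minimal sketch, not a description of how Stacked actually measures it. It assumes you have a per-player list of daily session counts, and it compares the most recent week with the week before. The function names, the 7-day window, and the 0.6 threshold are all illustrative assumptions.

```python
from statistics import mean


def fade_ratio(daily_sessions, window=7):
    """Mean activity in the latest window divided by the mean of the window before it.

    Returns None when there is not enough history or no earlier activity to compare against.
    """
    if len(daily_sessions) < 2 * window:
        return None
    recent = daily_sessions[-window:]
    previous = daily_sessions[-2 * window:-window]
    baseline = mean(previous)
    if baseline == 0:
        return None
    return mean(recent) / baseline


def is_fading(daily_sessions, window=7, threshold=0.6):
    """True when the player is still showing up, but noticeably less than before.

    The threshold is a hypothetical value chosen for illustration only.
    """
    ratio = fade_ratio(daily_sessions, window)
    if ratio is None:
        return False
    still_present = any(daily_sessions[-window:])  # a flat zero means already gone
    return still_present and ratio < threshold


steady = [3] * 14
thinning = [3, 3, 3, 3, 3, 3, 3, 2, 1, 1, 0, 1, 0, 1]
gone = [3] * 7 + [0] * 7

print(is_fading(steady), is_fading(thinning), is_fading(gone))
```

The point of separating "fading" from "gone" is the same as the point of the paragraph above: the player with a softening graph is still reachable, while the player at zero has already finished leaving.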

So the more uncomfortable question is not why players churned once they are already gone. It is why teams keep waiting for certainty when the warning signs are already visible.

Once you start looking at churn this way, the triggers also become less theatrical than people expect. Sometimes the issue is not that the game is broken or boring. Sometimes it is much smaller, and therefore easier to overlook. A reward arrives that has no value to that specific player. Progress begins to feel sticky rather than satisfying. An event appears, but has nothing to do with the way that person actually plays. None of these things sound dramatic in isolation. In aggregate metrics, they barely register. But in the lived experience of a player, these small mismatches accumulate into a quiet loss of momentum.

And momentum, once interrupted often enough, is difficult to restore through generic generosity.

That is why the most useful part of this kind of system is not simply that it identifies a problem. Plenty of analytics tools can point at decline after the fact. The more meaningful step is moving from recognition to action while the player is still reachable. Not with a giant feature release. Not with a months-long roadmap adjustment. Sometimes the response can be narrow and immediate: a modest campaign for a specific segment, a reward that actually aligns with their progress, an intervention designed for the reason they are fading rather than for churn in the abstract.
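
As a rough illustration of "an intervention designed for the reason they are fading", the sketch below maps a guessed reason to a narrow response. Everything in it is hypothetical: the `PlayerSnapshot` fields, the spend cutoff used as a stand-in for "high-value", the unfinished-quest rule, and the intervention labels are assumptions made for the example, not anything taken from Pixels or Stacked.

```python
from dataclasses import dataclass


@dataclass
class PlayerSnapshot:
    player_id: str
    lifetime_spend: float      # hypothetical proxy for a "high-value" player
    tutorial_completed: bool   # hypothetical proxy for whether the game became legible
    quests_unfinished: int


def diagnose(player, high_value_spend=50.0, stalled_quests=3):
    """Guess why a fading player is fading. Both cutoffs are illustrative assumptions."""
    if player.lifetime_spend >= high_value_spend:
        # Invested player: more likely that rewards stopped matching their progression.
        return "under_stimulated"
    if not player.tutorial_completed or player.quests_unfinished >= stalled_quests:
        # Casual player: more likely the game never became clear enough.
        return "under_oriented"
    return "unclear"


# One narrow response per reason, instead of one campaign for churn in the abstract.
INTERVENTIONS = {
    "under_stimulated": "reward tied to current progression tier",
    "under_oriented": "guided quest with a clear next step",
    "unclear": "hold and observe",
}


def plan(player):
    return INTERVENTIONS[diagnose(player)]


invested = PlayerSnapshot("a", 120.0, True, 0)
newcomer = PlayerSnapshot("b", 0.0, False, 1)
print(plan(invested))
print(plan(newcomer))
```

A real system would need far better evidence than two fields and a cutoff. The design choice worth keeping is the shape: diagnose first, then pick a response, and allow "unclear" as an answer rather than defaulting to more bonuses.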

That is where the story becomes less speculative and more practical.

What seems to surprise teams is that the intervention does not always need to be dramatic to matter. A small, well-timed push can alter behavior enough to show up in retention and activity a few days later. Not because players were manipulated into returning, but because the game met them at the right moment with something relevant. That difference is subtle, but important. It shifts retention from wishful thinking into something closer to response design.

After looking at it this way, churn feels less like randomness and more like delayed recognition.

The pattern was there. The signals were there. The reasons were there too, though often buried under averages and broad campaign logic. What changed was not the existence of the problem, but the willingness to notice that leaving usually begins before the player is counted as gone. And once you see that clearly, the old habit of reacting at the very end starts to look less like strategy and more like hesitation.