A few nights ago the electricity went out in my area for about ten minutes. No drama, just one of those quiet blackouts. What struck me wasn’t the darkness. It was how many things stopped at once. The elevator froze. The internet router blinked off. Even the small grocery store downstairs couldn’t process payments because the system that authorizes transactions wasn’t reachable. No human made a decision in that moment. A chain of systems simply failed together.

We talk about “governance” as if it only belongs to governments or boards of directors. But most of our daily life is already governed by software. Your bank transfer clears because a set of automated checks approves it. Your ride-hailing account is suspended because an algorithm flags unusual behavior. There’s no committee debating your case. There’s code, running rules that someone defined long ago. Quietly. At scale.

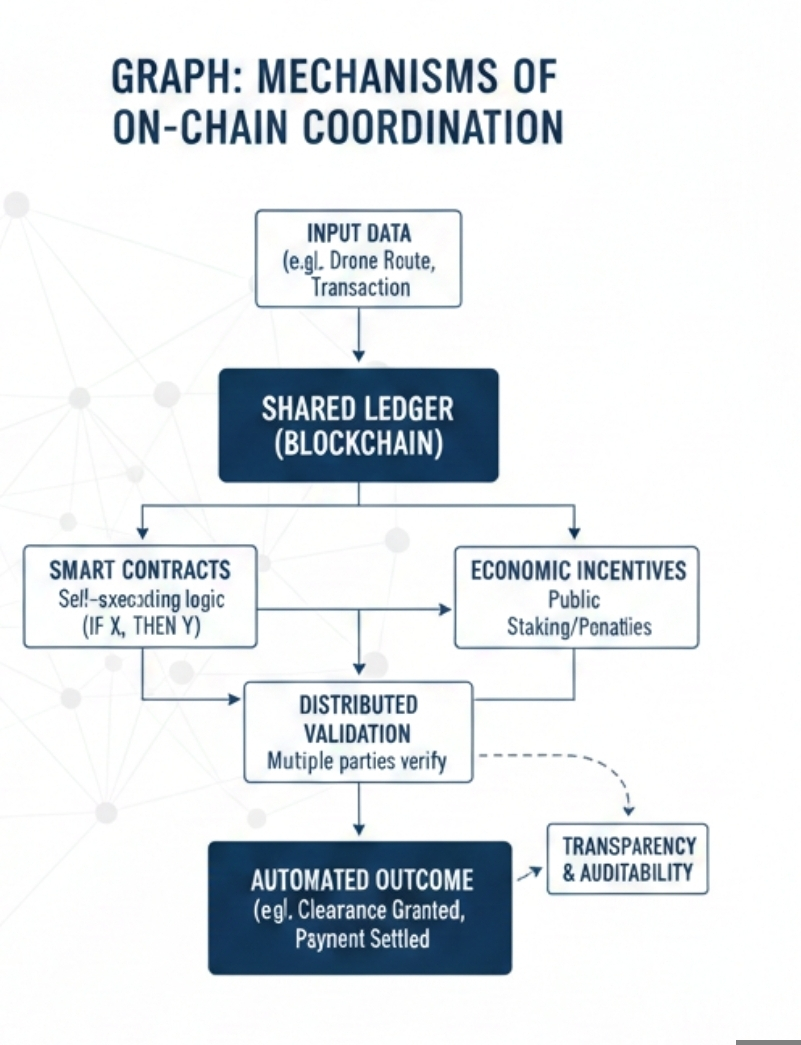

Fabric’s on-chain coordination model sits inside that reality, but it makes something explicit that is usually hidden. Instead of governance happening inside a private company server, it moves the rules into a shared blockchain environment. “On-chain” just means the logic and records live on a distributed ledger—basically a database copied across many independent computers so no single party controls it. That sounds technical, and it is. But the real shift isn’t technical. It’s philosophical.

If machines are going to interact with other machines—delivery robots, autonomous vehicles, trading bots—they need a way to agree on outcomes. Not by emailing each other. Not by waiting for a human manager. They need coordination embedded into infrastructure. Fabric tries to encode that coordination into smart contracts, which are self-executing programs that automatically run when predefined conditions are met. No discretion. If X happens and proof is submitted, Y follows.
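To make "if X happens and proof is submitted, Y follows" concrete, here is a minimal sketch in plain Python. It is not Fabric's API and not a real smart contract language. The `Escrow` class, the hash-based proof, and the order string are all assumptions I made up for illustration: a payment to a delivery robot is released only when the submitted proof hashes to a value agreed in advance.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class Escrow:
    """Hypothetical escrow: pays out once a matching proof is submitted."""
    amount: int
    expected_proof_hash: str  # SHA-256 hex digest agreed on up front
    released: bool = False

    def submit_proof(self, proof: bytes) -> bool:
        """Release the funds if the proof matches. No discretion involved."""
        if self.released:
            # Already paid out; a second submission changes nothing.
            return False
        if hashlib.sha256(proof).hexdigest() != self.expected_proof_hash:
            # Wrong proof: the rule does not fire.
            return False
        self.released = True
        return True


# Usage: the robot and the customer agree on the proof's hash beforehand.
proof = b"delivered:order-42"
escrow = Escrow(amount=10, expected_proof_hash=hashlib.sha256(proof).hexdigest())
escrow.submit_proof(b"I promise I delivered it")  # rejected
escrow.submit_proof(proof)                        # accepted, funds released
```

Notice there is nowhere in that code to plead your case. The only way to get a different outcome is to submit a different input.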

I used to think automation was mostly about speed. Make it faster, reduce cost, remove friction. Lately I’ve started to think it’s more about authority. Who decides what counts as valid? Who enforces the rules? In most digital systems today, the answer is simple: the platform. The company owns the server, defines the policy, and can change it at will. Fabric pushes that authority outward. The rules are public. The validation process is shared. Once recorded, outcomes are hard to reverse.

That matters more than people realize. When rules are transparent and tamper-resistant, behavior changes. We see a softer version of this on Binance Square. Creators know that visibility is shaped by engagement metrics, ranking systems, and algorithmic signals. Even without reading the full documentation, you feel the incentives. You adjust your posting time. You refine your tone. You respond quickly to comments because activity affects reach. The dashboard doesn’t shout instructions, but it nudges you constantly. Fabric’s model works the same way for machines. Incentives are coded into the protocol. Act within parameters, and you’re rewarded. Deviate, and the network penalizes you.

There’s something slightly unsettling about that. Incentives are powerful. If the reward system is misaligned, behavior distorts quickly. In human systems, at least you can appeal to context. You can explain. In machine governance, the appeal process is often just another rule. That rigidity can be a strength. It can also feel cold.

Consider a network of autonomous drones sharing airspace. Each drone submits data about its route and energy levels. The protocol checks whether it meets safety thresholds. If yes, it grants clearance. If not, it denies access automatically. No phone call. No negotiation. That clarity reduces accidents. But it also removes flexibility. What if an emergency requires bending the rules? You have to anticipate that scenario in advance and code for it. If you forget, the system won’t improvise.
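The drone scenario can be sketched in a few lines of Python. The thresholds, field names, and the emergency rule below are hypothetical, chosen only to show the shape of the problem. What I want to highlight is the emergency branch: it exists because someone thought to write it, and for no other reason.

```python
from dataclasses import dataclass

# Hypothetical safety thresholds; a real protocol would define its own.
MIN_BATTERY_PCT = 30.0
MAX_ALTITUDE_M = 120.0


@dataclass(frozen=True)
class FlightRequest:
    drone_id: str
    battery_pct: float
    max_altitude_m: float
    emergency: bool = False


def grant_clearance(req: FlightRequest) -> bool:
    """Return True only if the request passes every coded rule."""
    within_altitude = req.max_altitude_m <= MAX_ALTITUDE_M
    if req.emergency:
        # Anticipated exception: emergencies skip the battery check,
        # but still have to respect the altitude limit.
        return within_altitude
    return within_altitude and req.battery_pct >= MIN_BATTERY_PCT


# Usage
grant_clearance(FlightRequest("d1", battery_pct=80, max_altitude_m=100))  # cleared
grant_clearance(FlightRequest("d2", battery_pct=10, max_altitude_m=100))  # denied
grant_clearance(FlightRequest("d3", battery_pct=10, max_altitude_m=100, emergency=True))  # cleared
```

Delete the `if req.emergency` branch and the third drone is grounded, no matter how urgent its situation is.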

Fabric leans on distributed validation to prevent abuse. Instead of trusting a single operator, multiple participants check whether an action follows the agreed logic. Often this involves staking, where validators lock tokens as collateral to signal they are acting honestly. If they validate false information, they lose part of that stake. It’s an economic incentive system. Simple in theory. Harder in practice.
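Here is a toy version of that incentive, again in plain Python and under assumptions of my own. I am assuming the network accepts whatever the stake-weighted majority approves, and that validators on the losing side forfeit a fixed percentage of their stake. The 10 percent figure and the names are invented, and Fabric's actual rules may look quite different.

```python
from dataclasses import dataclass

SLASH_PERCENT = 10  # hypothetical penalty, in percent of stake


@dataclass
class Validator:
    name: str
    stake: int  # tokens locked as collateral


def settle(validators, approvals, slash_percent=SLASH_PERCENT):
    """Decide by stake-weighted majority, then slash the dissenters.

    `approvals` maps validator name -> True (approve) or False (reject).
    Returns the accepted outcome and mutates stakes in place.
    """
    yes = sum(v.stake for v in validators if approvals[v.name])
    no = sum(v.stake for v in validators if not approvals[v.name])
    accepted = yes > no  # ties count as rejection in this sketch
    for v in validators:
        if approvals[v.name] != accepted:
            v.stake -= v.stake * slash_percent // 100
    return accepted


# Usage
vals = [Validator("a", 100), Validator("b", 100), Validator("c", 50)]
outcome = settle(vals, {"a": True, "b": True, "c": False})
# outcome is True; validator "c" now holds 45 tokens instead of 50
```

Even this toy exposes the hard part. "False information" here means nothing more than "what the majority of stake disagreed with", which is a definition you could spend a long time arguing about.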

Because incentives don’t just encourage honesty. They encourage optimization. And optimization sometimes turns into gaming. If enough value flows through the system, participants may search for edge cases. They may coordinate quietly. They may concentrate power by accumulating more tokens. Decentralization is not immune to hierarchy. It just expresses it differently.

What I find most interesting is not the technical layer but the social layer underneath. When governance moves on-chain, responsibility becomes shared and diffuse. If something goes wrong—say a smart contract executes in a way that causes loss—who is accountable? The developer who wrote the code? The token holders who voted for it? The validators who confirmed transactions? Machine governance blurs traditional lines of liability. That has legal implications, but also moral ones.

There’s another angle that doesn’t get enough attention. By embedding rules into infrastructure, we freeze assumptions about the world. Every protocol reflects a worldview. What counts as valid proof. What counts as fair compensation. What counts as misconduct. Those definitions are written once and then scaled globally. Updating them is possible, but it requires coordination again. Governance doesn’t disappear. It just shifts layers.

In environments driven by AI, this becomes even more complex. AI systems produce outputs that may contain uncertainty or error. If Fabric-style governance validates those outputs before they are accepted on-chain, the validation logic becomes critical. How do you measure correctness? How do you quantify credibility? Ranking systems and evaluation dashboards influence which validators are trusted more. Over time, reputational scores form. Machines begin to prefer interacting with high-scoring peers. That sounds efficient. It can also create feedback loops where early advantages compound.
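That compounding effect is easy to see in a deliberately crude simulation. Assume, purely for illustration, that every job goes to whichever peer currently has the highest reputation score, and that completing a job adds one point. Neither rule comes from Fabric; they are just the simplest version of "prefer high-scoring peers" I could write down.

```python
def simulate(scores, rounds):
    """Give each job to the top-scoring peer, who gains one point for it.

    `scores` maps peer name -> starting reputation. Returns a new dict.
    """
    scores = dict(scores)  # copy so the caller's data is untouched
    for _ in range(rounds):
        # On a tie, max() returns the first top-scoring peer in dict order.
        chosen = max(scores, key=scores.get)
        scores[chosen] += 1
    return scores


# Usage: peer "a" starts with a one-point head start.
print(simulate({"a": 11, "b": 10, "c": 10}, rounds=10))
# {'a': 21, 'b': 10, 'c': 10}
```

A one-point lead turns into an eleven-point gap, and the other two peers never get a chance to prove themselves. Real systems soften this with randomness or decay, but the pull is the same.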

I don’t think machine governance is inherently good or bad. It feels inevitable. The scale of digital interaction simply outpaces human supervision. But inevitability shouldn’t be confused with neutrality. The design choices inside Fabric’s coordination model—how consensus is reached, how penalties are structured, how upgrades occur—will shape behavior in ways that may not be obvious at first.

What gives me cautious optimism is the transparency element. Unlike opaque corporate algorithms, on-chain rules can be inspected. You can read the contract. You can analyze transaction history. That visibility doesn’t guarantee fairness, but it gives people tools to question the system. In a world increasingly governed by invisible code, that alone has value.

Still, I keep thinking about that blackout. When systems fail, we are reminded how dependent we’ve become. Machine governance promises resilience through distribution. No single server. No single authority. But distribution introduces complexity. And complexity introduces new forms of fragility.

Maybe the real shift isn’t that machines are governing. It’s that we are choosing to let them govern in structured, programmable ways. Fabric doesn’t create that impulse. It formalizes it. It says, if governance is going to happen through software anyway, let’s make the rules shared, visible, and economically aligned.

That’s a pragmatic approach. Not utopian. Not dystopian. Just a recognition that coordination at scale requires infrastructure. Whether that infrastructure ultimately empowers participants or subtly constrains them will depend on how carefully we design it—and how willing we are to question it once it’s running.