Artificial intelligence has become deeply embedded in modern digital infrastructure. Systems now generate research summaries, write software, analyze data, and assist in decision-making across industries. Yet despite the impressive capabilities of these models, a persistent problem continues to shape the conversation around AI reliability. Even the most advanced models can produce confident answers that are incorrect, incomplete, or biased. This issue, often described as hallucination, is not simply a technical inconvenience. It represents a structural limitation in how AI systems generate information.

Mira Network emerges from this context as an attempt to rethink how AI outputs are evaluated and trusted. Rather than relying on a single model or centralized authority to determine whether an answer is correct, Mira proposes a decentralized verification layer. The protocol reframes the question of AI reliability as a problem of distributed consensus. In this design, information produced by AI is not accepted at face value. Instead, it becomes a claim that must be verified by multiple independent systems working together through a blockchain-based framework.

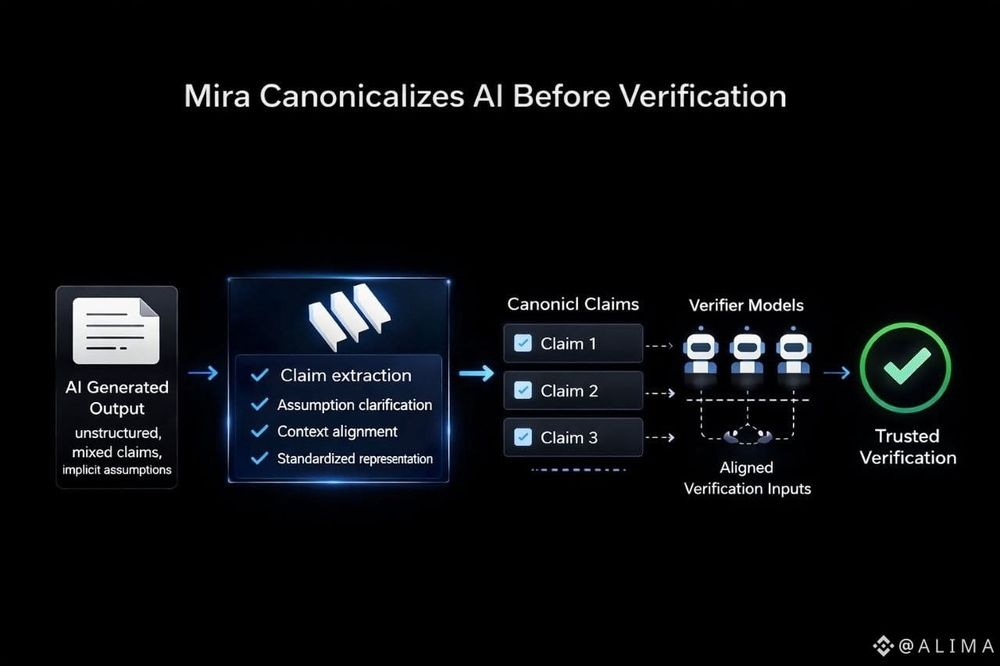

The idea behind Mira Network is relatively straightforward but carries deeper implications for how information is validated in automated environments. When an AI system generates a complex response, that response can be decomposed into smaller, verifiable claims. Each claim can then be evaluated by a network of independent models that attempt to confirm or challenge its validity. Through a coordinated process, the network arrives at a consensus regarding whether the information is trustworthy.

This process resembles the way decentralized networks validate transactions. In traditional blockchains, no single participant determines whether a transaction is legitimate. Instead, multiple validators collectively confirm the state of the ledger. Mira adapts a similar principle to the domain of information verification. The goal is to transform AI outputs into something closer to verifiable data rather than unexamined text generated by a single model.

The architecture of Mira Network rests on several conceptual layers. At the base level lies the generation of content by an AI model. This content might include explanations, data analysis, or responses to complex queries. Once generated, the content is broken into atomic statements that can be individually assessed. Each statement becomes a unit of verification that can be tested by other AI systems participating in the network.

These verification tasks are distributed across nodes that run independent models. Each node evaluates the claim and submits its judgment to the network. The system then aggregates these judgments and applies a consensus mechanism to determine the final outcome. If a sufficient level of agreement is reached among the verifying nodes, the claim can be considered verified within the context of the protocol.

The use of multiple models is an important design choice. AI systems often make mistakes for different reasons, depending on their training data and architecture. By distributing verification across diverse models, the network reduces the likelihood that a single bias or error pattern will dominate the outcome. Instead of relying on the authority of one system, Mira relies on the statistical strength of collective evaluation.

Blockchain infrastructure provides the coordination layer that makes this process possible. The ledger records verification results and maintains a transparent history of decisions made by the network. This record serves as a form of accountability. Each claim and its verification process become part of an auditable structure, allowing participants to understand how conclusions were reached.

The presence of the token within this system reflects another dimension of the protocol’s design. In decentralized networks, incentives often play a crucial role in coordinating participation. The token functions as a mechanism for rewarding nodes that contribute verification work and behave according to the protocol’s rules. At the same time, the system can impose penalties on participants whose behavior undermines the integrity of the verification process.

Economic incentives in decentralized systems are not merely financial constructs. They are tools for shaping behavior in open networks where participants may not know or trust one another. By linking verification activity to incentives, Mira attempts to encourage accurate evaluations while discouraging manipulation or careless participation. The token therefore acts as an operational component of the protocol’s governance and coordination structure.

Another aspect of Mira Network’s design is its emphasis on modularity. AI technology evolves rapidly, and new models are introduced regularly. A verification network that depends on a single model or architecture would quickly become outdated. Mira addresses this challenge by allowing different models to participate in the network over time. As new systems emerge, they can potentially join the verification process and contribute their perspective to the evaluation of claims.

This modular approach reflects a broader trend in decentralized infrastructure. Instead of building rigid systems that depend on fixed components, developers increasingly design protocols that can integrate new tools as they become available. For Mira, this flexibility is particularly important because the field of artificial intelligence is characterized by constant experimentation and improvement.

At a deeper level, Mira Network represents an attempt to bridge two distinct technological domains. Artificial intelligence excels at generating and interpreting complex information. Blockchain systems excel at coordinating decentralized actors and maintaining transparent records. By combining these capabilities, Mira aims to create an environment in which AI outputs can be systematically verified rather than simply accepted.
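To make the aggregation step more concrete, here is a minimal Python sketch of how judgments from several nodes might be combined into one outcome for a claim. It is an illustration only, not Mira's actual mechanism: the `Verdict` type, the `aggregate` function, the label strings, and the 0.67 agreement threshold are all assumptions introduced for this example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    node_id: str   # hypothetical identifier of a verifying node
    claim_id: str  # hypothetical identifier of the claim being judged
    label: str     # "valid" or "invalid" (assumed label set)


def aggregate(verdicts, threshold=0.67):
    """Return the majority label if its share of votes meets the threshold.

    The threshold value is an assumption; a real protocol would define
    its own agreement rule.
    """
    if not verdicts:
        return "undecided"
    counts = Counter(v.label for v in verdicts)
    label, votes = counts.most_common(1)[0]
    if votes / len(verdicts) >= threshold:
        return label
    return "undecided"


verdicts = [
    Verdict("node-a", "claim-1", "valid"),
    Verdict("node-b", "claim-1", "valid"),
    Verdict("node-c", "claim-1", "valid"),
    Verdict("node-d", "claim-1", "invalid"),
]
outcome = aggregate(verdicts)  # three of four nodes agree
```

Returning an explicit "undecided" result when agreement is too low keeps a split vote from being reported as a verified claim.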

The challenge of verification becomes especially significant when AI systems operate in contexts where accuracy matters. Automated decision-making, research assistance, and data analysis all depend on reliable information. When AI systems produce incorrect outputs, the consequences can propagate through larger workflows. Mira’s approach suggests that reliability should not depend solely on improving individual models. Instead, reliability can emerge from structured collaboration among many systems.

The concept of distributed verification also introduces an interesting philosophical shift. Traditionally, information has often been validated through centralized institutions such as academic journals, regulatory bodies, or editorial oversight. In digital environments driven by automated systems, these traditional forms of validation may not scale effectively. A decentralized network offers an alternative model in which verification is performed collectively by participants distributed across the network.

This does not eliminate the complexity of determining truth or accuracy. AI models themselves are imperfect tools for evaluation, and consensus among models does not guarantee correctness. However, the protocol attempts to mitigate these limitations by structuring the verification process in a transparent and accountable manner. Each step of evaluation becomes visible within the system’s record, allowing observers to understand how judgments were formed.
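One common way to make a record of evaluations tamper-evident is to chain entries together by hash, so that changing an earlier entry invalidates everything after it. The sketch below illustrates that general technique in Python. It does not describe how Mira's ledger is implemented, and the record fields and function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder hash for the first entry


def _digest(prev_hash, record):
    # Serialize deterministically so the same record always hashes the same.
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_record(log, record):
    """Append a record whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {
        "record": record,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, record),
    }
    log.append(entry)
    return entry


def verify_log(log):
    """Recompute every hash and confirm the chain is unbroken."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_record(log, {"claim_id": "claim-1", "outcome": "valid"})
append_record(log, {"claim_id": "claim-2", "outcome": "invalid"})
intact = verify_log(log)
```

An observer who holds only the latest hash can detect any later edit to earlier records by re-running `verify_log`.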

The decomposition of complex content into smaller claims is another significant design element. AI outputs often appear as long passages of text, making it difficult to isolate specific assertions. By breaking responses into individual statements, Mira transforms an abstract piece of text into a set of discrete propositions that can be tested. This granular approach allows the network to examine information more systematically.

From a technical perspective, this method resembles the logic used in formal reasoning systems. Instead of evaluating an entire argument at once, the system examines each premise and inference separately. If a particular statement fails verification, it can be flagged without necessarily rejecting the entire piece of content. This creates a more nuanced understanding of reliability.
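A short Python sketch can illustrate the idea of checking statements one at a time and flagging only the ones that fail. The sentence splitter here is a deliberately naive stand-in for claim extraction, and `verify` is a hypothetical callback representing whatever the network decides for a claim. Neither reflects Mira's actual method.

```python
import re


def split_into_claims(text):
    """Naive stand-in for claim extraction: one sentence per claim."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def review(text, verify):
    """Check each claim separately and report which ones failed.

    `verify` is a hypothetical callable taking a claim string and
    returning True (verified) or False (failed).
    """
    claims = split_into_claims(text)
    flagged = [c for c in claims if not verify(c)]
    verified = len(claims) - len(flagged)
    share = verified / len(claims) if claims else 0.0
    return {"claims": claims, "flagged": flagged, "verified_share": share}


def toy_verifier(claim):
    # Toy rule for the demo only: reject anything mentioning cheese.
    return "cheese" not in claim


report = review(
    "Water boils at 100 C at sea level. The Moon is made of cheese.",
    toy_verifier,
)
```

The report keeps the verified statement and isolates the failed one, rather than discarding the whole response.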

The presence of independent verification nodes also raises questions about coordination and trust. In decentralized systems, participants may have varying incentives or levels of expertise. Mira attempts to address this challenge through mechanisms that track the performance and reputation of nodes over time. Participants who consistently provide reliable evaluations can gain greater influence within the network, while those who behave unpredictably may lose credibility.

Reputation systems are not new in distributed networks, but their application to AI verification introduces new complexities. Determining whether a verification judgment is accurate can itself be a difficult task. The protocol must therefore rely on patterns of agreement across the network rather than definitive proof in every instance. This creates a dynamic environment in which trust emerges gradually through repeated interactions.
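As a rough illustration of reputation built from patterns of agreement, the Python sketch below weights each node's vote by its current reputation, then nudges reputations up or down depending on whether the node agreed with the resulting outcome. The update rule, step size, floor, and function names are assumptions chosen for the example, not details of Mira's design.

```python
def weighted_outcome(votes, reputation, default_weight=1.0):
    """Return True if reputation-weighted support for 'valid' exceeds half.

    votes: dict mapping node id -> bool (True means the node judged the
    claim valid). Unknown nodes get an assumed default weight.
    """
    total = sum(reputation.get(n, default_weight) for n in votes)
    support = sum(
        reputation.get(n, default_weight) for n, v in votes.items() if v
    )
    return support > total / 2


def update_reputation(votes, outcome, reputation,
                      step=0.1, floor=0.1, default_weight=1.0):
    """Raise reputation for nodes that matched the outcome, lower it otherwise."""
    updated = dict(reputation)
    for node, vote in votes.items():
        current = updated.get(node, default_weight)
        if vote == outcome:
            updated[node] = current + step
        else:
            # The floor keeps a node from dropping to zero influence.
            updated[node] = max(floor, current - step)
    return updated


votes = {"node-a": True, "node-b": True, "node-c": False}
reputation = {"node-a": 1.0, "node-b": 1.0, "node-c": 1.0}
outcome = weighted_outcome(votes, reputation)
reputation_after = update_reputation(votes, outcome, reputation)
```

Because agreement with the majority is only a proxy for accuracy, a rule like this can also reward nodes for being wrong together, which is the limitation the paragraph above points to.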

Another dimension worth considering is the relationship between human oversight and automated verification. Mira Network primarily focuses on AI models as participants in the verification process. However, the outcomes produced by the network may ultimately be interpreted by human users who rely on these systems for information. The transparency provided by the blockchain layer allows these users to examine how conclusions were reached.

This transparency does not guarantee that users will always understand the technical details of the verification process. Yet it provides an alternative to opaque AI systems whose outputs cannot be easily traced. In the Mira framework, verification is not hidden within a single algorithm. It becomes a collaborative procedure documented across the network’s ledger.

The emergence of verification protocols such as Mira reflects a broader recognition that artificial intelligence requires new forms of infrastructure. As AI systems become more capable, the question of reliability becomes increasingly important. Rather than focusing solely on improving model accuracy, some projects explore how networks of systems can collectively evaluate information.

Mira’s design illustrates how decentralized principles can be applied beyond financial transactions. Blockchain networks were originally developed to maintain distributed ledgers for digital currencies. Over time, these systems have been adapted for many other purposes, including identity management, data coordination, and governance. In the case of Mira, the blockchain becomes a coordination layer for verifying the outputs of intelligent machines.

The token plays a role in maintaining the operational dynamics of this network. By linking incentives to verification activity, the protocol attempts to create a self-sustaining environment in which participants contribute computational resources and analytical work. The token becomes part of the network’s internal economy, shaping how nodes interact with one another.
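The link between incentives and verification activity can be illustrated with a toy settlement step in Python: nodes whose judgment matched the final outcome receive a reward, and nodes that disagreed lose a fraction of their stake. The `settle` function, the flat reward, and the 10% penalty rate are hypothetical values chosen for illustration; the source does not specify Mira's actual reward or penalty rules.

```python
def settle(stakes, votes, outcome, reward=1.0, slash_rate=0.1):
    """Return new stake balances after one verification round.

    stakes: dict node id -> staked token amount (float)
    votes: dict node id -> bool judgment submitted by that node
    outcome: bool, the final result reached for the claim
    reward and slash_rate are assumed parameters for this sketch.
    """
    balances = dict(stakes)
    for node, vote in votes.items():
        current = balances.get(node, 0.0)
        if vote == outcome:
            balances[node] = current + reward
        else:
            balances[node] = current * (1 - slash_rate)
    return balances


stakes = {"node-a": 100.0, "node-b": 100.0, "node-c": 50.0}
votes = {"node-a": True, "node-b": False}
balances = settle(stakes, votes, outcome=True)
```

Nodes that did not vote in the round, such as `node-c` here, keep their balance unchanged.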

Understanding Mira Network ultimately requires looking beyond the surface description of decentralized verification. The project represents a broader experiment in how information systems might evolve in an era where machines generate a significant portion of digital content. When algorithms produce knowledge at scale, traditional forms of validation may struggle to keep pace. Mira proposes a framework in which verification becomes an integral part of the generation process itself.

In this sense, the network does not simply evaluate information after it is produced. It integrates verification into the lifecycle of AI outputs. Each statement moves through a structured process of examination before being recognized as reliable within the system. This approach attempts to transform AI responses from isolated pieces of text into elements of a collectively validated knowledge structure.

Whether such systems ultimately become central to AI infrastructure remains an open question, but the conceptual direction is clear. Mira Network treats reliability not as a property of a single model but as the result of distributed collaboration. Through a combination of AI evaluation, blockchain coordination, and incentive design, the protocol outlines a method for turning uncertain outputs into information that has undergone systematic scrutiny.

The broader significance of this approach lies in how it reframes trust in automated systems. Instead of asking users to trust the authority of a single AI model, Mira invites a network of independent systems to examine and verify each claim. Trust becomes something constructed through collective verification rather than assumed by default.