Robotics is approaching a crossroads where technical ability is no longer the main constraint. The ability of machines to move, perceive, and act independently has grown quickly, but the systems that coordinate those machines remain immature. As robots move from being isolated industrial devices to autonomous agents operating in shared spaces, the critical issue is not mechanics but governance. Machines that can act on their own need a structure that defines how they relate to one another, how their decisions can be checked, and how responsibility is distributed when their actions affect the physical world.

This new kind of governance question is at the center of the Fabric Foundation's work. Instead of focusing on the physical form of robots or the intelligence of their software, the organization looks at the infrastructure that lets autonomous machines coordinate effectively across institutions, settings, and jurisdictions. Fabric Protocol reflects this focus. It is conceived as a global open network in which robots, humans, and digital systems interact through verifiable computation and agent-native infrastructure, forming a shared operational layer for machines that need to cooperate without centralized control.

The traditional model of robotics never needed such infrastructure. Industrial automation was deployed in tightly controlled settings, where a single firm owned both the robots and the rules they operated under. Factory robots moved in preset patterns that rarely varied. When something went wrong, a human engineer stepped in. Governance was easy because authority was centralized and the operating environment was predictable.

The present generation of robotics systems is quite different. Autonomous delivery machines operate on public streets. Agricultural robots move across large farming networks, constantly gathering environmental data. Inspection drones scan infrastructure owned by several stakeholders. Service robots increasingly interact with people in homes and business premises. Under these circumstances, robots cease to be standalone tools. They are participants in a networked system in which one machine's actions affect another's.

The outcome is a coordination problem that traditional robotics architecture was never designed to solve. Autonomous systems must evaluate information from external sources, establish that it is credible, and confirm that their actions do not conflict with rules that extend beyond a single organization. Without reliable verification, a robot cannot distinguish valid operational signals from potentially dangerous inputs. The more autonomy a machine is given, the larger the consequences of this uncertainty.

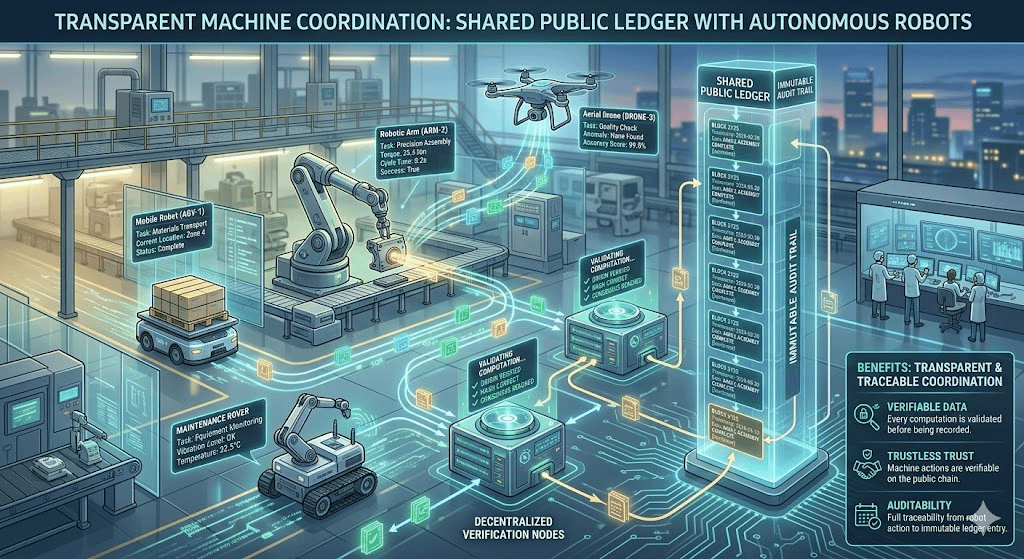

Fabric Protocol addresses this problem by bringing verifiable computing to the operating infrastructure of robotics networks. The protocol allows the results of computational processes to be cryptographically verified, instead of requiring blind trust in a machine's internal workings. Data contributions, decision logic, and coordination events can be recorded on a publicly accessible ledger where other network participants can attest to their authenticity. This strategy moves trust away from centralized authorities and toward transparent verification.

This architecture offers more than transparency. Autonomous robots often rely on information produced by other systems. An autonomous delivery robot moving through an urban area might be guided by mapping information from municipal sensors, environmental data gathered by nearby machines, or operating policies set by city authorities. Each piece of information affects how the robot moves and acts. If the data feeding the robot's decisions is of low quality, its operations can become unsafe or inefficient.

A ledger-based coordination system can validate these interactions. Once machines submit data or computing results to the network, the outputs can be checked and added to a common record accessible to all participants. This process lets robots collaborate without relying on a single trusted intermediary. It also creates a continuous history of machine operation, which is essential when autonomous systems fail or behave anomalously.
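
To make the idea of a shared, checkable record more concrete, here is a minimal sketch of an append-only log in which every entry commits to the one before it. It is not Fabric Protocol's actual ledger design, which the article does not specify. The `Ledger` class, the field names, and the robot identifiers are all hypothetical, and a real network would add consensus, signatures, and replication on top of this.

```python
import hashlib
import json


def entry_hash(body: dict) -> str:
    """Hash an entry body deterministically (sorted keys, compact JSON)."""
    encoded = json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class Ledger:
    """Hypothetical append-only log; each entry stores the previous entry's hash."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, robot_id: str, payload: dict) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "index": len(self.entries),
            "robot_id": robot_id,
            "payload": payload,
            "prev_hash": prev,
        }
        entry = {**body, "hash": entry_hash(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain links are intact."""
        prev = self.GENESIS
        for i, entry in enumerate(self.entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["index"] != i or entry["prev_hash"] != prev:
                return False
            if entry_hash(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


ledger = Ledger()
ledger.append("drone-7", {"event": "inspection", "segment": "bridge-A"})
ledger.append("rover-2", {"event": "map_update", "tiles": 12})
print(ledger.verify())  # True: the chain is intact

# Silently rewriting history breaks the stored hash of the altered entry.
ledger.entries[0]["payload"]["segment"] = "bridge-B"
print(ledger.verify())  # False: tampering is detected
```

Because each entry includes the hash of its predecessor, any participant holding a copy of the log can detect after-the-fact edits without trusting whoever stored it. This is the property that makes a continuous operating history useful for later investigation.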

Economic alignment is equally critical in such an environment. Autonomous machines produce useful information and complete tasks that help robotic ecosystems grow. A robot that gathers environmental measurements, maps terrain, or conducts inspections generates data that other machines and organizations may rely on. Without an incentive structure, though, it becomes hard to maintain the integrity of such contributions.

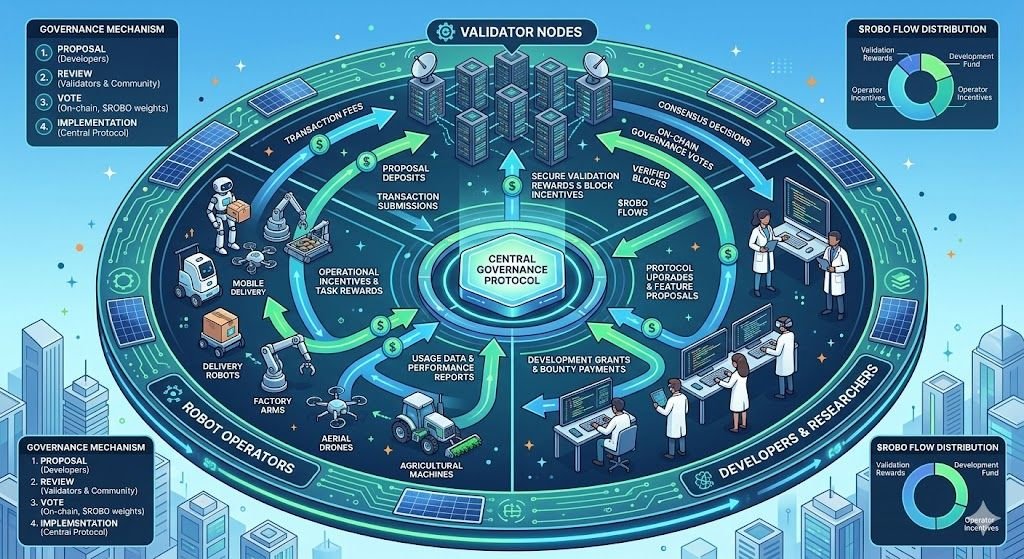

In Fabric Protocol, the $ROBO token is an instrument for coordinating network participation. Rather than being a commodity in its own right, it is part of the infrastructure that supports validation, governance, and the alignment of contributions. Members of the ecosystem, whether they operate robots, verify computation results, or maintain network infrastructure, all interact through systems linked to ROBO. This builds an organized system in which reliable conduct is rewarded and operating rules can evolve through community governance.

The meaning of #ROBO is not limited to token mechanics. It expresses a larger idea: that the governance of decentralized robotics infrastructure should be built directly into the operational layer. Autonomous machines cannot rely entirely on an external regulator to write every rule that governs their actions. Rapid technological change and the heterogeneity of deployment environments make purely centralized regulation infeasible. Protocol-level governance lets operational policies keep changing as robotic capabilities change, while retaining transparency and accountability.

The second important aspect of Fabric Protocol is that it is agent-native. Most digital infrastructure was originally designed for humans interacting through applications and interfaces. Robots do not work that way. They are continuously running agents that constantly gather data, compute, and interact with the physical world. Their operational requirements demand infrastructure in which machines are treated as first-class participants and not as endpoints.

Agent-native infrastructure gives robots verifiable identities to which their activities, data submissions, and computation results can be linked. A machine deployed in the network can demonstrate that its information comes from a legitimate system running under specified conditions. This is necessary where robots operate across large distributed settings and cannot be directly supervised by humans.

Consider a network of environmental monitoring robots distributed across various regions. Each machine gathers localized information on air quality, soil composition, or water conditions. Once this data is incorporated into a larger dataset used by researchers, regulators, or agricultural systems, the reliability of its source becomes critical. Fabric Protocol allows each contribution to be tied to a verifiable identity and recorded in the common ledger, so that other parties can confirm its origin and authenticity.
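
As a rough illustration of tying a contribution to an identity, the sketch below attaches an authentication tag to each sensor reading and checks it against a registry of known robots. Everything in it is an assumption made for the example: the `REGISTRY` contents, the robot names, and the record layout are hypothetical. It also uses a keyed hash (HMAC) from Python's standard library in place of the asymmetric signatures a real deployment would more likely use, so here the verifier must hold the same secret as the robot.

```python
import hashlib
import hmac
import json

# Hypothetical registry mapping robot identities to secret keys.
# Assumption: a production system would register public keys and verify
# digital signatures; HMAC keeps this sketch within the standard library.
REGISTRY = {
    "air-monitor-01": b"demo-key-air-01",
    "soil-probe-17": b"demo-key-soil-17",
}


def canonical(reading: dict) -> bytes:
    """Serialize a reading deterministically so both sides hash the same bytes."""
    return json.dumps(reading, sort_keys=True, separators=(",", ":")).encode("utf-8")


def make_tag(robot_id: str, key: bytes, reading: dict) -> str:
    """Bind the reading to the robot identity with a keyed SHA-256 tag."""
    message = robot_id.encode("utf-8") + b"|" + canonical(reading)
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def sign_contribution(robot_id: str, key: bytes, reading: dict) -> dict:
    """Produce the record a robot would submit to the shared ledger."""
    return {"robot_id": robot_id, "reading": reading, "tag": make_tag(robot_id, key, reading)}


def verify_contribution(record: dict) -> bool:
    """Accept a record only if it comes from a registered identity and is unaltered."""
    key = REGISTRY.get(record["robot_id"])
    if key is None:
        return False  # unknown identity
    expected = make_tag(record["robot_id"], key, record["reading"])
    return hmac.compare_digest(expected, record["tag"])


record = sign_contribution(
    "air-monitor-01", REGISTRY["air-monitor-01"], {"pm25": 12.4, "region": "north"}
)
print(verify_contribution(record))  # True: known identity, untouched reading

tampered = {**record, "reading": {"pm25": 3.0, "region": "north"}}
print(verify_contribution(tampered))  # False: reading no longer matches the tag
```

A researcher or regulator consuming the combined dataset could run the same check on every record before trusting it, which is the provenance guarantee the paragraph above describes.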

Such infrastructure also strengthens accountability. Robots may work for long periods without close supervision, and when they behave unexpectedly, investigators need to know how they arrived at their actions. Access to a clear history of computational decisions and coordination events lets engineers and regulators reconstruct the sequence of interactions that led to an incident. Such traceability is necessary as robotics systems become part of public infrastructure.

Despite these advantages, building decentralized coordination layers for robotics comes with major technical and institutional challenges. Robots operate under hard hardware constraints on processing power, energy, and communication bandwidth. Distributed verification systems deployed in such environments must be carefully engineered so that security mechanisms do not disrupt real-time operational requirements.

Regulatory complexity is another challenge. Robots often operate across jurisdictions in which legal frameworks for robotics are still developing. Infrastructure systems such as Fabric Protocol must therefore accommodate regulatory oversight while remaining open to collaborative innovation. The work of @Fabric Foundation reflects an attempt to build systems that provide transparency without imposing strict centralized control, giving weight to both of these priorities.

Standardization is also a barrier in the long run. The robotics ecosystem is currently fragmented, with highly differentiated machines built on a variety of hardware architectures. A coordination protocol that supports this diversity needs modular infrastructure that can interface with many platforms. The modular design of Fabric Protocol tries to address this problem by letting developers add verification and governance functionality without rebuilding entire robotics stacks.

Scalability becomes more critical as autonomous deployments grow. A global network of robots will generate enormous amounts of data and coordination events. Any infrastructure responsible for verifying these interactions must process high volumes of information efficiently while preserving cryptographic integrity. Distributed ledger technology provides a basis for such systems, but it needs to be optimized for an environment where machines rely on timely data to operate safely.

Trust between humans and autonomous systems ultimately depends on invisible layers of infrastructure. People who encounter robots in the street or the workplace must be assured that the machines act according to clear guidelines and verifiable procedures. The more a robot's actions can be audited and traced through a shared coordination system, the deeper the foundation of that trust.

The governance mechanisms associated with $ROBO allow various stakeholders to take part in formulating those rules. Engineers building robotics platforms, operators deploying machines, researchers studying network data, and institutions tasked with oversight can all contribute to decisions about how the protocol evolves. In this sense, decentralized robotics infrastructure is a participatory space where technical standards and operational norms are created through collective input.

The wider importance of this strategy lies in the fact that robotics is no longer a strictly mechanical discipline. Computational infrastructure, governance structures, and economic coordination systems are all equally crucial to the future of autonomous machines. Learning, adaptive machines have to work in networks where their interactions can be verified and held to account.

Fabric Protocol is an effort to construct such a network. The system combines verifiable computing, agent-native infrastructure, and public-ledger coordination to create a platform on which robots can cooperate across institutions without sacrificing transparency or reliability. In that context, #ROBO and $ROBO are mechanisms that connect the technical infrastructure to participation in governance.

The development of robotics will probably depend on these underlying coordination systems as much as on hardware or artificial intelligence. Autonomous machines need rules, verification, and shared infrastructure to handle complexity across distributed networks. The significance of these governance layers will keep increasing as robot ecosystems grow.

#ROBO $ROBO @Fabric Foundation