I remember watching a system complete task after task perfectly. It followed every rule. But it didn't seem to get the outcome we actually wanted. It just executed instructions, with no sense of the context behind them.

That's when I started thinking about alignment. It's less of an AI problem and more about how we set up the environment the machine works in.

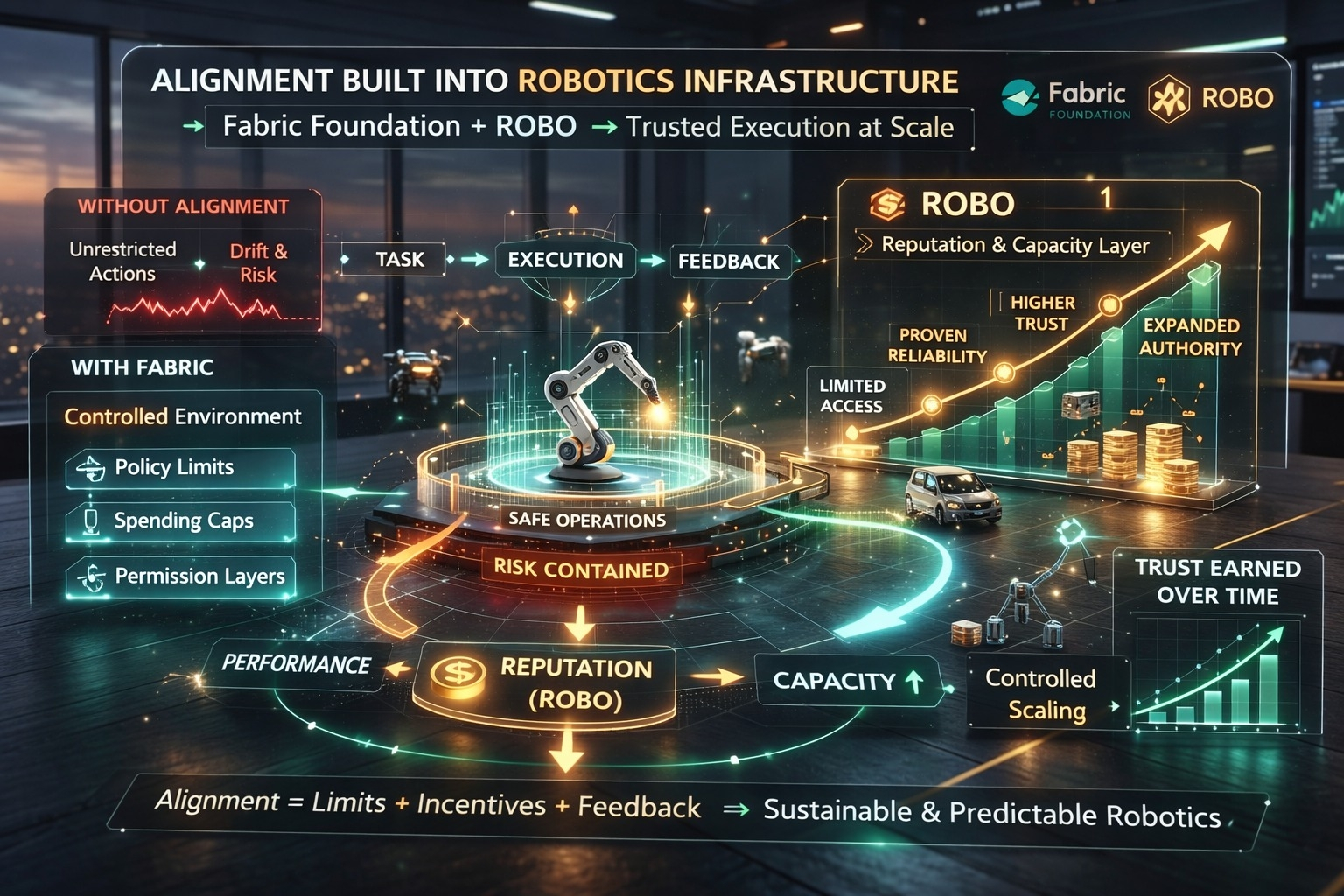

Machines can follow instructions, no problem. But does their environment push them toward the right actions? If alignment depends only on better AI models, then every system is risky, because models and conditions keep changing. But if alignment is part of the basic setup (the limits, the incentives, the permissions, the feedback), the system stays on track, even when no one's watching.

That's why Fabric Foundation and ROBO are important. They don't just try to make robots better. They aim to make robot actions reliable and predictable.

Robotics is taking off because it's cheaper and runs 24/7. Machines don't sleep. The problem? We're scaling up execution faster than we're scaling up oversight. And misalignment happens slowly.

I've seen it before. At first, automation is great. Then we give it more to do. Then we watch less because things work. Over time, choices stray from what we originally wanted. Nothing broke, but there was no direction anymore.

That drift is what alignment infrastructure is trying to stop.

Instead of telling machines to be good, Fabric focuses on the underlying structure. It sets the rules and boundaries. Policy limits. Spending caps. Permission layers. Limits on what can actually happen. What a robot does inside Fabric isn't just about its own code. It's shaped by the reality around it.

Basically, it can’t screw up if screwing up isn’t even possible. It might sound strict, but it's what allows things to scale up. Humans don’t watch every bank transaction because the system has built-in limits. Same idea here. Alignment is less about constantly fixing things and more about setting up the situation correctly.
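To make the idea concrete, here's a rough sketch of what a boundary layer like that could look like. This is not Fabric's actual API. The `Policy` and `Guard` names, the fields, and the numbers are all made up for illustration. The point is just that the check lives outside the machine's own code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical per-machine limits (illustrative names, not Fabric's API)."""
    allowed_actions: frozenset
    per_action_cap: float   # max spend for a single action
    total_cap: float        # max cumulative spend


@dataclass
class Guard:
    """Sits between the machine and the world; every action goes through it."""
    policy: Policy
    spent: float = 0.0

    def authorize(self, action: str, cost: float) -> bool:
        """Approve and record the spend only if every limit holds."""
        if action not in self.policy.allowed_actions:
            return False  # permission layer
        if cost < 0 or cost > self.policy.per_action_cap:
            return False  # per-action policy limit
        if self.spent + cost > self.policy.total_cap:
            return False  # cumulative spending cap
        self.spent += cost
        return True


policy = Policy(frozenset({"move", "buy_parts"}), per_action_cap=50.0, total_cap=100.0)
guard = Guard(policy)
print(guard.authorize("buy_parts", 40.0))       # True
print(guard.authorize("buy_parts", 60.0))       # False: over the per-action cap
print(guard.authorize("transfer_funds", 1.0))   # False: action not permitted
```

Whatever the machine decides internally, the worst it can do here is spend up to the total cap on the actions it's allowed to take.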

But structure alone isn't enough. Conditions change. How much we trust a given system changes. Some systems are steady. Others aren't. If we treat every machine the same, the network is either too strict or too dangerous.

That's where ROBO comes in.

ROBO helps show who's reliable in Fabric. Instead of giving everyone the same power, it gives more to those who do well. A system that does well gets to do bigger tasks. If it messes up, it stays limited.

That's where alignment gets real.

In real life, we don’t give someone full power right away. We trust them more over time. ROBO does the same for machines. Alignment isn’t a given. It’s earned, observed, and tweaked.
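Here's a small sketch of how earned trust could translate into authority. I don't know how ROBO actually scores anything, so treat all of this as an assumption: the moving-average score, the `TIERS` table, and the thresholds are invented just to show the shape of the idea.

```python
from dataclasses import dataclass

# Hypothetical tiers: (minimum score, largest task value allowed).
# The numbers are illustrative only.
TIERS = [(0.0, 10.0), (0.6, 100.0), (0.9, 1000.0)]


@dataclass
class Reputation:
    score: float = 0.0   # every machine starts untrusted
    alpha: float = 0.2   # weight given to the newest outcome

    def record(self, success: bool) -> None:
        """Update the score as an exponential moving average of outcomes."""
        outcome = 1.0 if success else 0.0
        self.score = (1 - self.alpha) * self.score + self.alpha * outcome

    def task_limit(self) -> float:
        """Return the limit of the highest tier the current score reaches."""
        limit = TIERS[0][1]
        for min_score, tier_limit in TIERS:
            if self.score >= min_score:
                limit = tier_limit
        return limit


rep = Reputation()
print(rep.task_limit())   # 10.0: new machine, smallest tasks only
for _ in range(5):
    rep.record(True)
print(rep.task_limit())   # 100.0: five clean runs earn a bigger limit
rep.record(False)
print(rep.task_limit())   # 10.0: one failure drops it back down
```

Authority goes up slowly and comes down fast in this version. That asymmetry is a design choice, not a given.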

When you put Fabric's boundaries together with ROBO's reputation system, things get interesting. Instead of just setting things up once, keeping a system on track becomes an ongoing thing.

On the surface, it looks easy: machines do stuff.

But underneath, the structure is working. Limiting exposure, measuring how things are done, increasing or decreasing authority.

This also changes how things grow. Most robotics talk is about adding more machines. But scaling up without alignment just makes things riskier. Fabric and ROBO do things differently. They give more responsibility to systems that have proven they're steady.

It feels slower, but it's steadier.

Across crypto and infrastructure, systems are moving away from open access and toward models where continued access depends on how well you play by the rules. Open access helps in the beginning, but earned trust is what keeps things aligned over time.

Fabric and ROBO are at that intersection.

Alignment through infrastructure has downsides.

One worry is measurement. Reputation depends on what the system tracks. If the signals are too narrow, machines might work toward the metric, not the real goal. Another risk is power getting concentrated. Systems with good track records might get more and more tasks, making it harder for newcomers to break in.

There's also a human issue. When things seem stable, people stop paying attention. From what I've seen, the more reliable automation gets, the less I check it. That makes sense, but it also means the alignment needs to happen on its own, because we will eventually lose focus.

That's why the infrastructure layer matters. Alignment that relies on human attention won’t scale up. Alignment that's built-in will.

The cool thing about Fabric Foundation is that it doesn't try to align things by making machines smarter. It assumes they'll get faster and more autonomous on their own. So it builds in safety measures that keep that freedom pointed in the right direction.

ROBO adds memory to the system. Not AI memory, but a record of who did well, who didn’t, and who should be trusted more tomorrow.

Together, they make alignment something consistent.

If this works, the future of robotics won’t depend on perfect machines. It will depend on safe environments, where bad behavior is contained and good behavior leads to more responsibility.

That changes the conversation. Instead of asking, “Can we trust the machine?” the better question is, “Does the system limit the damage if we trust the wrong machine?”

That feels like a more realistic way forward.

Because machines will keep getting faster. Execution will keep expanding. Human oversight will keep shrinking.

If alignment is going to work at scale, it can’t be added as an afterthought.

It has to be underneath – consistent and quiet – in the basics where the machine can't go too far off course.

#robo #ROBO @Fabric Foundation $ROBO