I don’t look at Mira Network as another AI narrative layered onto crypto. I look at it as an infrastructure correction. For years, we have treated AI outputs as probabilistic suggestions wrapped in confidence. The model speaks, we assume competence, and we move forward. Mira changes that dynamic by introducing something the AI stack has historically lacked: deterministic, on-chain verification of inference.

Mainnet is live. Staking is active. Integrations are expanding. But those milestones only matter because of what they secure — a verification layer that converts AI outputs into cryptographic attestations. That is not branding. That is a structural shift.

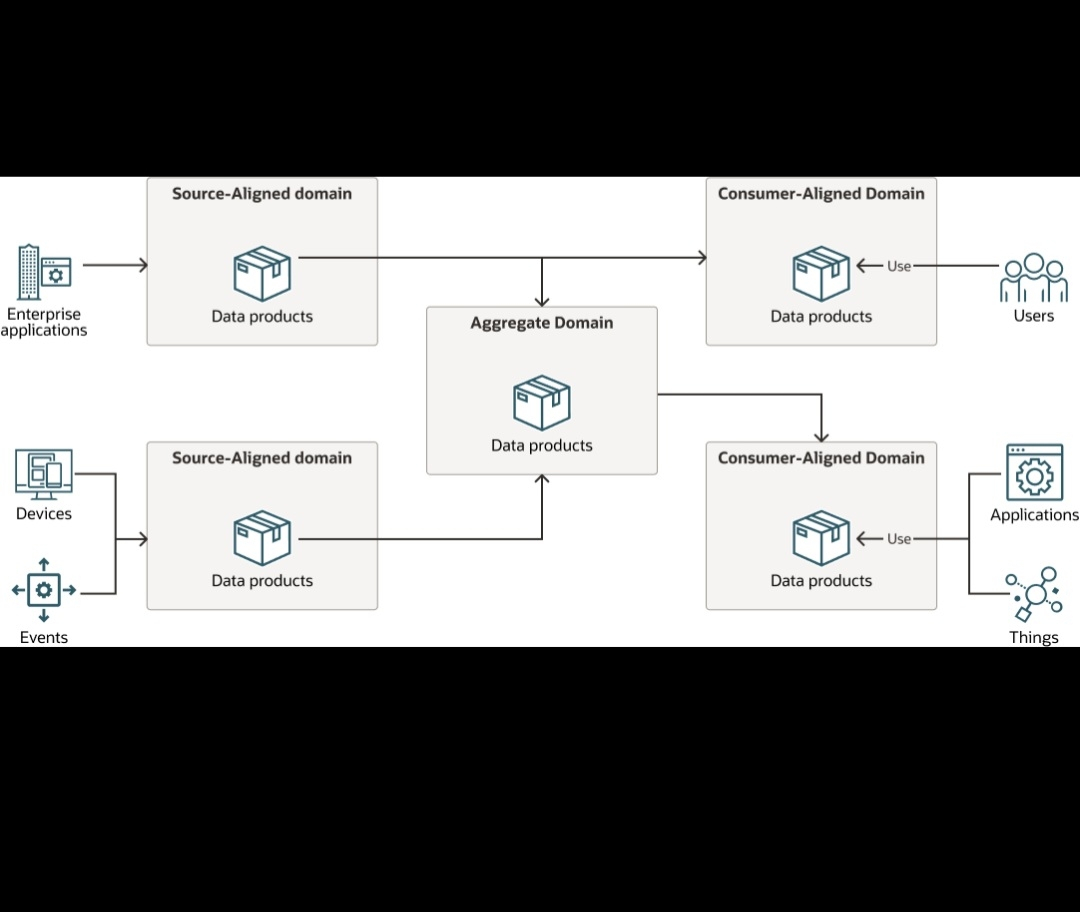

What Mira is building can be understood as a three-part system: inference, validation, and finality. AI models produce outputs. Independent validators re-compute or verify those outputs. The network aggregates results and anchors proofs on-chain. The outcome is no longer just “model said X.” It becomes “network verified X under defined rules.” That difference is subtle in wording but massive in implication.
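The three stages can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Mira's implementation. The function names, the two-thirds quorum, and the use of a SHA-256 digest as the "proof" are all hypothetical, and the step that would actually anchor the digest on-chain is left out.

```python
import hashlib
import json
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, List, Optional

# Hypothetical model type: any function from prompt to output text.
Model = Callable[[str], str]


@dataclass(frozen=True)
class Attestation:
    prompt: str
    output: str
    approvals: int
    total: int
    digest: str  # stand-in for the proof a real network would anchor on-chain


def infer(model: Model, prompt: str) -> str:
    """Stage 1 (inference): a model produces a candidate output."""
    return model(prompt)


def validate(validators: List[Model], prompt: str, output: str) -> List[bool]:
    """Stage 2 (validation): each validator re-computes independently and
    votes on whether its result matches the claimed output."""
    return [validator(prompt) == output for validator in validators]


def finalize(prompt: str, output: str, votes: List[bool],
             quorum: Fraction = Fraction(2, 3)) -> Optional[Attestation]:
    """Stage 3 (finality): accept only if approvals reach the (assumed)
    quorum, then hash a canonical record of the result."""
    if not votes:
        return None  # no validators, nothing to finalize
    approvals = sum(votes)
    if approvals < quorum * len(votes):
        return None
    record = json.dumps(
        {"prompt": prompt, "output": output,
         "approvals": approvals, "total": len(votes)},
        sort_keys=True,
    )
    digest = hashlib.sha256(record.encode("utf-8")).hexdigest()
    return Attestation(prompt, output, approvals, len(votes), digest)


if __name__ == "__main__":
    model = str.upper  # toy deterministic "model"
    prompt = "is this transfer safe?"
    output = infer(model, prompt)
    votes = validate([model, model, lambda p: "NO"], prompt, output)
    attestation = finalize(prompt, output, votes)
    print(attestation.approvals, attestation.total)  # 2 3
```

The point of the sketch is the change in what gets recorded: not just the output, but how many independent checks approved it and a digest that other systems could later compare against.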

In my view, the real product here is not AI access — it’s AI accountability.

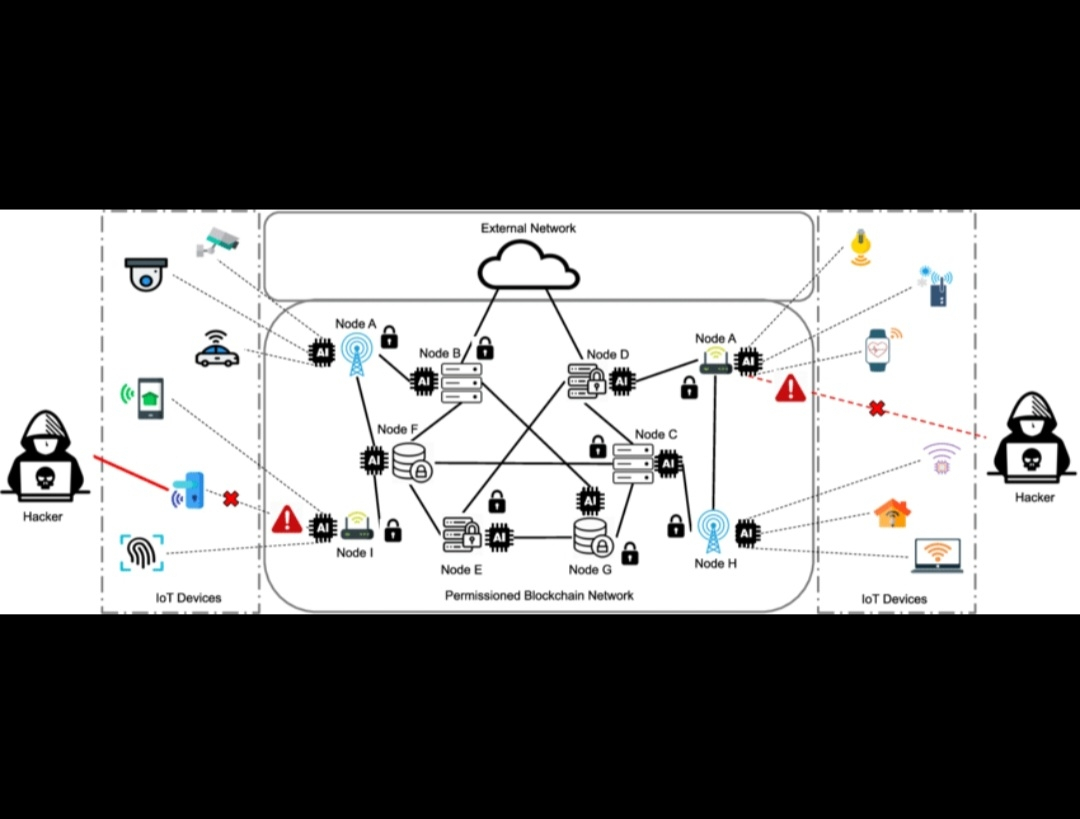

The staking layer matters because verification cannot be soft. Validators must have economic weight behind their attestations. When capital is bonded, verification becomes enforceable. When incentives are aligned, dishonest validation becomes economically irrational, because a validator stands to lose more bonded capital than a false attestation could earn it. That’s the core game-theoretic protection layer that gives Mira credibility beyond simple API orchestration.
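The incentive argument is easiest to see as expected-value arithmetic. The sketch below assumes a simple model that the post does not specify: a validator can take a bribe for a false attestation, is caught with some probability, and forfeits a fixed fraction of its bond when caught. All parameter names and values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StakeParams:
    bond: float            # capital the validator has bonded
    slash_fraction: float  # share of the bond forfeited if caught (assumed)
    detection_prob: float  # chance a false attestation is caught (assumed)
    reward: float          # payout for an accepted attestation (assumed)


def honest_payoff(s: StakeParams) -> float:
    """An honest validator simply earns the reward."""
    return s.reward


def dishonest_payoff(s: StakeParams, bribe: float) -> float:
    """Expected value of a false attestation: the bribe is paid up front,
    the reward arrives only if undetected, and the slash hits only if detected."""
    p = s.detection_prob
    return bribe + (1 - p) * s.reward - p * s.slash_fraction * s.bond


def cheating_is_irrational(s: StakeParams, bribe: float) -> bool:
    return dishonest_payoff(s, bribe) < honest_payoff(s)


def min_deterrent_bond(bribe: float, detection_prob: float,
                       slash_fraction: float, reward: float) -> float:
    """Break-even bond: any bond strictly above this makes cheating a losing bet."""
    if detection_prob <= 0 or slash_fraction <= 0:
        raise ValueError("without detection or slashing, no bond deters cheating")
    return max(0.0, (bribe - detection_prob * reward) / (detection_prob * slash_fraction))


if __name__ == "__main__":
    strong = StakeParams(bond=200.0, slash_fraction=1.0, detection_prob=0.5, reward=1.0)
    weak = StakeParams(bond=150.0, slash_fraction=1.0, detection_prob=0.5, reward=1.0)
    print(cheating_is_irrational(strong, bribe=100.0))  # True
    print(cheating_is_irrational(weak, bribe=100.0))    # False
    print(min_deterrent_bond(100.0, 0.5, 1.0, 1.0))     # 199.0
```

Under these assumptions, with a 50% detection rate and full slashing, a 100-unit bribe is a losing trade against a 200-unit bond but a winning one against a 150-unit bond; the break-even bond is 199. The lever is the product of detection probability, slash fraction, and bond size, which is why bonded capital is what makes attestations enforceable.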

And this is where $MIRA’s role becomes operational rather than symbolic. Staking secures the verification network. Incentives coordinate validators. Governance parameters shape verification standards. The token is not attached to narrative velocity — it is embedded into network security and coordination.

I pay attention to that distinction.

Most AI-crypto intersections chase distribution — more models, more integrations, more user endpoints. Mira is focused on something narrower but deeper: proof integrity. That focus suggests long-term infrastructure thinking rather than short-term surface expansion.

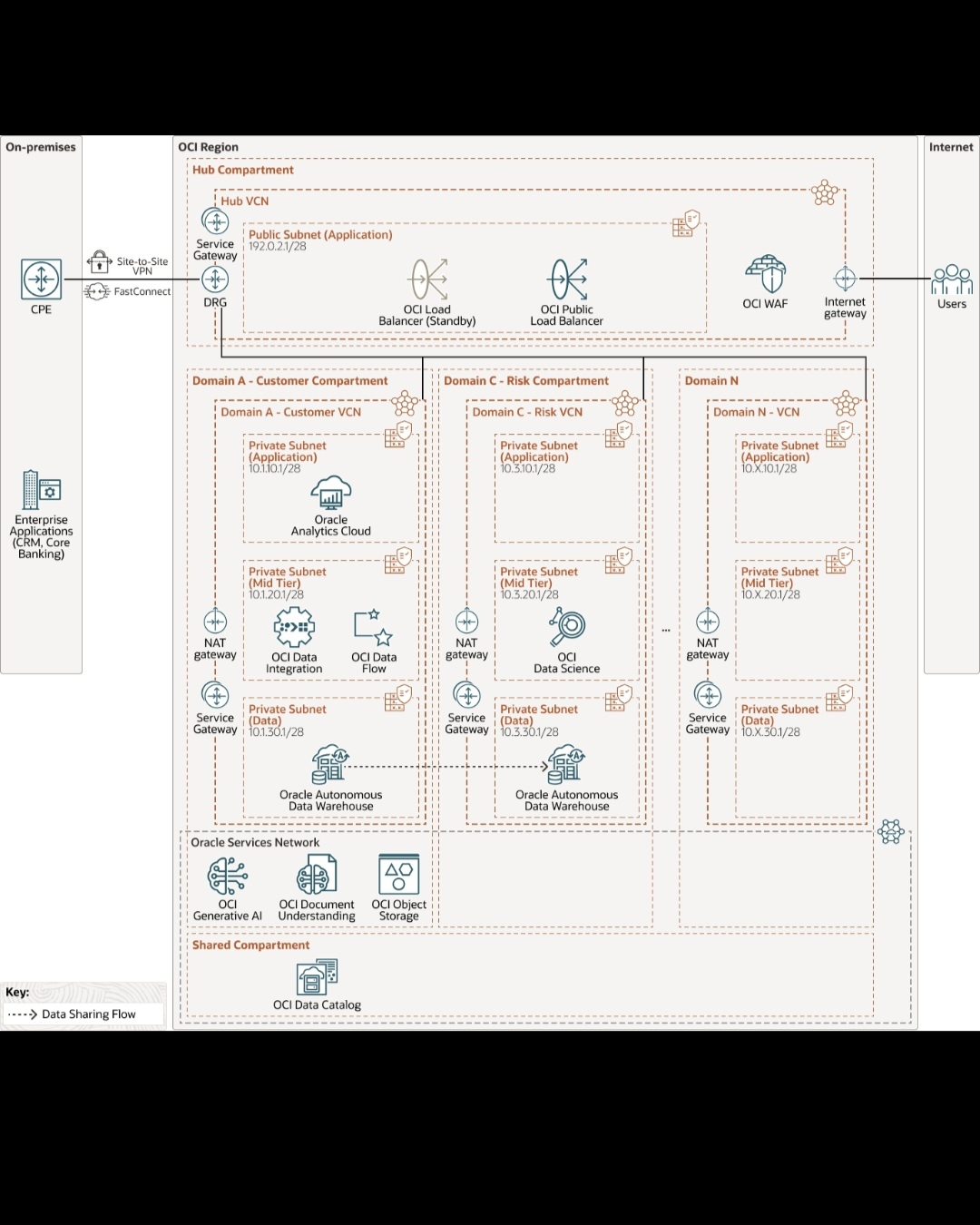

Technically, the interesting challenge is reproducibility. AI models can be non-deterministic: the same prompt can yield different outputs across runs, depending on sampling settings and on the hardware executing the model. Latency matters too. Mira’s architecture acknowledges that verification cannot rely on blind trust in off-chain computation. Instead, it introduces structured validation flows in which outputs are either reproduced or cross-checked under predefined parameters. The more standardized the verification rules, the stronger the settlement guarantees.
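One way to read "reproducible or cross-checked under predefined parameters" is as two comparison rules: exact equality for outputs that should reproduce exactly, and a numeric tolerance for scores that drift slightly between runs. The sketch below assumes that split; the tolerance, the agreement threshold, and the function names are hypothetical, not Mira's published verification rules.

```python
import math
from typing import Sequence, Union

# Hypothetical output type: a label/text or a numeric score.
Output = Union[str, float]


def agrees(claimed: Output, recomputed: Output, abs_tol: float) -> bool:
    """Compare one recomputation against the claimed output."""
    if isinstance(claimed, str) or isinstance(recomputed, str):
        # Reproducible path: text must match exactly.
        return claimed == recomputed
    # Cross-check path: numeric scores may drift slightly between runs/hardware.
    return math.isclose(claimed, recomputed, rel_tol=0.0, abs_tol=abs_tol)


def verify(claimed: Output, recomputations: Sequence[Output],
           abs_tol: float = 1e-3, min_agreeing: int = 2) -> bool:
    """Accept the claimed output if at least `min_agreeing` independent
    recomputations agree with it under the predefined tolerance."""
    if min_agreeing < 1:
        raise ValueError("min_agreeing must be at least 1")
    agreeing = sum(agrees(claimed, r, abs_tol) for r in recomputations)
    return agreeing >= min_agreeing


if __name__ == "__main__":
    print(verify(0.8421, [0.8424, 0.8419, 0.91]))             # True
    print(verify("APPROVE", ["APPROVE", "REJECT", "REJECT"]))  # False
```

Fixing `abs_tol` and `min_agreeing` in advance is what makes the check standardized: every validator applies the same rule, so acceptance does not depend on any one party's judgment.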

This is particularly important for use cases where AI outputs trigger economic actions: automated trading signals, risk assessments, content validation, governance analytics, or any machine-generated input that feeds on-chain execution. Without verification, those workflows are probabilistic. With Mira, they can become attestable.

There is also a subtle but important market positioning angle. As AI adoption accelerates, regulatory scrutiny around AI reliability and accountability increases. Systems that can prove how outputs were generated — and validated — will sit closer to institutional adoption. Mira is not selling creativity. It is selling auditability.

I see that as a long-term alignment with enterprise-grade expectations.

The network’s progress — mainnet activation, validator onboarding, ecosystem expansion — signals that Mira is transitioning from concept to enforceable infrastructure. That transition phase is critical. Many AI-linked projects stall at demonstration. Mira is already operating in production mode, which means real economic actors are interacting with its verification mechanism.

Another layer I consider is composability. Because proofs are anchored on-chain, other protocols can reference them. That means Mira’s verification results are not terminal outputs; they are inputs for broader decentralized systems. Over time, this can transform Mira from a standalone AI validator into a foundational verification primitive.
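Composability can be illustrated with a downstream consumer that refuses to act on an AI output unless its digest has been anchored. In this sketch an in-memory set stands in for on-chain storage, and the `ProofRegistry` class and `execute_trade` function are hypothetical names chosen for the example, not part of any Mira interface.

```python
import hashlib
import json
from typing import Any, Dict, Set


def digest(record: Dict[str, Any]) -> str:
    """Canonical SHA-256 of a record; sorted keys make field order irrelevant."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


class ProofRegistry:
    """In-memory stand-in for on-chain storage of anchored verification digests."""

    def __init__(self) -> None:
        self._anchored: Set[str] = set()

    def anchor(self, record: Dict[str, Any]) -> str:
        d = digest(record)
        self._anchored.add(d)
        return d

    def is_verified(self, record: Dict[str, Any]) -> bool:
        return digest(record) in self._anchored


def execute_trade(registry: ProofRegistry, signal: Dict[str, Any]) -> str:
    """Downstream protocol: act on an AI signal only if its proof is anchored."""
    if not registry.is_verified(signal):
        return "rejected: unverified signal"
    return f"executed {signal['action']} {signal['asset']}"


if __name__ == "__main__":
    registry = ProofRegistry()
    registry.anchor({"asset": "ETH", "action": "buy"})
    print(execute_trade(registry, {"action": "buy", "asset": "ETH"}))   # executed buy ETH
    print(execute_trade(registry, {"asset": "ETH", "action": "sell"}))  # rejected: unverified signal
```

The consumer never re-runs the model; it only checks whether the exact output it received matches something the verification layer already attested. A tampered signal hashes to a different digest and is rejected.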

The more protocols that depend on verified AI outputs, the more structurally embedded the network becomes.

What differentiates Mira in my assessment is restraint. The communication centers on verifiability, staking security, and ecosystem integration — not speculative promises. That restraint suggests operational discipline. Infrastructure projects that survive tend to emphasize process integrity over narrative amplification.

From a systems design perspective, Mira is addressing a fundamental asymmetry: AI produces high-value outputs but lacks built-in trust guarantees. Blockchains provide trust guarantees but lack native intelligence. Mira sits at that intersection and attempts to resolve the trust gap without compromising decentralization.

The staking mechanics reinforce that alignment. Validators are not passive observers; they are active participants in the verification cycle. Their capital is exposed to performance and integrity. That exposure is what gives the verification layer weight.

As more integrations come online, the network effect is not simply more users — it is more verified outputs circulating across ecosystems. Each verified result strengthens the credibility of the layer itself.

I do not frame this as an AI narrative play. I frame it as a verification infrastructure buildout. In markets saturated with speculative positioning, credibility compounds slower but lasts longer. Mira’s design choices — economic staking, on-chain proof anchoring, validator coordination — are signals of a team prioritizing enforceability over aesthetics.

If AI is to move from experimental novelty to economic backbone, it needs something stronger than accuracy claims. It needs settlement-grade verification.

That is the lane Mira is occupying.

And if that thesis plays out — if verified AI becomes a prerequisite rather than an upgrade — then Mira’s role shifts from optional layer to structural necessity. Not hype. Not prediction. Mechanism.

That is why I pay attention here.