Most robotics narratives still focus on capability milestones. I care more about error economics.

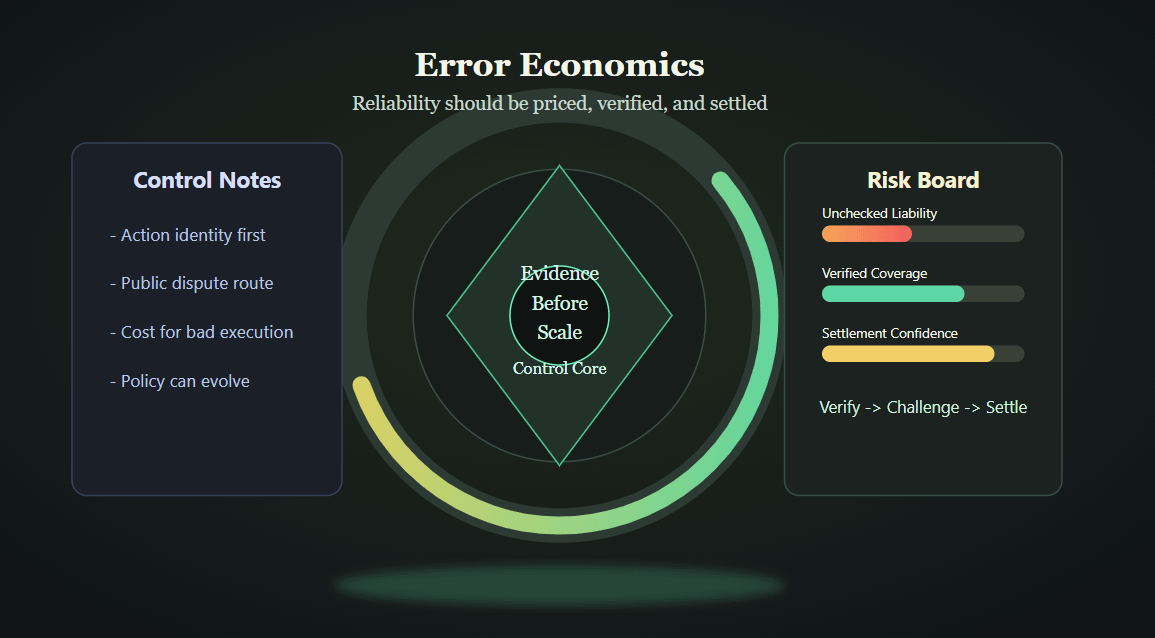

In real operations, every wrong action has a cost surface: direct loss, recovery time, customer trust damage, and governance overhead. If a system can behave badly without meaningful consequence, reliability claims become marketing language.
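To make the cost surface concrete, here is a minimal back-of-envelope sketch. Every name and number in it is my own assumption for illustration, not anything defined by Fabric: it adds up the four cost components for one wrong action and scales by error rate and action volume.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ErrorCost:
    """Hypothetical cost components of a single wrong action (illustrative only)."""
    direct_loss: float          # money lost directly, in currency units
    recovery_hours: float       # operator time needed to recover
    trust_damage: float         # estimated cost of lost customer trust
    governance_overhead: float  # review, reporting, and dispute handling

    def total(self, hourly_rate: float) -> float:
        # Convert recovery time to money and sum all four components.
        return (
            self.direct_loss
            + self.recovery_hours * hourly_rate
            + self.trust_damage
            + self.governance_overhead
        )


def expected_error_cost(
    error_rate: float, actions: int, cost: ErrorCost, hourly_rate: float
) -> float:
    """Expected total cost of errors over a number of actions."""
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    return error_rate * actions * cost.total(hourly_rate)


# Made-up numbers: a 1% error rate over 1,000 actions.
cost = ErrorCost(direct_loss=100.0, recovery_hours=2.0,
                 trust_damage=50.0, governance_overhead=25.0)
print(expected_error_cost(0.01, 1000, cost, hourly_rate=50.0))
```

The point of the sketch is the multiplication: the same per-error cost that looks tolerable at 1,000 actions grows linearly with volume, which is why pricing failure before scaling matters.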

This is where Fabric's design thesis is compelling. Instead of treating governance as a document and verification as an optional add-on, the protocol links identity, challenge rights, validator participation, and economic consequences into the same operational loop. In plain terms: actions can be checked, disputes can be formalized, and bad behavior is not free.
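As a rough mental model of that loop, here is a toy sketch. It is not Fabric's actual protocol or API; the `Action` type, the majority-vote rule, and the `penalty_fraction` parameter are all hypothetical, chosen only to show how identity, a challenge, validator votes, and an economic consequence can sit in one settlement step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A hypothetical action tied to an identity with value at risk."""
    actor_id: str   # identity of the robot or operator
    at_risk: float  # value the actor loses part of if the action is rejected


def settle_challenge(
    action: Action, validator_votes: list[bool], penalty_fraction: float = 0.5
) -> float:
    """Return the value the actor keeps after a challenge is settled.

    Each vote is True if the validator judges the action valid.
    Assumed rule: a strict majority of False votes rejects the action
    and applies the penalty; otherwise the actor keeps everything.
    """
    if not validator_votes:
        raise ValueError("a challenge needs at least one validator vote")
    if not 0.0 <= penalty_fraction <= 1.0:
        raise ValueError("penalty_fraction must be between 0 and 1")

    rejections = sum(1 for vote in validator_votes if not vote)
    if rejections * 2 > len(validator_votes):
        return action.at_risk * (1.0 - penalty_fraction)
    return action.at_risk


# A challenged action where two of three validators reject it.
action = Action(actor_id="robot-17", at_risk=200.0)
print(settle_challenge(action, [False, False, True]))
```

Even in this simplified form, the design choice is visible: the dispute has a defined input (votes), a defined rule, and a defined cost, so a bad outcome is settled by mechanism rather than by negotiation after the fact.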

That mechanism-level approach is important for teams building long-running robot services. You need more than throughput. You need a credible control layer that can absorb conflict, surface evidence, and evolve policy without freezing deployment. Otherwise every incident becomes an ad-hoc firefight.

I also think this is where `$ROBO` has strategic relevance. Utility and governance only matter when they are attached to measurable system behavior. The useful benchmark is not narrative excitement; it is whether the network can keep quality high while handling pressure, disagreement, and continuous updates.

My bias is clear: speed is valuable, but unmanaged speed is expensive. The better system is the one that can prove outcomes and price failure correctly before scale multiplies the damage.

Would you deploy autonomous robot workflows at scale without a public mechanism to challenge and settle contested outcomes?

@Fabric Foundation $ROBO #ROBO