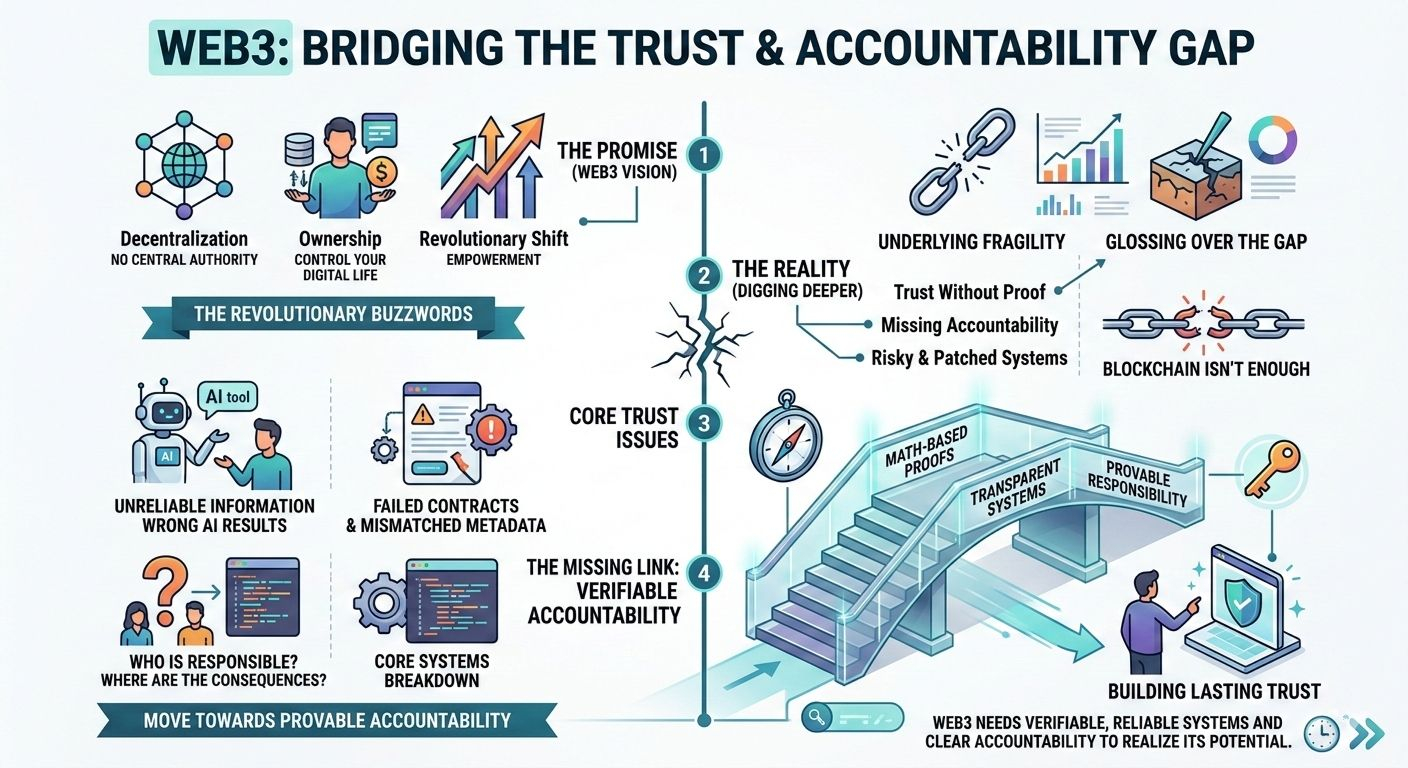

Here’s the thing about Web3: it’s sold to us as a revolutionary shift. We hear about decentralization, ownership, and a new world where we control everything. But if you dig a little deeper, it feels like we’re glossing over something crucial, something that could stop this whole thing from going anywhere if we don’t fix it. That thing is trust.

It’s easy to get caught up in the buzzwords. The world’s changing. We’re promised a future where we’re free from the control of big companies, where we own our data, our assets, our digital lives. But the truth is, when it comes to Web3, there’s a big gap between what we’re promised and what we’re actually given. Sure, there’s decentralization—everything’s on a blockchain, right? But that’s not enough. Without a way to actually trust the data, the systems, and the people we’re interacting with, what are we left with?

We’ve built this massive ecosystem, full of users, apps, tokens, NFTs, DAOs—you name it. But underneath it all, there’s this uncomfortable truth: it’s all pretty fragile. If you look at how things work in Web3 right now, it’s not nearly as reliable as we like to pretend it is. And I’m not talking about the flashy stuff, like the next big DeFi protocol or the hype around NFTs. I’m talking about the core issues: the way information flows between users and systems, the way things break down without any accountability, and the way mistakes or failures often just vanish without any real consequences.

Let’s be real for a minute. Have you ever used an AI tool and had it come back with completely wrong information? Or run into a contract that didn’t execute properly, or an NFT with mismatched metadata? We’ve all been there, right? It’s frustrating, especially because we don’t have a clear way of checking whether these systems are working the way we think they are. And when something goes wrong, who’s responsible? Who’s making sure things are functioning like they should?

We trust these systems—sometimes blindly. But trust without proof is risky. And right now, that’s what we’re dealing with. Most of the “solutions” out there? They don’t actually solve the problem. They’re just patches over a much deeper issue. A lot of what we see are half-hearted attempts at making things better, with no real accountability. We rely on validators, or on systems that are just assumed to be working correctly. But when those systems break, what then?

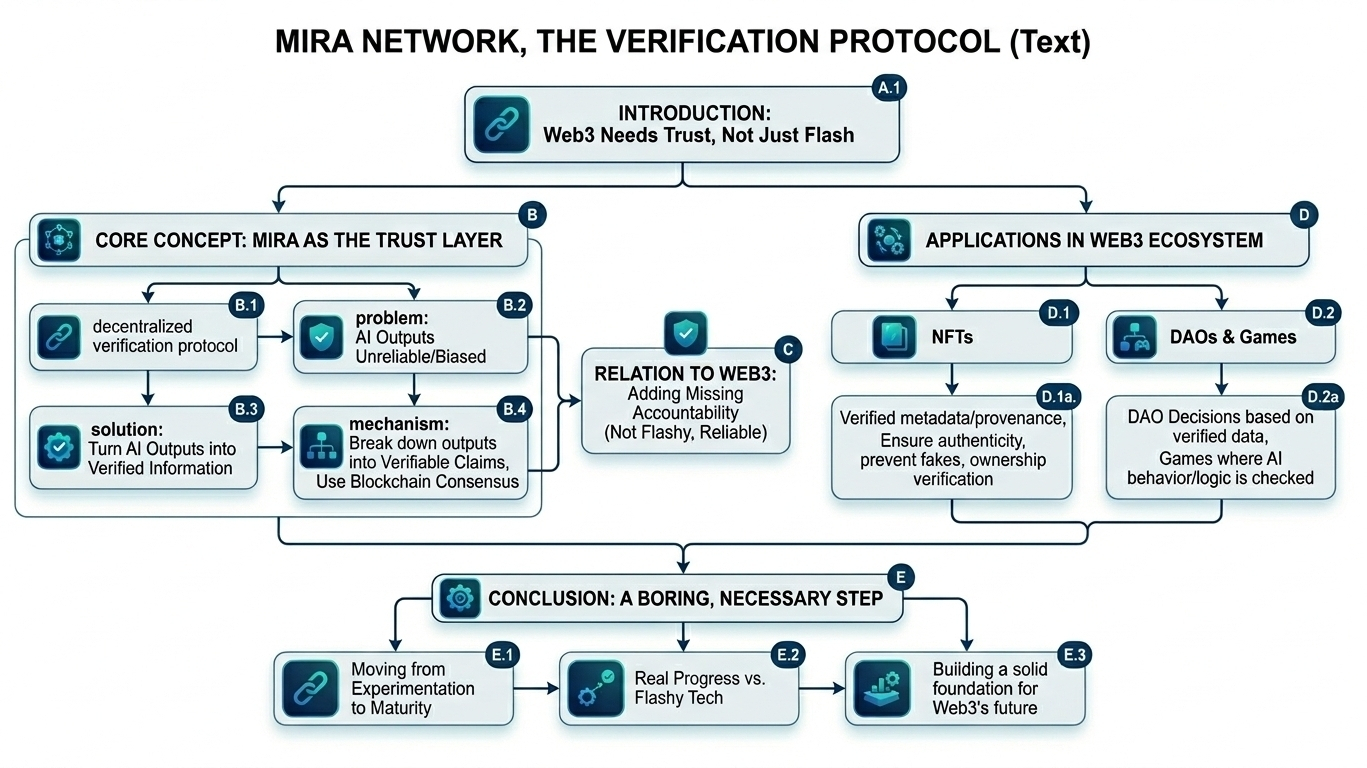

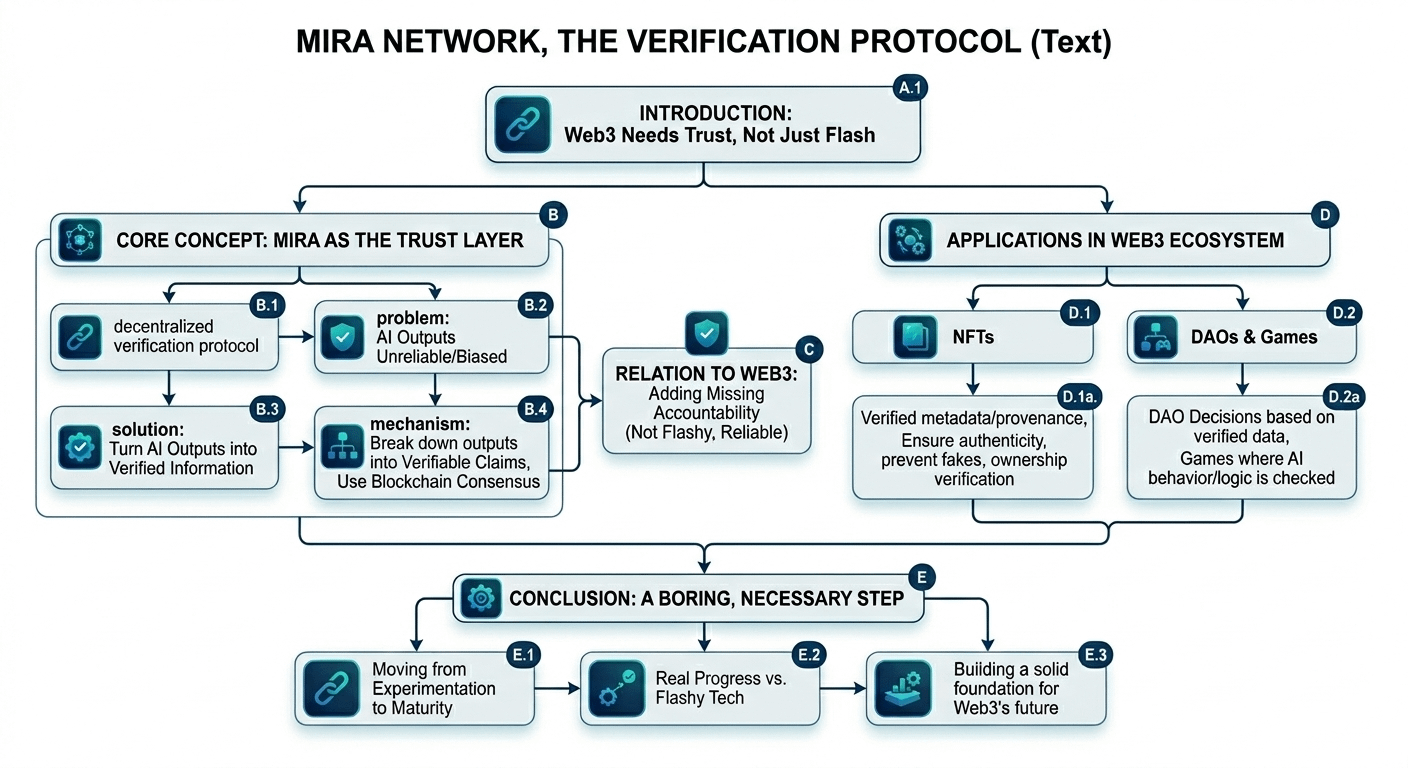

That’s where Mira Network comes in. Now, before you start thinking this is another miracle cure for all of Web3’s problems, let me clarify: it’s not. Mira isn’t going to suddenly make everything perfect. It’s not a hero. But it’s a serious attempt to tackle something that Web3 desperately needs: trust.

Mira is a decentralized verification protocol. The idea is simple—AI systems today often get things wrong. Whether it’s errors in the data or bias in decision-making, the outputs aren’t always reliable. Mira works by turning those AI outputs into verified information. Instead of just hoping the system is correct, Mira breaks down each piece of information into verifiable claims that are checked and validated through blockchain consensus.
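To make the idea concrete, here is a minimal sketch of that flow in Python. It is not Mira’s actual API or protocol. Every name in it (`split_into_claims`, `verify_output`, the toy verifiers, the two-thirds quorum) is a hypothetical chosen for illustration, and the step of recording results on a blockchain is left out entirely. It only shows the general shape: split an output into claims, let several independent verifiers vote on each one, and accept a claim only if enough of them agree.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical type: a verifier takes one claim and returns True if it accepts it.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    verified: bool


def split_into_claims(output: str) -> List[str]:
    """Naive decomposition (an assumption): treat each sentence as one claim."""
    return [part.strip() for part in output.split(".") if part.strip()]


def verify_output(
    output: str,
    verifiers: List[Verifier],
    quorum_num: int = 2,  # assumed quorum of 2/3, kept as integers
    quorum_den: int = 3,  # to avoid floating-point comparisons
) -> List[ClaimResult]:
    """Let every verifier vote on every claim; accept a claim at or above quorum."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results = []
    for claim in split_into_claims(output):
        votes_for = sum(1 for verifier in verifiers if verifier(claim))
        total = len(verifiers)
        # votes_for / total >= quorum_num / quorum_den, written with integers
        verified = votes_for * quorum_den >= quorum_num * total
        results.append(ClaimResult(claim, votes_for, total, verified))
    return results


# Toy verifiers: two check against a small set of known facts,
# and one sloppy verifier accepts everything.
KNOWN_FACTS = {"Paris is the capital of France"}


def careful_verifier(claim: str) -> bool:
    return claim in KNOWN_FACTS


def sloppy_verifier(claim: str) -> bool:
    return True


if __name__ == "__main__":
    ai_output = "Paris is the capital of France. The moon is made of cheese."
    verifiers = [careful_verifier, careful_verifier, sloppy_verifier]
    for result in verify_output(ai_output, verifiers):
        status = "verified" if result.verified else "rejected"
        print(f"{result.claim!r}: {result.votes_for}/{result.votes_total} {status}")
```

The point of the quorum is that one unreliable verifier cannot push a false claim through on its own: in the toy run, the sloppy verifier accepts the claim about the moon, but it is outvoted.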

I know, it sounds boring. And honestly, that’s the point. It’s not about being flashy; it’s about being reliable. The whole idea of Mira is to add that layer of accountability that’s missing from most Web3 systems. It’s about making sure that if an AI system messes up, there’s a way to check, verify, and correct it.

This isn’t the kind of thing that gets people excited at a conference. But it’s necessary. It’s the boring stuff that’s going to make the difference in the long run. Web3 isn’t going to grow up without it.

So why does this matter for NFTs, DAOs, games, and the broader Web3 space? Think about it. NFTs aren’t just collectibles anymore. They could represent ownership, access, rights: really important stuff. But if the information behind them isn’t verified, they’re useless. They could be fakes, copies, or misrepresented in ways that we can’t even detect. Mira helps fix that. It adds a layer of trust so that when you buy an NFT, you can check that what you’re getting is real.

The same goes for DAOs. These organizations are supposed to be decentralized, with decisions made by the community. But how can we trust the data behind those decisions? If the AI system running the DAO is pulling from incorrect or biased data, it’s not really decentralized anymore, is it? It’s just another system pretending to be decentralized. Mira steps in here too, making sure that every piece of data used in these decisions is verified, so that the entire system is accountable.

At its core, Mira is about adding trust where it’s sorely needed. And sure, it’s not the most exciting thing you’re going to hear about today, but it’s one of the most important. It’s about making sure that Web3 can actually work in the long term. We need systems that can be trusted, systems where mistakes matter, and systems where there are real consequences for failure. Without that, we’re just building another house of cards.

So, what does Web3 need to grow up? It needs to stop pretending that decentralization alone is enough. We need trust, and we need accountability. We need systems that are actually reliable, not just theoretically so. And while Mira isn’t going to fix everything, it’s a step in the right direction. It’s a boring, necessary step that’s going to make all the flashy things in Web3 actually work the way they should.

The next time someone tells you about the wonders of Web3, remember: the real progress isn’t going to come from flashy tech or new platforms. It’s going to come from making sure the systems we have in place actually work.
