Most token threads promise clarity and deliver recycled talking points. When I started researching MIRA, I noticed the same pattern: fast opinions, slow evidence. So I built a simple, repeatable framework to learn what matters, separate signal from trending chatter, and decide whether $MIRA fits my strategy, without hype and without assuming any guaranteed outcome.

This is the 30-minute playbook I use before I even consider pressing buy.

What MIRA Is (Context for Intermediate Users)

MIRA positions itself as an infrastructure layer designed to coordinate on-chain activity with measurable utility. Instead of vague “ecosystem growth,” I look for concrete mechanisms: how value flows, who can claim it, and what technical constraints exist. The project’s official updates via @Mira - Trust Layer of AI give directional context, but I don’t outsource judgment. I test every claim against on-chain behavior and product releases.

When I reference #Mira here, I’m focusing on architecture and incentives—not price speculation.

The 30-Minute Research Framework

I split my review into four tight blocks. Think of it as a decision tree: if X is missing, I slow down; if Y improves, I revisit.

1) Utility & Flow: Where Does the Reward Come From?

I map the token flow like a network packet: origin → validation → distribution.

What triggers value creation?

Who earns a reward, and for doing what?

Is utility dependent on perpetual emissions, or is there demand-side pressure?

If the answer is “it’s complicated,” I draw a simple box diagram on paper. If I can’t explain the flow in five sentences, I assume the design may be overcomplicated.

Example: If a feature requires active participation (e.g., governance, staking, or usage), I ask whether incentives align with real usage or just short-term competition for yield.
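A minimal sketch of that box diagram as data, assuming each flow can be tagged as funded either by fees or by emissions. The participants, triggers, and the `Flow` structure below are hypothetical and chosen only for illustration; they are not MIRA's actual design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    source: str     # who pays or mints
    sink: str       # who receives
    trigger: str    # the action that causes the transfer
    funded_by: str  # "fees" (demand-side) or "emissions" (newly minted)


# Hypothetical flows for illustration only; not taken from MIRA documentation.
flows = [
    Flow("user", "protocol", "pays for a request", "fees"),
    Flow("protocol", "validator", "validates the request", "fees"),
    Flow("treasury", "staker", "stakes tokens for an epoch", "emissions"),
]


def emission_dependent_sinks(flows):
    """Return the recipients whose only income is newly minted tokens."""
    funding_by_sink = {}
    for flow in flows:
        funding_by_sink.setdefault(flow.sink, set()).add(flow.funded_by)
    return sorted(
        sink for sink, kinds in funding_by_sink.items() if kinds == {"emissions"}
    )


# Print the diagram as one line per arrow, then flag emission-only earners.
for flow in flows:
    print(f"{flow.source} -> {flow.sink}: {flow.trigger} [{flow.funded_by}]")
print("Emission-only earners:", emission_dependent_sinks(flows))
```

If a recipient shows up as emission-only, that is the box I would question first: its reward exists only as long as new tokens keep being minted.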

2) Product Reality Check: Code Before Narrative

I scan repositories and update notes, not to audit the code line by line, but to answer one question: is shipping velocity consistent?

Are releases incremental and coherent?

Do the docs reduce friction enough that a new user can learn the flow?

Is there a working demo, or just a roadmap word cloud?

If documentation explains how a transaction packet moves through the system and what edge cases exist, confidence increases. If everything is abstract, I downgrade conviction.
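One rough way to put a number on "shipping velocity" is to look at the gaps between release dates. The sketch below assumes you have copied the dates by hand from a project's release notes; the dates shown are hypothetical, and the idea of using the spread of gaps as a consistency signal is my own shortcut, not an established metric.

```python
from datetime import date
from statistics import mean, pstdev


def cadence_summary(release_dates):
    """Summarize the gaps (in days) between consecutive releases."""
    if len(release_dates) < 2:
        raise ValueError("need at least two release dates")
    ordered = sorted(release_dates)
    gaps = [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]
    average = mean(gaps)
    return {
        "gaps_days": gaps,
        "mean_gap": average,
        "longest_gap": max(gaps),
        # Spread relative to the mean: closer to 0 means a steadier cadence.
        # Undefined when every release shares one date, so report None then.
        "variation": pstdev(gaps) / average if average else None,
    }


# Hypothetical release dates, for illustration only.
releases = [date(2024, 1, 10), date(2024, 2, 9), date(2024, 3, 10), date(2024, 6, 8)]
summary = cadence_summary(releases)
print(summary)
```

A long final gap, as in this made-up series, is the kind of thing that would make me slow down and check whether development stalled or simply moved to a different repository.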

3) Incentive Design: Who Can Claim Value?

Token systems fail when insiders claim outsized benefits early while public participants bear dilution risk. I review allocation structures and unlock schedules—not to predict price, but to understand power dynamics.
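To make the power-dynamics question concrete, I sometimes model unlock schedules directly. The sketch below assumes a simple cliff followed by linear monthly vesting, with nothing released at the cliff itself (real schedules often differ, for example by unlocking a lump sum at the cliff). All allocation sizes and durations are hypothetical and are not MIRA's published tokenomics.

```python
def unlocked(total, cliff_months, vest_months, month):
    """Tokens unlocked at `month`: zero until the cliff, then linear vesting."""
    if month < cliff_months:
        return 0.0
    if vest_months == 0:
        return float(total)
    elapsed = min(month - cliff_months, vest_months)
    return total * elapsed / vest_months


# Hypothetical allocations: (total tokens, cliff months, vesting months, insider?)
allocations = {
    "team": (200_000_000, 12, 24, True),
    "investors": (150_000_000, 6, 18, True),
    "community": (650_000_000, 0, 48, False),
}


def insider_share(month):
    """Fraction of unlocked supply held by insider buckets at `month`."""
    insider = public = 0.0
    for total, cliff, vest, is_insider in allocations.values():
        amount = unlocked(total, cliff, vest, month)
        if is_insider:
            insider += amount
        else:
            public += amount
    circulating = insider + public
    return insider / circulating if circulating else 0.0


for month in (6, 12, 24):
    print(f"month {month}: insider share of unlocked supply = {insider_share(month):.1%}")
```

The point is not the exact percentages but the shape of the curve: a sharp jump in insider share at a known date tells me when governance weight and sell pressure could shift.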

I also look for sustainable earning mechanics. If users earn only through emissions, that’s fragile. If they earn because activity creates measurable throughput or fee generation, that’s more durable.
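A quick way to test the emissions-versus-fees question is to track what fraction of rewards comes from newly minted tokens over time. This is a sketch under the assumption that per-epoch fee and emission totals are available from a block explorer or dashboard; the figures below are invented for illustration.

```python
def emission_shares(epochs):
    """For each (fee_rewards, emission_rewards) pair, return the emission fraction."""
    shares = []
    for fee_rewards, emission_rewards in epochs:
        total = fee_rewards + emission_rewards
        if total == 0:
            raise ValueError("epoch with no rewards at all")
        shares.append(emission_rewards / total)
    return shares


def is_declining(shares):
    """True if every epoch relies less on emissions than the one before it."""
    return all(later < earlier for earlier, later in zip(shares, shares[1:]))


# Hypothetical per-epoch totals: (rewards paid from fees, rewards paid from emissions)
epochs = [(1_000, 9_000), (2_500, 7_500), (4_000, 6_000)]
shares = emission_shares(epochs)
print([f"{share:.0%}" for share in shares], "declining:", is_declining(shares))
```

A falling emission share suggests fee-generating activity is gradually replacing minted rewards; a flat or rising one suggests the reward still depends on dilution.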

Mini Case Study (Hypothetical):

Imagine a builder integrating MIRA into a trading dashboard. If each interaction produces verifiable on-chain state changes and the token is required to process or validate that state, usage could justify demand. If instead it’s optional, the token risks becoming cosmetic.

4) Narrative Detox: Signal vs Trending Noise

Crypto runs on story cycles. I run a quick quiz for myself:

Is this update technical, or just branding?

Does it expand the addressable market, or just repackage the same users?

Would the system still function if social hype disappeared for 30 days?

When a project trends, I assume volatility—not inevitability. My job is to separate the structural code from the temporary red-hot narrative.

Risks & What to Watch

Emission Pressure: If reward distribution outpaces organic demand, dilution risk rises.

Concentration Risk: Large holders can influence governance outcomes.

Integration Friction: If developers struggle to integrate, adoption slows.

Speculative Loops: Heavy trading without product usage may distort signals.

Security Surface Area: As features expand, attack vectors increase.

None of these invalidate the project—but each changes the risk-reward profile.
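Two of the risks above, emission pressure and concentration, reduce to simple arithmetic. The sketch below assumes you can obtain circulating supply, planned yearly emissions, and a list of holder balances; the function names and all numbers are hypothetical.

```python
def annual_dilution(circulating, yearly_emissions):
    """Fraction of next year's supply that would be newly emitted tokens."""
    return yearly_emissions / (circulating + yearly_emissions)


def top_n_share(balances, n):
    """Fraction of total supply held by the n largest balances."""
    ordered = sorted(balances, reverse=True)
    return sum(ordered[:n]) / sum(balances)


# Hypothetical inputs, for illustration only.
dilution = annual_dilution(circulating=400_000_000, yearly_emissions=100_000_000)
concentration = top_n_share([500, 300, 100, 50, 50], n=2)
print(f"dilution over one year: {dilution:.0%}")
print(f"top-2 holder share: {concentration:.0%}")
```

Neither number is a verdict on its own. I compare dilution against whatever evidence of organic demand exists, and I read the concentration figure alongside how governance votes are actually weighted.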

One Nuanced Take (What Would Change My View)

If MIRA evolves into a system where utility is provably decoupled from token necessity—meaning users can access core features without touching the token—I would reassess the long-term thesis. Tokens must either coordinate scarce resources or encode verifiable rights. If neither holds, narrative alone won’t sustain value.

Conversely, if new integrations demonstrate measurable throughput that requires token participation, that strengthens the case. I don’t need certainty—I need falsifiable progress.

Practical Takeaways

Map the token flow in one box diagram before forming an opinion.

Track shipping consistency more than influencer commentary.

Revisit your thesis quarterly; don’t let one trending week define it.

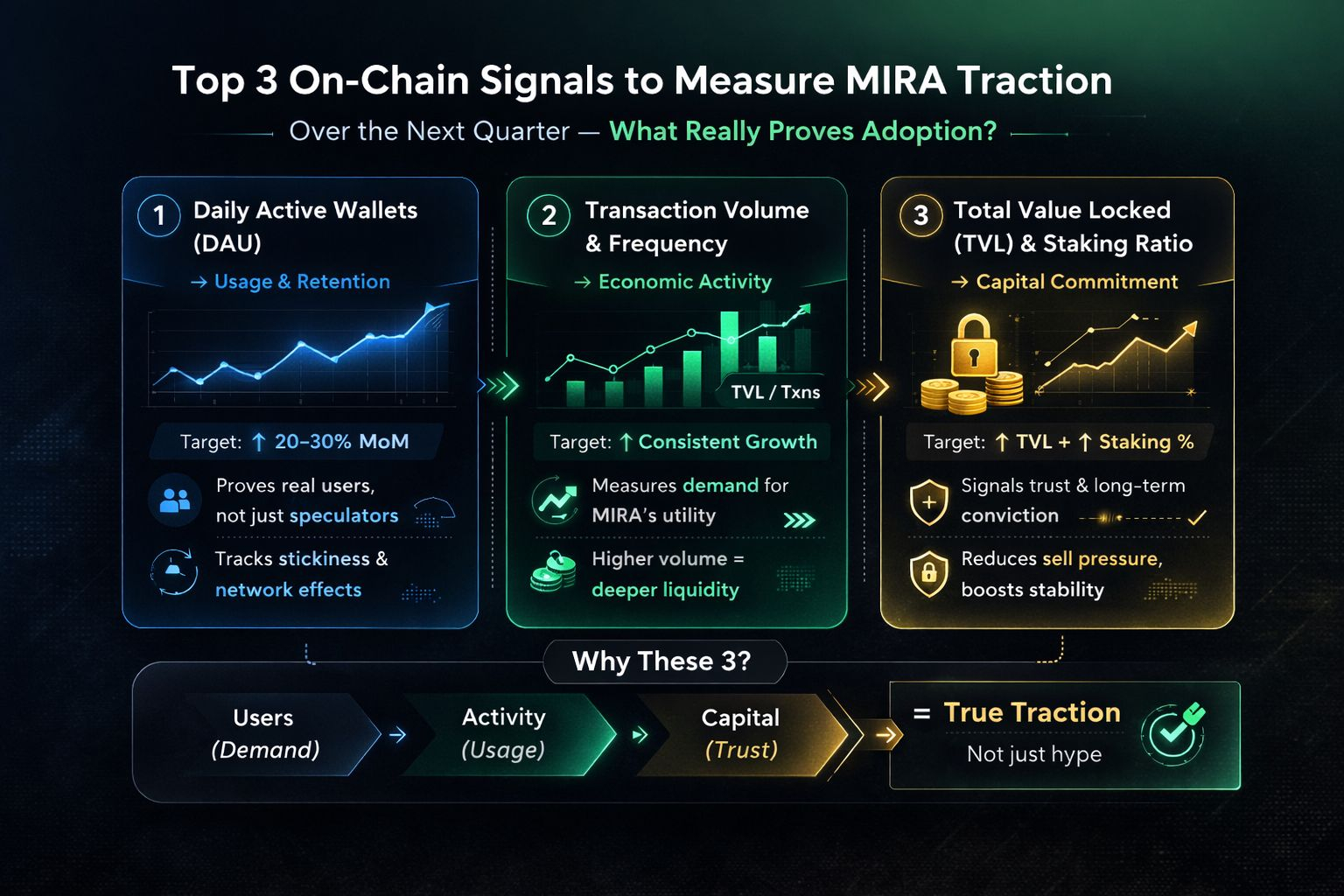

Visual: a simple flow diagram showing user action → protocol processing → token utility → reward distribution.