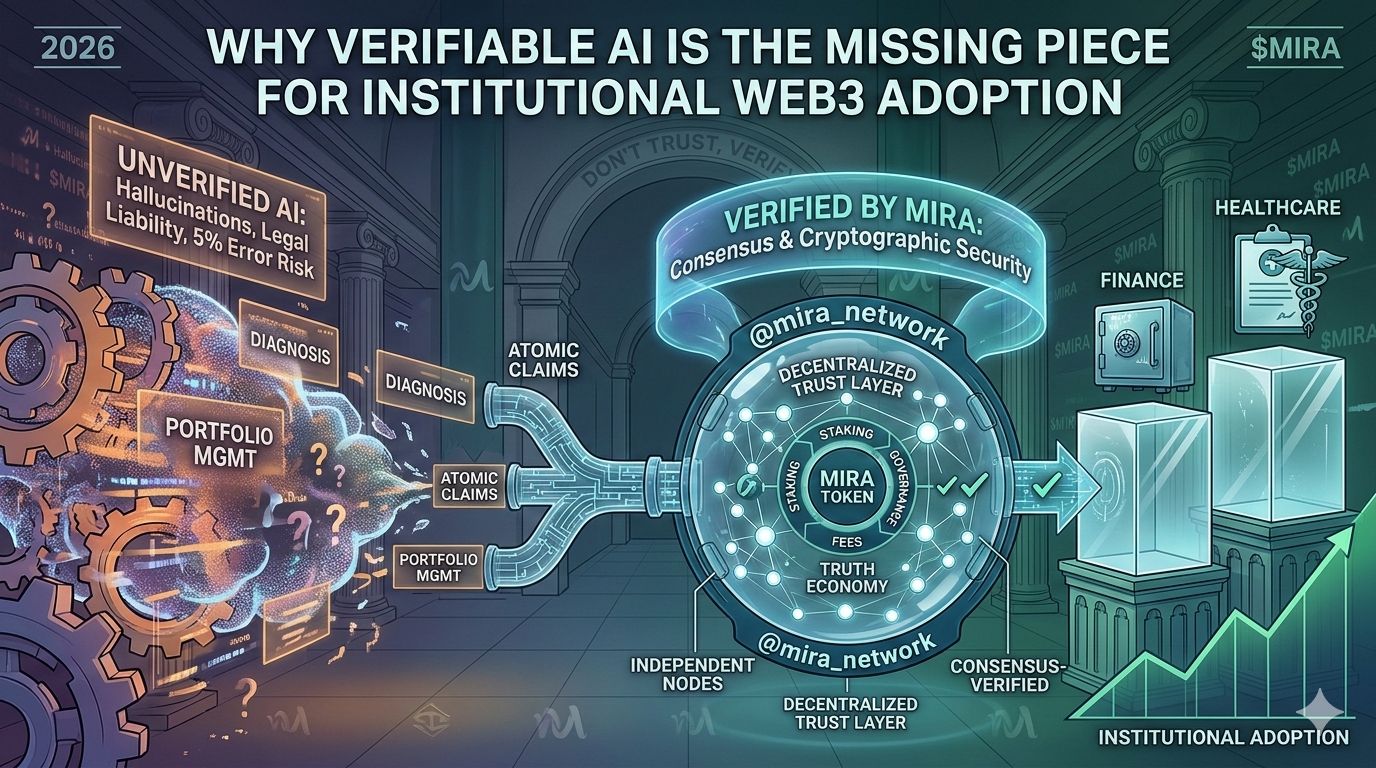

We’ve all been there: You ask an AI a complex question about a DeFi protocol or a medical fact, and it gives you an answer so confident you almost don't double-check it. Then you realize—it completely made up the source. In 2026, we’ve moved past being "wowed" by AI fluency. Now, the industry is waking up to the Reliability Gap.

For high-stakes sectors like healthcare and finance, a 5% "hallucination" rate isn't a small quirk; it’s a legal nightmare. This is exactly why I’ve been following @mira_network lately. They aren't trying to build just another chatbot. They are building the Trust Layer that AI actually needs to be useful for institutions.

How it works (without the jargon)

Instead of taking a single AI model’s word for it, Mira treats an output as a "proposal." It breaks the response down into small "atomic claims" and sends them to a decentralized jury of independent nodes. These nodes run different models to cross-verify the info.

Think of it like the peer-review process for science, but happening in seconds on-chain. If the nodes reach consensus, you get a cryptographic certificate recording which claims passed verification. If they don't, the "hallucination" is caught before it can cause damage.
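To make the "proposal → atomic claims → jury" idea concrete, here's a toy sketch in Python. It is not Mira's code or API: `split_into_claims`, `verify_claim`, the 2/3 threshold and the toy "models" are all hypothetical stand-ins I picked for illustration, and the SHA-256 digest only makes the verdicts tamper-evident (it is not a signature or an on-chain proof).

```python
import hashlib
import json
from typing import Callable

# Hypothetical verifier interface: given one claim, return True if the model accepts it.
Verifier = Callable[[str], bool]


def split_into_claims(response: str) -> list[str]:
    """Naive stand-in for claim extraction: one sentence = one atomic claim."""
    return [s.strip() for s in response.split(".") if s.strip()]


def verify_claim(claim: str, verifiers: list[Verifier], threshold: float = 2 / 3) -> bool:
    """A claim passes only if at least `threshold` of the independent verifiers accept it."""
    votes = sum(1 for verify in verifiers if verify(claim))
    return votes / len(verifiers) >= threshold


def verify_response(response: str, verifiers: list[Verifier]) -> dict:
    """Check every claim and attach a SHA-256 fingerprint of the verdicts."""
    verdicts = {claim: verify_claim(claim, verifiers) for claim in split_into_claims(response)}
    # A plain hash makes the result tamper-evident; it is not a signature or an on-chain proof.
    digest = hashlib.sha256(json.dumps(verdicts, sort_keys=True).encode()).hexdigest()
    return {"claims": verdicts, "all_verified": all(verdicts.values()), "digest": digest}


# Toy "models" for the demo (assumption: real nodes would run different LLMs).
KNOWN_FACTS = {"Paris is the capital of France"}


def model_a(claim: str) -> bool:
    return claim in KNOWN_FACTS


def model_b(claim: str) -> bool:
    return claim in KNOWN_FACTS


def model_c(claim: str) -> bool:
    return True  # a careless verifier that rubber-stamps everything


report = verify_response(
    "Paris is the capital of France. The Moon is made of cheese.",
    [model_a, model_b, model_c],
)
for claim, ok in report["claims"].items():
    print(f"{'PASS' if ok else 'FLAG'}: {claim}")
```

The point of the supermajority rule: one careless (or dishonest) node voting "yes" on everything can't push the made-up Moon claim through on its own, so it gets flagged while the true claim passes.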

The $MIRA Engine

What makes this stick is the economic incentive. The $MIRA token isn't just a speculative asset; it’s the fuel for this "Truth Economy":

Staking: Nodes have to stake $MIRA to participate. If they try to lie or get lazy, they get slashed. Simple as that.

API Fees: Developers use $MIRA to access the verification layer, creating actual utility-driven demand.

Governance: Holders actually decide how the "Audit Engine" evolves.
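Here's a rough sketch of how staking, slashing and fees can fit together in one verification round. Every number and rule below is my own assumption, not Mira's published parameters: `MIN_STAKE`, the 10% `SLASH_BPS` penalty, the stake-weighted majority, and treating "voted against consensus" as a stand-in for lying or laziness are all hypothetical.

```python
from dataclasses import dataclass

MIN_STAKE = 1_000  # hypothetical minimum stake to join the verifier set
SLASH_BPS = 1_000  # hypothetical penalty: 10% of stake, in basis points


@dataclass
class Node:
    name: str
    stake: int
    rewards: int = 0


def settle_round(nodes: list[Node], votes: dict[str, bool], fee: int) -> bool:
    """Settle one round: stake-weighted verdict, slash dissenters, pay the fee to the majority."""
    eligible = [n for n in nodes if n.stake >= MIN_STAKE]
    yes_stake = sum(n.stake for n in eligible if votes[n.name])
    total_stake = sum(n.stake for n in eligible)
    verdict = yes_stake * 2 > total_stake  # strict stake-weighted majority (assumption)

    majority = [n for n in eligible if votes[n.name] == verdict]
    for n in eligible:
        if votes[n.name] != verdict:
            # Assumption: disagreeing with consensus is treated as a faulty vote and slashed.
            n.stake -= n.stake * SLASH_BPS // 10_000

    # Split the developer's API fee among majority voters in proportion to stake.
    majority_stake = sum(n.stake for n in majority)
    for n in majority:
        n.rewards += fee * n.stake // majority_stake
    return verdict


a, b, c = Node("a", 2_000), Node("b", 1_000), Node("c", 1_000)
verdict = settle_round([a, b, c], {"a": True, "b": True, "c": False}, fee=300)
print(verdict, a, b, c)
```

In this toy round, node `c` loses 100 of its 1,000 staked tokens for dissenting, while `a` and `b` split the 300-token fee 200/100 by stake. Integer math is used so balances never pick up floating-point dust.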

As we see more autonomous agents managing real-world assets (RWAs) and on-chain portfolios, "Don't Trust, Verify" isn't just a Bitcoin maxim anymore—it's the only way we survive the AI boom. In a world of deepfakes, Mira is bringing the transparency we've been missing.

#Mira $MIRA @mira_network