I’ve been watching the AI narrative accelerate at a pace that feels unsustainable. Models are getting larger, outputs are getting more convincing, and capital is flowing aggressively into anything labeled “AI.” But the more I study the architecture underneath, the more obvious one structural flaw becomes: we’ve optimized for generation, not verification.

That gap is exactly where I see Mira positioning itself.

Most AI systems today operate as black boxes. They produce answers, predictions, or creative outputs — and we accept them based on probability, not proof. For consumer applications, that might be tolerable. For financial systems, legal workflows, autonomous agents, or high-stakes decision engines, it’s not. The economic layer of AI requires verifiability, not trust.

What draws me to Mira is that it doesn’t compete at the model layer. It doesn’t try to out-train or out-scale foundation models. Instead, it focuses on the coordination and validation layer — where outputs can be independently checked, challenged, and cryptographically confirmed.

That design choice matters.

From Generation to Verification: A Structural Shift

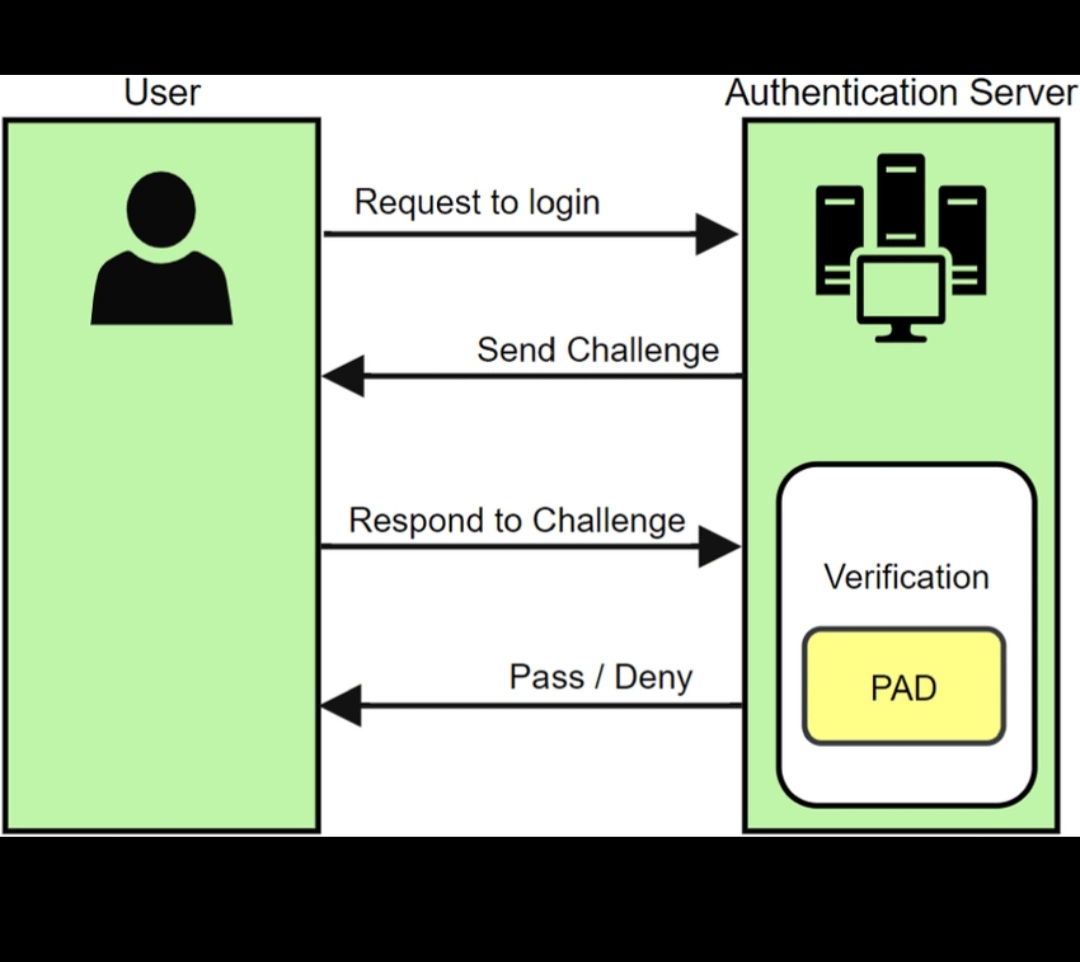

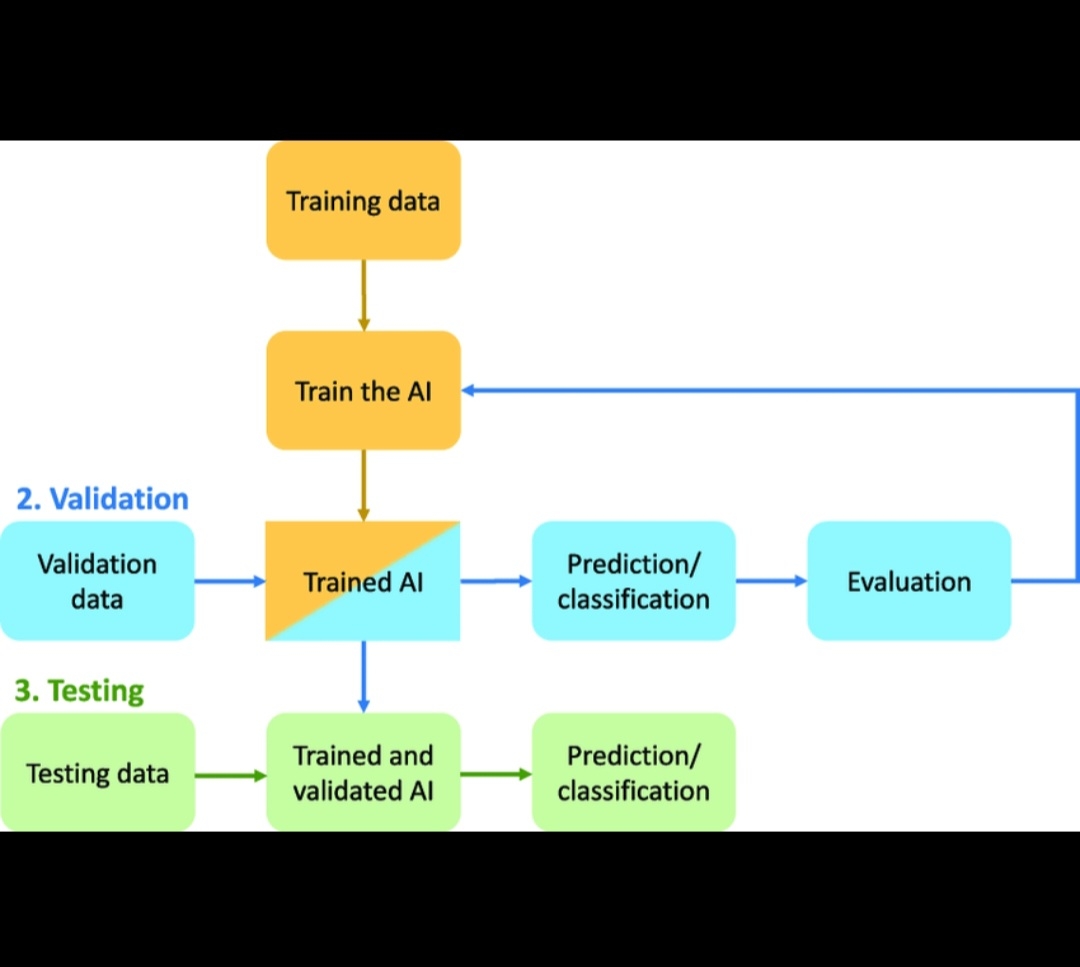

In traditional AI deployment, a model produces an output and the system accepts it as final. Mira introduces a different mental model: outputs can be submitted to a decentralized network of validators who verify correctness or consistency under defined rules.

That shift transforms AI from a probabilistic oracle into something closer to a verifiable service layer.

From an infrastructure perspective, this changes incentive design. Instead of trusting a centralized provider, verification becomes a market function. Validators are economically incentivized to check outputs. Incorrect outputs can be challenged. Correct verification is rewarded.
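
To make that incentive loop concrete, here is a minimal Python sketch of how one round of validator votes might be settled. Everything in it is my own illustration, not Mira’s actual protocol: the `Vote` type, the `settle` function, the two-thirds quorum, and the reward and penalty amounts are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Vote:
    validator: str
    verdict: bool  # True means the validator judged the output correct


def settle(votes, quorum_num=2, quorum_den=3, reward=1.0, penalty=1.0):
    """Settle one verification round (hypothetical rules, for illustration).

    Returns (verdict, payouts). The verdict is None when no side reaches
    the quorum; in that case nobody is paid or penalized.
    """
    if not votes:
        raise ValueError("at least one vote is required")

    tally = Counter(v.verdict for v in votes)
    majority, count = tally.most_common(1)[0]

    # Integer comparison avoids floating-point edge cases at the threshold.
    if count * quorum_den < len(votes) * quorum_num:
        return None, {v.validator: 0.0 for v in votes}

    # Validators who sided with the majority earn the reward; the rest lose stake.
    payouts = {
        v.validator: (reward if v.verdict == majority else -penalty)
        for v in votes
    }
    return majority, payouts
```

With three validators voting correct, correct, incorrect, the quorum is met, the output is accepted, and the dissenting validator is penalized. A one-to-one split reaches no quorum, so the round returns no verdict and pays nothing.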

This is not just a technical adjustment — it’s an economic redesign.

And in my view, that economic redesign is what gives MIRA long-term relevance. AI usage is growing quickly. If even a fraction of high-value AI workflows require independent validation, demand for a verification network grows alongside model adoption.

Modular by Design, Not Monolithic by Default

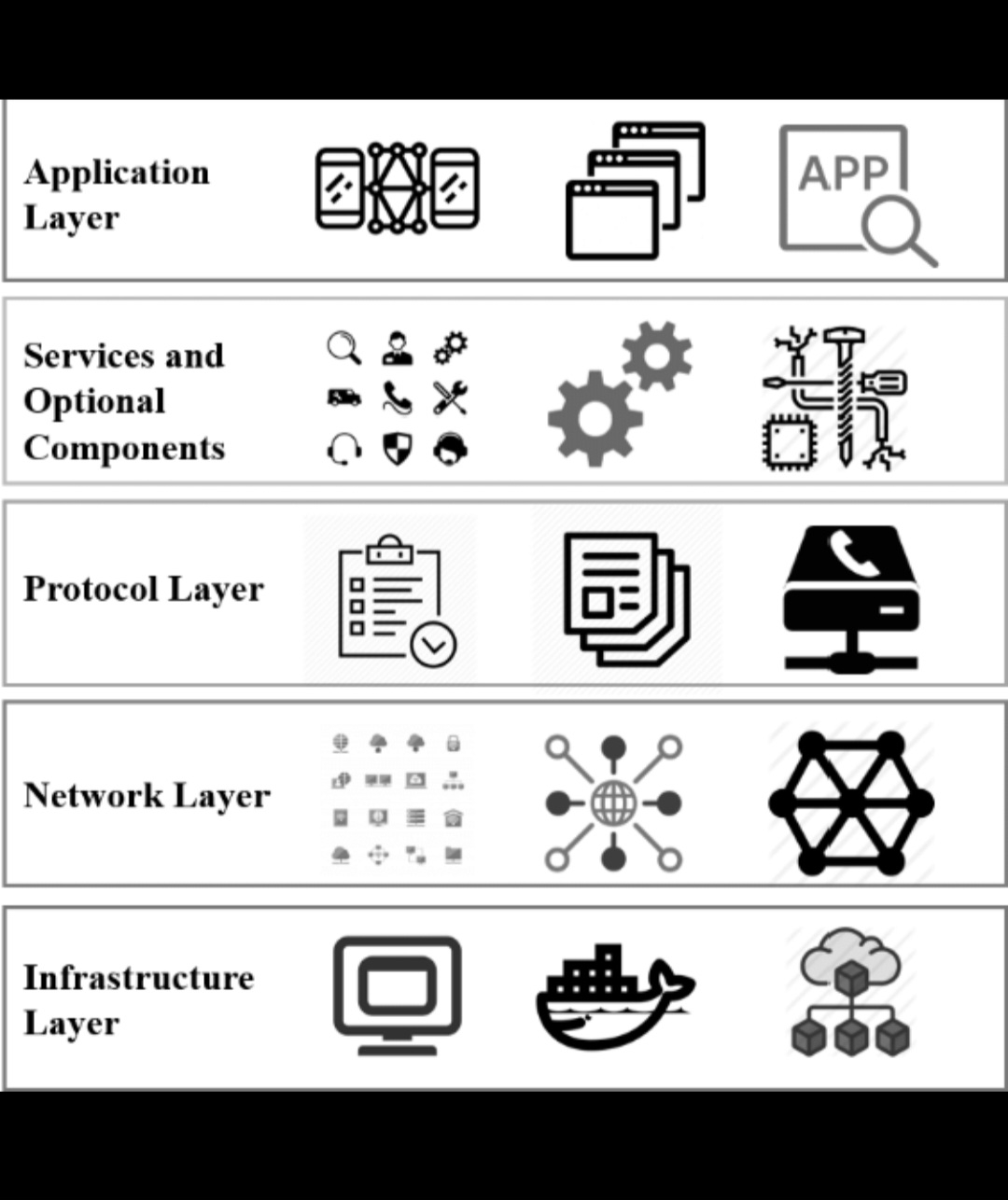

Another aspect I pay attention to is architectural positioning. Mira appears designed as a modular layer rather than a vertically integrated AI product. That matters because infrastructure that plugs into multiple ecosystems scales differently from applications competing for end users.

In practical terms, this means developers can integrate verification into existing AI pipelines without rebuilding everything from scratch. Verification becomes composable. It becomes programmable.
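
As a rough picture of what composable verification could look like in code, the sketch below wraps an existing generation function with a verification step. It is an assumption-laden illustration: `with_verification`, `VerificationError`, and the `verify` callback are names I made up, and in a real integration `verify` would stand in for a call to a verification network rather than a local check.

```python
from typing import Callable


class VerificationError(Exception):
    """Raised when an output does not pass the verification step."""


def with_verification(
    generate: Callable[[str], str],
    verify: Callable[[str, str], bool],
) -> Callable[[str], str]:
    """Return a new function that only releases verified outputs.

    `generate` is the existing pipeline step, left untouched.
    `verify` is a hypothetical stand-in for an external verification call.
    """

    def wrapped(prompt: str) -> str:
        output = generate(prompt)
        if not verify(prompt, output):
            raise VerificationError(f"output for {prompt!r} failed verification")
        return output

    return wrapped
```

The point of the wrapper shape is that the original pipeline does not change. Verification is added around it and can be removed or swapped just as easily.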

And that composability is what allows infrastructure to outlive narrative cycles.

The token layer — MIRA — sits at the center of this coordination mechanism. It’s not simply a speculative asset; it functions as the economic glue between requesters and validators. If verification demand grows, the token’s utility expands with network activity.

I’m not interested in short-term volatility. I’m interested in whether the protocol creates structural demand for participation. In Mira’s case, verification requests, staking dynamics, and validator incentives form a closed economic loop. That loop is what I evaluate when assessing durability.
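
One simple way to picture that loop is a request fee split among validators in proportion to their stake. This is a sketch under my own assumptions, not Mira’s documented fee model: the `distribute_fee` function and the pro-rata rule are hypothetical.

```python
def distribute_fee(fee, stakes):
    """Split a verification fee pro rata by stake (hypothetical rule).

    `stakes` maps validator name to staked amount.
    """
    total = sum(stakes.values())
    if total <= 0:
        raise ValueError("total stake must be positive")
    return {name: fee * stake / total for name, stake in stakes.items()}
```

Under this rule, more verification requests mean more fees, more fees make staking more attractive, and more stake raises the cost of validating dishonestly. That is the kind of feedback I look for.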

Why Verification May Become Non-Optional

The more AI agents begin interacting with financial systems, governance mechanisms, and automated contracts, the less acceptable unverifiable outputs become. Enterprises won’t rely solely on probabilistic confidence when capital or liability is involved.

Regulatory pressure, audit requirements, and institutional risk management all point in one direction: provability.

If that trend accelerates, verification networks stop being optional enhancements and start becoming baseline infrastructure.

That’s where I see Mira’s asymmetric positioning. It doesn’t need every AI output to be verified. It only needs the economically meaningful ones. High-value AI calls — financial execution, compliance checks, structured data extraction, automated trading logic — represent concentrated demand.
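
The underlying arithmetic is straightforward: verification is worth paying for when the expected cost of an unverified error exceeds the verification fee. The sketch below encodes that rule of thumb. The function name, the parameters, and the numbers in the comments are my own illustrative assumptions, not figures from Mira.

```python
def should_verify(value_at_risk, error_rate, verification_fee):
    """Return True when the expected loss from an error exceeds the fee.

    expected loss = error_rate * value_at_risk (a deliberately simple model).
    """
    return error_rate * value_at_risk > verification_fee


# A 100,000 trade with an assumed 1% error rate has an expected loss of 1,000,
# so a fee of 5 is easy to justify.
# A 2.00 chatbot reply with the same error rate has an expected loss of 0.02,
# so the same fee is not.
```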

Infrastructure that secures those flows captures durable relevance.

Infrastructure Ahead of Hype

What keeps me focused on MIRA isn’t narrative momentum. It’s the structural logic of the problem it addresses. AI is scaling. Trust is fragmenting. Verification demand is rising.

Protocols that sit between generation and execution occupy a powerful position in the stack.

I approach Mira the same way I approach any infrastructure layer:

Does it solve a real coordination problem?

Are incentives aligned between participants?

Can it integrate across ecosystems rather than compete with them?

Does its token model tie directly to network usage?

In Mira’s case, the answers so far point in the right direction.

We’re early in the lifecycle of verifiable AI networks. Many will experiment. Few will achieve economic density. But I believe the verification layer is structurally inevitable if AI is going to underpin serious financial and autonomous systems.

That’s why I’m watching MIRA not as a narrative trade — but as infrastructure.

And in this cycle, infrastructure is where durability is built.