03:17.

Two task hashes land almost on top of each other.

Didn't notice at first. Both clean. Both from separate agents. Fabric's autonomous task initiation fired at the edge like it always does. Machine-to-ledger submission pushed intent upstream before I finished scanning the first payload.

Parallel.

For a second, it looked clean.

Then saw the root.

Same dependency node. Or no. Whatever.

Different robots. Different sessions. One shared task dependency graph branch.

Dispatch went through. Conflict didn't.

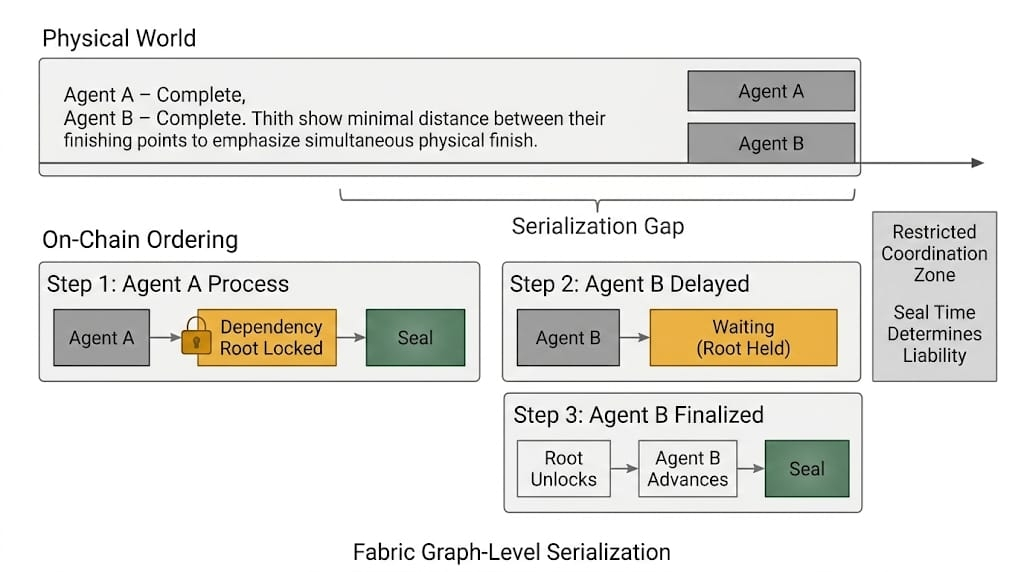

The first artifact routes into decentralized task arbitration. Fabric's proof-of-execution confirmation attached. Dependency root locked for sequencing. Not sealed yet. Just… held.

dependency_root: locked

arbitration_lane: lane_2

The second artifact arrives a breath later and tries to traverse the same branch.

Doesn't fail. Just...

stops moving.

status: pending_dependency

Upstream, both agents are already moving through their execution envelopes. On their side, autonomy is intact. State checkpoint logged locally. Sensor-signed data proofs bundled. Everything still feels concurrent.

On-chain, everything funnels into that root.

The second hash can't attach to Fabric's task-bound state transition while the root is held. It just sits in arbitration, unresolved, while the first keeps climbing.

I scroll.

Miss it.

Scroll again.

There it is... shared root identifier. Same branch. Different actor IDs.

I should have split the dependency.

I knew they were touching the same node.

Assumed the gap was enough... wasn't.

The first artifact clears task priority arbitration because it arrived first. First-in holds the root.

Same branch. One ordering.

The robot keeps moving. The proof doesn’t.

The second robot finishes its physical sequence. Arm retracts. Load placed. Local loop reports complete.

No verified action certificate yet.

Liability keys off the seal time. Not the movement.

On-chain action ordering hasn’t hardened for it.

The second proof sits behind the first in the validation path. Same dependency root. Same Fabric Foundation arbitration lane.

I start looking for congestion out of habit, then stop. This isn’t congestion. It’s the graph.

I lean back. Let it scroll. Hate that I’m waiting for it to “agree.”

No dispute surface. Just serialization.

The first artifact seals. Root unlocks. The second advances.
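A minimal sketch of that serialization, in Python. Everything named here (`RootArbiter`, `submit`, `seal`, the `arbitrating` and `sealed` status strings) is hypothetical, my own shorthand for what the log showed and not Fabric's actual API. First-in holds the root, anything later on the same root waits as `pending_dependency`, and sealing hands the root to the next in line.

```python
from collections import defaultdict, deque


class RootArbiter:
    """Toy model of first-in serialization on a shared dependency root.

    Hypothetical: this is not Fabric's arbitration logic, only the
    ordering behaviour described in the text.
    """

    def __init__(self):
        self.holder = {}                   # root -> task hash holding the lock
        self.waiting = defaultdict(deque)  # root -> task hashes queued behind it
        self.status = {}                   # task hash -> status string

    def submit(self, task, root):
        """First arrival takes the root; later arrivals queue behind it."""
        if root not in self.holder:
            self.holder[root] = task
            self.status[task] = "arbitrating"
        else:
            self.waiting[root].append(task)
            self.status[task] = "pending_dependency"
        return self.status[task]

    def seal(self, root):
        """Seal the current holder, unlock the root, advance the next task."""
        sealed = self.holder.pop(root)
        self.status[sealed] = "sealed"
        if self.waiting[root]:
            nxt = self.waiting[root].popleft()
            self.holder[root] = nxt
            self.status[nxt] = "arbitrating"
        return sealed


arbiter = RootArbiter()
arbiter.submit("hash_a", "root_1")   # first-in: holds the root
arbiter.submit("hash_b", "root_1")   # same root: waits
arbiter.seal("root_1")               # hash_a seals, hash_b advances
```

Nothing in the sketch rejects the second task. It only orders it, which is why there is no dispute surface to look at.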

A few cycles lost.

Enough.

Because the second task was referencing a restricted coordination zone... the kind where we don't treat “local complete” as permission. The physical action already occurred under the assumption of independent autonomy. The economic reality waited for dependency clearance.

I open the task dependency graph visualization. Two branches converging into one root. Should’ve forked the state before dispatch. That’s on me. Not fully.

Both agents kept moving.

The root stayed locked.

I hesitate before pushing the next batch.

There's a third agent queued. Different session-bound command window on Fabric. Unknown dependency overlap. I check the graph this time before dispatch.

One branch looks isolated.

Looks.

I don't fully trust "looks" anymore.
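What checking the graph amounts to, sketched in Python under stated assumptions: the graph is a plain dict mapping each node to its parent, and `root_of` and `shares_root` are hypothetical helpers, not anything Fabric exposes. Two tasks contend if walking up from their nodes ends at the same root.

```python
def root_of(parent, node):
    """Walk parent links until reaching a node with no parent (the root)."""
    seen = set()
    while parent.get(node) is not None:
        if node in seen:
            raise ValueError("cycle in dependency graph")
        seen.add(node)
        node = parent[node]
    return node


def shares_root(parent, node_a, node_b):
    """True if both nodes resolve to the same dependency root."""
    return root_of(parent, node_a) == root_of(parent, node_b)


# Hypothetical graph: two branches under root_1, one under root_2.
graph = {
    "root_1": None,
    "branch_a": "root_1",
    "branch_b": "root_1",
    "root_2": None,
    "branch_c": "root_2",
}

contended = shares_root(graph, "branch_a", "branch_b")  # same root
isolated = not shares_root(graph, "branch_a", "branch_c")  # different roots
```

The check only answers for the graph as it stands at dispatch time. An agent that initiates afterward can still land on the same branch.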

The first two artifacts are now sealed. Both legitimate. No rollback. No fault.

But the second one cleared later than the physical world assumed.

I throttle nothing yet.

I split the dependency root for the next run. Separate coordination nodes. Artificial isolation. More branches. Less shared contention.

Now I’ve got two receipts to stitch.

The third artifact enters.

Different root.

Arbitration accepts it immediately.

I watch longer than I should.

Another agent on Fabric's agent-native infrastructure initiates before the third hardens.

I check the graph again.

Same branch as the third.

Cursor on dispatch.

I don't send.

Not yet.