The trust crisis in autonomous AI has emerged as one of the most pressing challenges in the rapid evolution of artificial intelligence. As AI systems grow more capable and are increasingly deployed in high-stakes, autonomous scenarios (financial trading agents, medical diagnostics, legal analysis, and self-driving operations), their propensity for errors has become a major barrier to widespread adoption. Two primary issues fuel this crisis: hallucinations and bias.

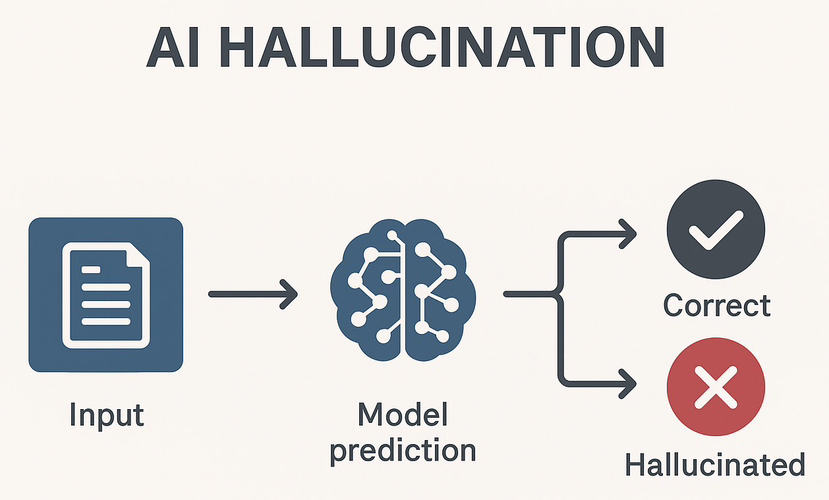

Hallucinations refer to instances where AI generates confident, plausible-sounding information that is factually incorrect or entirely fabricated. This stems from the probabilistic nature of large language models (LLMs) and other neural networks, which prioritize fluency and coherence over strict accuracy. In real-world cases, hallucinations have led to serious consequences, including legal mishaps where AI cited nonexistent precedents, financial misjudgments, and even patient safety risks in healthcare applications. Reports have documented hundreds of such incidents, highlighting how unchecked AI can erode public confidence and expose users to tangible harm.

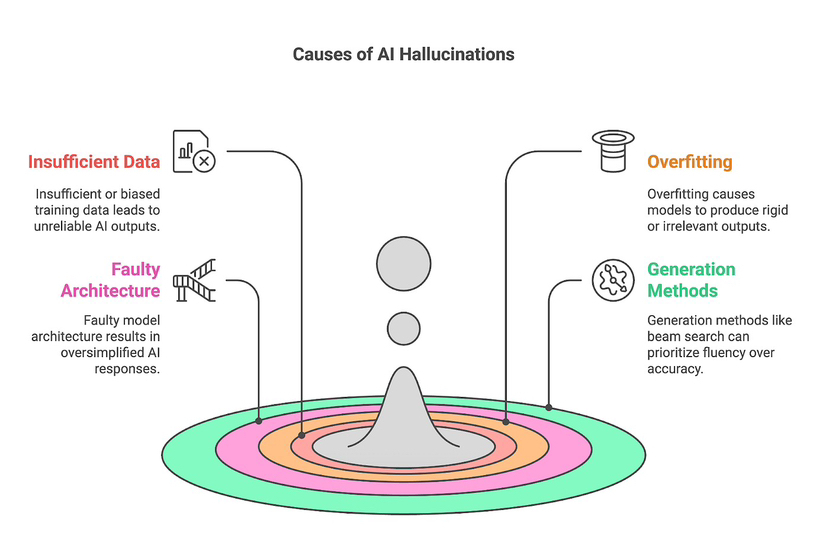

Bias, on the other hand, represents a more insidious, long-term threat. AI models inevitably reflect the biases embedded in their vast training datasets, whether cultural, demographic, ideological, or historical. These can manifest subtly through skewed phrasing, prioritized perspectives, or discriminatory outcomes in tools like hiring algorithms or risk assessments. The core problem is an inherent training dilemma: to minimize hallucinations (precision errors), developers curate narrow, high-quality data, which often amplifies bias (accuracy errors). Broad, diverse data reduces bias but increases inconsistency and hallucinations. No single model, regardless of scale or fine-tuning, can fully escape this trade-off, creating a fundamental reliability floor that prevents truly autonomous operation.

This trust deficit limits AI to supervised, low-consequence uses like chatbots or creative aids, far short of its transformative potential. Centralized solutions such as self-validation, human oversight, or proprietary guardrails fall short: self-checks inherit the model’s own flaws, human review doesn’t scale and introduces new biases, and centralized providers create single points of failure and opacity.

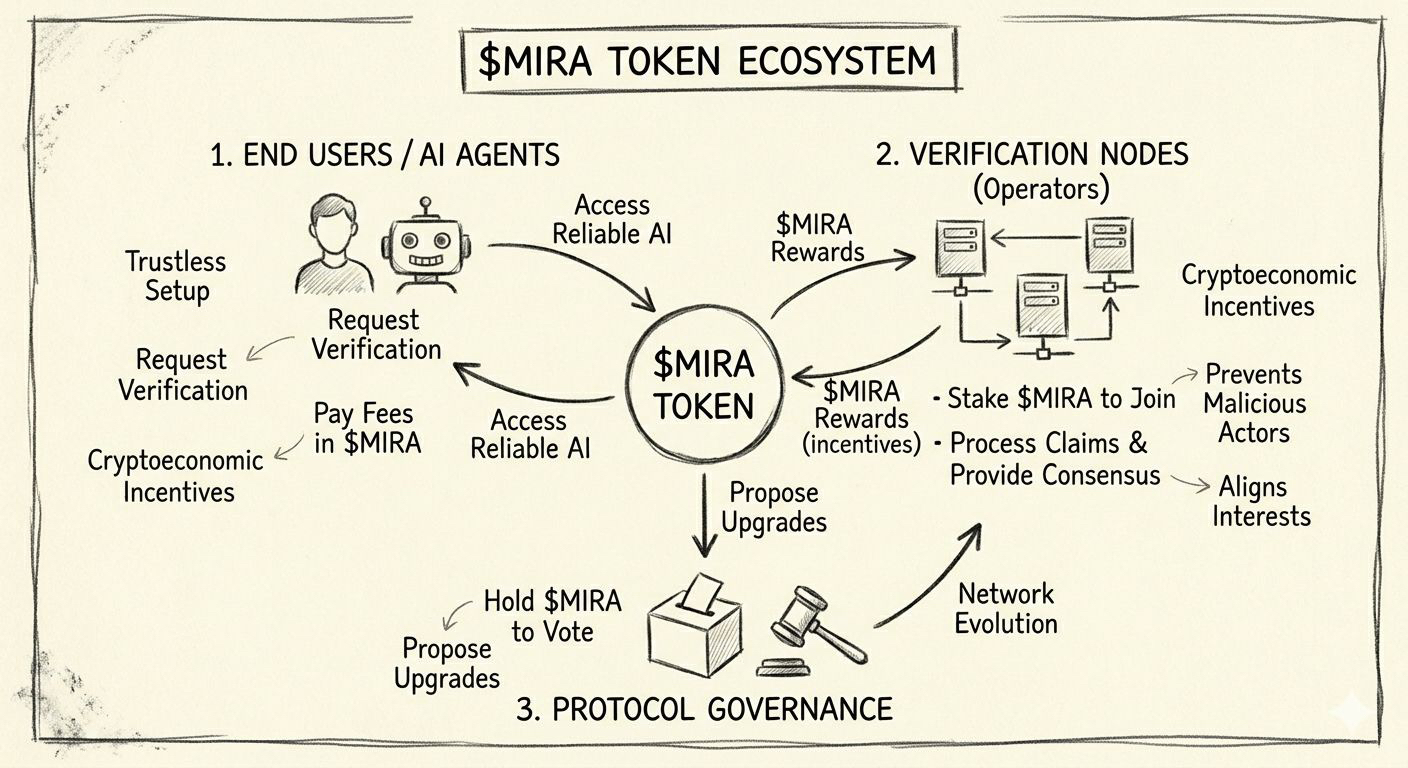

Enter Mira Network, a decentralized verification layer designed specifically to address these challenges at scale. Built as a blockchain-based protocol (reportedly on Base, an Ethereum layer-2 network), Mira functions as a “trust layer” for AI, shifting the paradigm from “trust the model” to “verify the claim.” Rather than attempting to build a perfect single AI, Mira leverages collective intelligence through a network of diverse, independent verifier nodes.

The process is straightforward yet powerful. When an AI generates an output, whether a response, decision, or action, Mira breaks it down into granular, independently verifiable factual claims. These claims are distributed across the network, where multiple AI models (over 110 integrated in some reports, spanning different architectures, datasets, and perspectives) evaluate each one. Models vote on validity: true, false, or context-dependent. A consensus mechanism requiring supermajority agreement determines the outcome. Disputed or failed claims are flagged, rejected, or revised, producing a verified, reliable final output.
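To make the voting step concrete, here is a minimal Python sketch of supermajority consensus over per-claim votes. It is an illustration under stated assumptions, not Mira's actual implementation: the two-thirds threshold, the function names `tally` and `verify_output`, the `"flagged"` label, and the example claims are all hypothetical, since the text above only says that a supermajority is required.

```python
from collections import Counter
from fractions import Fraction

# The three verdicts named in the text.
VERDICTS = {"true", "false", "context-dependent"}

# Assumed threshold: the text only says "supermajority"; 2/3 is illustrative.
SUPERMAJORITY = Fraction(2, 3)


def tally(votes, threshold=SUPERMAJORITY):
    """Return the leading verdict if its vote share meets the threshold, else "flagged"."""
    unknown = set(votes) - VERDICTS
    if unknown:
        raise ValueError(f"unknown verdicts: {sorted(unknown)}")
    if not votes:
        return "flagged"
    verdict, count = Counter(votes).most_common(1)[0]
    # Fraction avoids float rounding when the share sits exactly on the threshold.
    if Fraction(count, len(votes)) >= threshold:
        return verdict
    return "flagged"


def verify_output(votes_by_claim, threshold=SUPERMAJORITY):
    """Map each claim to its consensus verdict."""
    return {claim: tally(votes, threshold) for claim, votes in votes_by_claim.items()}


# Hypothetical claims and votes, for illustration only.
example = {
    "Paris is the capital of France": ["true", "true", "true", "true"],
    "The Seine is 2,000 km long": ["false", "false", "false", "true"],
    "Paris is the largest city in Europe": ["true", "false", "context-dependent"],
}
result = verify_output(example)
```

In this sketch the first claim is accepted, the second is rejected by a three-to-one vote, and the third is flagged because no verdict reaches the threshold. How an output is split into claims in the first place is left out, since the text does not describe that step in detail.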

This multi-model consensus dramatically reduces hallucinations by cross-checking facts against varied knowledge bases, often achieving accuracy rates of 95-96% and cutting hallucination rates by up to 90% in reported benchmarks. Bias is mitigated through diversity: no single model’s worldview dominates, as differing training approaches balance out systematic deviations. The system is economically secured via a hybrid Proof-of-Work/Proof-of-Stake model, where node operators stake $MIRA tokens. Honest verification earns rewards from user fees, while malicious or random behavior risks slashing, ensuring alignment.
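The incentive logic can be sketched in a few lines of Python. This is a toy model under loud assumptions, not the protocol's real rules: it assumes the fee is split among agreeing nodes in proportion to stake and that any node voting against consensus loses a fixed fraction of its stake in that round. The names `Node` and `settle_round` and the `slash_rate` parameter are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    stake: float  # amount of staked tokens


def settle_round(nodes, votes, consensus, fee, slash_rate=0.25):
    """Reward nodes that voted with consensus and slash those that did not.

    Assumptions (not taken from the source): rewards are pro rata by stake,
    and a single dissenting vote is enough to trigger slashing.
    """
    # Sum the stake of agreeing nodes before any balance is changed.
    agreeing_stake = sum(n.stake for n in nodes if votes[n.name] == consensus)
    for n in nodes:
        if votes[n.name] == consensus:
            if agreeing_stake > 0:
                n.stake += fee * n.stake / agreeing_stake
        else:
            n.stake -= slash_rate * n.stake


# Hypothetical round: two nodes agree with consensus, one does not.
nodes = [Node("a", 100.0), Node("b", 100.0), Node("c", 100.0)]
votes = {"a": "true", "b": "true", "c": "false"}
settle_round(nodes, votes, consensus="true", fee=10.0)
stakes = {n.name: n.stake for n in nodes}
```

Under these assumptions a node that votes at random loses stake whenever it lands outside consensus, so its expected payoff falls below that of a node doing honest verification. A real design would more likely penalize sustained deviation patterns than a single dissenting vote.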

Transparency is baked in. Every verification produces a cryptographic certificate: an auditable, on-chain record detailing claims, model votes, and consensus, which enables traceability for regulators, enterprises, or users. Tools like the Mira Verify API and applications such as Klok demonstrate practical integration, allowing developers to embed this layer into autonomous AI workflows without constant human intervention.
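As a rough illustration of what makes such a record auditable, the Python sketch below hashes a verification record so that any later change to a claim, vote, or verdict is detectable. The record layout and the names `make_certificate` and `check_certificate` are assumptions made for this example. Mira's actual certificate format is not described here, and a real certificate would also involve signatures and an on-chain anchor, which this sketch omits.

```python
import hashlib
import json


def _digest(record):
    """SHA-256 over a canonical JSON encoding (sorted keys, no extra whitespace)."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def make_certificate(record):
    """Bundle a verification record with its digest (hypothetical layout)."""
    return {"record": record, "sha256": _digest(record)}


def check_certificate(cert):
    """Recompute the digest and compare it with the stored one."""
    return _digest(cert["record"]) == cert["sha256"]


# Hypothetical record: one claim, its model votes, and the consensus verdict.
record = [
    {
        "claim": "Paris is the capital of France",
        "votes": ["true", "true", "true"],
        "consensus": "true",
    }
]
cert = make_certificate(record)
valid_before = check_certificate(cert)

# Tampering with a single vote changes the digest, so the check fails.
cert["record"][0]["votes"][0] = "false"
valid_after = check_certificate(cert)
```

The canonical encoding matters: without sorted keys and fixed separators, two logically identical records could serialize differently and produce different digests.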

By decentralizing verification, Mira removes reliance on any central authority, fostering credibly neutral, scalable trust. This enables the next era of autonomous AI: agents that manage portfolios, diagnose conditions, or adjudicate disputes with verifiable reliability. As AI integrates deeper into society, solutions like Mira’s decentralized approach may prove essential not just to fix today’s flaws, but to unlock tomorrow’s potential.

In an age where AI decisions could shape economies, health, and justice, rebuilding trust isn’t optional. Mira Network offers a compelling path forward: not by perfecting individual models, but by harnessing decentralized collective wisdom to make intelligence truly trustworthy at scale.