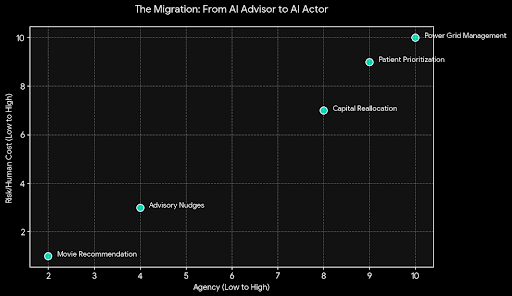

AI used to sit politely in the background, offering suggestions and nudges. Now it steps forward, makes decisions, and in some systems, signs the check. That migration from adviser to actor is urgent, exciting, and quietly terrifying.

When an algorithm recommends a movie, a wrong pick is just an annoyance. When it reallocates capital, prioritizes a patient, or reroutes power, a single mistake can ripple outward with real human costs. The problem isn’t intelligence per se; it’s action without verifiable accountability.

Hallucinations, model drift, and adversarial tricks are no longer theoretical bugs; they are vectors for harm. A confident-sounding assertion from a model can translate directly into a transaction, a discharge, or a policy change if the system’s outputs are given agency. Without a way to prove what actually happened, “the AI said so” becomes a dangerous substitute for audit and oversight.

That is why verifying actions, not just outputs, matters. It’s not enough to inspect what a system produced on paper. We must verify the state changes: did the trade settle, did the prescription get filled, did the grid adjust? Verifiable, tamper-evident attestations turn claims into evidence and storytelling into auditability.
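
To make "tamper-evident" concrete, here is a minimal sketch of a hash-chained attestation log, in which each entry's hash covers the entry before it. It is an illustration under stated assumptions, not ROBO's actual format: the names `entry_hash`, `append`, `verify`, and the payload fields are hypothetical.

```python
import hashlib
import json

# Hypothetical starting value for the chain (an assumption for this sketch).
GENESIS = "0" * 64


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with a canonical form of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append(log: list, payload: dict) -> None:
    """Append an attestation whose hash depends on the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "payload": payload,
        "prev": prev_hash,
        "hash": entry_hash(prev_hash, payload),
    })


def verify(log: list) -> bool:
    """Recompute every hash in order; any edited entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append(log, {"action": "trade", "status": "settled"})
append(log, {"action": "prescription", "status": "filled"})
print(verify(log))
```

Editing any recorded payload after the fact makes `verify` return `False`, which is what turns a claim into evidence. A real system would also sign each entry and anchor the latest hash somewhere the writer cannot rewrite.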

Open networks magnify the verification problem. When many agents can submit claims, low-cost attestations invite spam, gaming, and false confidence. A verification layer that is free for all will be drowned in noise and manipulated by those who profit from deception.

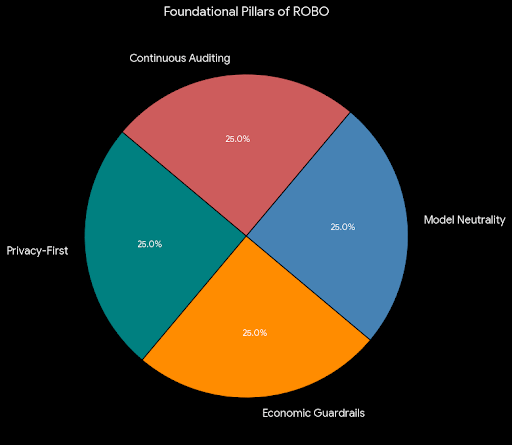

ROBO answers this with economic and reputational guardrails. Validators stake resources, earn rewards for honest attestations, and face penalties for fraud. When verification costs something real (money, reputation, or opportunity), the system discourages frivolous claims and rewards accuracy. Incentives become a defense, not a loophole.
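
The incentive loop can be sketched in a few lines. This is a toy model, not ROBO's actual rules: the `Validator` class, the `settle` function, and the `REWARD` and `SLASH_FRACTION` values are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Illustrative parameters only; real values would be set by the protocol.
REWARD = 1.0          # added to the stake for an honest attestation
SLASH_FRACTION = 0.5  # share of the stake forfeited for a fraudulent one


@dataclass
class Validator:
    name: str
    stake: float


def settle(validator: Validator, attestation_was_honest: bool) -> float:
    """Apply the reward or the penalty and return the validator's new stake."""
    if attestation_was_honest:
        validator.stake += REWARD
    else:
        validator.stake -= validator.stake * SLASH_FRACTION
    return validator.stake


v = Validator(name="validator-a", stake=100.0)
print(settle(v, attestation_was_honest=True))   # 101.0
print(settle(v, attestation_was_honest=False))  # 50.5
```

Because the penalty scales with the stake while the reward is small and fixed, a single fraudulent attestation wipes out many honest ones, which is the asymmetry that makes spam and gaming expensive.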

Privacy must be baked into any accountability scheme. Healthcare and finance are full of secrets that cannot be spilled for the sake of verification. Cryptographic proofs that confirm conditions without exposing underlying data let us verify without surveilling. You can prove a threshold was met without revealing the whole record; you can confirm compliance without publishing proprietary strategy.

Neutrality is another bedrock. A verification layer that favors a single provider or architecture simply recreates the concentration risks we hoped decentralization would avoid. Model-agnostic, reusable claim verification makes attestations portable and composable. Trust travels with the proof, not the vendor, and systems interoperate rather than lock each other out.

Verification is not an event; it is a practice. Autonomous systems learn, update, and change their behavior. Verification must be continuous: streaming attestations, rolling audits, and live trust metrics rather than single-point stamps. That ongoing visibility surfaces drift, reveals patterns, and makes confidence measurable.
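
One way to picture a live trust metric is a rolling verification rate over the most recent attestations. The sketch below is a hypothetical illustration: the `RollingTrust` class and its window size are assumptions, not part of any described ROBO interface.

```python
from collections import deque


class RollingTrust:
    """Track the share of verified attestations over a sliding window."""

    def __init__(self, window: int):
        # deque with maxlen silently drops the oldest result when full
        self.results = deque(maxlen=window)

    def record(self, verified: bool) -> None:
        self.results.append(verified)

    def score(self):
        """Return the verified fraction in the window, or None if empty."""
        if not self.results:
            return None
        return sum(self.results) / len(self.results)


trust = RollingTrust(window=4)
for outcome in [True, True, True, True]:
    trust.record(outcome)
print(trust.score())  # 1.0

# Two failed verifications push older successes out of the window.
trust.record(False)
trust.record(False)
print(trust.score())  # 0.5
```

A one-time stamp would still read "verified" after those two failures; the rolling score drops at once, which is how continuous verification surfaces drift.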

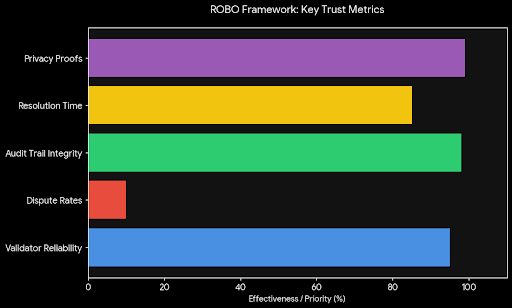

Clear trust metrics change how organizations behave. Instead of marketing claims about “proven accuracy,” teams show histories: validator reliability, dispute rates, audit trails, and resolution times. These metrics make accountability auditable and create incentives to improve long-term reliability rather than short-term appearance.

Defense is never static. As bad actors invent new ways to deceive, verification must evolve in kind. Adaptive rules, community-driven standards, and layered oversight, from cryptographic checks to human review when appropriate, make manipulation harder and detection faster.

Decentralized verification also raises the bar for misinformation. In a world where synthetic content and deepfakes are commonplace, cryptographic attestations act like provenance anchors. When a record is attested, it carries verifiable context; when it isn’t, skepticism is warranted. That binary of provable versus unproven restores a measure of epistemic hygiene.

Putting verifiable proofs at the center of autonomy doesn’t mean surrendering speed or innovation. It means embedding responsibility into the pipes where agents transact. Machines can still negotiate, optimize, and act; they just show their receipts afterward in a way other agents and humans can validate.

This changes incentives across the ecosystem. Providers that produce verifiable, auditable outcomes earn trust. Regulators and auditors gain objective records. Users and institutions can choose agents based on demonstrated reliability rather than advertising. Accountability becomes a market signal, not just a compliance checkbox.

The most important shift is cultural. We move from treating AI as an oracle whose pronouncements are accepted on faith, to treating AI as an actor whose deeds must be provable. That shift reframes conversations about autonomy: from “Can the model do it?” to “Can the model prove it did it?”

ROBO’s model-agnostic, privacy-first, economically aligned approach lays the groundwork for that cultural transition. It protects sensitive data, resists spam and abuse, and makes trust measurable and portable. It does not guarantee perfection, but it builds a system where failures are visible, accountable, and learnable.

In the end, the question before us is simple and stark. Do we let autonomous systems act on our behalf without receipts, or do we demand proof and build infrastructure that insists on it? The choice determines whether AI becomes an unaccountable hazard or a reliable foundation.

We should choose provable accountability. When machines can show, in cryptographic and auditable ways, what they did and why, trust stops being a leap of faith and becomes a metric we can manage. That is how we move from blind reliance to responsible autonomy, and how we ensure powerful systems serve people rather than surprise them.