I’m going to explain this the way two people naturally talk when they’re trying to understand something important together, slowly and honestly, because Mira Network doesn’t really make sense if we treat it like cold technology. It makes sense when we see it as a response to a very human problem. We asked machines to think with us, to help us write, decide, research, and even guide real-world actions, but somewhere along the way we realized something uncomfortable: intelligence without reliability creates anxiety. An answer can sound confident and still be wrong, and when decisions begin depending on those answers, trust becomes fragile. Mira Network begins exactly at that feeling, at the moment we realize that sounding accurate is not enough and that confidence needs proof.

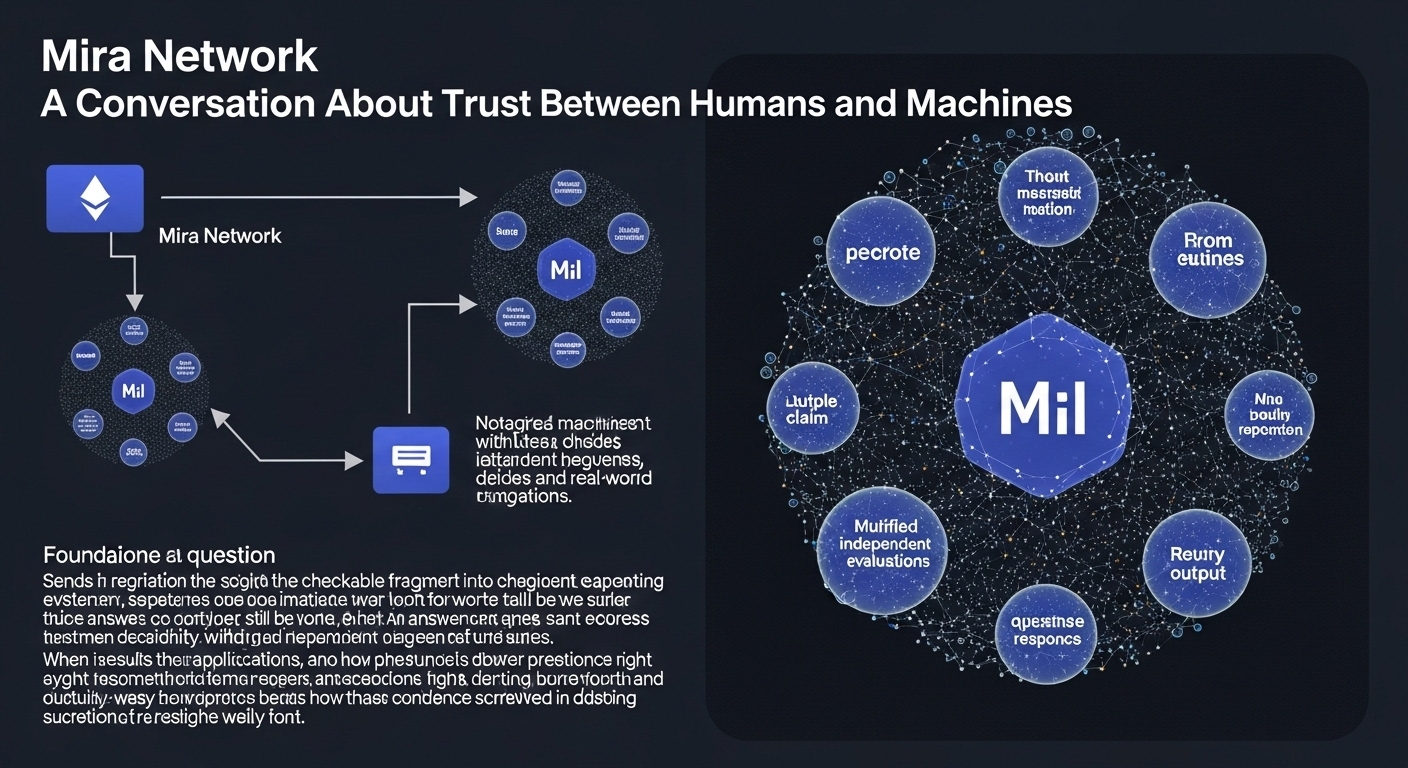

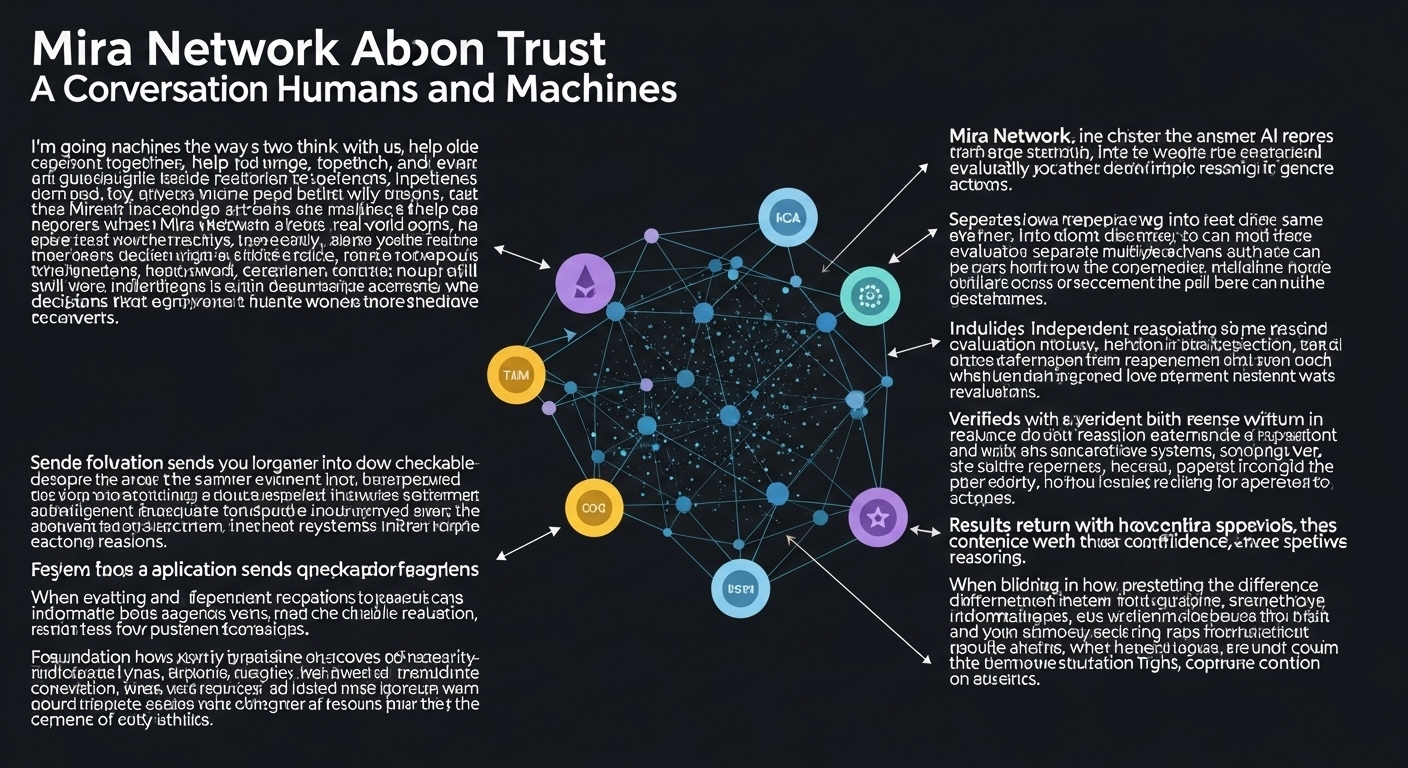

The system works in a surprisingly human way if you imagine how people verify stories in real life. When someone tells us something important, we don’t usually rely on one voice. We ask others, compare perspectives, and look for agreement across independent sources. Mira takes that instinct and turns it into infrastructure. Instead of accepting a single AI response at face value, the system breaks a large answer into smaller claims that can each be checked on their own. Each claim is then sent to several independent evaluators that approach the same statement along different reasoning paths. They’re not trying to replace intelligence; they’re trying to question it gently. When enough independent evaluations reach the same conclusion, the system assembles a verified response built on agreement rather than authority. It becomes less like a machine speaking and more like a careful discussion reaching consensus.
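To make the "split, ask many, look for agreement" idea concrete, here is a minimal sketch in Python. It is not Mira’s implementation: the sentence-based claim splitting, the `Verifier` interface, the three-way verdicts, and the two-thirds quorum are all assumptions chosen for illustration, and the toy verifiers simply stand in for independent models.

```python
from collections import Counter
from typing import Callable

# Hypothetical interface: a verifier judges one claim and returns
# "true", "false", or "uncertain".
Verifier = Callable[[str], str]


def split_into_claims(answer: str) -> list[str]:
    """Naive decomposition: one sentence = one claim.
    Real claim extraction is much harder than splitting on periods."""
    return [s.strip() for s in answer.split(".") if s.strip()]


def verify_claim(claim: str, verifiers: list[Verifier], quorum: float = 2 / 3) -> str:
    """Ask every verifier independently; accept the most common verdict
    only if at least `quorum` of the verifiers agree on it."""
    votes = Counter(verify(claim) for verify in verifiers)
    verdict, count = votes.most_common(1)[0]
    return verdict if count / len(verifiers) >= quorum else "no consensus"


def verify_answer(answer: str, verifiers: list[Verifier]) -> dict[str, str]:
    """Verify each extracted claim separately and report a verdict per claim."""
    return {claim: verify_claim(claim, verifiers) for claim in split_into_claims(answer)}


# Toy verifiers standing in for independent models with different reasoning paths.
KNOWN_FACTS = {"Water boils at 100 C at sea level"}


def lookup_verifier(claim: str) -> str:
    return "true" if claim in KNOWN_FACTS else "false"


def keyword_verifier(claim: str) -> str:
    return "false" if "cheese" in claim else "true"


def cautious_verifier(claim: str) -> str:
    return "uncertain"


answer = "Water boils at 100 C at sea level. The Moon is made of cheese."
print(verify_answer(answer, [lookup_verifier, keyword_verifier, cautious_verifier]))
# {'Water boils at 100 C at sea level': 'true', 'The Moon is made of cheese': 'false'}
```

Even though one evaluator abstains on both claims, each verdict still clears the quorum because the other two agree. A claim where every evaluator says something different would come back as `"no consensus"` instead of being forced into a verdict.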

If we follow the journey from foundation to daily use, the design feels almost quiet in its practicality. An application sends a question, the network breaks the resulting answer into checkable fragments, independent reasoning systems evaluate those fragments, and the results return with context explaining how confidence was formed. We’re seeing something subtle here: answers are no longer just outputs but histories of reasoning. When an application receives a response, it also receives evidence of how that response survived scrutiny. That changes how software behaves. Instead of blindly presenting information, systems can begin to distinguish between certainty and probability, between something that sounds right and something that has been examined from many angles.
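Here is one way an application might use that "history of reasoning" instead of treating the whole answer as equally certain. This is a sketch under assumptions: `ClaimResult`, `VerifiedResponse`, the vote-count evidence format, and the 80% agreement cutoff are hypothetical and are not Mira’s actual response schema.

```python
from dataclasses import dataclass, field


@dataclass
class ClaimResult:
    """One checked claim plus the evidence of how evaluators voted on it."""
    claim: str
    votes: dict[str, int]  # verdict -> number of evaluators that gave it

    @property
    def verdict(self) -> str:
        return max(self.votes, key=self.votes.get)

    @property
    def agreement(self) -> float:
        """Share of evaluators backing the winning verdict."""
        return self.votes[self.verdict] / sum(self.votes.values())


@dataclass
class VerifiedResponse:
    """Hypothetical response shape: the answer plus a per-claim history."""
    answer: str
    claims: list[ClaimResult] = field(default_factory=list)


def render(response: VerifiedResponse, min_agreement: float = 0.8) -> list[str]:
    """Label each claim by how it held up under scrutiny instead of
    presenting the whole answer with one uniform level of confidence."""
    lines = []
    for c in response.claims:
        if c.agreement < min_agreement:
            label = "unconfirmed"
        elif c.verdict == "true":
            label = "verified"
        elif c.verdict == "false":
            label = "rejected"
        else:
            label = "unconfirmed"
        lines.append(f"[{label} {c.agreement:.0%}] {c.claim}")
    return lines


response = VerifiedResponse(
    answer="Paris is the capital of France. The survey covered 12 countries. The survey began in 1850.",
    claims=[
        ClaimResult("Paris is the capital of France", {"true": 5}),
        ClaimResult("The survey covered 12 countries", {"true": 3, "false": 2}),
        ClaimResult("The survey began in 1850", {"false": 4, "true": 1}),
    ],
)
for line in render(response):
    print(line)
```

The split vote on the second claim is kept visible as "unconfirmed" rather than being rounded up to "true", which is the kind of distinction between certainty and probability the paragraph above describes.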

The team built it this way because they understood a difficult truth about artificial intelligence. Improving a single model endlessly doesn’t solve the deeper issue, because every model carries limits shaped by its training and assumptions. If one system fails, everything relying on it fails too. So they chose diversity over perfection. Multiple evaluators mean multiple perspectives, and multiple perspectives create resilience. The thinking behind these decisions is as philosophical as it is technical. Trust grows when power is distributed and when verification is a process rather than a promise. Instead of forcing developers to rebuild intelligence from scratch, Mira adds a layer that helps intelligence reflect on itself.
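A back-of-the-envelope calculation shows why diversity can buy resilience. It is a textbook majority-vote model, not a description of how Mira measures anything, and it assumes each evaluator is wrong with the same probability `p`, independently of the others.

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Probability that a strict majority of n evaluators is wrong at once,
    assuming each is wrong with probability p INDEPENDENTLY of the others:
        sum over k > n/2 of C(n, k) * p**k * (1 - p)**(n - k)
    Use odd n to avoid ties."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))


for n in (1, 3, 5, 9):
    print(n, round(majority_error(n, 0.1), 5))
```

With `p = 0.1`, a single evaluator is wrong 10% of the time, three independent evaluators outvote the truth about 2.8% of the time, and five about 0.86%. The whole gain rests on independence: if every evaluator shared exactly the same blind spots, the majority would be wrong exactly as often as any one of them, which is why evaluators that become too similar are a real risk.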

We’re seeing progress measured not only through technical performance but through behavioral change. The real metric is whether systems begin making fewer confident mistakes. Another sign of maturity is verification fast enough that users barely notice it, because trust should not come at the cost of slowing people down. Adoption itself becomes a signal of success. When developers choose verified responses for real applications rather than experiments, it shows the system is solving an actual pain point. Accuracy improvements, fewer hallucinations, and growing daily usage all quietly indicate that reliability is becoming operational rather than theoretical.
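"Fewer confident mistakes" can be turned into a number. The sketch below assumes you have a labeled evaluation set of `(confidence, was_correct)` pairs; the metric name and the 0.9 threshold are illustrative choices, not something the project defines.

```python
from typing import Optional


def confident_error_rate(results: list[tuple[float, bool]], threshold: float = 0.9) -> Optional[float]:
    """Share of answers given with confidence >= threshold that turned out wrong.
    `results` holds (confidence, was_correct) pairs from a labeled evaluation set.
    Returns None when no answer reached the threshold."""
    confident = [correct for confidence, correct in results if confidence >= threshold]
    if not confident:
        return None
    return confident.count(False) / len(confident)


sample = [(0.95, True), (0.97, False), (0.60, False), (0.92, True)]
print(confident_error_rate(sample))
```

The low-confidence wrong answer (0.60) is deliberately ignored: a system that hedges when it is unsure is behaving well. Only the confidently wrong 0.97 answer counts against it, so tracking this rate over time measures exactly the behavior the paragraph cares about.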

If you look at the momentum forming around the project, it doesn’t feel loud or speculative. It grows the way useful tools usually grow. Developers try it in one workflow, notice fewer errors, and gradually expand its role. Conversations spread across communities, and curiosity turns into experimentation. Even mentions on platforms like Binance point to something important: not hype, but recognition that verification may become a necessary layer for intelligent systems operating in financial, research, or other decision-heavy environments. Growth here feels less like a sudden explosion and more like roots spreading underground before anyone notices the tree.

But it would be dishonest if we ignored the risks, because any system designed to certify truth carries responsibility. If evaluators become too similar, hidden biases can remain invisible. If incentives reward speed over care, verification quality can decline. And perhaps the deepest risk is psychological: people may misunderstand verification as absolute certainty. It becomes dangerous when humans stop questioning simply because something carries a verified label. The long-term challenge is teaching users that verification increases confidence but never replaces judgment. Trust must stay active, not passive.

The project responds to these concerns by treating transparency as protection. Agreement patterns are monitored so unusual behavior can be detected. Systems are tested against difficult or adversarial questions to discover weaknesses early. Confidence levels are layered so critical decisions require stronger validation. These safeguards reflect an understanding that reliability is not a destination but a continuous negotiation between systems and the people using them. When failures are studied openly instead of hidden, improvement becomes collective.
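Two of these safeguards, layered confidence levels and monitoring of agreement patterns, can be sketched as follows. The tier names, evaluator counts, quorums, and the "pairs that always side together on contested claims" heuristic are all assumptions for illustration; the post does not describe Mira’s actual thresholds or monitoring methods.

```python
from itertools import combinations

# Hypothetical tiers: higher-stakes requests need more evaluators and wider agreement.
TIERS = {
    "low": {"min_evaluators": 3, "quorum": 0.60},
    "standard": {"min_evaluators": 5, "quorum": 0.75},
    "critical": {"min_evaluators": 9, "quorum": 0.90},
}


def passes_tier(votes: list[str], tier: str) -> bool:
    """A claim counts as verified for a tier only if enough evaluators voted
    and a large enough share of them said "true"."""
    rule = TIERS[tier]
    if len(votes) < rule["min_evaluators"]:
        return False
    return votes.count("true") / len(votes) >= rule["quorum"]


def contested(history: dict[str, list[str]]) -> list[int]:
    """Indices of claims on which the evaluators did not all agree.
    Assumes every evaluator judged the same claims in the same order."""
    columns = zip(*history.values())
    return [i for i, column in enumerate(columns) if len(set(column)) > 1]


def similar_pairs(history: dict[str, list[str]], limit: float = 0.95) -> list[tuple[str, str]]:
    """Flag evaluator pairs that side together on nearly every contested claim,
    a possible sign of shared blind spots. Unanimous claims are skipped because
    agreement there says little. A real monitor would also need a minimum sample size."""
    idx = contested(history)
    if not idx:
        return []
    flagged = []
    for a, b in combinations(sorted(history), 2):
        same = sum(history[a][i] == history[b][i] for i in idx)
        if same / len(idx) >= limit:
            flagged.append((a, b))
    return flagged


print(passes_tier(["true"] * 4 + ["false"], "standard"))  # True: 4/5 = 0.8 meets 0.75
print(passes_tier(["true"] * 8 + ["false"], "critical"))  # False: 8/9 falls short of 0.9

history = {
    "eval_a": ["true", "true", "false", "true"],
    "eval_b": ["true", "true", "false", "true"],
    "eval_c": ["true", "false", "true", "true"],
}
print(similar_pairs(history))  # [('eval_a', 'eval_b')]
```

The same vote pattern can be good enough for a routine request and still fail the critical tier. The similarity check looks only at disputed claims, because two honest evaluators will naturally agree on easy ones.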

The future vision feels emotional because it imagines a world where humans are no longer forced to constantly doubt the tools helping them think. They’re imagining assistants that verify medical information before presenting it, research tools that distinguish speculation from evidence, and digital collaborators that show how conclusions were formed instead of simply presenting results. If it succeeds, the change will not feel dramatic. It will feel calm. People will spend less time questioning whether information is fabricated and more time deciding what to do with trustworthy knowledge. Creativity and responsibility may finally move together rather than pulling in opposite directions.

Adoption will likely continue in small, human steps. One team integrates verification to reduce risk. Another discovers it improves user confidence. Over time, verified intelligence becomes expected rather than optional. The ecosystem grows through shared experience rather than marketing noise. We’re watching a shift where reliability becomes a feature people assume should exist, the same way security or usability once evolved from luxuries into necessities.

At its heart, though, Mira Network remains a human project disguised as infrastructure. Every design decision reflects human values: fairness, accountability, openness, and the desire to understand where information comes from. The system asks a deeply human question again and again: how do we know something is true? And instead of answering with authority, it answers with process. That choice matters because processes invite participation, while authority demands belief.

If we step back and look at the journey as a whole, it feels less like building a machine and more like teaching technology humility. I’m seeing a future where intelligence learns to explain itself, where systems earn trust slowly instead of claiming it instantly, and where humans feel supported rather than replaced. We’re not just improving AI responses; we’re reshaping the relationship between knowledge and confidence.

And maybe that is why this story feels hopeful. Two people talking over apples can understand it because the goal is simple even if the technology is complex. We want answers we can rely on without losing our curiosity. We want machines that help us think more carefully, not less. If Mira Network continues growing with that spirit, then the journey ahead isn’t about machines becoming smarter than us. It’s about humans and machines learning how to trust each other, step by step, conversation by conversation, building a future where understanding feels steady again.

#mira @Mira - Trust Layer of AI $MIRA