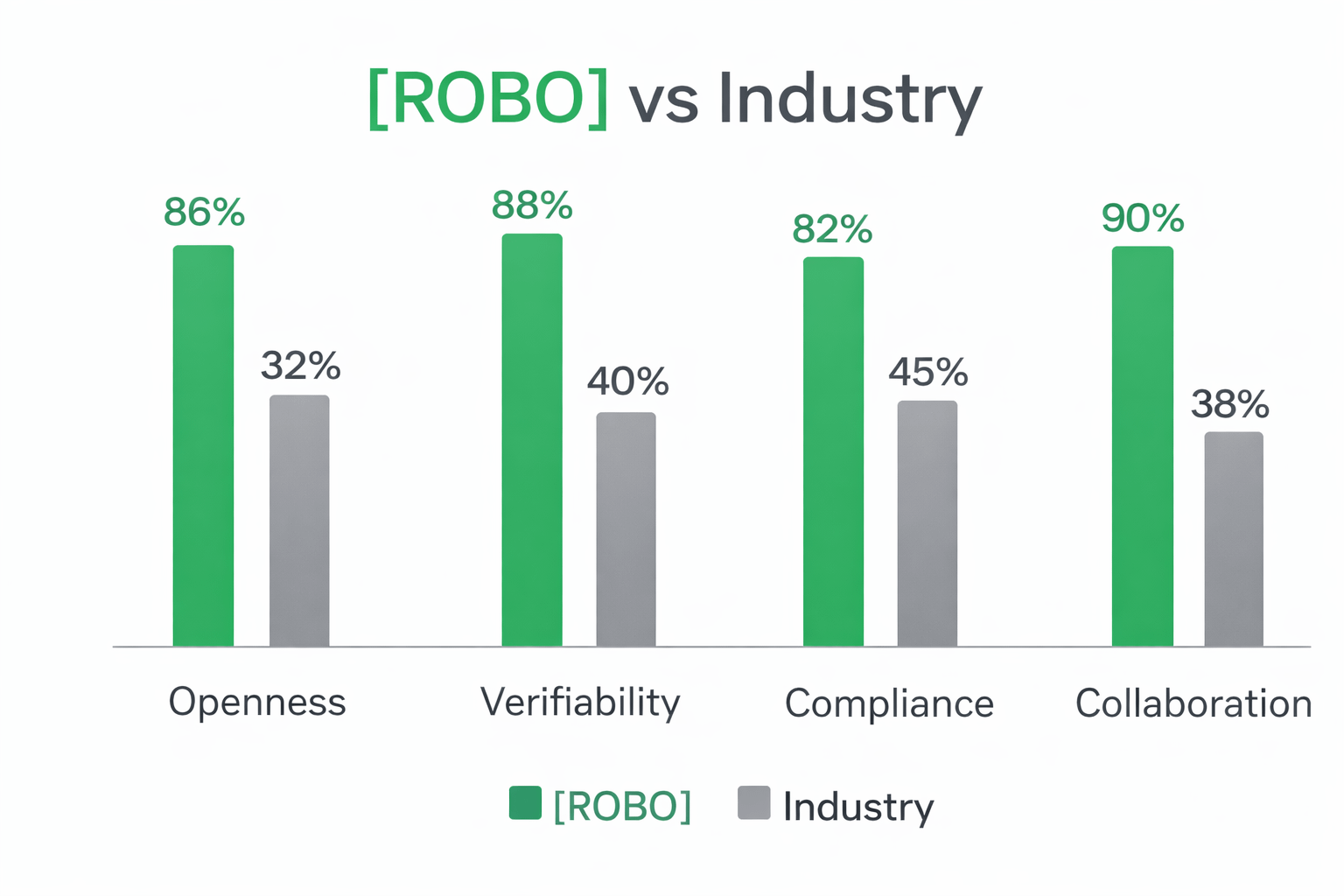

Robotics is hitting a turning point. For years, most advanced robots have been built inside closed ecosystems where hardware, software, and data are locked together in a black box. That model slows innovation, makes collaboration harder, and leaves a dangerous gap in safety and regulation. When a robot makes a decision in the real world, people need more than confidence and hype. They need proof. They need to know what happened, why it happened, and who is accountable if something goes wrong.

This is where Fabric Protocol enters with a different philosophy. Instead of trying to be “another robot company,” Fabric positions itself as the open infrastructure that robots can be built on, upgraded through, and governed through. The idea is bigger than one product. It is about creating a shared foundation where robots can evolve like software does, but with trust and verification built into the system from the start.

At the core, Fabric Protocol is described as a global open network supported by the Fabric Foundation. Think of it like a decentralized nervous system for robotics. Individual robots are treated as agents that can operate autonomously, but remain synchronized to common standards across the network. In a future where robots move through homes, hospitals, warehouses, and public spaces, autonomy is not enough. Autonomy must be accountable. Fabric’s vision is to make that accountability part of the infrastructure, not an afterthought.

One of the most important problems Fabric targets is the black box issue in AI robotics. A robot can act with high confidence and still be wrong. When mistakes happen, the hardest part is often understanding the chain of decisions that led to the outcome. Fabric’s approach is built around verifiable computing, where actions, communications, and execution traces are recorded in a form that can later be checked for tampering. This creates what is often called “proof of execution,” meaning a robot’s behavior can be audited instead of guessed at.
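To make the idea of a verifiable execution trace concrete, here is a minimal sketch of a hash-chained log. It is not Fabric's actual mechanism, which the material above does not specify. It only illustrates one common way to make a record tamper-evident. The names `append_entry`, `verify_log`, and the payload fields are hypothetical.

```python
import hashlib
import json

# Assumption: a fixed placeholder hash stands in front of the first entry.
GENESIS = "0" * 64


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: list, payload: dict) -> None:
    """Append a payload, linking it to the hash of the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append(
        {"payload": payload, "prev": prev_hash, "hash": entry_hash(prev_hash, payload)}
    )


def verify_log(log: list) -> bool:
    """Recompute every hash in order; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical trace of two robot actions.
log = []
append_entry(log, {"step": 1, "action": "detect_obstacle"})
append_entry(log, {"step": 2, "action": "stop"})
```

Because each entry's hash depends on the one before it, changing an earlier action after the fact causes `verify_log` to fail. A real system would also need signatures and a trusted place to anchor the latest hash, which this sketch leaves out.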

That matters for safety audits because investigators need an accurate trail of what the robot did, when it did it, and under which policies or model versions. It matters for regulatory compliance because rules differ by region, and proving that an autonomous system followed required constraints could become essential for legal operation. It also matters for data integrity, because if training data or operational inputs are compromised, the robot’s decisions become unreliable. A verification layer helps detect and discourage manipulation, while making responsibility clearer.
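As a rough illustration of what such an audit trail might contain, the sketch below models a record that captures the action, the time, and the policy and model versions in force, then checks records against a per-region rule. Every name here (`AuditRecord`, `REGION_SPEED_LIMITS`, the regions and limits) is a made-up assumption for illustration, not part of any Fabric specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditRecord:
    timestamp: float      # when the action happened (seconds, hypothetical clock)
    action: str           # what the robot did
    speed_mps: float      # example operational value to check against a rule
    region: str           # where the robot was operating
    policy_version: str   # which safety policy was active
    model_version: str    # which model produced the decision


# Hypothetical per-region speed limits in meters per second.
REGION_SPEED_LIMITS = {"region_a": 1.5, "region_b": 1.0}


def find_violations(records):
    """Return records that exceed their region's limit.

    Records from a region with no known rule are also flagged,
    on the assumption that operating without a rule on file is not compliant.
    """
    violations = []
    for record in records:
        limit = REGION_SPEED_LIMITS.get(record.region)
        if limit is None or record.speed_mps > limit:
            violations.append(record)
    return violations


records = [
    AuditRecord(0.0, "move", 1.2, "region_a", "policy-1", "model-1"),
    AuditRecord(1.0, "move", 1.2, "region_b", "policy-1", "model-1"),
]
violations = find_violations(records)
```

The same speed is acceptable in one region and a violation in another, which is the kind of regional difference an investigator or regulator would need the trail to expose.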

Another powerful idea inside the Fabric narrative is modular evolution. In most closed systems, every team is forced to rebuild similar components repeatedly. Fabric promotes a more modular approach where contributors can develop specialized modules such as computer vision, locomotion, navigation, manipulation, or safety policies. If one module improves, the ecosystem can benefit rather than keeping progress trapped inside a single company. This shifts robotics from isolated innovation into collaborative evolution, where the baseline capabilities of robots can rise faster because improvements can be shared in a structured way.
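One way to picture modular evolution is a shared interface that any contributor's module can implement, plus a registry that lets an improved module replace an older one without touching the rest of the robot. The sketch below assumes a hypothetical `Module` interface and `ModuleRegistry` class. Neither comes from Fabric documentation.

```python
from typing import Dict, Protocol


class Module(Protocol):
    """Hypothetical contract every contributed module is assumed to follow."""

    name: str
    version: str

    def run(self, inputs: dict) -> dict: ...


class ModuleRegistry:
    """Maps a capability (e.g. "safety") to the module currently providing it."""

    def __init__(self) -> None:
        self._modules: Dict[str, Module] = {}

    def register(self, capability: str, module: Module) -> None:
        # Registering again under the same capability swaps in the new module.
        self._modules[capability] = module

    def run(self, capability: str, inputs: dict) -> dict:
        return self._modules[capability].run(inputs)


class BasicObstacleCheck:
    name = "obstacle_check"
    version = "1.0"

    def run(self, inputs: dict) -> dict:
        # Blocks only when an obstacle is closer than 0.5 m.
        return {"blocked": inputs["distance_m"] < 0.5}


class CautiousObstacleCheck:
    name = "obstacle_check"
    version = "1.1"

    def run(self, inputs: dict) -> dict:
        # A stricter contributed version that blocks below 1.0 m.
        return {"blocked": inputs["distance_m"] < 1.0}


registry = ModuleRegistry()
registry.register("safety", BasicObstacleCheck())
before = registry.run("safety", {"distance_m": 0.8})

registry.register("safety", CautiousObstacleCheck())
after = registry.run("safety", {"distance_m": 0.8})
```

The calling code never changes. Only the registered module does, which is the property that would let one contributor's improvement benefit every robot using the same interface.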

The bigger story is about bridging the human–machine trust gap. People do not fear robots only because they are advanced. They fear them because they are unpredictable and hard to explain. Fabric’s vision aims to reduce that fear by making autonomy verifiable. In such a world, robots are no longer machines acting in the dark. They become systems that can be inspected, audited, and trusted because their actions can be proven.

If this vision holds, the long-term value of Fabric Protocol is not just technical. It is cultural. It pushes robotics toward a future where trust is not a promise, but a property of the system. That is why supporters see $ROBO as more than a ticker: it stands for a narrative about building the accountability layer for the next era of general-purpose robotics.